A Novel and Intuitive, Yet Human-Inspired Deep Learning Approach for Mango Disease Classification

Published in Computational Sciences and Agricultural & Food Science

Three years ago, when I came to the university for orientation day, I was amazed by the dense, calm mango forest that lined the parking area, with a jogging path alongside it. I went there often to ease my stress and find some solitude, and in those quiet moments, all I could do was observe the irregular shapes of the mango trees blocking out the sunlight. It was a no-go area for the girls, but you could come across random groups of boys from the Agriculture department roaming there for their labs and experiments, and they made me wonder what kind of work they did with these massive trees, which were many times older than they were.

Fast forward to the summer break of my second year of undergraduate studies: I was browsing different datasets on Kaggle for experimentation when I stumbled upon a mango fruit dataset with four classes, which gave us a positive sign to move forward. I discussed it with my supervisor and with a friend who was supposed to work on it with me, and he later helped me find other datasets that we could merge to improve the generalization of our models. However, after a few days, he had to leave the project due to extenuating circumstances, and I was left to carry it out alone, which was deeply intimidating. After mourning for a while, I found some datasets for mango disease classification from leaves rather than fruits, which had been our original focus. This made it hard to settle on a single modality, as both seemed like good options at the time.

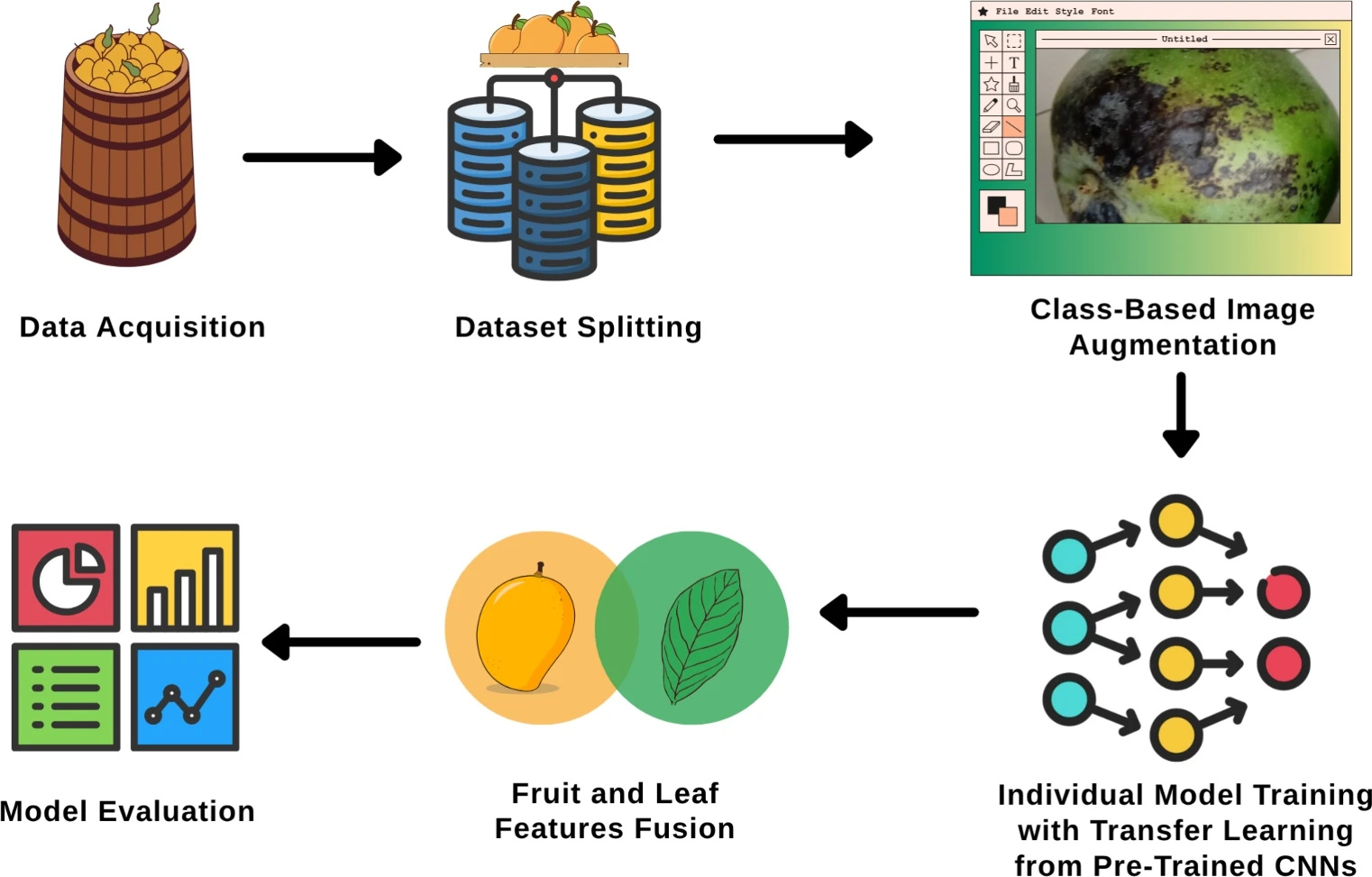

One night, I did some research and discussed the issue with several chatbots, looking for a way to resolve this dilemma, and, fascinatingly, I arrived at a novel approach: fusing both modalities together. It was something I had a general idea of, but I did not have an exact method to implement it. Metaphorically, it is like looking at both images, one of the leaf and one of the fruit, at the same time before reaching a final conclusion. I shared this idea with my supervisor right away, and when I actually started working on it, things became far more interesting.

Fusing two modalities was a great idea in general, but what was the best way to do it? Did I need a static fusion, where the complete feature vectors are simply merged, or something more adaptive and novel? To answer this question, we settled on a dynamic attention-based fusion, which examines both feature sets for each input and shifts the weights accordingly, prioritizing the modality that contributes most at the class level.
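To make the difference between static and dynamic fusion concrete, here is a minimal sketch in plain Python. It assumes each modality has already been reduced to a feature vector of the same length, and it scores each vector against a `query` vector that, in a real network, would be learned during training. The scoring rule (a dot product followed by a softmax), the `query` values, and the function names `static_fusion` and `attention_fusion` are hypothetical illustrations, not the exact architecture from the paper.

```python
import math
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def static_fusion(leaf: Sequence[float], fruit: Sequence[float]) -> List[float]:
    """Static fusion: concatenate both feature vectors, identically for every input."""
    return list(leaf) + list(fruit)


def attention_fusion(leaf: Sequence[float], fruit: Sequence[float],
                     query: Sequence[float]) -> Tuple[List[float], List[float]]:
    """Dynamic fusion (hypothetical sketch): score each modality against a
    query vector, turn the scores into weights with softmax, and return the
    weighted sum of the two feature vectors together with the weights."""
    if not (len(leaf) == len(fruit) == len(query)):
        raise ValueError("leaf, fruit and query must have the same length")
    scores = [sum(q * v for q, v in zip(query, vec)) for vec in (leaf, fruit)]
    weights = softmax(scores)
    fused = [weights[0] * lv + weights[1] * fv for lv, fv in zip(leaf, fruit)]
    return fused, weights


if __name__ == "__main__":
    fused, weights = attention_fusion([1.0, 0.0], [0.0, 1.0], query=[2.0, 0.0])
    print("weights (leaf, fruit):", weights)
    print("fused features:", fused)
    print("static fusion:", static_fusion([1.0, 0.0], [0.0, 1.0]))
```

Here the leaf vector aligns with the query, so it receives a weight of about 0.88 and the fruit vector about 0.12; for a different input the balance would shift, whereas `static_fusion` treats every input the same way. A class-level variant, closer to what the post describes, could keep a separate query per class; that extension is not shown here.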

The major concern, however, was obtaining a reliable dataset that contained images of both modalities, leaf and fruit, with the same classes, and such data was painstakingly difficult to find. Thanks to Kaggle, Mendeley, and some other data sources, I was able to gather the images needed to apply transfer learning to pre-trained models. Transfer learning is also the reason we did not need to train our models from scratch: models such as ResNet, MobileNet, and many others have already been trained on millions of images from the ImageNet dataset. All we need to do is fine-tune the final layers on our own images so that the network's output aligns with our classes. The initial layers have already learned to detect generic low-level patterns such as edges and curves, while the final layers learn to focus on the parts of the image that matter for our task. We do not need to reinvent the wheel when we already have one, do we?
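The idea of reusing a pretrained network and training only its last layer can be sketched without any deep learning framework. In the minimal, standard-library-only example below, a fixed linear projection (`BACKBONE_WEIGHTS`, a hypothetical stand-in for a pretrained ResNet or MobileNet) plays the role of the frozen early layers, and only a logistic-regression head is trained by gradient descent on a tiny, made-up two-class dataset. In practice, a framework such as PyTorch or Keras would load real ImageNet weights and mark the backbone parameters as non-trainable; this sketch only shows which parameters change during training and which do not.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical stand-in for a pretrained backbone. It is a tuple (immutable),
# and training below never modifies it: this is what "frozen" means.
BACKBONE_WEIGHTS: Tuple[Tuple[float, float], ...] = ((1.0, 1.0), (1.0, -1.0))


def backbone_features(x: Sequence[float]) -> List[float]:
    """Frozen feature extractor: a fixed linear projection of the input."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in BACKBONE_WEIGHTS]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_head(data: Sequence[Tuple[Sequence[float], int]],
               lr: float = 0.5, epochs: int = 2000) -> Tuple[List[float], float]:
    """Train only the final (logistic-regression) layer on frozen features."""
    feats = [(backbone_features(x), y) for x, y in data]
    dim, n = len(feats[0][0]), len(feats)
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for f, y in feats:
            err = sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) - y
            grad_w = [g + err * fi for g, fi in zip(grad_w, f)]
            grad_b += err
        # Full-batch gradient step on the head parameters only.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(x: Sequence[float], w: Sequence[float], b: float) -> int:
    """Run the frozen backbone, then the trained head; return class 0 or 1."""
    f = backbone_features(x)
    return int(sigmoid(sum(wi * fi for wi, fi in zip(w, f)) + b) >= 0.5)


if __name__ == "__main__":
    # Tiny made-up dataset: label 1 if the two inputs sum to more than 1.
    toy_data = [((0.1, 0.1), 0), ((0.3, 0.1), 0), ((0.9, 0.9), 1), ((1.0, 0.8), 1)]
    w, b = train_head(toy_data)
    print([predict(x, w, b) for x, _ in toy_data])
```

Because each gradient step only touches `w` and `b`, `BACKBONE_WEIGHTS` is identical before and after training. Unfreezing some of the later backbone layers with a small learning rate is a common next step when more data is available.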

There is also an integral step in deep learning applied during preprocessing, known as data augmentation, where we transform the training images, for example by flipping them left to right or by tweaking brightness, contrast, or other parameters, to increase the number of images in the training set, which helps the model generalize better. For this step, I introduced class-aware augmentation, because each class in our mango dataset has distinct features that call for different adjustments. I will not hide the fact that it did not prove its worth in the ablation study, but in theory it is still a promising approach, and I may try it again in future experiments instead of relying on generic, fixed augmentation parameters.
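Class-aware augmentation can be illustrated as a lookup from class label to augmentation settings, instead of one fixed set of parameters for the whole dataset. The sketch below is hypothetical: images are plain nested lists of grayscale values in [0, 1], and the class names, flip probabilities, brightness ranges, and copy counts in `CLASS_AUG_PARAMS` are invented for illustration, not the settings used in the paper.

```python
import random
from dataclasses import dataclass
from typing import Dict, List

Image = List[List[float]]  # grayscale image, pixel values in [0, 1]


@dataclass(frozen=True)
class AugParams:
    flip_prob: float       # probability of a horizontal (left-right) flip
    max_brightness: float  # brightness shift sampled from [-max, +max]
    copies: int            # augmented copies generated per original image


# Hypothetical per-class settings (illustrative only, not the paper's values).
CLASS_AUG_PARAMS: Dict[str, AugParams] = {
    "healthy": AugParams(flip_prob=0.5, max_brightness=0.10, copies=1),
    "anthracnose": AugParams(flip_prob=0.5, max_brightness=0.05, copies=3),
}


def hflip(img: Image) -> Image:
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in img]


def adjust_brightness(img: Image, delta: float) -> Image:
    """Shift every pixel by delta and clip the result to [0, 1]."""
    return [[min(1.0, max(0.0, p + delta)) for p in row] for row in img]


def augment(img: Image, label: str, rng: random.Random) -> List[Image]:
    """Create augmented copies of img using the settings for its class."""
    params = CLASS_AUG_PARAMS[label]
    copies = []
    for _ in range(params.copies):
        out = hflip(img) if rng.random() < params.flip_prob else img
        delta = rng.uniform(-params.max_brightness, params.max_brightness)
        copies.append(adjust_brightness(out, delta))
    return copies


if __name__ == "__main__":
    rng = random.Random(42)
    sample = [[0.2, 0.4], [0.6, 0.8]]
    for aug in augment(sample, "anthracnose", rng):
        print(aug)
```

A per-class table like this also makes it easy to generate more copies for under-represented classes. Since the idea did not pay off in the ablation study, fixed, dataset-wide parameters remain a reasonable baseline to compare against.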

I faced many other hurdles between the experimentation and the writing, but it was truly rewarding to develop a novel solution to a local problem. Even though the dataset and its classes were limited, the work still provides a solid theoretical foundation for reapplying this methodology once enough data is available across multiple classes of multiple fruits.

You can read the complete article here:

https://www.nature.com/articles/s41598-025-26052-7

~Mohsin


Scientific Reports

An open access journal publishing original research from across all areas of the natural sciences, psychology, medicine and engineering.
