Optoelectronic graded neurons for bioinspired in-sensor motion perception

Published in Electrical & Electronic Engineering

Dynamic motion usually generates abundant vision data and demands substantial computational resources. In contrast, flying insects can agilely perceive motion in an exceedingly complex and dynamic world with a tiny vision system. For example, flying insects move much more slowly (~8 km/hour) than a swatter (~50 km/hour), yet their highly agile vision system still allows them to escape in time. The agility of a visual system can be quantified by its flicker fusion frequency (FFF), the frequency above which a flickering light is perceived as steady. The FFF of the flying insect vision system (70-100 Hz) is higher than that of the human vision system (10-25 Hz).

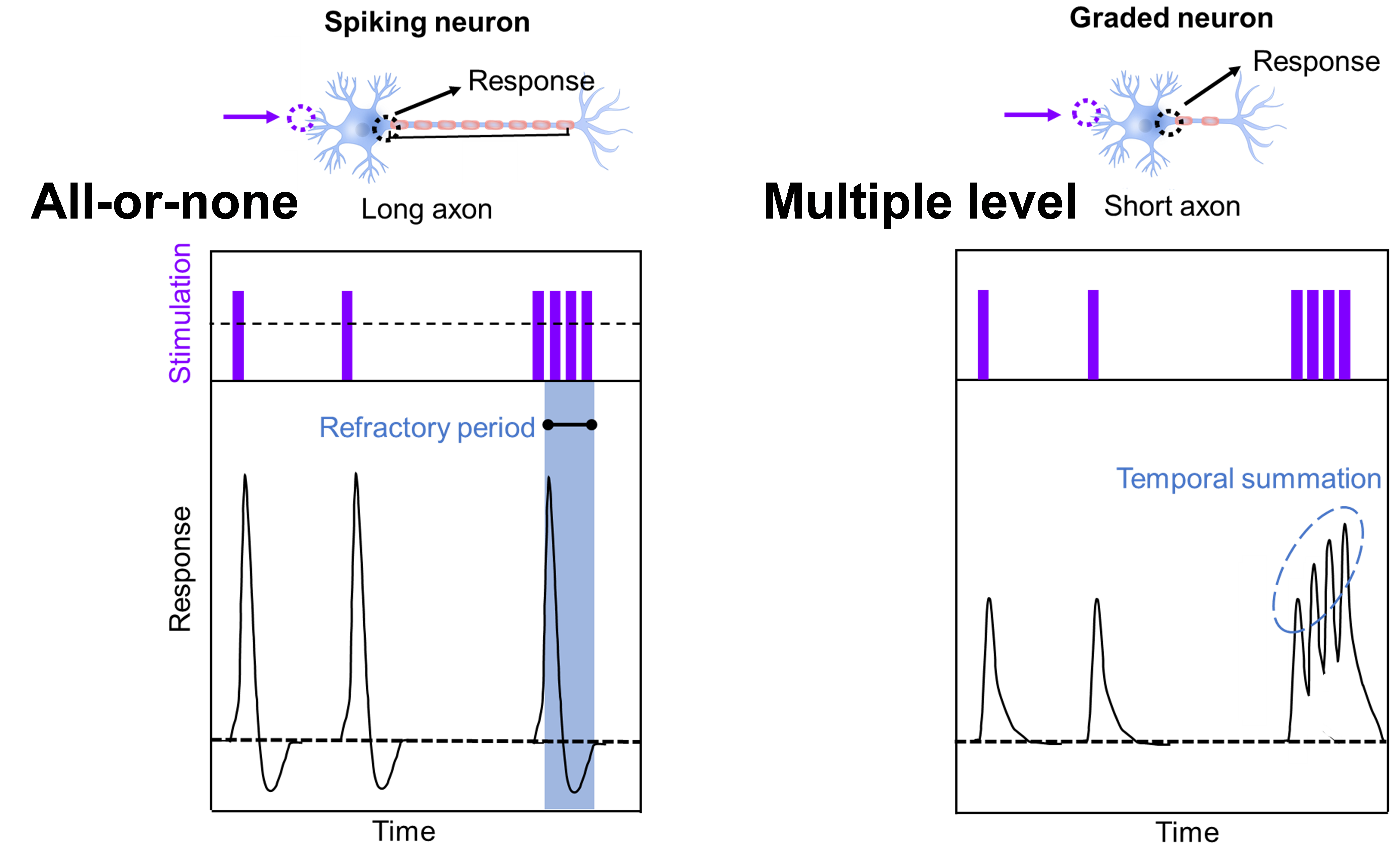

The high agility of the vision system of flying insects results from two major factors. The first is the short distance between the eye and the brain, which greatly shortens the signal transmission path. The second is the unique neural structure of the insect visual system: graded neurons can effectively encode temporal information after receiving histamine from the photoreceptors. The non-spiking graded signal transmission rate can reach up to 1,650 bit s−1, approximately 5 times higher than that of spiking neurons (~300 bit s−1) in the human visual system. Unlike spiking neurons, graded neurons have no refractory period and can encode spatiotemporal information into multilevel states (Figure 1), and thus exhibit a high information transmission rate.

Figure 1. The structure (top) and response characteristics (bottom) of a spiking neuron and a graded neuron. A graded neuron can respond to sequential stimulation with nonlinear temporal summation characteristics.

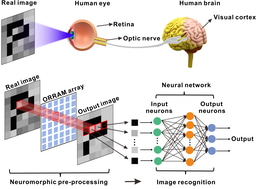
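The nonlinear temporal summation described in Figure 1 can be illustrated with a toy model. The following is a minimal sketch, not the biological or device model from the paper: it assumes a leaky state that rises by a fraction of its remaining headroom on each stimulus and decays exponentially in between. The function name `graded_peaks` and the parameters `tau` and `gain` are hypothetical and chosen only for illustration.

```python
import math

def graded_peaks(n_pulses, interval, tau=0.05, gain=0.4):
    """Peak response of a toy graded neuron after each of n_pulses stimuli.

    interval: time between stimuli in seconds.
    tau, gain: assumed decay time constant and per-stimulus gain (illustrative).
    """
    v = 0.0
    peaks = []
    for _ in range(n_pulses):
        # Saturating increment: each stimulus adds less as v approaches 1.
        v += gain * (1.0 - v)
        peaks.append(v)
        # Exponential relaxation until the next stimulus arrives.
        v *= math.exp(-interval / tau)
    return peaks

if __name__ == "__main__":
    for interval in (0.01, 0.05):
        levels = ", ".join(f"{p:.3f}" for p in graded_peaks(5, interval))
        print(f"interval {interval:.2f} s -> {levels}")
```

In this sketch the response climbs through several distinct levels instead of producing all-or-none events, and stimuli that arrive closer together drive it to a higher level, so the state itself carries information about stimulus timing.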

The shallow trapping centers in MoS2 phototransistors exhibit charge dynamics similar to the characteristics of graded neurons. We adopted these two-dimensional devices to emulate optoelectronic graded neurons, which show an information transmission rate of 1,200 bit s−1 and effectively encode temporal light information. Furthermore, we constructed a 20×20 optoelectronic graded neuron array to perceive dynamic motion in different directions and at different speeds, demonstrating information processing functionalities that are unavailable in conventional image sensors.

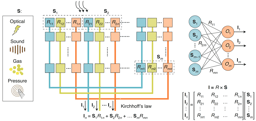
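To make the idea of encoding motion in a single frame more concrete, here is a hedged sketch of a 20×20 array of toy graded pixels. It is an assumption-laden illustration, not the device physics or the readout used in the paper: every pixel decays by a fixed factor per frame, the lit pixel integrates light with a saturating increment, and direction and speed are read from the decaying trail. The names `run_array`, `read_direction`, and `trail_length`, and the parameters `decay`, `gain`, and `threshold`, are all hypothetical.

```python
SIZE = 20  # matches the 20x20 array size mentioned in the text

def run_array(start_col, step, n_moves, dwell=1, row=10, decay=0.8, gain=0.5):
    """Final SIZE x SIZE state after a light spot moves along one row.

    step: +1 (rightward) or -1 (leftward); dwell: frames spent on each pixel,
    so a larger dwell means slower motion. decay and gain are assumed values.
    """
    state = [[0.0] * SIZE for _ in range(SIZE)]
    col = start_col
    for _ in range(n_moves):
        for _ in range(dwell):
            # Every pixel relaxes a little each frame...
            for r in range(SIZE):
                for c in range(SIZE):
                    state[r][c] *= decay
            # ...then the currently lit pixel integrates light.
            state[row][col] += gain * (1.0 - state[row][col])
        col += step
    return state

def read_direction(state, row=10):
    """Infer direction from one frame: the trail brightens toward the newest position."""
    line = state[row]
    centroid = sum(c * v for c, v in enumerate(line)) / sum(line)
    brightest = max(range(SIZE), key=lambda c: line[c])
    return 1 if brightest > centroid else -1

def trail_length(state, row=10, threshold=0.05):
    """Number of pixels still above threshold; faster motion leaves a longer trail."""
    return sum(1 for v in state[row] if v > threshold)

if __name__ == "__main__":
    print("rightward:", read_direction(run_array(2, +1, 10)))
    print("leftward:", read_direction(run_array(15, -1, 10)))
    print("fast trail:", trail_length(run_array(2, +1, 10, dwell=1)))
    print("slow trail:", trail_length(run_array(2, +1, 10, dwell=3)))
```

The design choice worth noting is that no frame history is stored: the final state alone holds a graded trail, which is the sense in which temporal information is encoded at the sensor rather than reconstructed from a sequence of images.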

Our research team has focused on in-sensor computing in recent years, with the aim of processing visual information at sensory terminals (Nature, 2020, 579, 32-33; Nature Electronics, 2020, 3, 664-671; Nature Electronics, 2022, 5, 483-484; Springer Nature, ISBN: 978-3-031-11505-9). We previously demonstrated contrast enhancement of static images (Nature Nanotechnology, 2019, 14, 776-782) and visual adaptation to different light intensities (Nature Electronics, 2022, 5, 84-91; Nature, 2022, 602, 364). In this work, we extended in-sensor computing to perceive dynamic motion in different directions and at different speeds (Nature Nanotechnology, 2023, 18, DOI: https://doi.org/10.1038/s41565-023-01379-2).

Figure 2. The book “Near-sensor and in-sensor computing”, published by Springer Nature.

References:

[1] Nature Electronics, 2022, 5, 84-91

[2] Nature, 2022, 602, 364

[3] Nature Electronics, 2020, 3, 664-671

[4] Nature, 2020, 579, 32-33

[5] Nature Nanotechnology, 2019, 14, 776-782

[6] Nature Electronics, 2022, 5, 483-484

[7] Nature Nanotechnology, 2023, 18, DOI: https://doi.org/10.1038/s41565-023-01379-2
