Seeing through forest with drone swarms

Published in Computational Sciences

Given how much attention drones currently receive for their military uses, it is easy to overlook their enormous potential in civilian applications. Drone task forces are establishing themselves worldwide in emergency services ("blue light organizations") such as the police, fire brigades, and mountain rescue as a key technology for saving human lives. Search and rescue operations, for instance, benefit from the flexible, fast and, compared to helicopters, inexpensive and safe operation of drones. Today, drones are also used for the inspection of disaster areas, early detection of forest fires, border security, and wildlife observation, to name only a few.

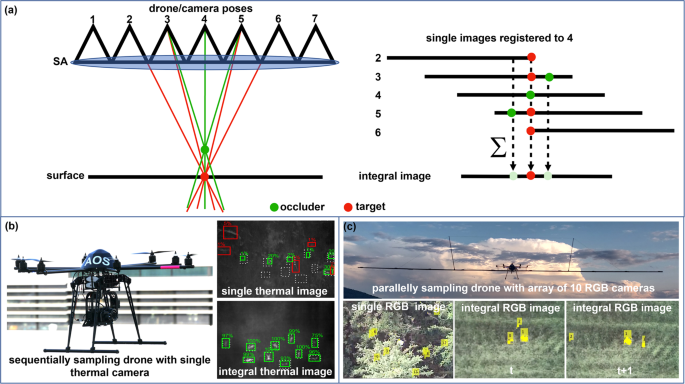

A common problem in all of these applications is occlusion caused by vegetation, such as forest, which usually makes it impossible to find, detect, and track people, animals, or vehicles in single aerial photographs. The "Airborne Optical Sectioning" (AOS) imaging method developed at the Johannes Kepler University in Linz, Austria, solves this problem with a special scanning principle known as Synthetic Aperture Sensing (see Video 1 for an example). Similar to the networking of radio telescopes distributed around the world to improve measurement signals, AOS combines several optical images recorded over a large area to computationally remove occlusion in real time. This creates an extremely shallow depth of field with a largely unobstructed view of the forest floor. But because AOS combines frames that are captured blindly (i.e., without knowledge of local viewing conditions) by a single drone during flight, it has so far been difficult to detect fast movements, such as walking people or animals, under dense foliage.

Video 1: Airborne Optical Sectioning principle - Real-time and wavelength-independent occlusion removal from aerial images.
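
The integration principle described above can be illustrated with a toy one-dimensional model. The sketch below is not the AOS implementation. It assumes that all views are already registered to the ground plane, that canopy parallax reduces to an integer pixel shift per view, and that the target is warm (1.0) while canopy and bare ground are cold (0.0). The names `render_view` and `integrate` and all parameter values are hypothetical.

```python
import numpy as np

def render_view(ground, canopy, shift):
    """Return one view that is already registered to the ground plane.

    Assumption: the canopy appears displaced by `shift` pixels (its
    parallax relative to the ground), and occluded pixels read 0.0.
    """
    occluded = np.roll(canopy, shift)
    return np.where(occluded, 0.0, ground)

def integrate(ground, canopy, shifts):
    """Average all registered views (the synthetic aperture integral)."""
    views = [render_view(ground, canopy, s) for s in shifts]
    return np.mean(views, axis=0)

rng = np.random.default_rng(0)
width = 200

# Ground signal: a warm target on an otherwise cold forest floor.
ground = np.zeros(width)
ground[95:105] = 1.0

# Random canopy that blocks roughly 70% of the pixels (toy value).
canopy = rng.random(width) < 0.7

# A single photograph versus an integral over 31 viewpoints.
single = render_view(ground, canopy, 0)
integral = integrate(ground, canopy, range(-30, 31, 2))

print("target pixels visible in single view:",
      np.count_nonzero(single[95:105]))
print("target pixels visible in integral:   ",
      np.count_nonzero(integral[95:105]))
```

Because the target stays aligned across views while the canopy shifts, every target pixel that is seen in at least one view contributes to the integral, whereas a single view shows only the pixels that happen to be unoccluded from that position.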

This problem will now be examined in more detail within a new basic research project jointly financed by the German Research Foundation (DFG) and the Austrian Science Fund (FWF). In addition to Oliver Bimber of the Johannes Kepler University in Linz, Dmitriy Shutin of the German Aerospace Center (DLR) in Oberpfaffenhofen and Sanaz Mostaghim of the Otto von Guericke University Magdeburg are the principal investigators. The focus of this project is the use of autonomous drone swarms that collectively contribute to solving this problem. Here, drones can, for example, imitate the swarming behavior of birds in order to always have an optimal, less obstructed view of the object to be detected and tracked (e.g., a walking person). Multiple drones can cooperate as a single entity to generate the optical signal of a very large, adaptable lens, many meters in diameter. The shallow depth of field of this optical signal will effectively make the forest disappear. The dynamic behavior of the drone swarm will also allow it to react to the movements of the object. So, if a target, such as a person, is moving in dense forest, the swarm can find it, make it visible, and follow it despite heavy concealment by the tree foliage.

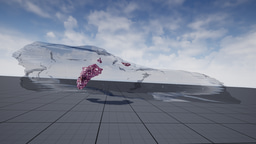

In our initial work, published in the paper “Drone Swarm Strategy for the Detection and Tracking of Occluded Targets in Complex Environments” (see paper), we present first simulation results showing how efficiently autonomous drone swarms can detect and track occluded targets in densely forested areas, specifically lost persons during search and rescue missions. Exploration and optimization of local viewing conditions, such as occlusion density and target view obliqueness, provide much faster and much more reliable results than previous blind sampling strategies that rely on pre-defined waypoints. We present an adapted real-time particle swarm optimization and a new objective function that are able to deal with dynamic and highly random through-foliage conditions. Synthetic aperture sensing is our fundamental sampling principle, and drone swarms are employed to approximate the optical signals of extremely wide and adaptable airborne lenses. Swarms significantly outperform the blind sampling strategies of single drones in terms of efficiency and detection rate because sampling can be adapted autonomously to locally sparser forest regions and to larger target view obliqueness (see Video 2).

Video 2: Airborne Optical Sectioning with drone swarms - A simulation that indicates how much better adaptive sampling with drone swarms is when compared to blind sampling with single drones (see paper).
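
For readers unfamiliar with particle swarm optimization, the following is a minimal sketch of the textbook algorithm, not the adapted real-time variant or the objective function from the paper. The function `visibility` is a hypothetical, static stand-in for a viewing-condition score (higher is better), and the parameters `w`, `c1`, and `c2` are common textbook defaults chosen here for illustration. The real through-foliage conditions are dynamic and highly random, which this toy landscape does not model.

```python
import numpy as np

def visibility(p):
    """Hypothetical objective: best viewing conditions at (3, -2)."""
    return -np.sum((p - np.array([3.0, -2.0])) ** 2, axis=-1)

def pso(objective, n_particles=10, n_steps=50,
        w=0.7, c1=1.5, c2=1.5, seed=0):
    """Textbook particle swarm optimization that maximizes `objective`."""
    rng = np.random.default_rng(seed)
    pos = rng.uniform(-10, 10, size=(n_particles, 2))  # drone positions
    vel = np.zeros_like(pos)

    # Best position each particle has seen, and the best seen by the swarm.
    personal_pos = pos.copy()
    personal_val = objective(pos)
    g = np.argmax(personal_val)
    swarm_pos, swarm_val = personal_pos[g].copy(), personal_val[g]
    history = [swarm_val]

    for _ in range(n_steps):
        r1 = rng.random(pos.shape)
        r2 = rng.random(pos.shape)
        # Inertia + pull toward own best + pull toward swarm best.
        vel = (w * vel
               + c1 * r1 * (personal_pos - pos)
               + c2 * r2 * (swarm_pos - pos))
        pos = pos + vel

        val = objective(pos)
        improved = val > personal_val
        personal_pos[improved] = pos[improved]
        personal_val[improved] = val[improved]

        g = np.argmax(personal_val)
        if personal_val[g] > swarm_val:
            swarm_pos, swarm_val = personal_pos[g].copy(), personal_val[g]
        history.append(swarm_val)

    return swarm_pos, swarm_val, history

best_pos, best_val, history = pso(visibility)
print("best position found:", best_pos)
print("best score found:   ", best_val)
```

Each particle plays the role of one drone: it is drawn toward the best viewing position it has found itself and toward the best one found by the whole swarm, which is what lets sampling concentrate where conditions are favorable instead of following fixed waypoints.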

As part of the ongoing basic research project, this and other swarm approaches will now be implemented with real swarms of drones, tested in field studies, and developed further. We believe that ongoing and rapid technological development will make large drone swarms feasible, affordable, and effective in the near future, not only for military but also for numerous civil applications. Collectively, a swarm can act much faster and cover a larger area than a single drone. For other synthetic aperture imaging applications that go beyond occlusion removal, drone swarms have the potential to become an ideal tool for realizing dynamic sampling of adaptive wide-aperture lens optics in remote sensing scenarios.

The unique advantages of AOS, such as its real-time processing capability and wavelength independence, open many new application possibilities in contexts where occlusion is problematic. These include, for instance, search and rescue (see Video 3), wildlife observation (see Video 4), archaeology (see Video 5), wildfire detection, and surveillance.

Video 3: Airborne Optical Sectioning for search and rescue - automatic classification of strongly occluded people (see paper 1, paper 2).

Video 4: Airborne Optical Sectioning for nesting observation - counting the population of breeding herons (see paper).

Video 5: Airborne Optical Sectioning for archaeology - recovering the ruins of an early 19th-century fortification tower (see paper).

Contact: oliver.bimber@jku.at

Code, data, papers: https://github.com/JKU-ICG/AOS/

This research was funded by the Austrian Science Fund (FWF) and the German Research Foundation (DFG) under grant numbers P 32185-NBL and I 6046-N, and by the State of Upper Austria and the Austrian Federal Ministry of Education, Science and Research via the LIT–Linz Institute of Technology under grant number LIT-2019-8-SEE-114.
