TENG-Based Self-Powered Silent Speech Recognition Interface: from Assistive Communication to Immersive AR/VR Interaction

Published in Materials and Computational Sciences

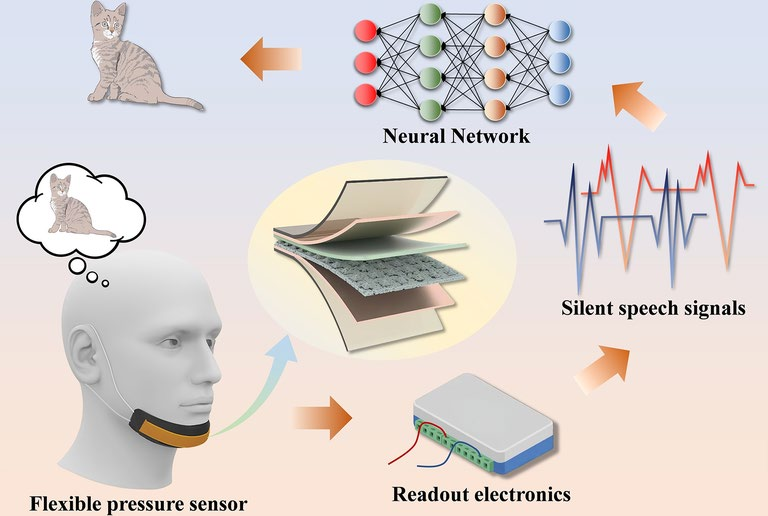

Lip language provides a silent, intuitive, and efficient mode of communication, offering a promising solution for individuals with speech impairments. Its articulation relies on complex movements of the jaw and the muscles surrounding it. However, acquiring these movements accurately in real time and decoding them into reliable silent speech signals remains a significant challenge. In this work, we propose a real-time silent speech recognition system that integrates a triboelectric nanogenerator (TENG)-based flexible pressure sensor (FPS) with a deep learning framework. The FPS employs a porous pyramid-structured silicone film as the negative triboelectric layer, enabling highly sensitive pressure detection in the low-force regime (1 V N⁻¹ for 0–10 N and 4.6 V N⁻¹ for 10–24 N). This allows it to precisely capture jaw movements during speech and convert them into electrical signals. To decode the signals, we propose a convolutional neural network–long short-term memory (CNN–LSTM) hybrid network, which combines CNN and LSTM models to extract both local spatial features and temporal dynamics. The model achieved 95.83% classification accuracy across 30 categories of daily words. Furthermore, the decoded silent speech signals can be directly translated into executable commands for contactless and precise control of a smartphone. The system can also be connected to AR glasses, offering a novel human–machine interaction approach with promising potential in AR/VR applications.
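To make the two reported sensitivity figures concrete, the sketch below turns them into a piecewise-linear force-to-voltage estimate. It is an illustration only, not the authors' calibration: the function names are hypothetical, and it assumes zero output at zero force and a response that is continuous at the 10 N breakpoint, neither of which is stated in the abstract.

```python
# Illustrative piecewise-linear response model built from the two
# sensitivities quoted in the abstract.
# Assumptions (not from the source): zero output at zero force, and a
# response that is continuous at the 10 N breakpoint.

# (start force in N, end force in N, sensitivity in V/N)
SEGMENTS = [
    (0.0, 10.0, 1.0),
    (10.0, 24.0, 4.6),
]


def sensitivity_at(force_n: float) -> float:
    """Return the quoted sensitivity (V/N) for a force in the 0-24 N range."""
    for start, end, sensitivity in SEGMENTS:
        if start <= force_n <= end:
            return sensitivity
    raise ValueError("force outside the characterized 0-24 N range")


def estimated_voltage(force_n: float) -> float:
    """Accumulate sensitivity over force to estimate the output voltage (V)."""
    if not 0.0 <= force_n <= SEGMENTS[-1][1]:
        raise ValueError("force outside the characterized 0-24 N range")
    voltage = 0.0
    for start, end, sensitivity in SEGMENTS:
        if force_n <= start:
            break
        # Add only the part of this segment that lies below the applied force.
        voltage += sensitivity * (min(force_n, end) - start)
    return voltage


if __name__ == "__main__":
    for force in (2.0, 10.0, 15.0, 24.0):
        print(f"{force:5.1f} N -> {estimated_voltage(force):6.2f} V (estimate)")
```

Under these assumptions the slope of the estimate rises more than fourfold above 10 N, which is the behavior the two sensitivity values describe; the absolute voltages depend entirely on the assumed zero offset and should not be read as measured values.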

Nano-Micro Letters

Nano-Micro Letters is a peer-reviewed, international, interdisciplinary, open-access journal that focuses on the science, experiments, engineering, technologies, and applications of nano- or microscale structures and systems in physics, chemistry, biology, materials science, and pharmacy.
