The myth of the ‘unreadable’ humanities and social sciences

Published in Computational Sciences and Arts & Humanities

It is a familiar trope: of all the complex, hard to understand academic writing, the woolly and impenetrable humanities and social sciences research is the worst, contributing more than its fair share to the widening gulf between academics and the public. The narrative is familiar, but is it true? To answer that, we must first look at the evidence supporting this narrative, as well as the counter-evidence.

How difficult is academic writing to understand and is the difficulty increasing?

Academic writing is a specialised genre that budding academics take years to learn and perfect. It is specialised because research papers are primarily written for an audience of academic specialists. For this reason, academic writing has features that we normally associate with complex, or harder to read, texts, such as specialised vocabulary and long words and sentences.

Consequently, it is hardly controversial to say that academic papers can be harder to read, especially for non-specialists, than many other types of text. However, several studies in the last six years have claimed that academic writing is getting even harder to read, and that humanities and social sciences (HSS) prose is particularly at fault.

This might seem like an obscure problem, but the ramifications are real. Although research is primarily written for other researchers, they are not the sole audience. Research is also relevant to practitioners, policymakers, innovators, and others beyond academic circles with stakes and interest in the research being published. If research is becoming more difficult to read, that could have negative consequences for application and dissemination of that research.

What evidence is there to support the claim that research, particularly in HSS, is becoming harder to read? A paper from 2020 argues, based on over 70,000 article abstracts, that linguistics papers became harder to read between 1991 and 2020. More recently, a paper from 2026 looked at a sample of papers from HSS and STEM journals between 1991 and 2023, and argued that overall, the HSS papers had more difficult abstracts. The argument isn’t only academic. In 2024, The Economist, a newspaper, reported the results of its own investigation into the matter (covering over 200 years of data, from 1812 to 2023) and concluded that academic research, especially the humanities, is becoming less readable.

But is it really that simple? Spoiler alert: no. There are two problems with the narrative so far: data and measurements.

How data selection shapes the conclusion

The studies mentioned above are perfectly respectable, but they have some blind spots in their data selection. Firstly, the two academic papers mentioned above only consider the period from 1991 to the early 2020s. That is important because, as we will see later, their findings need to be re-interpreted when we look at longer timespans. The study conducted by The Economist does cover a much larger time span, over 200 years from 1812 to 2023. However, the data used consisted of about 350,000 abstracts from PhD dissertations at British universities. Two things are important here. First, PhD dissertations, while important contributions to scholarly output, should not be presumed to be representative of all scientific and scholarly writing. PhD students write for a particular audience in a particular context, and thesis abstracts differ from article abstracts, so it is problematic to generalise from such a narrow sample to all types of scholarly writing. Second, although the amount of data is far from trivial, it pales in comparison to the roughly 88 million abstracts that we find in Dimensions, a large publication database, for the same period.

The complexity of measuring linguistic complexity

Linguistic complexity is one of those intangible concepts that are often easy to recognise in practice but much harder to define precisely. The studies mentioned above measure reading complexity predominantly in terms of syntax: word complexity and sentence complexity. Different measures are used, including counting words, verbs, and subordinate clauses per sentence, as well as readability indexes. Readability indexes tend to count things like word and sentence length and combine them in a formula that has been benchmarked against standard reading materials at different difficulty levels. Several such formulas exist, and they tend to be heavily correlated with each other.

But reading complexity is about more than just syntax. The vocabulary (general vs specialised) matters, as well as how tightly the information content is packed together. There are two simple measures we can use to capture those things, the type-token ratio (TTR) and the lexical density (LD). The former estimates the size of the vocabulary by dividing the unique words in a text by the total number of words, while the latter estimates the density of lexical words (nouns, verbs, adjectives and adverbs) as a percentage of the total number of words.

Let’s take an example: “The cat sat on the mat”. This sentence has six words, and five unique words (“the” is used twice). Furthermore, three words are lexical words (cat, sat, and mat) with the other three being function words. This gives us a type-token ratio of 5/6 × 100 ≈ 83, and a lexical density of 3/6 × 100 = 50. For TTR, a higher value means a larger vocabulary relative to the text, while for LD a higher value means a greater concentration of lexical words and hence a heavier concentration of words that provide most of the content, leading to a denser reading experience.
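
To make the arithmetic concrete, here is a minimal Python sketch of both measures applied to the example sentence. The tiny `FUNCTION_WORDS` list and the helper names are assumptions made for illustration; they are not the tooling behind the figures in this post, and a real analysis would more likely identify lexical words with a part-of-speech tagger.

```python
import re

# Assumption: a small hand-picked set of English function words, just large
# enough for this example. It is not a complete or authoritative list.
FUNCTION_WORDS = {"the", "a", "an", "on", "in", "of", "and", "to", "is"}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def type_token_ratio(tokens):
    """Unique words as a percentage of all words."""
    return 100 * len(set(tokens)) / len(tokens)


def lexical_density(tokens):
    """Words not in the function-word list, as a percentage of all words."""
    lexical = [t for t in tokens if t not in FUNCTION_WORDS]
    return 100 * len(lexical) / len(tokens)


tokens = tokenize("The cat sat on the mat")
print(round(type_token_ratio(tokens)))  # 83
print(round(lexical_density(tokens)))   # 50
```

One caveat with this kind of calculation: TTR depends on text length, since longer texts repeat more words, so values are best compared across texts of similar size.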

Both metrics, TTR and LD, are relevant to readability, but in a different way from the structural complexity metrics. Structural complexity, lexical density, and vocabulary size are all related to how easy or difficult it is to read a text, but in slightly different, complementary ways: a text can be syntactically complex while still using a small, general vocabulary. Likewise, a text can use a simple syntax but with many specialised words packed closely together.

Complexity in academic abstracts: a view from Dimensions

So far, we’ve seen that linguistic complexity isn’t one-dimensional, and that narrow (in one sense or another) data selection can be problematic. What do we find when we calculate LD, TTR, and structural complexity, specifically the Automated Readability Index (ARI), a weighted index over mean sentence length and mean word length, for abstracts in Dimensions?
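
For readers who want to see what such an index looks like in practice, the following is a rough Python sketch of ARI using its standard published coefficients: 4.71 times the mean number of characters per word, plus 0.5 times the mean number of words per sentence, minus 21.43. The regex-based sentence and word splitting is a simplifying assumption; the preprocessing behind the figures in this post may well differ.

```python
import re


def automated_readability_index(text):
    """Approximate ARI for a text, using naive regex tokenisation."""
    # Assumption: sentences end at '.', '!' or '?'. Abbreviations and
    # decimal numbers will be split incorrectly by this simple rule.
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    # Assumption: a word is a run of letters, digits, or apostrophes.
    words = re.findall(r"[A-Za-z0-9']+", text)
    characters = sum(len(word) for word in words)
    chars_per_word = characters / len(words)
    words_per_sentence = len(words) / len(sentences)
    return 4.71 * chars_per_word + 0.5 * words_per_sentence - 21.43


simple = "The cat sat on the mat."
dense = "Readability indexes combine sentence length and word length in a benchmarked formula."
print(automated_readability_index(simple) < automated_readability_index(dense))  # True
```

Higher scores indicate longer words and longer sentences. Very short, simple texts can score below zero, which is why the index is more informative on full abstracts than on single sentences.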

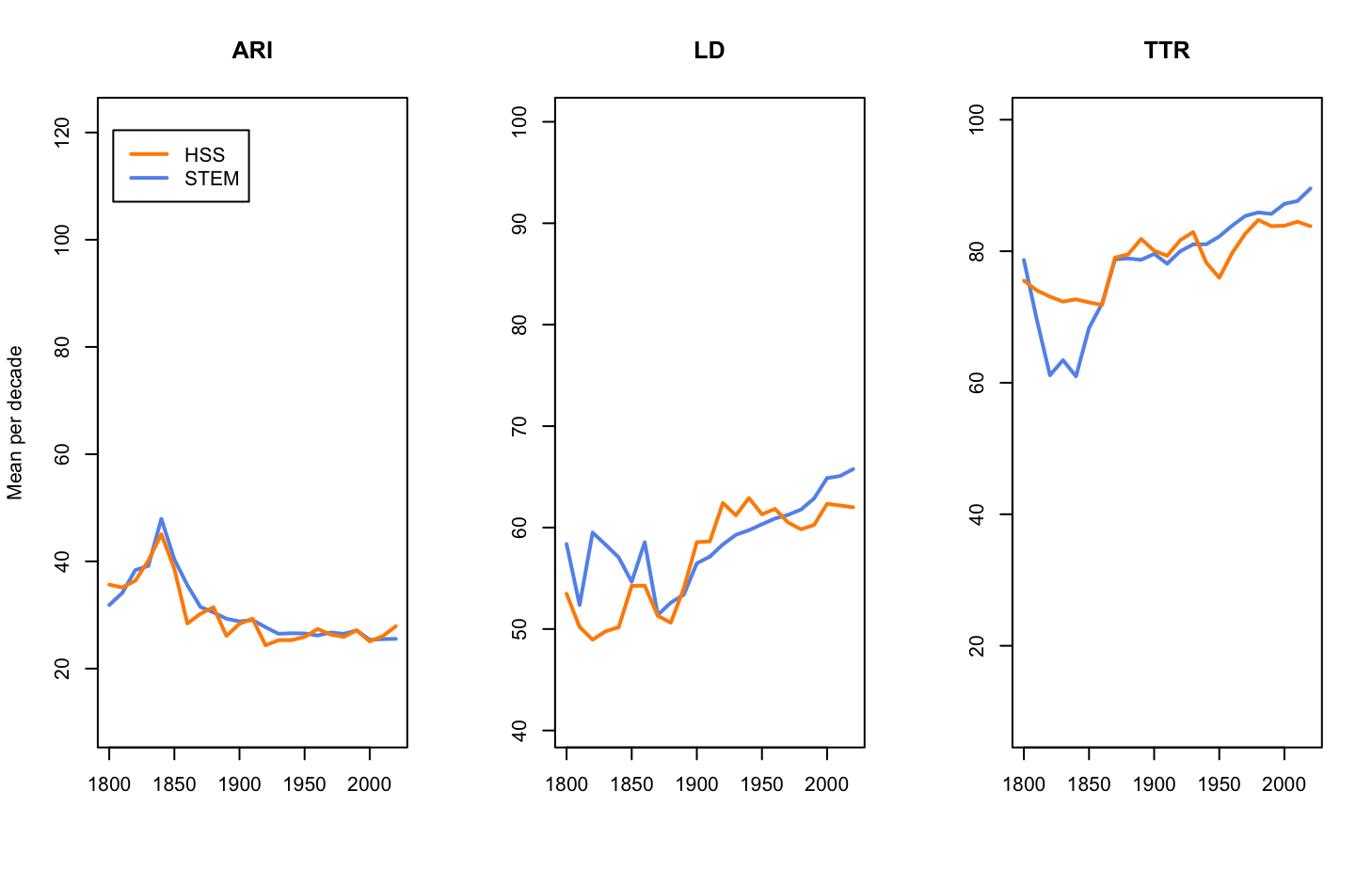

The figure above shows the mean values, per decade, of ARI, LD, and TTR for HSS (orange line) and STEM (blue line) abstracts in Dimensions between 1800 and 2024. The abstracts were categorised into STEM or HSS disciplines based on high-level fields of research.
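
The per-decade averaging can be sketched in a few lines of Python. The `records` list below is entirely made up for illustration and does not come from Dimensions; the function name and the (year, score) layout are likewise assumptions.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical (publication year, metric score) pairs, invented for this sketch.
records = [(1953, 14.0), (1957, 16.0), (1961, 15.0), (1968, 13.0), (1969, 14.0)]


def mean_by_decade(records):
    """Group scores by the decade of their year and average each group."""
    buckets = defaultdict(list)
    for year, score in records:
        decade = year // 10 * 10  # e.g. 1957 -> 1950
        buckets[decade].append(score)
    return {decade: mean(scores) for decade, scores in sorted(buckets.items())}


print(mean_by_decade(records))  # {1950: 15.0, 1960: 14.0}
```

In a comparison like the one plotted here, the same grouping would be run separately for the HSS and STEM abstracts and for each of the three metrics.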

A few things immediately stand out. First, across all three metrics, HSS and STEM tend to follow each other. Second, despite the correlation we see a fair amount of variation over time, which is hardly surprising across two centuries. And if we look closely, we can see a small uptick in the structural complexity of HSS abstracts since 2000, but considered against the past time periods, this is a small increase. Interestingly, if we look at the word-level measures, TTR and LD, these show that STEM abstracts, not HSS, are growing more complex lexically. The higher lexical density in STEM abstracts is also attested in previous studies.

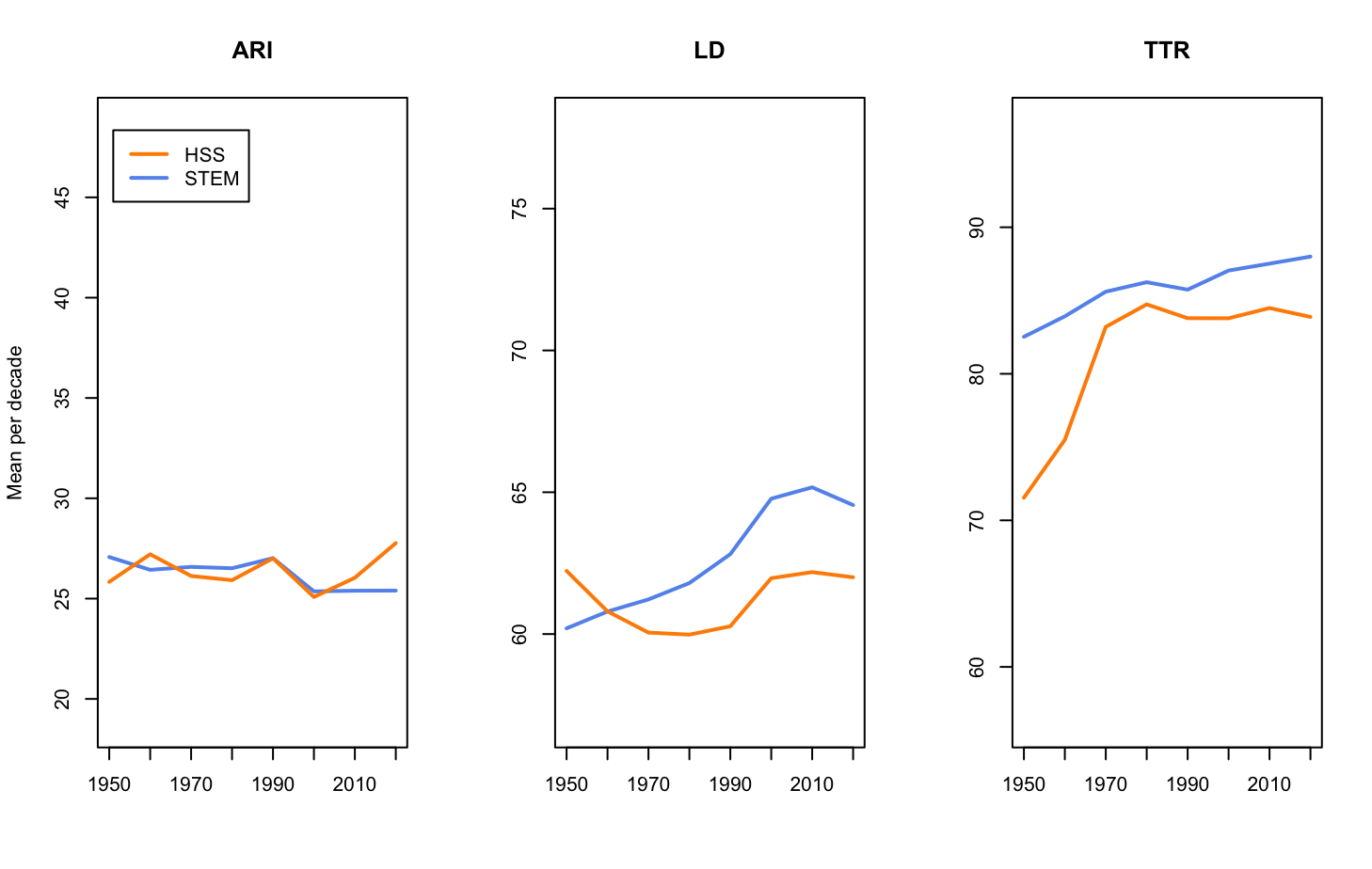

Zooming in on the same data, but limited to the period since 1950, we can see those trends more clearly.

In short, the long-term trend is that the syntax is becoming simpler, while the vocabulary is becoming more complex: larger, denser, and more specialised, packing in more information. This shift can make texts feel harder to read even if their syntax is simpler. In this context, the difference between HSS and STEM looks less like a question of which one is more complex, and more like STEM writing concentrating complexity in terminology and vocabulary, while HSS writing favours longer and potentially more complex discursive structures. Both can be complex, but in different ways, an interpretation that is supported by research suggesting that there is a trade-off between different types of linguistic complexity.

Diagnosing the right problem

This case study illustrates the importance of both using multiple metrics and carefully considering the time window that the data is taken from when studying trends in linguistic complexity. Recall that some papers had identified an increase in HSS syntactic complexity from 1991 to the 2020s. That increase is visible in the figures above as well. It is a trend that may or may not grow much more substantial in the future, but in the context of a longer time horizon, it looks much less dramatic. Similarly, focussing too much on syntactic complexity might lead us to diagnose the wrong problem for communicating and disseminating research findings. Word-level features, including information density and specialised vocabulary, also matter to the overall complexity of a text.

As we have seen, the debate about academic readability often suggests that HSS abstracts have simply become harder to understand than others. But large-scale linguistic evidence from Dimensions suggests a different picture. Over the past two centuries, academic writing has become structurally simpler while simultaneously becoming lexically denser and more specialised. Rather than a steady decline into unreadable prose, what we see is a shift in how complexity is expressed. And once we take that broader perspective, the idea that the humanities are uniquely to blame for unreadable scholarship becomes much harder to defend.

Springer Nature is committed to open science, and in line with that commitment the data behind this blogpost is available from Figshare.
