Haptics based multi-level collaborative steering control for automated driving

Published in Electrical & Electronic Engineering

As a consequence of the fast adoption of driving automation systems, most vehicles available on the market are the result of a robot-centered development approach. A few decades ago, the major challenge faced by engineers was to implement the sensors and control capabilities that enable a vehicle to follow and remain within a lane. For safety, and to ensure compliance with evolving regulations, driver monitoring systems (hands-on detection, head and gaze cameras) and override or takeover strategies completed the necessary equipment. The human driver was considered only after the robotized vehicle had been developed.

While increasing the level of automation is regarded as a way to meet environmental, productivity, and traffic safety requirements, it shifts the role of the driver to a monitoring task, increasing the risk that the human lacks operational understanding. The paradox of automation (not only in automotive) is that the more proficient and reliable the system becomes, the less incentive the human has to maintain attention. Over-reliance, or complacency, is induced when unjustified trust in the system's ability builds up over time. The resulting loss of situation awareness leads to an out-of-the-loop (OOL) phenomenon, or disengagement. Although monitoring systems and attention reminders increase engagement, they only react to driver behavior and do not guarantee continuous engagement.

While level-2 ADAS aim to reduce driver workload and ensure engagement, the override strategy has distorted the concept of “driver assistance” as working together: it gives the impression that the driver can be replaced by the automation. The discontinuous operation of ADAS with an override strategy is assumed to be one of the causes of driver misuse and disengagement.

To improve the user experience, the concept of “haptic shared control” has received significant attention because of its anticipated benefits for safety and intuitive operation at partial and conditional automation levels. This concept is often compared to horseback riding. The rider conveys an intention (“where do I want to go?”) to the horse through the reins, while the horse understands the rider's order but can still assess the situation by itself and independently secure the ride in case of danger. The steering system plays the role of the reins, and the automated driving system must be open to negotiation with the driver. It is essential that the driver monitor the automation, and vice versa. Ideally, the driver and the automation would engage as close, trustworthy partners who watch out for each other.

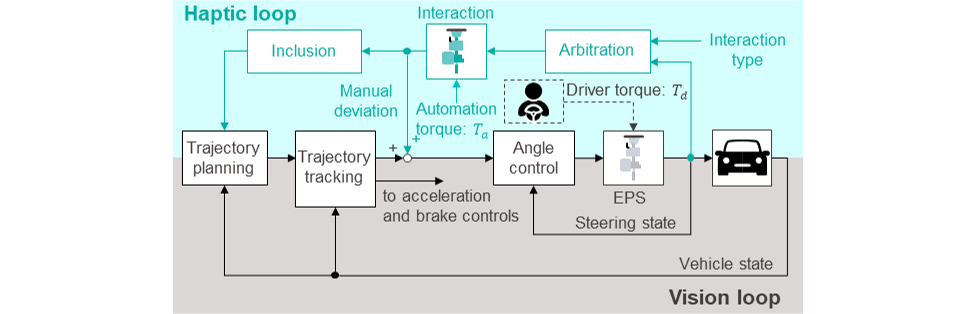

In this research, we aimed at “unifying driver and automation” by applying prior knowledge of physical human-robot interaction (pHRI) to the shared control of the steering system in automated driving, while trying to reproduce “the rider and the horse” metaphor. To go beyond the classical form of driver-automation interaction, this paper proposes a multi-level haptic collaborative steering control framework (Figure 1) within the limitations of mass-produced steering hardware.

This concept is inspired by human-human collaboration. Specifically, when two human agents work together to carry a desk, they first communicate their intentions via interactive force to allocate roles and to negotiate the direction to go. When a robot interacts with a human, role allocation is achieved by changing the type of interaction, such as collaboration or competition (see the paper for details). Arbitration (robot control) of the selected type of interaction is performed by assimilating the human sensorimotor control (goal and impedance) and adapting the robot response accordingly. Once the haptic communication is settled, each agent adjusts its motion. For the automated driving application, the type of interaction must be decided based on the traffic conditions and the driver status. In this research, we are interested in how to enable joint collaborative steering, assuming that the decision on the type of interaction is available. Three functions have been developed: “interaction”, which provides a control environment in the steering system where driver and automation can coexist; “arbitration”, which sets the automation dynamics based on the preselected type of interaction; and “inclusion”, which assimilates the driver intention into the trajectory planning of the automation. Evaluations in an actual vehicle with several drivers demonstrated the capability of the proposed multi-level framework to enable smooth collaborative steering operations with less effort for drivers.
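As a rough illustration of how the three functions could fit together, here is a minimal Python sketch. It is not the controller from the paper: the spring-damper torque law, the linear blending of driver intention, every function and parameter name, and all numerical values are assumptions chosen only to make the structure concrete. The driver's intended steering angle is taken as given, whereas in practice it would have to be estimated.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class InteractionType:
    """Hypothetical description of a preselected type of interaction."""
    name: str
    stiffness: float  # N*m/rad: how firmly the automation holds its target (assumed)
    damping: float    # N*m*s/rad (assumed)
    inclusion: float  # 0..1: how far driver intention shifts the target (assumed)


# Illustrative values only; they do not come from the paper.
COLLABORATION = InteractionType("collaboration", stiffness=4.0, damping=0.5, inclusion=0.8)
COMPETITION = InteractionType("competition", stiffness=12.0, damping=0.5, inclusion=0.0)


def include_driver_intention(planned_angle, driver_angle, mode):
    """'Inclusion': move the automation's target toward the driver's intended angle."""
    return planned_angle + mode.inclusion * (driver_angle - planned_angle)


def arbitrate(mode):
    """'Arbitration': set the automation dynamics from the type of interaction."""
    return mode.stiffness, mode.damping


def interaction_torque(target_angle, angle, rate, stiffness, damping):
    """'Interaction': spring-damper torque that the driver can feel and push against."""
    return stiffness * (target_angle - angle) - damping * rate


def steering_step(mode, planned_angle, driver_angle, angle, rate):
    """One control step combining the three levels."""
    target = include_driver_intention(planned_angle, driver_angle, mode)
    stiffness, damping = arbitrate(mode)
    return interaction_torque(target, angle, rate, stiffness, damping)


# The driver holds the wheel at 0.1 rad while the automation planned 0.0 rad.
torque_collab = steering_step(COLLABORATION, 0.0, 0.1, angle=0.1, rate=0.0)
torque_compete = steering_step(COMPETITION, 0.0, 0.1, angle=0.1, rate=0.0)
```

In this toy setup the automation resists the driver much less under the collaborative setting than under the competitive one, which is the qualitative behavior described above: a softer automation that absorbs the driver's intention asks for less driver effort.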

JTEKT Corporation has named this steering control technology for automated driving Pairdriver™ (Figure 2) and is working on the development of ADAS and automated driving technologies that are intuitive and safe for the driver.
