There has been a recent surge of interest in graph learning with deep neural networks, as shown by a wide spectrum of applications such as molecular property prediction for drug design, recommender systems for social networks, and combinatorial optimization for electronic design automation. This makes it one of the most topical research fields in machine learning.

However, in the era of big data and the Internet of Things, the volume of graph-structured data is growing explosively. As a result, deep learning with graphs on traditional von Neumann computers leads to frequent and massive data shuttling between physically separated off-chip memory and processing units, inevitably incurring large time and energy overheads. In addition, as transistor size approaches its physical limit, Moore’s law, which has fueled the development of CMOS chips for decades, is slowing down, making further performance gains in digital hardware increasingly hard to achieve. Moreover, training conventional graph neural networks can be expensive because of the tedious error backpropagation needed for node and graph embedding. These growing challenges in both hardware (the von Neumann bottleneck and the limits of transistor scaling) and software (tedious training) call for a brand-new paradigm of graph learning.

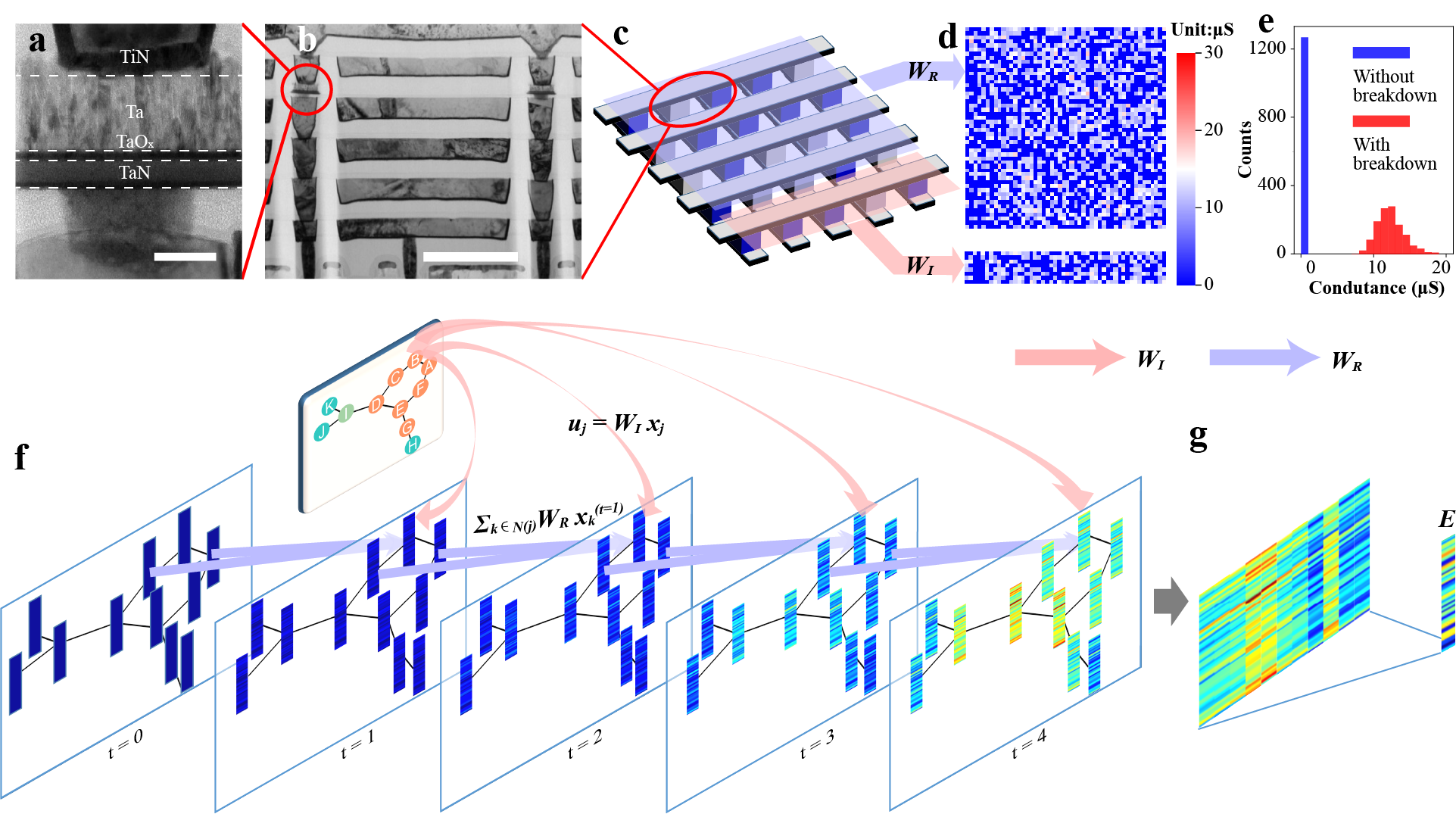

Emerging in-memory computing with resistive memory may provide a novel solution. It employs arrays of tunable and scalable resistors (resistive switches or memristors) to compute using simple physical laws. Because the data are effectively stored and processed in the same resistors, this approach naturally overcomes the von Neumann bottleneck and achieves better efficiency. However, resistive memory suffers from a variety of issues when its resistance is changed, including large programming energy and duration compared to transistor switching as well as intrinsic stochasticity, which offset its efficiency advantage and have prevented it from being used in graph learning. Here we present a novel hardware-software co-design, the random resistor array-based echo state graph neural network, to address all of the aforementioned challenges. The random resistor arrays not only harness low-cost, nanoscale and stackable resistors for highly efficient in-memory computing using simple physical laws, but also leverage the intrinsic stochasticity of dielectric breakdown to implement random projections in hardware for an echo state network, which effectively minimizes the training cost thanks to its fixed and random weights. For the first time, graph learning was experimentally demonstrated on emerging memory, with state-of-the-art graph and node classification performance on representative datasets (MUTAG, COLLAB and CORA), while achieving significant improvements in energy efficiency (2.16×, 35.42× and 40.37×) and reductions in training cost (99.35%, 99.99% and 91.40%) compared to conventional graph learning on digital hardware. Our random resistor array-based echo state graph neural network will not only enable efficient and affordable real-time graph learning thanks to the novel hardware-software co-design, but, more importantly, will also resolve the programming obstacle of resistive in-memory computing, leading to transformative impacts on next-generation AI hardware.
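To make "computations using simple physical laws" concrete, here is a minimal software sketch of how a resistor array can carry out a matrix-vector product. It assumes the laws in question are Ohm's law (each resistor passes a current equal to conductance times voltage) and Kirchhoff's current law (currents sharing a column wire add up); the text above does not name them. The function names, the conductance range, and the uniform distribution are illustrative assumptions and are not taken from the work described here.

```python
import random


def random_conductances(n_rows, n_cols, g_low=1e-6, g_high=1e-4, seed=0):
    """Draw fixed random conductances (in siemens) for an n_rows x n_cols array.

    Assumption: a uniform distribution is used purely for illustration; the
    statistics of real dielectric breakdown are not modeled here.
    """
    rng = random.Random(seed)
    return [[rng.uniform(g_low, g_high) for _ in range(n_cols)]
            for _ in range(n_rows)]


def crossbar_mvm(conductances, voltages):
    """Return the current collected on each column of the array.

    conductances[i][j] joins row wire i to column wire j, and voltages[i] is
    applied to row i. Each device contributes G * V (Ohm's law) and the
    contributions on one column are summed (Kirchhoff's current law), so the
    output is the product of the transposed conductance matrix with the
    voltage vector.
    """
    n_rows = len(conductances)
    n_cols = len(conductances[0])
    if len(voltages) != n_rows:
        raise ValueError("one voltage per row is required")
    return [sum(conductances[i][j] * voltages[i] for i in range(n_rows))
            for j in range(n_cols)]


if __name__ == "__main__":
    g = random_conductances(4, 3)
    print(crossbar_mvm(g, [0.1, 0.2, 0.0, 0.3]))
```

In this picture the weights are never rewritten after they are drawn, which is the property that lets fixed random conductances serve as a random projection without repeated programming of the devices.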

Figure 1. Software-hardware co-design of the learning process for the echo state graph neural network based on random resistor arrays.
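The echo state idea, in which the weights stay fixed and random so that they need no backpropagation, can be sketched in a few lines of plain Python. This is a toy illustration under stated assumptions, not the authors' model: the update rule (each node combines a random projection of its own features with a random projection of the summed states of its neighbours, passed through tanh), the sum pooling into a graph embedding, and every name and hyperparameter below are hypothetical choices.

```python
import math
import random


def random_matrix(n_rows, n_cols, scale, rng):
    """Fixed random weights, standing in for a random resistor array."""
    return [[rng.uniform(-scale, scale) for _ in range(n_cols)]
            for _ in range(n_rows)]


def matvec(matrix, vector):
    """Plain matrix-vector product."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def echo_state_graph_embedding(features, adjacency, hidden=8, steps=3, seed=0):
    """Embed a graph using only fixed random weights (nothing is trained).

    features[v] is the feature vector of node v and adjacency[v] lists the
    neighbours of node v. Assumed update rule, for illustration only:
        h_v <- tanh(W_in x_v + W_rec * sum of neighbour states)
    The graph embedding is the sum of the final node states.
    """
    rng = random.Random(seed)
    w_in = random_matrix(hidden, len(features[0]), 1.0, rng)
    w_rec = random_matrix(hidden, hidden, 0.1, rng)  # small scale keeps states bounded
    states = [[0.0] * hidden for _ in features]
    for _ in range(steps):
        new_states = []
        for v, x in enumerate(features):
            # Aggregate the previous states of the neighbours of node v.
            agg = [0.0] * hidden
            for u in adjacency[v]:
                agg = [a + s for a, s in zip(agg, states[u])]
            drive = matvec(w_in, x)
            echo = matvec(w_rec, agg)
            new_states.append([math.tanh(d + e) for d, e in zip(drive, echo)])
        states = new_states
    # Sum pooling over nodes gives one vector per graph.
    return [sum(s[k] for s in states) for k in range(hidden)]


if __name__ == "__main__":
    # A three-node path graph 0 - 1 - 2 with one-hot node features.
    feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
    adj = [[1], [0, 2], [1]]
    print(echo_state_graph_embedding(feats, adj))
```

In a scheme of this kind, only a lightweight readout on top of the embedding (for example a linear classifier) would need training, which is how fixed random weights can reduce training cost.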


Nature Machine Intelligence

