Why the Weight of Evidence Matters in Efficacy and Effectiveness Studies

Published in Behavioural Sciences & Psychology and Education

Efficacy and effectiveness are two important aspects of measuring impact in educational technology (EdTech). Together, they demonstrate that an EdTech solution not only works under controlled conditions but also has the potential to work in diverse real-world settings, ideally with replicated effects.

There are several frameworks evaluating whether and how EdTech tools, designed for teaching and learning in K12, work. Foster et al. (2023) conducted a rapid literature review and identified 74 relevant frameworks that fall into four categories: frameworks that analyse the quality components of EdTech design, frameworks that assess whether EdTech is meeting users' needs, frameworks that consider alignments between an EdTech and digital pedagogy, and frameworks that evaluate whether EdTech products adopt an evidence-informed approach.

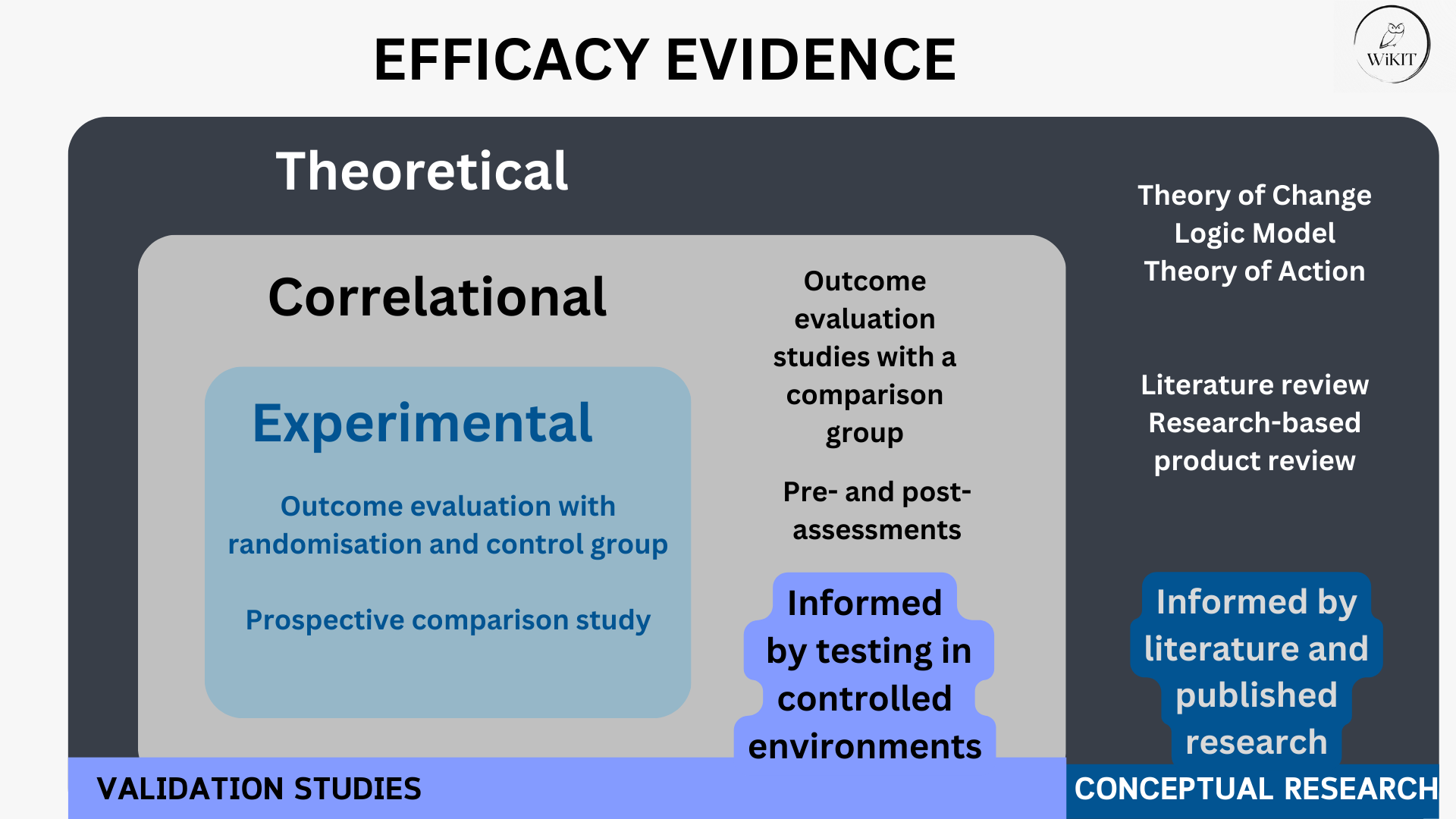

The fourth category comprises efficacy and effectiveness frameworks. These frameworks are crucial for EdTech quality discussions as they align with current governmental priorities concerning "what works" in EdTech. Reflecting this focus, our report "Consolidated Benchmark for Efficacy and Effectiveness Frameworks in EdTech" systematically reviewed all currently available frameworks and consolidated them based on criteria such as the weight of evidence and the rigour of the studies that the frameworks consider.

The importance of rigour evaluations

The emphasis on rigour holds a central position in learning sciences, a sentiment underscored by scholars such as Gough (2017) in the paper "Weight of evidence: a framework for the appraisal of the quality and relevance of evidence". The significance of rigour in evaluating EdTech interventions is also emphasised in our npj Science of Learning paper, where we introduced an EVER evaluation routine incorporating rigour assessments. Why does the weight of evidence matter?

A meta-analysis (a statistical synthesis of the results of multiple studies) published in a top-ranking journal carries a very different weight from a small-scale experiment reported in a Master's thesis. Similarly, a one-day observation of a single teacher yields very different insights from a series of focus group interviews conducted over a year and guided by strong theories. The independence of the study (i.e. who conducted it), together with factors such as effect size and whether randomisation was employed, plays a crucial role in determining a study's rigour.

Rigour and various disciplines

Assessing the rigour of a study would be incomplete without relevant subject matter expertise. This is why, in high-stakes impact evaluations, assessments of rigour are entrusted to subject matter experts who know how to gauge the quality of a study, ensuring a match between the evaluators' expertise and the nature of the intervention: a literacy intervention, for example, should be evaluated by a literacy researcher rather than a chemistry professor.

Another important dimension to consider is methodological plurality: both qualitative and quantitative studies are necessary for each type of evidence related to efficacy and effectiveness. Different disciplines have different criteria for the methodologies employed in quantitative and qualitative studies.

Rigour and diverse types of evidence

The weight of evidence is a critical consideration across all types of research, whether focusing on efficacy or effectiveness. As figures 1 and 2 show, studies can be either empirical (validation or implementation studies) or they can remain on the theoretical and review levels. The different colours of the squares in the figures represent the resource intensity required to conduct each type of research, whether this is in controlled settings (efficacy) or in real settings (effectiveness). The importance of rigour evaluations applies to both kinds of studies: the highest quality should be ensured whether the study is a literature review or an experiment.

Regardless of the nature of the evidence presented by an EdTech product, be it conceptual or empirical, focused on effectiveness or on efficacy, it deserves to be evaluated and recognised for its rigour.
