Behind the Paper: Why I Wrote “From Assistants to Agents”

Published in Social Sciences and Philosophy & Religion

When we talk about artificial intelligence, much of the debate still oscillates between two familiar images. On one side, AI is treated as a sophisticated tool: something that supports human action but remains external to responsibility. On the other side, AI is sometimes described in near-anthropomorphic terms, as if it were becoming an autonomous actor in its own right. Both views, in different ways, seemed incomplete to me.

What increasingly interested me was a more difficult question: what happens when AI is neither merely assistive nor fully autonomous, but instead operates within structured systems of delegation, supervision, and institutional responsibility? In other words, what happens when action is distributed across humans, artificial systems, and governance structures?

That question became the starting point for my article, From Assistants to Agents: A Relational Framework for Human–AI Co-Agency, now published in AI and Ethics.

The central intuition behind the article is simple: the rise of agentic AI requires us to rethink agency relationally. In many real-world settings, AI does not act in isolation. Its apparent initiative is enabled, bounded, and interpreted through human decisions, institutional goals, technical design, and governance mechanisms. This means that responsibility cannot be understood by looking only at the machine, or only at the individual user. It has to be understood through the structure of the broader sociotechnical system.

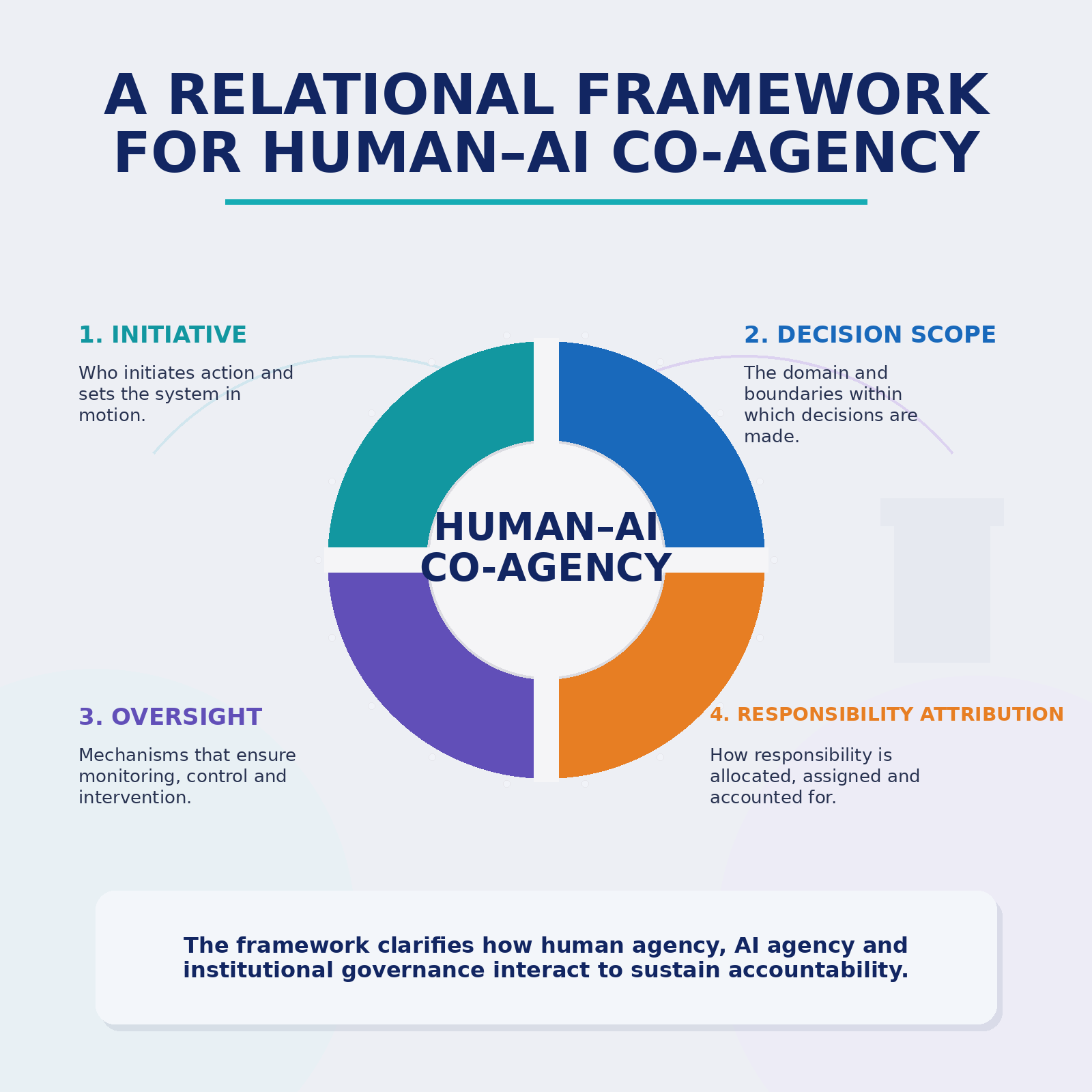

To make that argument more operational, I proposed a four-dimensional framework built around initiative, decision scope, oversight, and responsibility attribution. These dimensions are intended to help clarify where delegation begins, how far it extends, how supervision is preserved, and how accountability should remain anchored even when systems take on greater initiative.

One reason I felt this mattered is that discussions about AI governance often remain either too abstract or too procedural. We speak in large terms about ethics, trust, regulation, and policy, but we often lack a practical conceptual bridge between theory and institutional design. I wanted this work to contribute to that middle layer: not only asking what AI is, but how human–AI arrangements should be evaluated when decisions, actions, and consequences are distributed.

I was also concerned that the vocabulary of “autonomy” can sometimes obscure more than it reveals. The issue is not simply whether a system appears autonomous. The more important question is whether institutions remain capable of structuring delegation responsibly. A system may appear highly capable while still depending on weak governance. Conversely, human accountability can be eroded not because humans disappear, but because roles, boundaries, and responsibility structures become blurred.

In that sense, the challenge posed by agentic AI is not machine agency in isolation. It is the alignment of delegated action with meaningful human oversight and institutional responsibility. More broadly, I hope this work contributes to a more grounded and rigorous conversation about how societies, organizations, and governance systems should respond as AI becomes more agentic.

Read the article

SharedIt: https://rdcu.be/fgHrc

DOI: https://doi.org/10.1007/s43681-026-01111-5
