In-sensor wireless computing for intelligent remote sensing

Published in Bioengineering & Biotechnology and Electrical & Electronic Engineering

The field of remote sensing faces a long-standing, inherent contradiction between limited wireless communication bandwidth and the ultrahigh volume of visual information (typically wide-field, high-resolution images) that must be transmitted to ground stations for real-time analysis and decision-making. Conventional machine vision architectures perform full imaging and pixel-wise analog-to-digital conversion, followed by complex digital compression and signal modulation for wireless transmission. Because sensing, analog-to-digital conversion, compression, and transmission are carried out as separate steps, latency is severe. The problem is even worse for low-Earth-orbit satellites, whose short overhead pass leaves only a brief window for effective wireless transmission. There is therefore a pressing need for highly efficient, high-throughput wireless transmission of large-scale images, enabling real-time applications such as hotspot monitoring and early warning of forest fire risk.

To resolve this challenge, we asked: could new modes of device operation or interconnection, like those in our previous work [Nature Electronics 7, 225 (2024)], serve as additional computational resources that fuse sensing, compression, modulation, and wireless transmission into a single step performed directly in the analog domain?

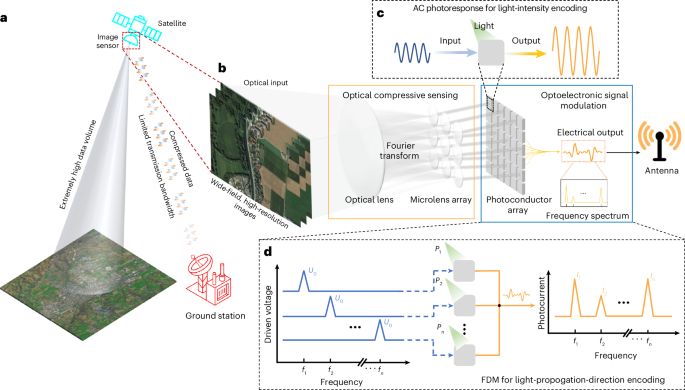

In our recent study published in Nature Sensors, we introduce an in-sensor wireless computing scheme based on an integrated optical and electrical architecture. The original image is sensed and compressed directly by an optical lens-based Fourier transform: only the low spatial-frequency components at the back focal plane are retained and transmitted. A Shack–Hartmann microlens array encodes these components by mapping their amplitudes to light intensities and their phases to light propagation directions. At the same time, a photoconductor array based on alternating-current (AC) photoresponse and frequency-division multiplexing (FDM) records the light intensities and propagation directions. The frequency spectrum of the array's summed photocurrent therefore carries the compressed spatial-frequency information of the original image. In this way, visual information is encoded directly into the frequency spectrum of a short electrical signal (much like the tones of a song), which is then transmitted wirelessly into free space via an antenna.
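To make the encoding chain concrete, here is a minimal numerical sketch. It assumes the lens acts as an ideal centered 2D Fourier transform (modeled with NumPy's `fft2` and `fftshift`), that a square `cutoff` × `cutoff` window of low-frequency components is retained, and that each channel is assigned one integer FFT bin as its AC carrier. The function names and the carrier assignment are hypothetical, not the authors' implementation. For simplicity, the sketch carries each component's phase as the phase of its tone rather than modeling the Shack–Hartmann direction encoding.

```python
import numpy as np

def optical_fourier_compress(image, cutoff):
    """Model the lens as a centered 2D FFT and keep a cutoff x cutoff low-frequency window."""
    spectrum = np.fft.fftshift(np.fft.fft2(image))  # DC moved to the array center
    cy, cx = spectrum.shape[0] // 2, spectrum.shape[1] // 2
    half = cutoff // 2
    return spectrum[cy - half:cy - half + cutoff, cx - half:cx - half + cutoff]

def fdm_encode(coeffs, n_samples, base_bin=1):
    """Assign each coefficient its own carrier bin and sum all tones into one real signal."""
    t = np.arange(n_samples)
    signal = np.zeros(n_samples)
    for i, c in enumerate(coeffs.ravel()):
        k = base_bin + i  # hypothetical carrier: channel i oscillates at FFT bin base_bin + i
        signal += np.abs(c) * np.cos(2 * np.pi * k * t / n_samples + np.angle(c))
    return signal

def fdm_decode(signal, shape, base_bin=1):
    """Read each channel's amplitude and phase back from its carrier bin in the spectrum."""
    n_channels = int(np.prod(shape))
    if base_bin + n_channels > len(signal) // 2:
        raise ValueError("carriers must stay below the Nyquist bin")
    bins = np.fft.rfft(signal)[base_bin:base_bin + n_channels]
    return (2 * bins / len(signal)).reshape(shape)

image = np.random.default_rng(0).random((32, 32))
kept = optical_fourier_compress(image, cutoff=8)  # 64 of 1024 spectral components
signal = fdm_encode(kept, n_samples=256)          # one summed "photocurrent" trace
recovered = fdm_decode(signal, kept.shape)
print(np.allclose(recovered, kept))  # True
```

Integer carrier bins make the tones exactly orthogonal over the record, which is why each coefficient can be read straight from its bin. A physical FDM array would likely need guard bands and calibration for non-ideal carriers, which this idealized sketch ignores.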

We built a proof-of-concept end-to-end system for wireless transmission and reception and tested it experimentally. At the receiving end, we demonstrated both reconstruction of the original image via an inverse Fourier transform and recognition performed directly on the frequency spectrum of the received signal. Transmission latency was reduced by 96.8% compared with the conventional machine vision architecture. In simulation, we further demonstrated scalability to high-resolution remote sensing images in practical applications and robustness under realistic wireless channel conditions. Our in-sensor wireless computing scheme turns “sense first, then compress, then transmit” into “sensing is compression and transmission”, fundamentally reshaping the conventional machine vision architecture. Image compression in our scheme is intrinsic to light propagation, and signal modulation is inherent to the AC photoresponse of the phototransistor array; the scheme has no notion of conventional spatial pixels or frame rate. Moreover, according to a recent study published in Nature [Nature 650, 346-352 (2026)], the two-dimensional photosensitive material used in our array has excellent radiation tolerance, making it well suited to satellite applications in outer space.
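The reconstruction step at the receiver can be sketched as a zero-filled inverse FFT. This is a minimal sketch, assuming the received amplitudes and phases have already been decoded into a centered low-frequency window under an ideal lens model; `low_pass_window`, `reconstruct`, and `rms_error` are hypothetical helpers, not the authors' code. Sweeping the window size illustrates the trade-off between the number of transmitted components and reconstruction error.

```python
import numpy as np

def low_pass_window(image, cutoff):
    """Centered 2D FFT of the image, cropped to a cutoff x cutoff low-frequency window."""
    spectrum = np.fft.fftshift(np.fft.fft2(image))
    cy, cx = image.shape[0] // 2, image.shape[1] // 2
    half = cutoff // 2
    return spectrum[cy - half:cy - half + cutoff, cx - half:cx - half + cutoff]

def reconstruct(window, full_shape):
    """Zero-fill the discarded frequencies, undo the shift, and apply the inverse FFT."""
    padded = np.zeros(full_shape, dtype=complex)
    cy, cx = full_shape[0] // 2, full_shape[1] // 2
    h, w = window.shape
    padded[cy - h // 2:cy - h // 2 + h, cx - w // 2:cx - w // 2 + w] = window
    return np.fft.ifft2(np.fft.ifftshift(padded)).real  # the scene is real-valued

def rms_error(a, b):
    """Root-mean-square pixel error between two images."""
    return float(np.sqrt(np.mean((a - b) ** 2)))

image = np.random.default_rng(1).random((32, 32))
for cutoff in (5, 9, 17, 32):
    approx = reconstruct(low_pass_window(image, cutoff), image.shape)
    print(cutoff, cutoff * cutoff, round(rms_error(image, approx), 4))
```

Odd window sizes keep the retained spectrum conjugate-symmetric, so the inverse transform is real up to rounding. At `cutoff=32` the full spectrum is kept and the error falls to rounding level; smaller windows keep the mean brightness (the DC term) but lose fine detail.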

Our scheme is suited to overall assessment of large-scale scenes and real-time preliminary screening, although some high spatial-frequency components, and hence fine details of the environment, are currently lost. As a next step, by scaling up the array, raising the cutoff frequency, and adopting new encoding techniques, we aim to let a built-in AI agent actively and adaptively select which parts of the frequency-domain image information to transmit. Federated learning could also be introduced through hardware-software co-design, promoting edge-cloud collaborative intelligence and enhancing data security. Furthermore, the scheme can be extended to continuous-time video, including three-dimensional depth and spatial motion information.
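In the authors' plans, adaptive selection of frequency components is left to a built-in AI agent. As a hypothetical stand-in only, the sketch below compares a fixed low-pass window with a simple largest-magnitude rule under the same component budget, measuring how much spectral energy each keeps. The test scene, mask helpers, and selection rule are illustrative assumptions and do not represent the agent.

```python
import numpy as np

def low_pass_mask(shape, cutoff):
    """Boolean mask (in fftshift-centered coordinates) for a fixed cutoff x cutoff window."""
    mask = np.zeros(shape, dtype=bool)
    cy, cx = shape[0] // 2, shape[1] // 2
    half = cutoff // 2
    mask[cy - half:cy - half + cutoff, cx - half:cx - half + cutoff] = True
    return mask

def top_k_mask(spectrum, k):
    """Hypothetical content-adaptive rule: keep the k components with the largest magnitude."""
    flat = np.abs(spectrum).ravel()
    mask = np.zeros(flat.size, dtype=bool)
    mask[np.argpartition(flat, -k)[-k:]] = True
    return mask.reshape(spectrum.shape)

def retained_energy(spectrum, mask):
    """Fraction of spectral energy kept; by Parseval's theorem, also the image-energy fraction."""
    power = np.abs(spectrum) ** 2
    return float(power[mask].sum() / power.sum())

# Test scene: a coarse cosine along x plus a fine 12-cycle stripe pattern along y.
y, x = np.mgrid[0:32, 0:32]
image = np.cos(2 * np.pi * x / 32) + 0.5 * np.cos(2 * np.pi * 12 * y / 32)
spectrum = np.fft.fftshift(np.fft.fft2(image))

budget = 9 * 9  # same number of transmitted components for both rules
fixed = retained_energy(spectrum, low_pass_mask(spectrum.shape, 9))
adaptive = retained_energy(spectrum, top_k_mask(spectrum, budget))
print(round(fixed, 3), round(adaptive, 3))  # 0.8 1.0
```

The fixed window misses the fine stripes entirely, while the magnitude rule captures them with the same budget. An adaptive scheme would, however, also have to transmit which components were chosen, an overhead that a fixed low-pass window avoids and that this sketch does not count.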
