Machine learning (ML) is emerging as one of the main disruptive technologies of our time, with an influence comparable to that of the widespread adoption of computers in the 1980s and 1990s [1]. Its origins can be traced to neurophysiological theory in the mid-twentieth century [2] and to early work on artificial intelligence; the term itself was coined in 1959 in the context of developing algorithms to play checkers [3]. What lies behind the current explosion of ML techniques is the introduction of multilayer feed-forward networks and their variants [4]. These have driven dramatic transformations in image and speech recognition and processing, financial fraud detection, spam filtering, web search and targeted advertising, and have opened up a variety of other applications, such as face recognition and photo sorting on smartphones. In science, ML is being applied in an ever-increasing number of domains, including drug discovery, genomics, statistical physics, quantum mechanics, high-energy physics and cosmology [5,6,7,8,9].

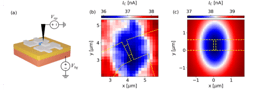

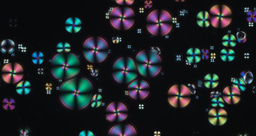

Our use of ML in our own field, experimental condensed matter physics, started out of necessity. The physical phenomena we were studying in frustrated magnetic systems were becoming increasingly complex, and the data were becoming too hard to analyse and manage with conventional approaches. Spin ice typified this: we had to tease out the underlying model from seemingly conflicting experimental data and theoretical findings. Our strategy was to use neutron scattering experiments (at Oak Ridge National Laboratory) to measure the diffuse scattering patterns of this material at low temperatures and in different applied magnetic fields. We chose diffuse neutron scattering because it is the most information-rich technique available, providing detailed information on the correlations in the system; however, inverting the scattering process to recover those correlations is a notoriously difficult problem.

We took inspiration from the way experimentalists learn to interpret the patterns in scattering experiments: they build up their intuition from experience over a wide variety of cases. With complex problems and the massive three- and four-dimensional data sets coming off state-of-the-art instruments, this is no longer feasible for any human, but it is for machines. We emulated this learning process with an autoencoder (a neural network that passes data through a constriction and reproduces it, thereby abstracting a condensed representation of the data), which we trained on thousands of computer-generated data sets. When fed the experimental data, the autoencoder was not only able to identify the model (specifically, the Hamiltonian) and its parameters accurately; the ML tools also allowed us to categorize different magnetic behaviours and to eliminate background noise and artefacts from the raw data, an otherwise onerous task that would have taken months [10].
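The compression-and-reconstruction idea behind the autoencoder can be illustrated with a deliberately simplified sketch. It is not the network used in [10]. As an assumption, a linear autoencoder stands in for the nonlinear one: for a squared-error loss, the optimal linear bottleneck spans the same subspace as principal component analysis, computed here with an SVD. The "diffuse scattering" patterns are hypothetical one-dimensional toy profiles controlled by two made-up amplitudes (`amp1`, `amp2`), and all function names are illustrative. Training on many simulated patterns defines a two-dimensional bottleneck, and passing a noisy pattern through it shows how the condensed representation suppresses noise.

```python
import numpy as np


def fit_linear_autoencoder(data, latent_dim):
    """Fit a linear autoencoder to the rows of `data` (one pattern per row).

    Returns the mean pattern and an orthonormal basis of the bottleneck.
    """
    mean = data.mean(axis=0)
    # The top right singular vectors of the centred data span the same subspace
    # that an optimal linear autoencoder with squared-error loss learns (PCA).
    _, _, vt = np.linalg.svd(data - mean, full_matrices=False)
    basis = vt[:latent_dim].T  # shape (n_pixels, latent_dim)
    return mean, basis


def encode(x, mean, basis):
    # Project pixel-space patterns onto the latent space.
    return (x - mean) @ basis


def decode(z, mean, basis):
    # Map latent vectors back to pixel space.
    return z @ basis.T + mean


def simulate_patterns(rng, n, n_pixels=400):
    """Hypothetical toy 'diffuse scattering' patterns: smooth 1D profiles
    controlled by two made-up amplitudes (not a real spin-ice simulation)."""
    q = np.linspace(0.0, 2.0 * np.pi, n_pixels)
    amp1 = rng.uniform(0.0, 1.0, size=(n, 1))
    amp2 = rng.uniform(0.0, 1.0, size=(n, 1))
    return amp1 * np.cos(q) + amp2 * np.cos(2.0 * q)


rng = np.random.default_rng(0)
train = simulate_patterns(rng, 2000)  # stand-in for computer-generated data sets
mean, basis = fit_linear_autoencoder(train, latent_dim=2)

clean = simulate_patterns(rng, 50)
noisy = clean + rng.normal(scale=0.3, size=clean.shape)  # mock measurement noise
denoised = decode(encode(noisy, mean, basis), mean, basis)

err_noisy = np.mean((noisy - clean) ** 2)
err_denoised = np.mean((denoised - clean) ** 2)
print(f"MSE vs clean: noisy {err_noisy:.4f}, after bottleneck {err_denoised:.4f}")
```

A nonlinear autoencoder replaces the two matrix products in `encode` and `decode` with trained neural networks, which lets the bottleneck follow curved families of patterns that a linear projection cannot capture.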

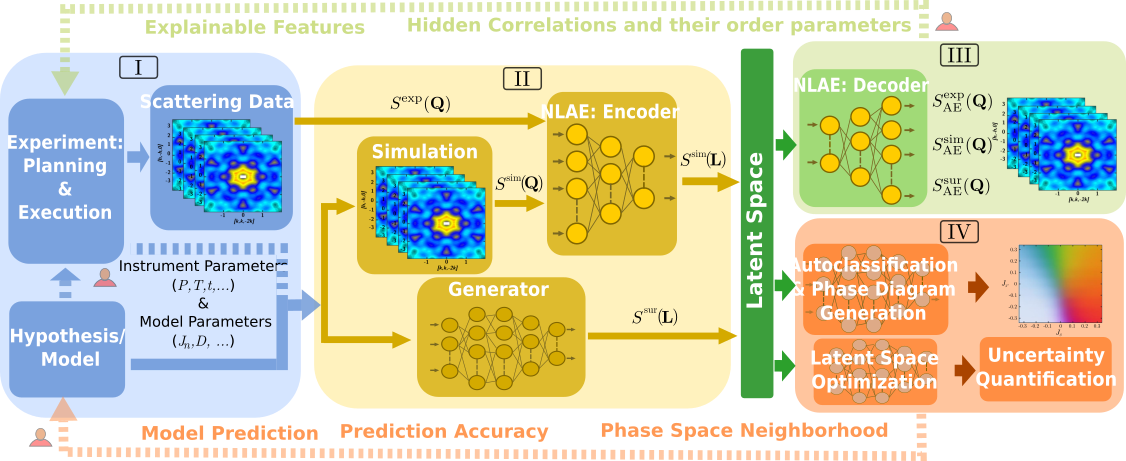

While our first efforts, which focused on analysis, led to significant breakthroughs in understanding spin ice, we realised that ML could have a bigger impact on the way we conduct the experiments themselves. To explore this, we considered the essential "pipeline" of a neutron scattering experiment, from conceptualization and planning to execution, analysis and interpretation. Our main insight was that the so-called latent space (LS), the compressed representation space produced by the autoencoder, can unify disparate tasks and integrate machine learning with high-performance simulations and scattering measurements [11]. Fig. 1 shows an overview of the resulting scheme. Machine learning techniques form part of several stages of the experiment: they help pre-process the experimental data, classify magnetic behaviour, generate roadmaps for parameter exploration, and aid modelling and parameter determination. Crucially, the ML modules allow some of these processes to run in real time without heavy computing resources, which means that high-level feedback can be given on site while the experiment is under way. This matters because neutron scattering experiments take place at large-scale facilities, where making efficient use of the limited beam time assigned to each experiment is essential.
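Classifying behaviour in the latent space can be sketched in a few lines. Several assumptions apply: PCA again stands in for the trained autoencoder, the two "magnetic regimes" are hypothetical toy peak shapes rather than real spin-ice phases, and a nearest-centroid rule serves as a simple classifier. This is an illustrative choice, not a description of the classifier in [11]. Once the latent basis and class centroids have been computed from simulations, labelling a new pattern costs one projection and a few distance evaluations, which is the kind of cheap step that can run on site.

```python
import numpy as np

rng = np.random.default_rng(1)
q = np.linspace(-3.0, 3.0, 300)


def toy_pattern(centre, width):
    # Hypothetical toy "diffuse scattering" profile: one Gaussian peak.
    return np.exp(-((q - centre) ** 2) / (2.0 * width**2))


def simulate_regime(label, n):
    """Toy simulations for two hypothetical regimes: regime 0 has a broad
    peak near q = -1, regime 1 a sharp peak near q = +1."""
    if label == 0:
        centres, widths = rng.normal(-1.0, 0.1, n), rng.uniform(0.6, 0.9, n)
    else:
        centres, widths = rng.normal(1.0, 0.1, n), rng.uniform(0.15, 0.3, n)
    return np.stack([toy_pattern(c, w) for c, w in zip(centres, widths)])


# Labelled library of simulated patterns.
train_x = np.vstack([simulate_regime(0, 500), simulate_regime(1, 500)])
train_y = np.repeat([0, 1], 500)

# Linear latent space (PCA via SVD) as a stand-in for a trained autoencoder.
mean = train_x.mean(axis=0)
_, _, vt = np.linalg.svd(train_x - mean, full_matrices=False)
basis = vt[:3].T  # 3D latent space


def to_latent(x):
    return (x - mean) @ basis


# One centroid per regime, computed in latent space.
train_z = to_latent(train_x)
centroids = np.stack([train_z[train_y == k].mean(axis=0) for k in (0, 1)])


def classify(patterns):
    """Nearest-centroid label for each pattern, computed in latent space."""
    z = to_latent(patterns)
    dists = np.linalg.norm(z[:, None, :] - centroids[None, :, :], axis=2)
    return np.argmin(dists, axis=1)


# Mock "measured" patterns: fresh simulations with added noise.
test_x = np.vstack([simulate_regime(0, 20), simulate_regime(1, 20)])
test_x = test_x + rng.normal(scale=0.1, size=test_x.shape)
test_y = np.repeat([0, 1], 20)
accuracy = np.mean(classify(test_x) == test_y)
print(f"latent-space classification accuracy: {accuracy:.2f}")
```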

Fig. 1 Schematic overview of machine-learning integration into the direct and inverse scattering problem. The workflow is split into four main sections: (I) scattering experiment design and optimization; (II) parameter space exploration and information compression; (III) structure or property predictions; and (IV) parameter space predictions. Section II links to both III and IV via the latent space (LS), a compressed version of the large pixel space. Dashed lines with a silhouette indicate parts of the flow that currently still require some human intervention. The latent space representations are used in surrogates that bypass expensive calculations. […] their prediction accuracy.

The approach in this latest work uses nonlinear autoencoders trained on realistic simulations, together with a fast surrogate for the scattering calculation in the form of a generative model. Experimental data, simulations and predictions all feed into the reduced-dimensional LS, from which structure, properties and model parameters are predicted. Compressing the data into the LS also reduces experimental noise and removes artefacts. The LS representations are either decoded, so that humans can compare experiment and modelling directly, or used directly by ML modules to construct a phase diagram of the system or to determine the model parameters that best reproduce the experimental data. Processed results, together with improved modelling and predictions, are then fed back to guide the choice of instrument and experimental parameters. The fast surrogate, which generates elements of the LS from model parameters, enables real-time, exhaustive exploration of parameter space while the neutron scattering experiment is running. In the example presented in our work, a conventional simulation for a single set of parameters can take hundreds of CPU hours, whereas the trained surrogate needs only 0.1 CPU seconds, a speed-up of more than six orders of magnitude!
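The surrogate-driven parameter search can be sketched as follows, under clearly stated assumptions. The surrogate described above is a generative model; here, as a simpler stand-in, a quadratic least-squares regression maps two model parameters to latent coordinates. The function `expensive_simulation` is a hypothetical toy that merely plays the role of a simulation costing hundreds of CPU hours, PCA once more replaces the autoencoder, and the "true" parameters are made up for the demonstration. After fitting on a modest number of simulations, the surrogate scores every point of a 101 x 101 parameter grid against the measured pattern's latent vector.

```python
import numpy as np

rng = np.random.default_rng(2)
q = np.linspace(0.0, 2.0 * np.pi, 256)


def expensive_simulation(params):
    """Hypothetical stand-in for a costly simulation: two model parameters
    (a, b) -> a 1D toy 'scattering pattern'."""
    a, b = params
    return a * np.cos(q) + a**2 * np.cos(2.0 * q) + b * np.cos(3.0 * q)


def features(p):
    # Quadratic polynomial features of the parameters, shape (n, 6).
    a, b = p[:, 0], p[:, 1]
    return np.column_stack([np.ones_like(a), a, b, a**2, a * b, b**2])


# 1. Run the "expensive" simulation on a modest set of parameter samples.
train_p = rng.uniform(0.0, 1.0, size=(200, 2))
train_x = np.stack([expensive_simulation(p) for p in train_p])

# 2. Compress to a 3D latent space (PCA stand-in for the autoencoder).
mean = train_x.mean(axis=0)
_, _, vt = np.linalg.svd(train_x - mean, full_matrices=False)
basis = vt[:3].T
train_z = (train_x - mean) @ basis

# 3. Fit the surrogate (parameters -> latent vector) by least squares.
coef, *_ = np.linalg.lstsq(features(train_p), train_z, rcond=None)


def surrogate(p):
    return features(p) @ coef


# 4. Mock measurement with hypothetical "true" parameters plus noise.
true_p = np.array([0.3, 0.7])
measured = expensive_simulation(true_p) + rng.normal(scale=0.05, size=q.shape)
z_exp = (measured - mean) @ basis

# 5. Exhaustive grid scan that calls only the cheap surrogate.
ga, gb = np.meshgrid(np.linspace(0, 1, 101), np.linspace(0, 1, 101), indexing="ij")
grid = np.column_stack([ga.ravel(), gb.ravel()])
mismatch = np.linalg.norm(surrogate(grid) - z_exp, axis=1)
best_p = grid[np.argmin(mismatch)]
print("best-fit parameters:", best_p)
```

The scan evaluates all 10,201 parameter sets with a single matrix product, which is why a cheap surrogate makes exhaustive exploration practical during an experiment, whereas calling the simulation itself at every grid point would not be.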

We have carried out a first implementation of these ideas and tested it in an experiment on the spin ice Dy2Ti2O7 under hydrostatic pressure. The results show that hydrostatic pressure successfully tunes the further-neighbour couplings and that a pressure of 1.3 GPa perturbs the system enough to modify its nanoscale magnetic order.

References

[1] Carleo, Giuseppe, et al. "Machine learning and the physical sciences." Reviews of Modern Physics 91.4 (2019): 045002.

[2] Hebb, Donald Olding. The organization of behavior: A neuropsychological theory. Psychology Press, 2005.

[3] Samuel, Arthur L. "Machine learning." The Technology Review 62.1 (1959): 42-45.

[4] Goodfellow, Ian, Yoshua Bengio, and Aaron Courville. Deep learning. MIT Press, 2016.

[5] Vamathevan, Jessica, et al. "Applications of machine learning in drug discovery and development." Nature Reviews Drug Discovery 18.6 (2019): 463-477.

[6] Libbrecht, Maxwell W., and William Stafford Noble. "Machine learning applications in genetics and genomics." Nature Reviews Genetics 16.6 (2015): 321-332.

[7] Agliari, Elena, et al. "Machine learning and statistical physics: theory, inspiration, application." Journal of Physics A: Special 2020 (2020).

[8] Hush, Michael R. "Machine learning for quantum physics." Science 355.6325 (2017): 580-580.

[9] Ishida, Emille EO. "Machine learning and the future of supernova cosmology." Nature Astronomy 3.8 (2019): 680-682.

[10] Samarakoon, Anjana M., et al. "Machine-learning-assisted insight into spin ice Dy2Ti2O7." Nature Communications 11.1 (2020): 1-9.

[11] Samarakoon, Anjana M., et al. "Integration of machine learning with neutron scattering for the Hamiltonian tuning of spin ice under pressure." Communications Materials 3 (2022): 84.
