An Unaffiliated Researcher’s Journey Through Academia and Beyond

Published in Social Sciences and Education

The Credibility Question

I was putting up my poster at an academic conference when a delegate came over to help. Beneath the title that had initially intrigued him was my affiliation, which became the topic of our discussion instead: it stated that I’m an independent researcher. He had many questions about how it was possible to be an independent researcher without funding, adding that I must be hiding something. When I explained that I had conducted a self-funded survey during a healthcare drive by a certain foundation, he opined that I should credit the foundation in my affiliation (instead of mentioning them below), or else people wouldn’t “take [me] seriously.” Hoping to steer the conversation in a positive direction, I asked him about his background. Alas, he dodged the question and kept saying he wasn’t convinced about mine, finally moving on with a half-hearted nod.

Gatekeeping and the Shape of Credibility

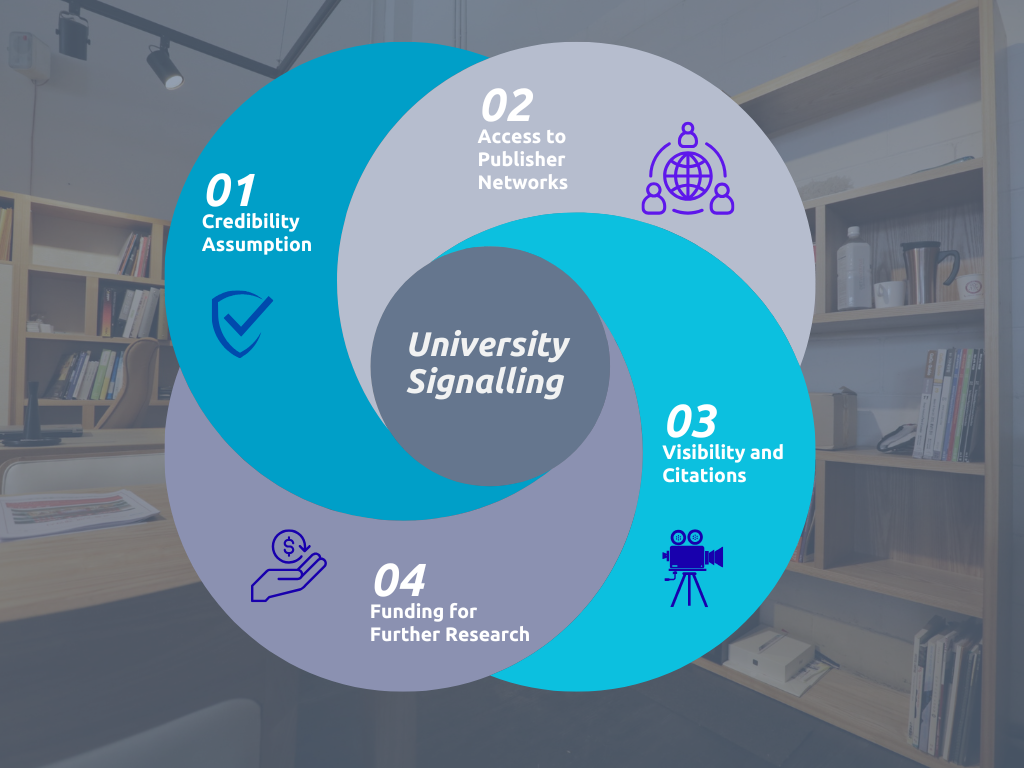

I didn’t blame him for his scepticism; it was hardly personal but rather systemic. It was the result of years of gatekeeping in academic circles, where universities work as credibility signals whose rankings in turn depend on the number of theses credited to them. This creates a self-reinforcing cycle, like the one illustrated above.

It’s this publish-or-perish culture that pushed China to test a “no thesis” policy, under which students would instead work on an end product. This also raises a broader question, though: if outputs change form, does that truly change how we evaluate credibility, or does it simply change where manipulation may occur?

Finding My Way into Research

Far be it from me to judge an imperfect system; I myself didn’t believe I could be a researcher without a doctorate, even though I’d spent nearly ten years training and working with academic researchers and editors, helping authors get published in Nature, Springer, and the like.

The researcher in me would’ve remained dormant if it weren’t for a random afternoon I spent flipping through the pages of an academic book, where I saw my friend’s name in the acknowledgements section. I quickly texted her asking for details, and she explained how she’d contributed to the study. Suddenly, research seemed more accessible, closer to home.

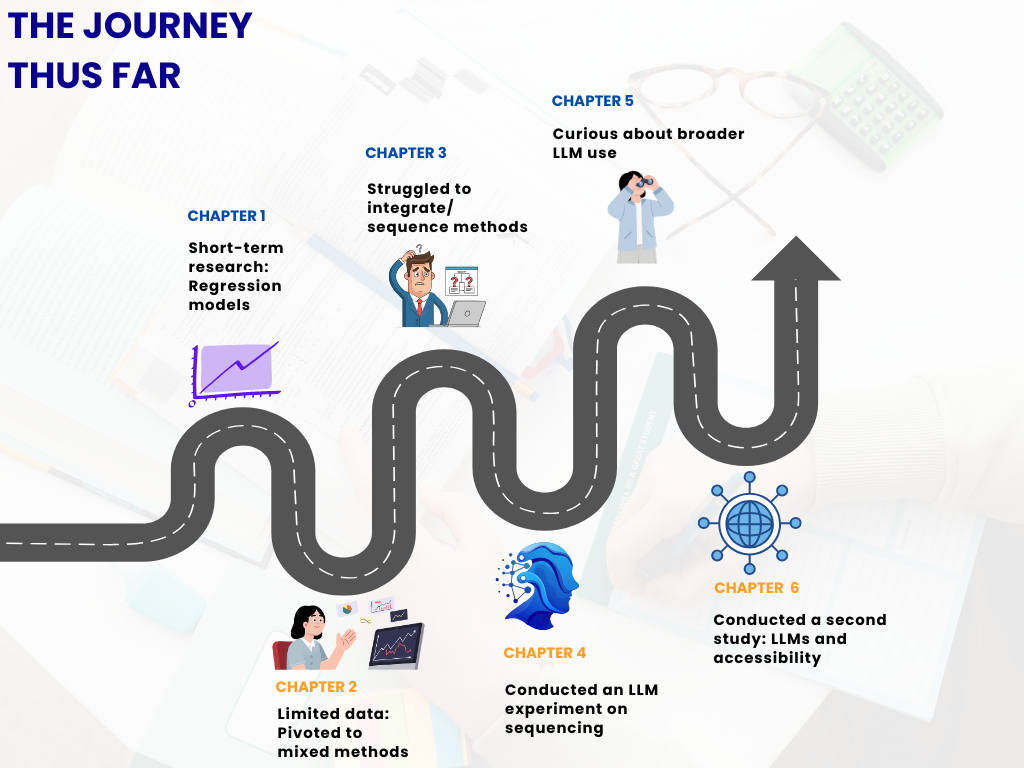

Inspired but cautiously so, I started off on a simple note. I decided to run a few regression models to examine inflation’s impact on income inequality in the short run. This topic has been studied extensively, but given the extent of geopolitical changes in the year before I conducted my study, I thought it pertinent to examine the effects for that year in isolation. However, one can’t have one’s cake and eat it too; I wanted to work with a simple regression model on a short-term research question, but such research is by nature characterised by data constraints. As such, I had to adopt a mixed methodology that included thematic analysis of economic reports and some survey data.

Experimenting with LLMs as Research Tools

But for all of it to make sense together, I needed to understand the sequence in which the results should be analysed. Researchers have varying sequencing preferences when it comes to mixed methods, and I too had some confirmation bias, having already conducted the study in a particular order (beginning with the regression models). So I turned to the elephant in the room: an LLM. But not in the way one would typically imagine. I didn’t ask GPT which sequence works best for mixed methods research. Instead, I provided it with my quantitative results followed by my qualitative and survey findings, instructed it to analyse the results in the given order, and asked for a combined interpretation; I ran this prompt ten times independently. I then reversed the order and did the same. I manually compared the depth of the outputs to understand which sequence led to richer interpretation, and used this to guide other researchers facing a similar dilemma, sharing LLM transcripts for transparency and reproducibility on platforms like Editage Insights and Sage’s Social Science Space. I later explored a related policy-focused angle for Queen Mary University of London’s blog.
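For readers who want to try a similar order comparison, the procedure above can be sketched in Python. This is only an illustration, not the exact setup used in the study: the prompt wording, the function names, and `ask_model` (a stand-in for whichever LLM interface you use, assumed to start a fresh conversation on every call) are all hypothetical.

```python
from typing import Callable, Dict, List

N_RUNS = 10  # each ordering is repeated ten times, as described above


def build_prompt(sections: List[str]) -> str:
    """Present the result sections in the given order and request a combined interpretation."""
    numbered = "\n\n".join(
        f"Part {i}:\n{text}" for i, text in enumerate(sections, start=1)
    )
    # Illustrative wording only; the study's actual prompt is in the shared transcripts.
    return (
        "Analyse the following results strictly in the order given, "
        "then provide a combined interpretation.\n\n" + numbered
    )


def run_both_orders(
    quantitative: str,
    qualitative: str,
    ask_model: Callable[[str], str],
    n_runs: int = N_RUNS,
) -> Dict[str, List[str]]:
    """Collect n_runs replies for each of the two presentation orders."""
    orders = {
        "quant_first": [quantitative, qualitative],
        "qual_first": [qualitative, quantitative],
    }
    return {
        name: [ask_model(build_prompt(sections)) for _ in range(n_runs)]
        for name, sections in orders.items()
    }


if __name__ == "__main__":
    # Placeholder model so the sketch runs without any external service.
    def fake_model(prompt: str) -> str:
        return f"(reply to a {len(prompt)}-character prompt)"

    transcripts = run_both_orders(
        "Regression results go here.",
        "Thematic and survey findings go here.",
        fake_model,
    )
    for name, replies in transcripts.items():
        print(name, len(replies))
```

The replies are kept grouped by ordering so that they can be read side by side and shared as transcripts; judging which ordering yields the richer interpretation remains a manual step.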

Given my interest in exploring the use of LLMs in research, I decided to make them the main subject of my second study, which examined their ability to simplify peer reviewer feedback (in finance) for people with cognitive disabilities. In the context of the European Accessibility Act, where inclusivity is increasingly being built into systems alongside rising AI investments, such questions are becoming harder to ignore. I conducted this test using two prompt types for each of ten peer reviewer comments: one asking the model to make the feedback easier to understand for people with cognitive disabilities, and another asking it to simply clarify the feedback without specifying a target group. Further, I ran each prompt twice to check for consistency and drift. Once again, I shared transcripts.
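The design of this second test (ten comments, two prompt types, two runs each) amounts to a grid of 40 trials, which could be laid out as in the sketch below. The prompt templates paraphrase the description above and are assumptions, as are the `Trial` structure and the function name.

```python
from itertools import product
from typing import List, NamedTuple

# Hypothetical templates paraphrasing the two prompt types described above.
PROMPT_TEMPLATES = {
    "targeted": (
        "Make this peer reviewer feedback easier to understand "
        "for people with cognitive disabilities:\n{comment}"
    ),
    "generic": "Clarify this peer reviewer feedback:\n{comment}",
}
RUNS_PER_PROMPT = 2  # each prompt is run twice to check consistency


class Trial(NamedTuple):
    comment_id: int
    prompt_type: str
    run: int
    prompt: str


def build_trials(comments: List[str]) -> List[Trial]:
    """Enumerate every comment x prompt type x run combination."""
    return [
        Trial(cid, ptype, run, PROMPT_TEMPLATES[ptype].format(comment=comment))
        for (cid, comment), ptype, run in product(
            enumerate(comments, start=1),
            PROMPT_TEMPLATES,
            range(1, RUNS_PER_PROMPT + 1),
        )
    ]


if __name__ == "__main__":
    placeholder_comments = [f"Reviewer comment {i}" for i in range(1, 11)]
    trials = build_trials(placeholder_comments)
    print(len(trials))  # 10 comments x 2 prompt types x 2 runs
```

Listing the trials up front makes it easier to confirm that every comment received both prompt types and both runs before comparing the outputs.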

Looking back, this process was far from linear. Instead, it involved a series of iterations, pivots, and moments of uncertainty, which I’ve tried to capture below.

Rethinking Dissemination

For my second study, the stakeholders I believed could benefit from my findings were scattered across platforms. Hence, it was time for me to branch out from the conventional research space (which I did cover via the likes of Scholarly Kitchen, nonetheless) by writing about my study in accessible language for Disability Horizons, providing editorial suggestions in this regard for the European Association of Science Editors, and nudging the technology industry towards inclusivity in national newspapers such as Mid-Day. Once I stepped into this space, I began writing opinion pieces for news columns, exploring themes like rethinking feedback in Indian classrooms and future-proofing finance skills in an AI-driven world in publications such as Ahmedabad Mirror and Zee Business.

This is where I developed a stronger focus on dissemination rather than academic publication. I began to see research as something to be translated and reshaped rather than just published. The illustration below reflects this perspective, highlighting what I kept in mind when sharing research.

[Illustration: what I kept in mind when sharing research]

With the written word reaching a wider readership, why should the spoken word stay behind? With this thought, I stepped beyond spaces like academic conferences to speak at events such as TEDx. While only the first of my four TEDx talks was about my research, it’s the one I credit for revealing abilities I didn’t know I had. Over time, this journey itself became a story, with the Times of India featuring my transition from editing to researching to speaking up!

I found myself approaching even my non-research talks in a similar way: asking questions, drawing connections across domains, and examining everyday experiences with the same curiosity I once reserved only for formal studies.

For example, my latest talk was about changes in the music industry (in Spanish for TEDx Antofagasta, because let’s break the language barriers while we’re at it). I drew on concepts such as cognitive overload to explain the overwhelming choices we have today, exposure theory to reflect on how repeated listening has declined, and dopamine-driven behaviour to explain our tendency to constantly switch between songs.

Receiving feedback from event curators like “explain what these mean; the audience members aren’t scientists” made me think of imagery such as a stuffed wardrobe as a metaphor for cognitive overload, the kind that leads us to the classic thought of “I have nothing to wear!” But more importantly, it highlighted how we tend to assume prior knowledge on others’ part. So, I’ll leave you with this guide, a small parting gift on how to make complex ideas clearer and more accessible.

[Illustration: a guide to making complex ideas clearer and more accessible]

PS: Taking a page from what I’ve learnt about keeping things simple, I tried to be as comprehensive and clear as possible when I recently shared my FIRST peer review for a tier-one publisher! The review wasn’t blinded, and I had access to the authors’ affiliations. However, I didn’t look at them, because I didn’t believe they mattered.

