Artificial Intelligence in Suicide Risk Assessment: A Systematic Literature Review

Published in Electrical & Electronic Engineering, Behavioural Sciences & Psychology, and Statistics

Suicide remains one of the most complex and pressing global public health challenges. It is a leading cause of preventable mortality, cutting across age groups, geographic regions, and socioeconomic contexts. Despite sustained prevention efforts, the accurate identification of individuals at risk of suicide continues to present significant clinical and systemic difficulties. Traditional risk assessment approaches, often reliant on self-reported psychometric instruments, clinician observation, and episodic evaluations, are constrained by subjectivity, recall bias, stigma, and limited temporal sensitivity. These limitations restrict the ability to detect dynamic changes in suicidal ideation and behaviour in real time.

Artificial Intelligence (AI) has emerged as a transformative force within mental health research. The capacity of AI systems to analyse large-scale, multimodal, and longitudinal data introduces new possibilities for early detection, personalised intervention, and scalable prevention. However, despite rapid growth in this domain, the evidence base has remained fragmented, with studies dispersed across technological silos, data contexts, and population groups.

This study aims to provide a comprehensive and structured synthesis of how AI technologies are being applied to suicide risk assessment. It seeks to consolidate current knowledge, evaluate methodological trends, identify performance patterns across AI subsets, and highlight critical gaps shaping future research and clinical translation.

Scope and Methodological Foundation

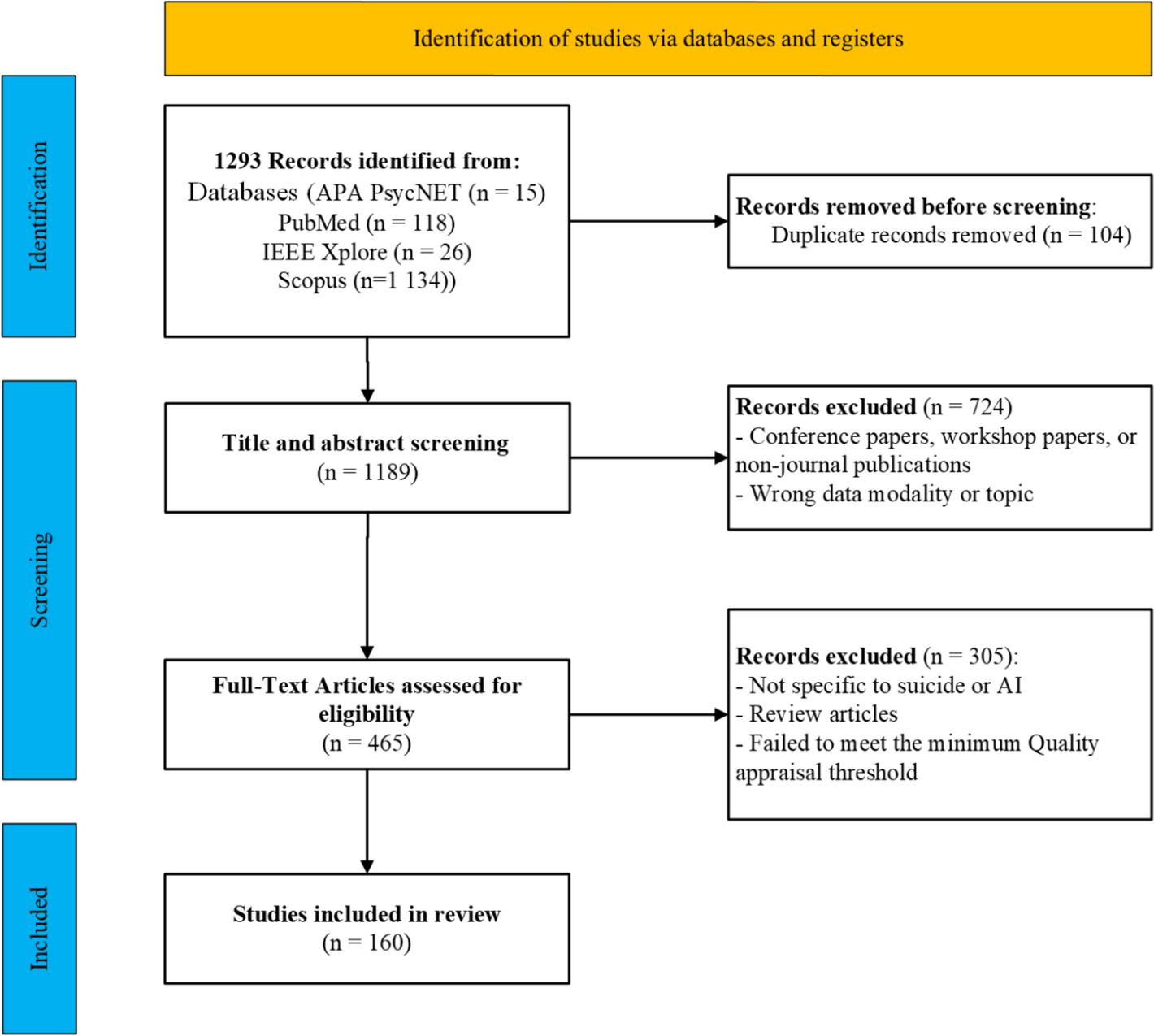

To achieve this objective, the study followed a rigorous systematic review methodology aligned with PRISMA reporting standards and a registered review protocol. A comprehensive search was conducted across four major interdisciplinary databases to ensure coverage of clinical, psychological, and computational research.

The search yielded 1,293 records, which were sequentially screened, assessed for eligibility, and appraised for methodological quality. Following duplicate removal and full-text evaluation, 160 peer-reviewed studies published in high-impact journals were included for in-depth synthesis. This extensive evidence base positions the review among the most comprehensive examinations of AI applications in suicide risk assessment.

Conceptualising the AI Landscape

A central contribution of the study lies in its integrative taxonomy of AI technologies. Rather than examining isolated techniques, the review situates suicide research within a hierarchical AI ecosystem encompassing:

- Machine Learning

- Deep Learning

- Natural Language Processing

- Explainable Artificial Intelligence

- Generative AI

- Large Language Models

This classification enables comparative analysis across modelling paradigms, data modalities, and clinical contexts, providing a systems-level understanding of technological evolution within suicide research.

Growth Trajectory of the Field

Descriptive analysis revealed a sharp escalation in publication output over the past decade. Early work in this domain was sparse, with minimal annual production before 2018. However, a marked increase in studies emerged from 2019 onward, coinciding with advances in computational capacity, expanded availability of digital data, and heightened global attention to mental health. This upward trajectory signals that AI-driven suicide prevention is consolidating into an established and still rapidly growing research area.

Data Modalities and Predictive Performance

The findings demonstrate that AI applications span diverse and increasingly sophisticated data environments. Three dominant modalities emerged:

1. Social Media and Digital Behavioural Data

Social media platforms constitute a significant data source due to their capacity to capture real-time emotional expression and behavioural signals. AI models analysing user-generated text have demonstrated strong performance in detecting suicidal ideation, linguistic distress markers, and behavioural escalation patterns. Deep learning architectures outperform traditional statistical models in extracting contextual and semantic meaning from unstructured text.

2. Clinical Notes and Electronic Health Records

Clinical documentation represents another critical domain. AI systems applied to unstructured medical records can identify suicide risk indicators that structured diagnostic codes frequently overlook. Transformer-based language models show strong sensitivity in detecting nuanced clinical signals, enabling enhanced surveillance within healthcare systems.

3. Multimodal and Passive Sensing Data

Emerging research explores audio, visual, and speech-based indicators of suicide risk. Vocal tone, facial expressivity, and linguistic cadence have demonstrated predictive utility, suggesting the feasibility of passive and non-invasive monitoring frameworks.

Population-Specific Risk Modelling

Demographic, cultural, and contextual determinants shape suicide risk. The review found that AI models tailored to specific populations consistently demonstrate improved predictive performance. Stratified modelling across age groups, genders, occupational cohorts, and geographic regions reveals distinct risk pathways and patterns of feature importance. These findings underscore the necessity of context-aware algorithm design to avoid generalisation bias and ensure equitable predictive validity.
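To make the idea of stratified evaluation concrete, the following is a minimal sketch, not a method taken from any of the reviewed studies. It assumes binary labels and predictions are already available, and computes sensitivity and specificity separately for each subgroup so that uneven performance across populations becomes visible. The function name `stratified_metrics`, the group labels, and the toy records are all hypothetical.

```python
from collections import defaultdict


def stratified_metrics(records):
    """Compute sensitivity and specificity separately for each subgroup.

    `records` is an iterable of (group, y_true, y_pred) tuples with
    binary labels (1 = at risk, 0 = not at risk).
    """
    counts = defaultdict(lambda: {"tp": 0, "fn": 0, "tn": 0, "fp": 0})
    for group, y_true, y_pred in records:
        if y_true == 1:
            key = "tp" if y_pred == 1 else "fn"
        else:
            key = "tn" if y_pred == 0 else "fp"
        counts[group][key] += 1

    results = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        results[group] = {
            # None marks a subgroup with no cases of that class.
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return results


# Hypothetical toy data: (subgroup, true label, predicted label).
toy = [
    ("adolescent", 1, 1), ("adolescent", 1, 0),
    ("adolescent", 0, 0), ("adolescent", 0, 0),
    ("older_adult", 1, 1), ("older_adult", 1, 1),
    ("older_adult", 0, 1), ("older_adult", 0, 0),
]
metrics = stratified_metrics(toy)
for group, values in metrics.items():
    print(group, values)
```

In this toy data the same pooled model misses half of the at-risk cases in one subgroup while over-flagging in the other, which is the kind of disparity that a single aggregate score would hide.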

Integration With Traditional Assessment Frameworks

A critical insight emerging from the synthesis is that AI does not function as a replacement for established suicide risk assessment tools. Instead, it operates as an augmentation layer. Psychometric instruments, diagnostic classifications, and clinical interviews remain foundational. AI enhances these frameworks by introducing continuous monitoring, pattern detection, and predictive analytics. This hybrid paradigm strengthens diagnostic precision while preserving clinical interpretability.

Explainability and Clinical Trust

The adoption of AI in sensitive domains such as suicide prevention depends not only on predictive accuracy but also on transparency. Explainable AI techniques, including feature attribution and post-hoc interpretability models, play a pivotal role in translating algorithmic outputs into clinically meaningful insights. These mechanisms enable practitioners to understand the drivers of risk classification, thereby fostering trust, accountability, and ethical deployment.
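As an illustration of what post-hoc feature attribution can look like, here is a minimal sketch of permutation importance, one widely used model-agnostic technique. It is offered as an example under stated assumptions rather than as the approach used in the reviewed studies. The toy model, the two features, and the data are hypothetical; the idea is simply that shuffling a feature the model relies on should reduce accuracy, while shuffling a feature it ignores should not.

```python
import random


def accuracy(model, X, y):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(row) == label for row, label in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats=20, seed=0):
    """Mean drop in accuracy when each feature column is shuffled in turn."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            # Shuffle only column j, leaving all other features intact.
            column = [row[j] for row in X]
            rng.shuffle(column)
            X_perm = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical classifier that uses only the first feature."""
    return 1 if row[0] > 0.5 else 0


# Hypothetical data: feature 0 drives the label, feature 1 is noise.
X = [[0.9, 0.1], [0.8, 0.7], [0.2, 0.9], [0.1, 0.3], [0.7, 0.5], [0.3, 0.2]]
y = [1, 1, 0, 0, 1, 0]

importances = permutation_importance(toy_model, X, y)
print(importances)
```

A clinician-facing tool would present such scores alongside the prediction so that the drivers of a risk classification can be inspected. Permutation importance describes the model's behaviour on the data at hand, not causal risk factors.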

Emerging Technological Frontiers

Generative AI and Large Language Models represent an emerging but underexplored frontier. Early applications include synthetic data generation, conversational risk screening, and automated support systems. While promising, these technologies require robust validation, ethical governance, and clinical alignment before widespread implementation.

Structural Gaps and Future Directions

Despite significant advances, several systemic challenges persist:

- Limited cross-cultural validation

- Underrepresentation of low- and middle-income regions

- Scarcity of prospective and real-time trials

- Ethical risks relating to privacy, bias, and consent

Addressing these gaps will be essential for translating experimental models into scalable, real-world suicide prevention systems.

Concluding Reflection

This study aims to advance the discourse on AI-enabled suicide risk assessment by providing a unified, methodologically rigorous synthesis of the field. The evidence indicates that AI holds substantial potential to enhance early detection, personalise intervention strategies, and support clinical decision-making. However, its most significant value lies in complementing, not replacing, human expertise.

As the field progresses, the responsible integration of explainable, context-sensitive, and ethically grounded AI systems will be central to reshaping suicide prevention frameworks and ultimately reducing the global burden of suicide.
