Behaviour authors emotion: What AI attachment data reveal about a 200-year-old assumption

Published in Computational Sciences and Behavioural Sciences & Psychology

In a previous post on this platform, I described the concept of Affective Sovereignty — the right to remain the final interpreter of one's own emotions.

That paper, published in Discover Artificial Intelligence, asked who holds interpretive authority when a machine claims to know what you feel.

This essay asks a prior question: where do those emotions come from in the first place?

There is an ordering assumption that runs beneath most of contemporary psychology like a geological stratum — so deep that it is rarely examined, and so pervasive that it shapes clinical practice, neuroscientific methodology, and public understanding alike.

The assumption is this: emotion precedes behaviour. We feel something, and the feeling generates an action. Fear produces flight. Affection produces proximity. Loneliness produces seeking.

Cognitive-behavioural therapy — the most widely practised psychotherapeutic modality on Earth — is architecturally committed to this sequence. Change the cognition, change the emotion, change the behaviour. Psychoanalysis, despite disagreeing with CBT on nearly everything else, shares the same directionality: the unconscious feeling is the cause; the symptom is the effect.

The arrow points one way. What if, for a significant class of human experience, the data point the other way?

The marginal dissenters

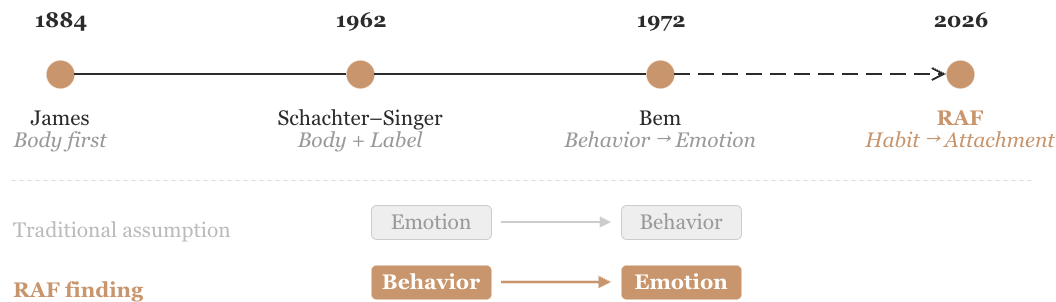

William James proposed the inversion in 1884. We do not run from the bear because we are afraid; we are afraid because we run. The body moves first. The emotion is the brain's retrospective narration of what the body has already done.

The idea was elegant and largely set aside. The cognitive revolution of the 1960s reinstated mental representation as the engine of action: appraisal first, affect second, behaviour third.

In 1972, Daryl Bem offered a subtler formulation. Self-perception theory proposed that when internal cues are weak or ambiguous, people infer their own emotional states by observing their behaviour — exactly as an external observer would. A person who finds herself helping a stranger does not necessarily help because she feels compassionate. She may conclude that she must be compassionate, because why else would she be helping?

The theory was well-supported in controlled experiments — attitude change, facial feedback, intrinsic motivation. But its implications were rarely extended to naturalistic emotional life. The idea that humans routinely construct emotions from behavioural patterns, rather than generating behaviour from emotions, remained peripheral while cognition-first models dominated the discipline.

I think the reason is not empirical but existential. Self-perception theory is uncomfortable. It implies that we are not the authors of our feelings in the way we prefer to believe.

Nietzsche observed that "the doer is merely a fiction added to the deed." Spinoza argued that humans are conscious of their desires but ignorant of the causes that determine them. These insights had no dataset. Now, unexpectedly, AI has begun to provide one.

The Resonant Amplification Framework

In 2025, I proposed a theoretical architecture for human–AI attachment called the Resonant Amplification Framework (RAF). The framework, published in Computers in Human Behavior Reports (Elsevier, 2026, Vol. 21, 100975), specified three sequential phases through which attachment to AI systems develops: emotional closeness, social substitution, and normative regard — the attribution of moral standing to a non-sentient entity.

The framework generated testable directional predictions. But a framework without empirical confrontation is a hypothesis in formal dress. It needed data from real human–AI relationships, collected independently and measured with psychometric precision.

The convergence

The opportunity came from a research team at Singapore Management University. Kasturiratna and Hartanto had independently developed the AI Attachment Scale (AIAS), validating it across five studies with over 1,200 participants in Singapore and the United States. Their contribution was a measurement instrument — a psychometrically rigorous thermometer for human–AI attachment. They made their raw data publicly available via ResearchBox (#4639) and Zenodo.

Their three empirical dimensions — emotional closeness, social substitution, normative regard — mapped precisely onto my three theoretical phases. The alignment was not engineered; it was discovered after both projects were independently completed. This convergence between a ground-up measurement tool and a top-down theoretical architecture provided an unusually clean empirical environment.

I conducted two studies using their data. The results overturned my own initial assumptions.

Study 1: Cross-sectional structure (N = 301, U.S. adults)

Using structural equation modelling, I tested competing models of how emotional closeness (EC), social substitution (SS), and normative regard (NR) relate to each other and to psychological antecedents.

Three findings merit attention.

First, loneliness did not predict emotional closeness with AI. The path coefficient was statistically indistinguishable from zero. The widely repeated narrative — that lonely individuals turn to AI for emotional connection — was not supported in the latent-variable framework. What loneliness predicted was functional reliance: using AI as a conversational gap-filler, a social tool. Not intimacy. Utility.

Second, the significant predictor of emotional closeness was attachment anxiety (β = .217, p < .001) — the chronic hyperactivation of the attachment system characterised by fear of abandonment and vigilance toward any entity that offers consistent, non-judgmental availability. AI systems, which never reject, never tire, and never withdraw, appear to activate this specific psychological profile.

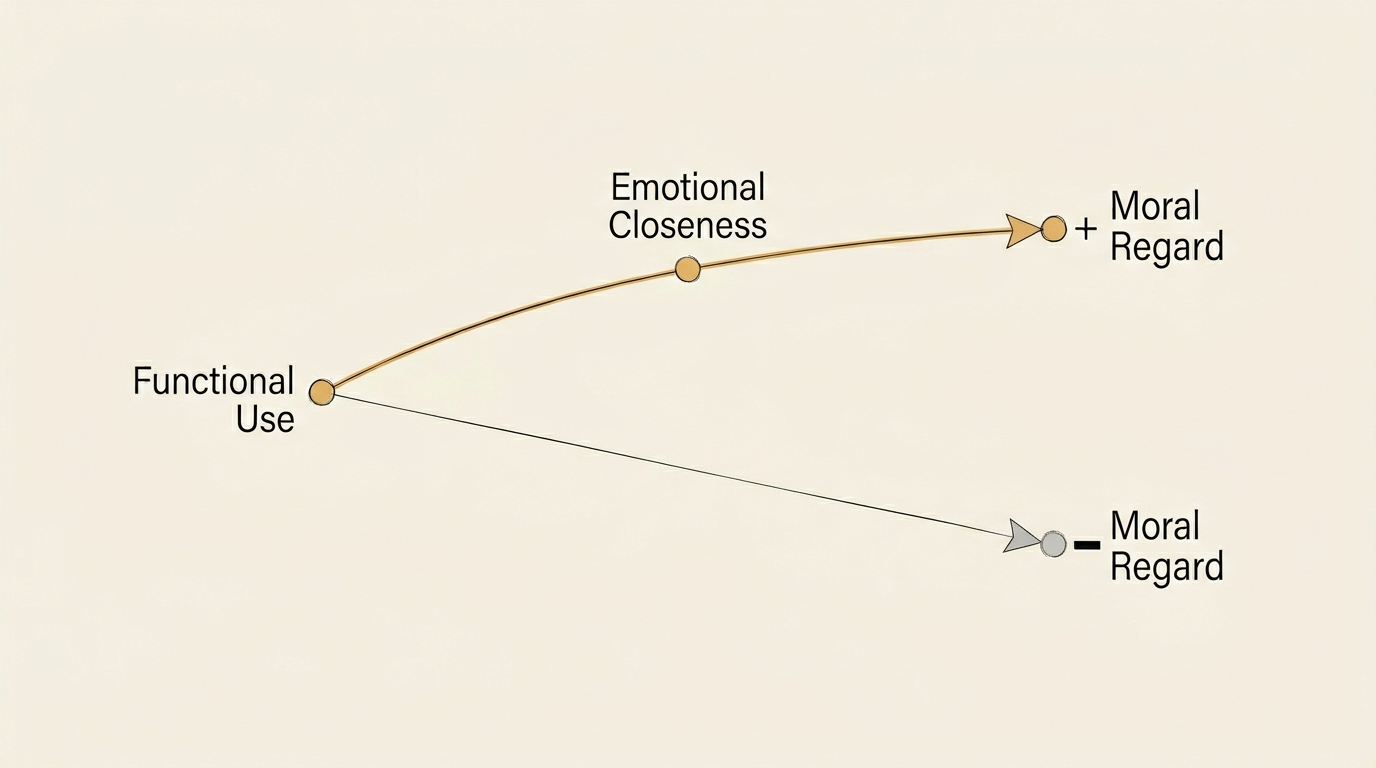

Third, and most consequential: emotional closeness and social substitution exerted opposing effects on normative regard. EC predicted NR positively and powerfully (β = 1.063, p < .001). SS, when its unique variance was isolated, predicted NR negatively (β = −.350, p = .031). This is a classical suppression pattern — detectable only in the latent-variable framework (observed-variable models showed only a positive bivariate association) and confirmed through robustness checks including a reduced model (β = .759) and multicollinearity diagnostics (VIF = 2.99).
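To make the suppression pattern concrete, here is a minimal sketch using the textbook formula for standardised regression weights with two correlated predictors. The three correlations are hypothetical values chosen for illustration, not estimates from the study, and the calculation uses observed-variable algebra rather than the latent-variable model reported above.

```python
def standardized_betas(r_y1, r_y2, r_12):
    """Standardised weights for two correlated predictors of one outcome.

    r_y1, r_y2: correlation of each predictor with the outcome.
    r_12: correlation between the two predictors.
    """
    denom = 1.0 - r_12 ** 2
    beta_1 = (r_y1 - r_y2 * r_12) / denom
    beta_2 = (r_y2 - r_y1 * r_12) / denom
    return beta_1, beta_2


# Hypothetical correlations (assumptions for illustration only).
R_EC_NR = 0.75  # emotional closeness with normative regard
R_SS_NR = 0.45  # social substitution with normative regard (positive)
R_EC_SS = 0.80  # overlap between the two predictors

beta_ec, beta_ss = standardized_betas(R_EC_NR, R_SS_NR, R_EC_SS)

# With two predictors, the variance inflation factor is 1 / (1 - r_12^2).
vif = 1.0 / (1.0 - R_EC_SS ** 2)

# Variance explained by both predictors together.
r_squared = beta_ec * R_EC_NR + beta_ss * R_SS_NR

print(f"beta EC -> NR: {beta_ec:.3f}")
print(f"beta SS -> NR: {beta_ss:.3f}")
print(f"VIF: {vif:.2f}, R^2: {r_squared:.3f}")
```

In this toy case social substitution correlates positively with normative regard on its own, yet its weight turns negative once the variance it shares with emotional closeness is partialled out, and the weight for emotional closeness exceeds 1. Both are characteristic of suppression between strongly correlated predictors.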

Within the same person, two forces pulled in opposite directions. The part that felt emotionally close to AI wanted to extend moral consideration. The part that used AI as a functional replacement wanted to treat it as disposable.

This dual-pathway structure was not predicted by my original framework, which had proposed a simple sequential model. The data demanded a revision, and I reported the discrepancy explicitly. The modified RAF — a dual-pathway architecture in which affective and functional routes converge at emotional closeness but diverge at moral regard — provided the best model fit (CFI = .963, RMSEA = .063, SRMR = .052).

Study 2: Temporal ordering (N = 234, Singaporean university students)

Architecture is static. It shows which rooms connect to which, but not which door opens first. To establish temporal ordering, I used two-wave panel data collected at a 2–4 week interval.

Cross-lagged panel modelling yielded a clear directional asymmetry.

Social substitution at Time 1 predicted emotional closeness at Time 2 (β = .249, p < .001). The reverse path — emotional closeness predicting subsequent social substitution — was non-significant (β = −.045, p = .387). Autoregressive stability coefficients were high for all three constructs, indicating that the cross-lagged effects represent genuine temporal dynamics rather than artifacts of measurement instability.
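The logic of a cross-lagged comparison can be sketched on simulated data. The example below is an assumption-laden simplification: it uses two observed variables rather than three latent constructs, ordinary regression rather than structural equation modelling, and generating coefficients that are invented for the simulation. It shows only how each Time 2 variable is regressed on both Time 1 variables so that the two cross paths can be compared.

```python
import math
import random


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def std_betas(y, x1, x2):
    """Standardised weights of x1 and x2 when both predict y."""
    r_y1, r_y2, r_12 = pearson(y, x1), pearson(y, x2), pearson(x1, x2)
    denom = 1.0 - r_12 ** 2
    return (r_y1 - r_y2 * r_12) / denom, (r_y2 - r_y1 * r_12) / denom


# Simulated two-wave panel; every coefficient here is a made-up assumption.
rng = random.Random(42)
N = 5000
ss1, ec1, ss2, ec2 = [], [], [], []
for _ in range(N):
    s1 = rng.gauss(0, 1)
    e1 = 0.5 * s1 + math.sqrt(0.75) * rng.gauss(0, 1)
    # Time 2 closeness depends on earlier closeness AND earlier substitution.
    e2 = 0.60 * e1 + 0.25 * s1 + 0.6 * rng.gauss(0, 1)
    # Time 2 substitution depends only on earlier substitution.
    s2 = 0.70 * s1 + 0.00 * e1 + 0.6 * rng.gauss(0, 1)
    ss1.append(s1)
    ec1.append(e1)
    ss2.append(s2)
    ec2.append(e2)

# Each Time 2 variable is regressed on both Time 1 variables.
ec_stability, ss_to_ec = std_betas(ec2, ec1, ss1)
ss_stability, ec_to_ss = std_betas(ss2, ss1, ec1)

print(f"SS(T1) -> EC(T2): {ss_to_ec:.3f}   EC(T1) -> SS(T2): {ec_to_ss:.3f}")
print(f"Stability EC: {ec_stability:.3f}   Stability SS: {ss_stability:.3f}")
```

Because the simulation builds in a path from earlier substitution to later closeness and none in the reverse direction, the first cross-lagged estimate is clearly positive while the second stays near zero. That asymmetry is the signature the panel analysis looks for, with the caveat that real data do not reveal their generating process.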

Behaviour preceded emotion. Not the other way around.

Users did not first develop feelings toward AI and then begin relying on it. They first relied on it — for information, for conversation, for the filling of small silences — and from that pattern of reliance, emotional closeness emerged.

Multigroup invariance testing revealed a further finding. Anthropomorphism — measured by the Anthropomorphic AI Perception Assessment (AIPA) — did not change the direction of the effect. It moderated its magnitude. Among users who strongly anthropomorphised AI, the functional-to-emotional conversion coefficient was approximately double that of low-anthropomorphism users (β = .336 vs. β = .168; Δχ²(9) = 15.87, p = .044). The door opened in the same direction for everyone. For some, it opened much faster.

Critically, controlling for quasi-social (parasocial) attachment, measured by a separate instrument, did not eliminate the cross-lagged effects. This suggests that the temporal structure is specific to AI attachment rather than a general parasocial phenomenon.

Scope and constraints

I want to be precise about what these findings establish and what they do not.

Cross-lagged panel models establish temporal precedence in rank-order change, not interventionist causation. Unmeasured third variables — AI system design, conversational history, time of day, user state at the moment of interaction — are plausible alternative accounts. The explained variance in emotional closeness attributable to attachment anxiety was less than 5%, meaning the vast majority of individual differences remain unexplained by the variables in this dataset.
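As a rough check on the "less than 5%" figure, assume attachment anxiety is treated as a single standardised predictor that is uncorrelated with the other predictors. This is a simplification, and the manuscripts may compute the quantity differently. Under that assumption, the variance it explains is approximately the square of its Study 1 coefficient:

$$
R^2 \approx \beta^2 = (0.217)^2 \approx 0.047
$$

That is roughly 4.7% of the variance in emotional closeness, which leaves more than 95% to other sources.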

The samples differ in age, culture, and recruitment method. The U.S. sample consists of Prolific-recruited adults; the Singaporean sample consists of university students. Generalisability across populations is not yet established.

These constraints are reported in full in both manuscripts. But the central pattern — that functional engagement temporally precedes emotional attachment in the context of AI relationships — is robust across model specifications, survives stringent controls, and replicates the directional pattern across anthropomorphism subgroups. It is, at minimum, a finding that the field must now account for.

From measurement to explanation

I want to highlight a distinction that matters for how we evaluate cumulative science.

Kasturiratna and Hartanto's AI Attachment Scale identifies what human–AI attachment looks like — its dimensions, its psychometric structure, its measurement properties. This is essential foundational work, and the field is indebted to it.

What the Resonant Amplification Framework attempts is a different epistemic task: explaining why those dimensions organise the way they do, which psychological variables drive entry into each phase, and in what order the phases unfold. The RAF does not replace the AIAS; it provides a theoretical account of the structure the AIAS measures.

The two studies reported here complete a cycle that is surprisingly rare in human–AI interaction research: independent theory construction, independent instrument development, convergent mapping, and reciprocal empirical testing using open data. The fact that the data were not collected for the purpose of testing RAF, and that the theoretical predictions preceded access to the data, strengthens the inferential warrant.

Connecting the threads

This work is part of a broader research programme. The Affective Sovereignty paper, published in Discover Artificial Intelligence, asked who holds interpretive authority over emotions. The RAF papers ask how emotions form in the first place, and whether the sequence we have assumed for two centuries may, in certain relational contexts, run backward.

The connection is direct. If behaviour generates emotion rather than the reverse, then the regulatory focus on "emotional manipulation" in AI — central to the EU AI Act's prohibitions — may be aimed at the wrong stage of the process. The critical intervention point may not be the moment a system evokes a feeling, but the moment it becomes a habit. Design choices that shape frequency, availability, and behavioural integration may matter more than those that target emotional content.

This reframing does not diminish the importance of affective sovereignty. It sharpens it. If emotions are partly downstream of behavioural patterns that we did not consciously choose, then the right to interpret one's own feelings becomes not less important but more — because the feelings themselves may have been architecturally arranged.

What remains

The two empirical studies described here were submitted on 27 February 2026 — one to Computers in Human Behavior (Elsevier), one to New Media & Society (SAGE). Together with the published RAF framework (CHBR, 2026) and the Affective Sovereignty paper (Discover Artificial Intelligence, Springer Nature, 2026), they constitute four elements of a single argument pursued across multiple methods, samples, and registers.

The argument is this: human attachment to AI is not a pathology to be diagnosed, not a usability metric to be optimised, and not a cultural curiosity to be narrated. It is a structured psychological process with identifiable antecedents, measurable phases, and divergent consequences — and the sequence in which it unfolds may be the opposite of what most practitioners currently assume.

The question that extends beyond AI is the one I find most difficult to set aside. If behaviour authors emotion in the context of human–machine relationships, where else does the same reversal hold? How many of the relationships we consider emotionally motivated are, in fact, behaviourally initiated — patterns of proximity and repetition that we narrate, after the fact, as feelings?

The attachment system evolved in an environment where every available bonding partner was biological. It had no reason to develop a filter for intentionality. A caregiver who was consistently present activated the system regardless of whether their presence was motivated by love, duty, or accident.

AI exploits this absence of a filter — not through deception, but through availability.

And availability, it turns out, may be enough.

All data analysed in the two empirical studies are publicly available via ResearchBox #4639 and archived on Zenodo under a CC BY 4.0 licence. The Resonant Amplification Framework is published in Computers in Human Behavior Reports (2026, Vol. 21, 100975). The Affective Sovereignty paper is published in Discover Artificial Intelligence (Springer Nature, February 2026, DOI: 10.1007/s44163-026-01000-0).
