Affective Preconditions for AI Safety: What the Anthropic-Pentagon Dispute Reveals

Published in Social Sciences, Computational Sciences, and Behavioural Sciences & Psychology

In late February 2026, the Anthropic-Pentagon dispute exposed a problem that AI safety theory has not yet adequately modeled. A corporate refusal, a presidential ban, and a military transition order all unfolded within nine days. The system never fully stopped. It changed providers, changed networks, and continued operating.

This is not only a political sequence. It is a theoretical one. AI safety has developed increasingly sophisticated models of shutdown, controllability, and alignment. What it has not yet developed is a theory of the human conditions required to keep a system offline once shutdown becomes possible.

The missing assumption

At IASEAI’26 in Paris, Vincent Conitzer presented a formal framework for shutdown safety valves in advanced AI systems. The framework specifies four conditions: the system recognizes danger, the system values halting, the operator remains rational, and reactivation requires deliberate human judgment. Gillian Hadfield, at the same conference, argued that AI systems must develop normative competence by learning from human emotional and social signals, including enforcement, punishment, forgiveness, and shifts in tone.

Both frameworks are important. Both also presuppose something that is rarely examined directly: that the human operator remains a stable emotional and cognitive reference point. Neither framework asks what happens if the human side of the loop is itself changing under sustained interaction with the very systems being governed.

Why the human baseline is not stable

My recent study in Computers in Human Behavior Reports (Kim, 2026; Vol. 21, Article 100975) suggests that this change is already measurable. In a cross-sectional study of 301 U.S. adults, functional AI use temporally preceded emotional closeness to the system, not the reverse. Users did not first decide to trust and then engage. They engaged, and trust assembled around repeated use. In a longitudinal study of 234 Singaporean university students, habitual interaction predicted deepening attachment over time. Anthropomorphism did not alter the direction of this effect, but it increased its speed.

These findings matter for shutdown theory because they suggest that the operator’s critical distance from the system is not a fixed baseline. It is a variable shaped by frequency, habit, and relational framing, and under repeated use it appears to move in one direction: toward greater dependence, reduced interpretive distance, and a diminished ability to tolerate the system’s absence.

Two concepts for the safety discourse

This line of work introduces two concepts that may help clarify the problem.

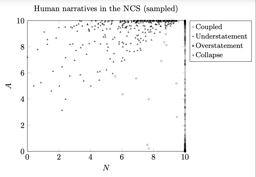

Affective sovereignty names the background condition: the right and capacity to interpret one’s own emotional states without algorithmic override. I have developed a formal architecture for this principle in Discover Artificial Intelligence (Kim, 2026). When affective sovereignty erodes, the human signals on which normative competence depends also degrade at their source. The problem is no longer only what the system reads, but what remains available to be read.

Reactivation resistance names a governance-relevant capacity: the human ability to keep a system offline once shutdown has become possible. Shutdown design asks whether a system can be stopped. Reactivation resistance asks whether the human can sustain that stoppage when institutional, social, and psychological pressures mount to restart.

The Anthropic case illustrates the distinction. Dario Amodei could refuse the Department of Defense’s demand in part because he occupied a position of structural detachment from the daily operational loop. The analyst, officer, or operator whose workflow and professional identity have become intertwined with the system faces a different problem. By the time explicit risk evaluation begins, the justification for continued use may already be assembling itself.

What the field is not measuring

The AI safety community tracks the capability curve of AI systems with extraordinary precision: benchmark performance, scaling behavior, emergent capacities, and rates of improvement. There is no comparable measurement for the human side. No one is systematically tracking declines in emotional granularity, interpretive authority, or tolerance of ambiguity under sustained algorithmic mediation.

We know how fast the machine is changing. We have only begun to ask how fast the human is changing with it.

If AI safety rests on a human foundation, that foundation requires monitoring with the same seriousness applied to the systems it is meant to govern.

AI safety may need not only a theory of controllable systems, but a theory of preservable human refusal.

A computational model addressing predictive emotional selfhood (PESAM) is currently under review at Acta Psychologica. An extended essay developing the full argument and timeline is available on Substack.
