Beyond the Code: Why Feelings Are the Missing Link in AI Education

Published in Computational Sciences, Education, and Arts & Humanities

The AI wave has hit higher education hard. From intelligent tutoring systems to personalised learning environments, universities are scrambling to catch it — mostly by teaching the mechanics: the algorithms, the code, the data pipelines. But a new study of 237 computer science undergraduates across three campuses of COMSATS University Islamabad argues that’s only half the story. The other half is affective: how students feel about AI. And, as it turns out, that half may matter more.

What is Affective AI Literacy?

The ABCD model frames AI literacy as four-dimensional: Affective (emotions and attitudes), Behavioural (usage), Cognitive (knowledge), and Digital/ethical. Most curricula fixate on the cognitive. This study zooms in on the affective — a student’s emotional and motivational readiness to engage with technology. It’s intrinsic motivation, curiosity, and self-efficacy rolled into one: the quiet internal voice that says “I can do this,” and “this is interesting to me.” The researchers wanted to know whether that inner readiness actually shifts how useful and how easy AI feels in practice.

Confidence shapes reality

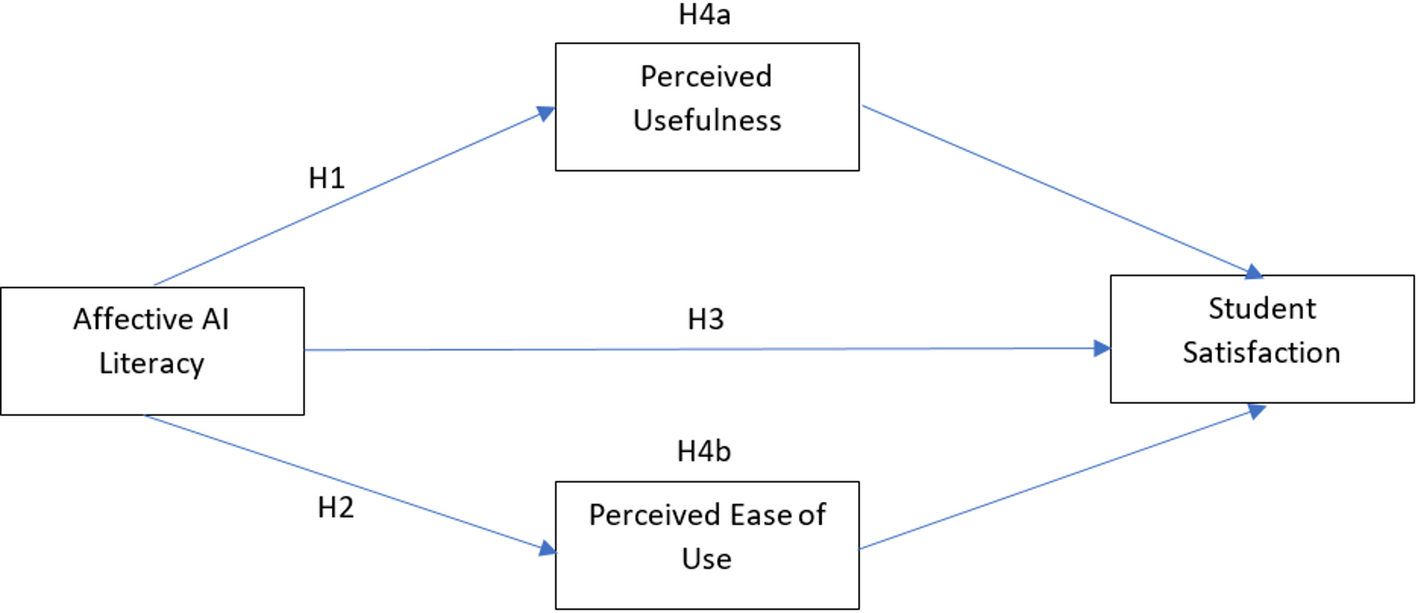

Using structural equation modelling, the researchers found a robust positive link between affective AI literacy and perceived usefulness. Emotionally ready students see AI as a partner, not a hurdle — and that perception drives productivity.

The ease-of-use bridge

The more practical finding: emotional engagement also raises perceived ease of use. Fear and anxiety make tasks feel harder; self-efficacy makes them feel intuitive. A positive attitude lowers the mental tax of learning a new tool, creating a virtuous loop of reduced resistance and deeper integration.

The real secret to satisfaction

Affective literacy does nudge satisfaction directly, but the more important path is indirect. Perceived ease of use acts as a bridge:

- Student builds affective AI literacy (confidence, motivation)

- Confidence makes the AI tool feel easier to use

- Ease of use translates into genuine satisfaction

The model explains roughly 62% of the variance in student satisfaction. Ease of use isn’t a design nicety — it’s the psychological hinge connecting attitude to outcome.
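To make the mediation idea concrete, here is a minimal sketch in Python. It is not the researchers' structural equation model: it uses plain least-squares regressions on synthetic data, and every name and number in it (`simulate`, `mediation`, the path strengths 0.6, 0.5 and 0.2, the noise levels) is an illustrative assumption. Only the sample size of 237 echoes the study. It shows how a total effect splits into a direct part and an indirect part that runs through ease of use, and what "variance explained" measures.

```python
import numpy as np


def ols(y, *predictors):
    """Fit ordinary least squares; return (coefficients with intercept first, R^2)."""
    X = np.column_stack([np.ones(len(y)), *predictors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta
    r2 = 1.0 - residuals.var() / y.var()
    return beta, r2


def simulate(n=237, a=0.6, b=0.5, c_direct=0.2, seed=0):
    """Generate SYNTHETIC scores; the path strengths are assumptions, not study results."""
    rng = np.random.default_rng(seed)
    literacy = rng.normal(size=n)                        # affective AI literacy
    ease = a * literacy + rng.normal(scale=0.8, size=n)  # perceived ease of use
    satisfaction = (c_direct * literacy + b * ease
                    + rng.normal(scale=0.7, size=n))     # student satisfaction
    return literacy, ease, satisfaction


def mediation(literacy, ease, satisfaction):
    """Split the total effect of literacy on satisfaction into direct and indirect parts."""
    (_, a_hat), _ = ols(ease, literacy)                          # literacy -> ease
    (_, direct, b_hat), r2 = ols(satisfaction, literacy, ease)   # both -> satisfaction
    (_, total), _ = ols(satisfaction, literacy)                  # literacy alone
    return {
        "a": a_hat,
        "b": b_hat,
        "direct": direct,
        "indirect": a_hat * b_hat,  # the part carried by ease of use
        "total": total,
        "r2": r2,                   # share of satisfaction variance explained
    }


if __name__ == "__main__":
    result = mediation(*simulate())
    for name, value in result.items():
        print(f"{name:>8}: {value:.3f}")
```

In a linear model like this, the total effect equals the direct effect plus the indirect effect, so the `indirect` entry is the share of the relationship that passes over the ease-of-use bridge. The `r2` entry plays the same role as the 62% figure above, although the value printed here comes from made-up data and says nothing about the study itself.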

What this means for educators

Dropping AI tools into classrooms isn’t enough, especially in resource-constrained settings like Pakistan’s universities. Curricula need to reach beyond technical training into emotional design: hands-on workshops that demystify the technology, reflective assignments that make human–AI interaction feel personal, and teaching that makes AI feel emotionally intuitive rather than merely functional. Confidence, in short, deserves the same lesson plan as code.

The technology is artificial. The learning, still, is deeply human.


Discover Artificial Intelligence

This is a transdisciplinary, international journal that publishes papers on all aspects of the theory, the methodology and the applications of artificial intelligence (AI).

