Deep Operator Networks can accelerate simulation solvers for materials processing!

Published in Materials

The phase-field method has emerged as a powerful, heuristic tool for modeling and predicting mesoscale microstructural evolution in a wide variety of material processes. The Cahn–Hilliard equation, a nonlinear diffusion equation, is one of the most commonly used governing equations in phase-field models. It describes phase separation, the process by which a two-phase mixture spontaneously separates and forms domains that are pure in each component. The Cahn–Hilliard equation finds applications in fields ranging from complex fluids to soft matter, and it serves as the starting point of many phase-field models of microstructure evolution.
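To make the governing equation concrete, here is a minimal sketch that integrates a 1D Cahn–Hilliard equation, ∂c/∂t = ∇·(M∇μ) with chemical potential μ = f′(c) − κ∇²c, using explicit finite differences on a periodic grid. It is an illustration only, not the MEMPHIS solver: the double-well free energy f(c) = ρ(c − c_α)²(c_β − c)², the constant mobility M, and all parameter values are assumptions chosen for a stable toy run. Starting from a noisy 50/50 mixture, the field develops alternating high- and low-concentration domains while the total concentration is conserved.

```python
import random

def laplacian(u, dx):
    """Second-order central-difference Laplacian with periodic boundaries."""
    n = len(u)
    return [(u[(i - 1) % n] - 2.0 * u[i] + u[(i + 1) % n]) / dx**2 for i in range(n)]

def df_dc(c, rho=1.0, c_alpha=0.0, c_beta=1.0):
    """Derivative of the assumed double well f(c) = rho (c - c_alpha)^2 (c_beta - c)^2."""
    return 2.0 * rho * (c - c_alpha) * (c_beta - c) * (c_alpha + c_beta - 2.0 * c)

def cahn_hilliard_step(c, dt, dx, mobility=1.0, kappa=1.0):
    """One explicit Euler step of dc/dt = M lap(mu), with mu = f'(c) - kappa lap(c)."""
    lap_c = laplacian(c, dx)
    mu = [df_dc(ci) - kappa * lci for ci, lci in zip(c, lap_c)]
    lap_mu = laplacian(mu, dx)
    return [ci + dt * mobility * lmi for ci, lmi in zip(c, lap_mu)]

def simulate(n=64, steps=2000, dt=0.02, dx=1.0, seed=0):
    """Evolve a noisy 50/50 mixture from t = 0 to t = steps * dt.

    Explicit Euler on the fourth-order term is only stable for roughly
    dt <= dx**4 / (8 * mobility * kappa), hence the many small steps.
    """
    rng = random.Random(seed)
    c0 = [0.5 + rng.uniform(-0.05, 0.05) for _ in range(n)]
    c = c0
    for _ in range(steps):
        c = cahn_hilliard_step(c, dt, dx)
    return c0, c
```

Because the fourth-order term caps the explicit time step at roughly dx⁴/(8Mκ), even this toy needs thousands of steps; refining the grid or moving to 2D or 3D makes that stiffness far more costly, which is the expense a learned surrogate aims to sidestep.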

Although the phase-field method accurately models mesoscale morphological and microstructural evolution in materials, it is computationally expensive. The concentration field that defines the microstructure contains numerous time-evolving, high-gradient regions, which make it difficult for conventional neural-network-based architectures to learn the dynamics of the underlying system directly in the primitive (physical) space. To overcome this difficulty, we demonstrate the effectiveness of learning the dynamics in the latent space of an autoencoder.

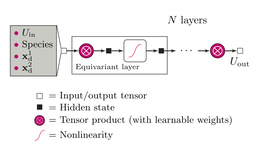

Our model consists of an encoder, a decoder, and a Deep Operator Network (DeepONet). First, we train the autoencoder (encoder and decoder) to learn a nonlinear transformation to a low-dimensional latent space; this can be interpreted as a dimensionality reduction step. Next, we train the DeepONet to learn the dynamics in the latent space previously learned by the encoder. The DeepONet's latent-space predictions are then mapped back to physical space by the pre-trained decoder to obtain the predicted microstructure at the query time.
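The data flow of this two-stage pipeline can be sketched in dependency-free Python. Everything here is hypothetical and untrained: the names `LatentDeepONet` and `predict_microstructure`, the layer sizes, and the multi-output scheme in which the branch net (fed the latent initial state z₀) emits d·p coefficients that are combined with p trunk-net basis functions of the query time t. It shows only the sequence encode → advance in latent space → decode, not the authors' architecture or weights.

```python
import math
import random

def init_mlp(sizes, rng):
    """Random (untrained) weights for a fully connected net: list of (W, b) per layer."""
    layers = []
    for n_in, n_out in zip(sizes[:-1], sizes[1:]):
        scale = 1.0 / math.sqrt(n_in)
        W = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
        layers.append((W, [0.0] * n_out))
    return layers

def mlp(layers, x):
    """Forward pass with tanh on hidden layers and a linear output layer."""
    for k, (W, b) in enumerate(layers):
        x = [sum(w * xi for w, xi in zip(row, x)) + bi for row, bi in zip(W, b)]
        if k < len(layers) - 1:
            x = [math.tanh(v) for v in x]
    return x

class LatentDeepONet:
    """Hypothetical DeepONet mapping (latent initial state z0, query time t) -> z(t).

    The branch net sees z0 and the trunk net sees t. For a d-dimensional output,
    the branch emits d * p coefficients, and output j is the dot product of its
    p coefficients with the p trunk outputs, plus a bias.
    """

    def __init__(self, latent_dim, p=16, hidden=32, seed=0):
        rng = random.Random(seed)
        self.d, self.p = latent_dim, p
        self.branch = init_mlp([latent_dim, hidden, latent_dim * p], rng)
        self.trunk = init_mlp([1, hidden, p], rng)
        self.bias = [0.0] * latent_dim

    def __call__(self, z0, t):
        coeffs = mlp(self.branch, z0)  # length d * p
        basis = mlp(self.trunk, [t])   # length p
        return [
            sum(coeffs[j * self.p + k] * basis[k] for k in range(self.p)) + self.bias[j]
            for j in range(self.d)
        ]

def predict_microstructure(encoder, decoder, operator, c0, t):
    """Encode the initial field, advance it in latent space, decode the result."""
    return decoder(operator(encoder(c0), t))
```

Because t enters the trunk net as a continuous input, an operator of this form can be evaluated at any query time in a single forward pass instead of stepping through intermediate states.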

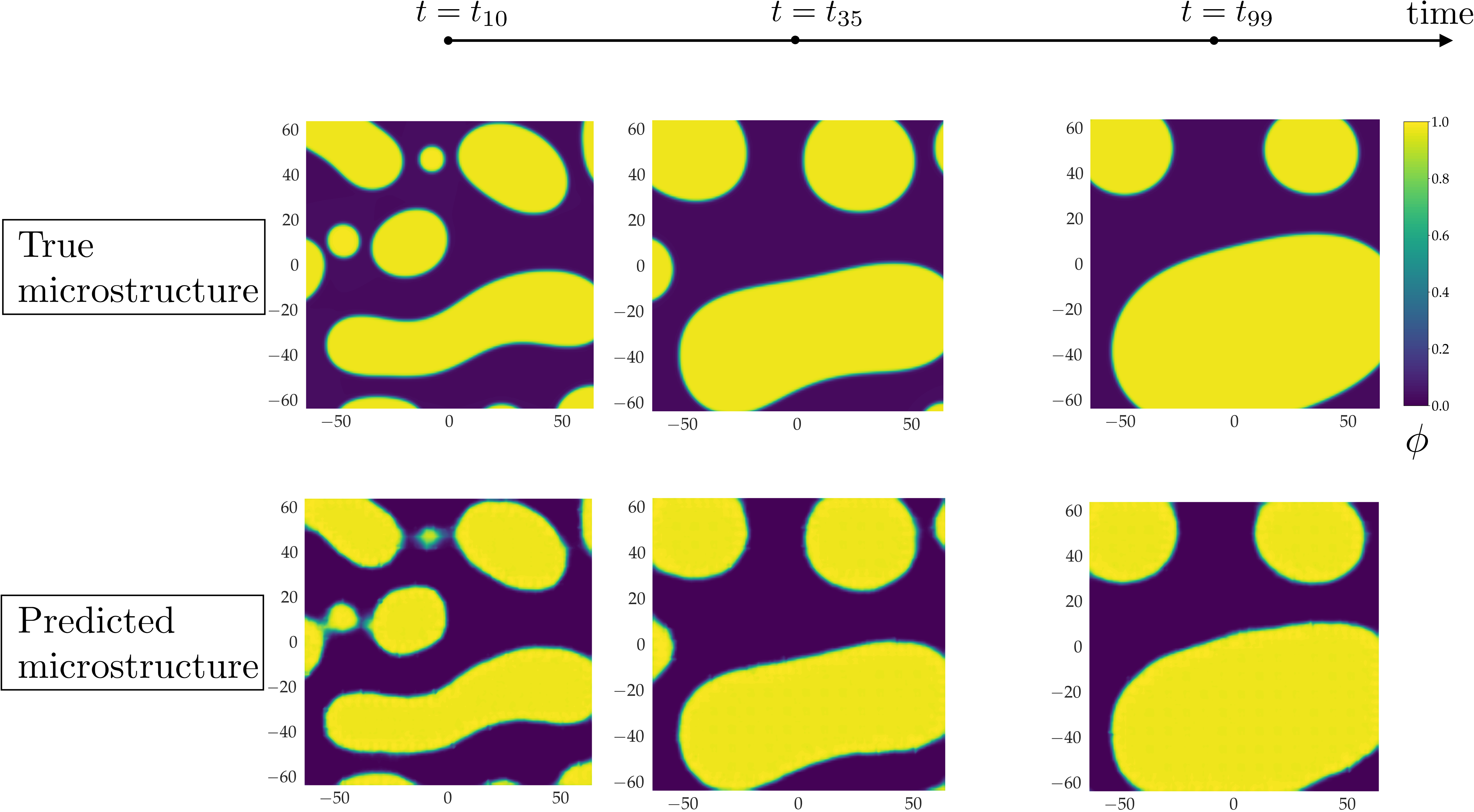

In Figure 2, we compare the predictions of the surrogate autoencoder–DeepONet model with solutions generated by the high-fidelity numerical model. The predicted microstructures align well with the true ones. Increasing the weight given to the earlier time steps, where the microstructure evolves rapidly, endowed DeepONet with an inductive bias that let it learn the small-scale features and fast dynamics accurately.
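One way to express "more weight on the earlier time steps" is a time-weighted mean squared error over each training trajectory. The exponential weighting w(t) = 1 + α·exp(−t/τ), the helper names, and the values of α and τ below are hypothetical choices for illustration, not necessarily the weighting used in the study.

```python
import math

def time_weights(times, alpha=9.0, tau=0.1):
    """Hypothetical weights that emphasize early (fast-evolving) snapshots."""
    return [1.0 + alpha * math.exp(-t / tau) for t in times]

def weighted_mse(preds, targets, times, alpha=9.0, tau=0.1):
    """Time-weighted mean of per-snapshot MSEs over a trajectory of latent vectors."""
    w = time_weights(times, alpha, tau)
    total = 0.0
    for wi, p, y in zip(w, preds, targets):
        # Per-snapshot MSE, scaled by that snapshot's weight.
        total += wi * sum((pk - yk) ** 2 for pk, yk in zip(p, y)) / len(y)
    return total / sum(w)
```

Dividing by the sum of the weights keeps the loss a weighted average of per-snapshot errors, so the weighting shifts which snapshots dominate the gradient without inflating the overall loss scale.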

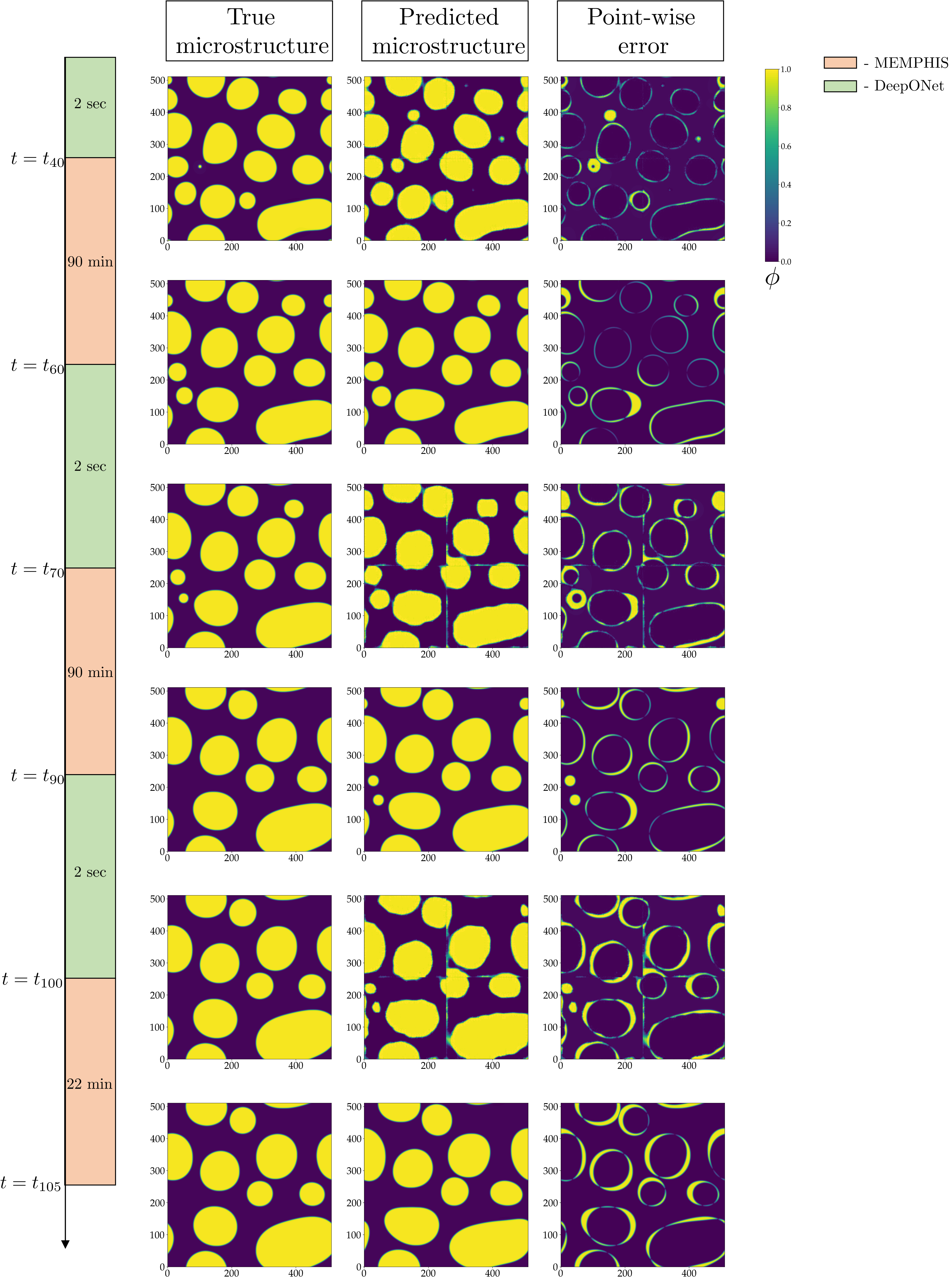

Our proposed framework can also be used for extrapolation tasks: integrated into the phase-field numerical solver, it accelerates predictions for initial microstructures and parameters that lie outside the training distribution. To demonstrate this, we devised a hybrid approach that couples the autoencoder–DeepONet framework with our high-fidelity phase-field solver, the Mesoscale Multiphysics Phase Field Simulator (MEMPHIS). This hybrid model combines the efficiency and speed of the autoencoder–DeepONet framework with the accuracy of high-fidelity phase-field numerical solvers. The hybrid 'leap in time' strategy demonstrated in this study achieves a 29% speed-up compared to the numerical solver alone.
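The 'leap in time' idea can be sketched as a schedule that alternates solver steps with surrogate jumps. The function name, the cycle structure (a solver segment followed by one surrogate leap), and the toy stand-ins used to exercise it are assumptions for illustration; this sketch does not reproduce the schedule used with MEMPHIS.

```python
def hybrid_leap_in_time(c0, solver_step, surrogate, dt, n_solver_steps, leap, n_cycles):
    """Alternate numerical solver steps with surrogate 'leaps' (hypothetical schedule).

    solver_step(c, dt) -> c advances the state by one solver step of size dt.
    surrogate(c, leap) -> c jumps the state forward by `leap` time units at once.
    Returns the final state, the simulated time, and the number of solver calls.
    """
    c, t, solver_calls = c0, 0.0, 0
    for _ in range(n_cycles):
        # Solver segment: accurate but expensive small steps.
        for _ in range(n_solver_steps):
            c = solver_step(c, dt)
            t += dt
            solver_calls += 1
        # Surrogate leap: one cheap call stands in for leap / dt solver steps.
        c = surrogate(c, leap)
        t += leap
    return c, t, solver_calls
```

Each cycle replaces leap/dt solver steps with a single surrogate call, so the wall-clock gain depends on how expensive that call is relative to the skipped steps and on how many solver steps are kept between leaps.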

In this work, we developed and applied a machine-learned framework based on neural operators and autoencoder architectures to predict complex microstructural evolution efficiently and rapidly. The architecture is not only computationally efficient and accurate but also robust to noisy data. This performance makes it an attractive alternative to other existing machine-learning strategies for accelerating predictions of microstructure evolution. It opens a computationally viable and efficient path toward discovering, understanding, and predicting materials processes in which evolving mesoscale phenomena are critical, such as materials optimization and design.

The paper is now published in:

Oommen, V., Shukla, K., Goswami, S. et al. Learning two-phase microstructure evolution using neural operators and autoencoder architectures. npj Comput Mater 8, 190 (2022). https://doi.org/10.1038/s41524-022-00876-7
