Multimodal AI for Real‐Time Food Safety and Quality: From Sensors to Foundation Models, Edge Deployment, and Regulation

Published in Materials, Computational Sciences, and Agricultural & Food Science

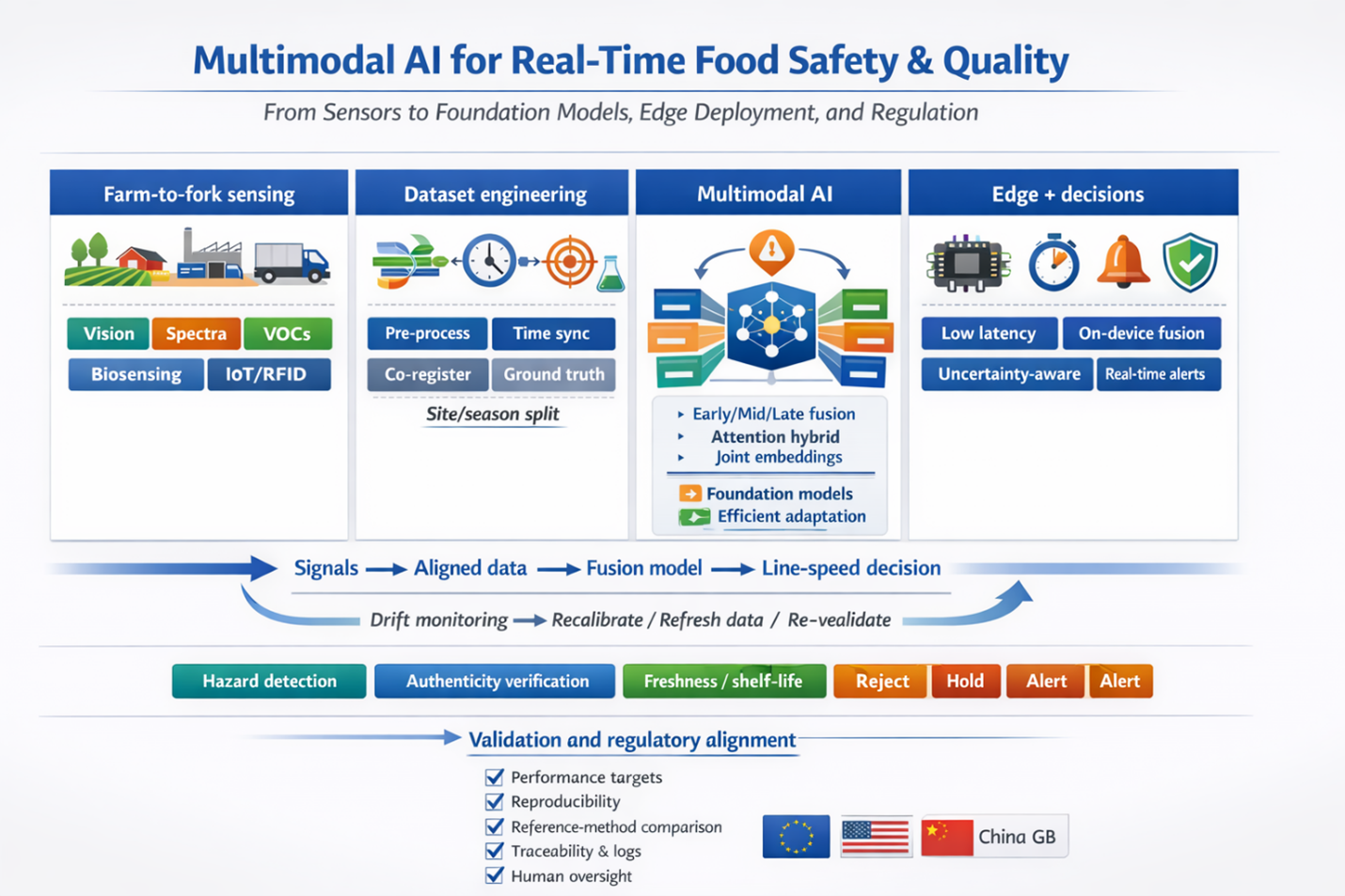

The Challenge of Real-Time Food Monitoring

Food safety and quality are critical yet distinct dimensions of the food supply chain. Traditional inspection methods, relying on manual sampling and laboratory assays, are slow (minutes to days), laborious, and prone to human error. While single-sensor automation and deep learning have dramatically improved detection accuracy since the 2010s, no single modality can capture all potential hazards or quality defects. A camera may miss chemical adulterants; a spectrometer cannot see a foreign object. This fundamental limitation has driven the rise of multimodal AI systems that fuse data from multiple sensor types for a more holistic and robust assessment.

Multimodal Sensing Across the Supply Chain

This review surveys five major categories of sensing modalities deployed from farm to fork:

| Modality | Principle | Key Applications | Response Time |
| --- | --- | --- | --- |
| Optical Imaging (RGB, HSI, X-ray) | Optical features, X-ray density | Defects, foreign bodies, size/color grading | RGB: less than 0.1 s/item |
| Spectroscopy (NIR, FTIR, Raman) | Molecular spectra | Composition, adulteration, authenticity | Seconds per scan |
| Electronic Noses (VOC arrays) | Volatile organic compound patterns | Spoilage, freshness, off-odors | Seconds to minutes |
| Biosensors (immuno/aptamer, LoC) | Biorecognition signals | Pathogens, toxins, allergens, residues | Minutes to hours |
| Process/IoT Sensors (T/H, RFID) | Environmental and process logs | Cold chain integrity, traceability | Continuous |

Each modality has complementary strengths and failure modes. For instance, optical imaging excels at catching visible defects, while spectroscopy detects molecular composition changes invisible to cameras. Electronic noses sniff spoilage volatiles that optical or spectral sensors might miss entirely.

The Power of Multimodal AI Fusion

By fusing data from these heterogeneous sources, multimodal AI systems overcome the blind spots of individual sensors. The review appraises fusion strategies ranging from early and late schemes to attention-based hybrids that learn joint embeddings across images, spectra, and gas sensor time series. Head-to-head studies consistently show that multimodality improves accuracy or reduces error compared to unimodal baselines. Critical data engineering practices, including time synchronization, co-registration to ground truth, and robust multisite/multiseason sampling, are essential to make disparate streams analysis-ready and ensure generalization.
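The difference between early and late fusion can be illustrated with a minimal sketch. It is not taken from the review: the function names, feature vectors, and weights are hypothetical, and a real system would feed the fused vector or the per-modality scores into trained models.

```python
from typing import List, Optional, Sequence


def early_fusion(features: Sequence[Sequence[float]]) -> List[float]:
    """Early fusion: concatenate per-modality feature vectors into one
    joint vector that a single downstream model would consume."""
    fused: List[float] = []
    for vec in features:
        fused.extend(float(x) for x in vec)
    return fused


def late_fusion(
    probabilities: Sequence[Sequence[float]],
    weights: Optional[Sequence[float]] = None,
) -> List[float]:
    """Late fusion: weighted average of class probabilities produced
    independently by one model per modality."""
    if weights is None:
        weights = [1.0] * len(probabilities)
    total = float(sum(weights))
    n_classes = len(probabilities[0])
    return [
        sum(w * p[c] for w, p in zip(weights, probabilities)) / total
        for c in range(n_classes)
    ]


# Hypothetical features from a camera, a spectrometer, and an e-nose.
joint = early_fusion([[0.2, 0.7], [0.1, 0.3, 0.5], [0.9]])

# Hypothetical per-modality probabilities for the classes (fresh, spoiled).
fused_probs = late_fusion([[0.9, 0.1], [0.6, 0.4], [0.3, 0.7]])
```

Early fusion lets one model learn cross-modal interactions but requires all streams to be aligned per sample. Late fusion tolerates a missing or delayed sensor more gracefully, because each modality is scored on its own.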

Foundation Models and Efficient Adaptation

The review discusses the maturation of foundation-scale encoders and vision-language systems (e.g., CLIP, BLIP) for food tasks. These models, pre-trained on massive datasets, can be adapted efficiently through techniques such as LoRA and prompt tuning to food-specific tasks such as label verification, compliance Q&A, and cross-modal hazard search. Knowledge infusion from HACCP protocols and food safety ontologies further enhances their domain relevance. In regulated environments, however, model bias and licensing constraints must be carefully managed.
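To make the idea behind LoRA concrete, the sketch below shows the generic low-rank update, in which a frozen weight matrix W is adapted as W + (alpha / r) · B · A and only the small matrices A and B are trained. This illustrates the general technique, not the configuration used in any study covered by the review. The helper names, matrix values, and layer sizes are assumptions chosen for readability.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product of a (m x k) and b (k x n)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_weight(w: Matrix, a: Matrix, b: Matrix, alpha: float) -> Matrix:
    """Return the adapted weight W + (alpha / r) * B @ A.

    w: frozen pre-trained weight, shape (d_out, d_in)
    b: trainable matrix, shape (d_out, r)
    a: trainable matrix, shape (r, d_in)
    """
    r = len(a)  # the rank is the number of rows of A
    scale = alpha / r
    delta = matmul(b, a)
    return [
        [w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
        for w_row, d_row in zip(w, delta)
    ]


def trainable_params(d_out: int, d_in: int, r: int) -> int:
    """Number of trainable values in A and B combined."""
    return r * (d_in + d_out)


# Toy example with d_out=2, d_in=3, rank r=1 (all values hypothetical).
W = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
A = [[1.0, 0.0, 2.0]]
B = [[0.5], [-1.0]]
W_adapted = lora_weight(W, A, B, alpha=2.0)

# For an assumed 768 x 768 layer at rank 8: 12288 trainable values
# instead of 589824 for full fine-tuning.
lora_count = trainable_params(768, 768, 8)
```

Because the pre-trained weights stay frozen, one base model can serve several food-specific tasks by swapping in different small adapter matrices.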

Edge Deployment and Regulatory Compliance

Deploying AI models in factory environments demands hardware acceleration on embedded GPUs (e.g., NVIDIA Jetson), NPUs, and FPGAs to meet strict latency budgets (often under 100 ms). Model compression techniques, including INT8 quantization, structured pruning, and knowledge distillation, make large models practical for edge inference with minimal accuracy loss. System engineering must deliver deterministic pipelines, model versioning, decision logs, and change control so that every automated decision can be traced. The review examines regulatory alignment across the EU AI Act, US FDA guidelines, and China's National Food Safety Standards (GB) framework, emphasizing that rigorous validation, auditability, and human-in-the-loop fail-safes are paramount for compliance.
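As an illustration of what INT8 quantization does to model weights, here is a minimal sketch of symmetric per-tensor quantization. It assumes a single scale per tensor and a signed range of -127 to 127. Production toolchains typically add calibration data, per-channel scales, and activation quantization, none of which is shown here. The function names and sample weights are hypothetical.

```python
from typing import List, Tuple


def quantize_int8(values: List[float]) -> Tuple[List[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(v) for v in values)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(v / scale))) for v in values]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float values from the integer codes."""
    return [q * scale for q in quantized]


# Hypothetical weights from one layer of a model.
weights = [0.5, -1.27, 0.0, 1.0]
codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)

# The rounding error per weight is bounded by half a quantization step.
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight is stored in one byte instead of four, and the bounded rounding error is one reason a modest accuracy loss is achievable. Whether a given model tolerates it still has to be validated on representative data.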

Future Perspectives and Evidence Gaps

The integration of zero-shot learning, federated learning, and digital twins promises to enhance adaptability, privacy, and predictive capability. However, evidence gaps persist: few multisite deployments over long durations, limited public benchmarks for hyperspectral and e-nose fusion, and sparse cost-benefit analyses in the scholarly record. Addressing these gaps will enable trustworthy, auditable multimodal AI that complements existing HACCP controls, reduces waste, and protects consumers worldwide.
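The privacy argument for federated learning rests on sharing model parameters rather than raw data. A minimal sketch of the aggregation step, using sample-count-weighted averaging in the style of federated averaging, is given below. The site parameters and sample counts are hypothetical, and a real deployment would add secure communication and repeated training rounds.

```python
from typing import List, Sequence


def federated_average(
    client_weights: Sequence[Sequence[float]],
    client_sizes: Sequence[int],
) -> List[float]:
    """Average client parameter vectors, weighting each client by the
    number of local training samples it used."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * s for w, s in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Two hypothetical processing sites with 100 and 300 local samples.
global_weights = federated_average([[1.0, 2.0], [3.0, 4.0]], [100, 300])
# → [2.5, 3.5]
```

Only the parameter vectors leave each site, so proprietary production data and images stay local.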

https://doi.org/10.1002/fsn3.71534
