Who Gets to Say How You Feel?

The story behind "Formal and Computational Foundations for Implementing Affective Sovereignty in Emotion AI Systems"

Published in Social Sciences, Neuroscience, and Computational Sciences

A few years ago, I watched a job candidate receive an automated rejection. The system had analyzed her video interview and scored her "enthusiasm" as low. She told me she had been nervous, not disengaged — and that in her culture, restraint is a sign of respect. There was no way to contest the score. No way to say, "That is not what I felt." The label was already in the file, and it outranked her.

That moment did not leave me. Not because it was unusual, but because it was becoming ordinary. Emotion AI — systems that infer what we feel from our faces, voices, text, or physiology — is now embedded in hiring platforms, mental health apps, companion chatbots, driver monitoring, and customer service. The promise is personalization and care. The risk, I came to believe, is something deeper than misclassification. It is the quiet transfer of interpretive authority: the moment a system's label about your inner life begins to outrank your own.

I looked for a name for this problem. Privacy did not quite cover it — privacy protects data, but the issue here is meaning. Transparency was necessary but insufficient — knowing that a system read you as "anxious" does not give you the power to say, "No, I was tired." Fairness frameworks addressed demographic bias, but not the more fundamental asymmetry: that a statistical model was claiming to know someone's feelings better than the person themselves.

So I proposed a concept: Affective Sovereignty. The right to remain the final interpreter of one's own emotions.

From principle to formalism

The concept alone was not enough. Ethics guidelines are abundant in AI; enforcement is scarce. I wanted to ask a harder question: can you build sovereignty into the mathematics of a system — not as an afterthought, but as a structural constraint?

The paper that resulted, now published in Discover Artificial Intelligence, attempts exactly that. It decomposes the risk function of an emotion AI system into three terms: conventional prediction loss, an override cost that makes it expensive for the system to contradict a user's self-report, and a manipulation penalty that penalizes coercive or deceptive influence on emotional states. When the system is uncertain, or when a pending action would violate a user's stated preferences, the optimal policy is to abstain and ask rather than assert.
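One way to write that three-term decomposition down, as a sketch only: the symbols below ($\hat{e}$ for the system's reading, $e^{\mathrm{self}}$ for the self-report, the weights $\lambda_o$ and $\lambda_m$, the manipulation measure $m(a)$ for an action $a$, and the asking cost $c_{\mathrm{ask}}$) are illustrative assumptions, not the paper's own notation.

```latex
R(\pi) \;=\; \mathbb{E}\Big[
    \underbrace{\ell\big(\hat{e},\, e^{\mathrm{self}}\big)}_{\text{prediction loss}}
    \;+\; \lambda_o\, \underbrace{\mathbf{1}\big[\hat{e} \neq e^{\mathrm{self}}\big]}_{\text{override cost}}
    \;+\; \lambda_m\, \underbrace{m(a)}_{\text{manipulation penalty}}
\Big]

\pi(x) \;=\;
\begin{cases}
\text{abstain and ask} & \text{if } \mathbb{E}\big[\text{risk} \mid x,\ \text{assert}\big] > c_{\mathrm{ask}}
    \ \text{ or } a \text{ violates the user's stated preferences},\\[4pt]
\text{assert } \hat{e} & \text{otherwise.}
\end{cases}
```

Read this way, raising $\lambda_o$ makes contradicting the user more expensive, so the region in which asking is cheaper than asserting grows.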

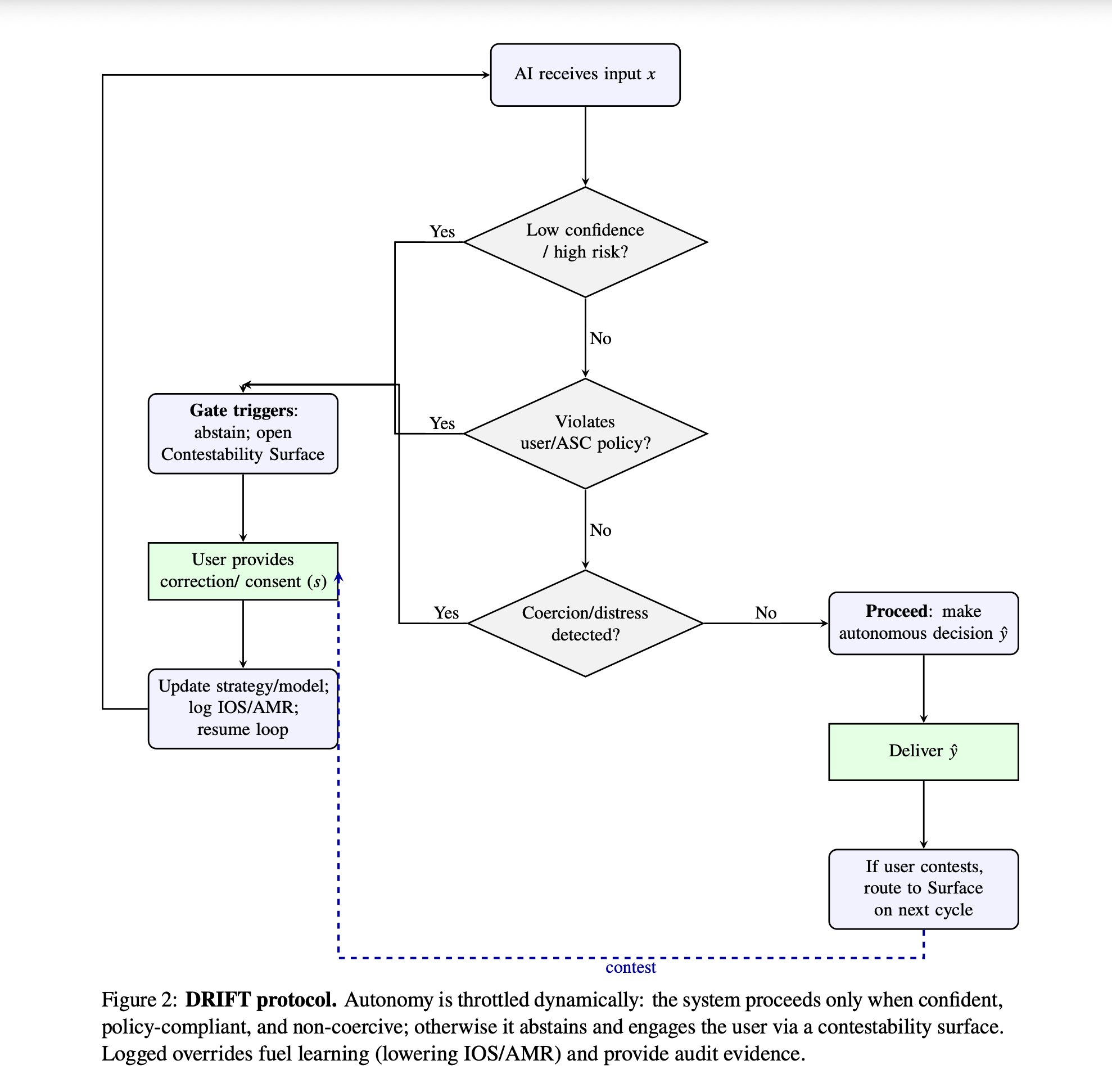

This is operationalized through what we call DRIFT: Dynamic Risk and Interpretability Feedback Throttling. DRIFT is a runtime protocol with a series of gates — uncertainty, policy compliance, coercion detection — that throttle the system's autonomy and route decisions to a contestability surface where the user can confirm, correct, or decline the system's reading.
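The gate sequence can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the paper's implementation: the `Reading` fields, the threshold values, and the function names `drift_gate` and `resolve` are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    PROCEED = "proceed"    # the system may act on its own reading
    ASK_USER = "ask_user"  # route to the contestability surface


@dataclass
class Reading:
    label: str             # inferred emotion, e.g. "anxious"
    confidence: float      # model confidence in [0, 1]
    policy_allowed: bool   # does the pending action comply with policy?
    coercion_score: float  # output of a hypothetical coercion detector, in [0, 1]


def drift_gate(reading: Reading,
               min_confidence: float = 0.8,   # assumed threshold
               max_coercion: float = 0.2      # assumed threshold
               ) -> tuple[Route, str]:
    """Run the three gates in order; any failed gate throttles autonomy."""
    if reading.confidence < min_confidence:
        return Route.ASK_USER, "uncertainty"
    if not reading.policy_allowed:
        return Route.ASK_USER, "policy"
    if reading.coercion_score > max_coercion:
        return Route.ASK_USER, "coercion"
    return Route.PROCEED, "all gates passed"


def resolve(reading: Reading, response: str,
            corrected_label: str | None = None) -> str | None:
    """Apply the user's answer from the contestability surface; it is final."""
    if response == "confirm":
        return reading.label
    if response == "correct":
        return corrected_label
    return None  # "decline": no label is recorded at all


# A low-confidence reading is routed to the user, who corrects it.
reading = Reading("anxious", confidence=0.55, policy_allowed=True, coercion_score=0.0)
route, reason = drift_gate(reading)
final_label = resolve(reading, "correct", corrected_label="tired")
```

Ordering the gates from cheapest to most consequential is a design choice made here for readability; the source does not specify an order.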

We also introduced three alignment metrics. The Interpretive Override Score (IOS) measures how often the system contradicts the user's self-report. The After-correction Misalignment Rate (AMR) tracks whether the system repeats the same mistake after being corrected. Affective Divergence (AD) captures the distributional gap between what the model thinks you feel and what you report over time. Together, these make sovereignty auditable — not just declarable.
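To make the three metrics concrete, here is a rough sketch computed over a toy interaction log. The definitions are simplified assumptions rather than the paper's formulas: a "correction" is taken to be any turn where the system's label differs from the self-report, a repeat is a mismatch on the very next turn, and Affective Divergence is approximated by total variation distance between label frequencies.

```python
from collections import Counter


def interpretive_override_score(predicted, reported):
    """Share of turns where the system's label contradicts the self-report."""
    pairs = list(zip(predicted, reported))
    return sum(p != r for p, r in pairs) / len(pairs)


def after_correction_misalignment_rate(predicted, reported):
    """Of the turns that directly follow a correction, the share that are wrong again."""
    followups = [
        predicted[i + 1] != reported[i + 1]
        for i in range(len(predicted) - 1)
        if predicted[i] != reported[i]  # turn i was corrected by the user
    ]
    return sum(followups) / len(followups) if followups else 0.0


def affective_divergence(predicted, reported):
    """Total variation distance between label frequencies (0 = identical, 1 = disjoint)."""
    p, q = Counter(predicted), Counter(reported)
    labels = set(p) | set(q)
    return 0.5 * sum(abs(p[l] / len(predicted) - q[l] / len(reported)) for l in labels)


# Toy log: what the model inferred versus what the person said they felt.
predicted = ["anxious", "anxious", "calm", "sad", "calm"]
reported = ["tired", "tired", "calm", "sad", "tired"]

ios = interpretive_override_score(predicted, reported)
amr = after_correction_misalignment_rate(predicted, reported)
ad = affective_divergence(predicted, reported)
```

Because all three are plain functions of a logged sequence, an auditor could recompute them from interaction records without access to the model itself.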

What the simulation showed

In proof-of-mechanism simulations, enforcing DRIFT with policy constraints reduced the Interpretive Override Score (IOS) from 32.4% to 14.1% and the After-correction Misalignment Rate (AMR), the repeat-error rate, from 36.4% to 13.2%. The cost was high abstention: roughly 70% of decisions were deferred to the user. That number might look like a flaw. I see it as the point. In domains where a system is interpreting your inner life, deferring to you is not inefficiency. It is respect.

These are simulation results, not field data. A preregistered human-subject study is planned. The claims are deliberately modest in method. But the implication is strong: when you price uncertainty and interpretive risk into the design, alignment improves without requiring any leap toward artificial general intelligence. Sovereignty is an engineering choice, not a philosophical luxury.

The road to this paper

The idea did not arrive in a single moment. It grew across disciplines: from psychoanalytic thinking about how meaning is constructed, through phenomenological accounts of first-person experience, to the regulatory architecture of the EU AI Act, which now places strict limits on emotion inference in workplaces and schools but lacks the runtime mechanisms to enforce those limits at the point of inference.

I presented an earlier version of this framework at the SIpEIA Conference in Rome, under the theme "Accountability and Care." The response from philosophers, engineers, and legal scholars confirmed something I had suspected: the gap between ethical principles and technical enforcement is not just a policy problem. It is a design problem. And the design vocabulary did not yet exist.

This paper tries to supply part of that vocabulary. The Affective Sovereignty Contract (ASC) is a machine-readable policy layer that encodes user preferences and domain rules — disabling emotion inference in banned contexts, setting interruption budgets, specifying which emotions the system may even ask about. The Declaration of Affective Sovereignty, included in the paper, is not a constitutional charter but a normative design brief: eight testable tenets with measurable acceptance criteria and auditable hooks.
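As an illustration of what a machine-readable contract of this kind might look like, here is a minimal sketch covering the three examples above. The class name, field names, context strings, and default values are hypothetical and are not taken from the paper's schema.

```python
from dataclasses import dataclass


@dataclass
class AffectiveSovereigntyContract:
    # Contexts in which emotion inference is switched off entirely (assumed names).
    banned_contexts: frozenset = frozenset({"workplace", "education"})
    # Interruption budget: how often the system may ask the user to confirm a reading.
    max_prompts_per_hour: int = 2
    # Emotions the system is allowed to ask about at all (assumed examples).
    askable_emotions: frozenset = frozenset({"tired", "stressed", "calm"})
    prompts_this_hour: int = 0

    def inference_allowed(self, context: str) -> bool:
        """Domain rule: no inference in a banned context."""
        return context not in self.banned_contexts

    def may_ask_about(self, emotion: str) -> bool:
        """User preference: the emotion is askable and the budget is not spent."""
        return (emotion in self.askable_emotions
                and self.prompts_this_hour < self.max_prompts_per_hour)

    def record_prompt(self) -> None:
        """Count one interruption against the hourly budget."""
        self.prompts_this_hour += 1


asc = AffectiveSovereigntyContract()
blocked_at_work = not asc.inference_allowed("workplace")
can_ask_tired = asc.may_ask_about("tired")
can_ask_grief = asc.may_ask_about("grief")  # not on the askable list
```

A runtime gate would consult such a contract before any inference runs, so that a banned context is refused up front rather than filtered after the fact.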

What this opens

The implications reach beyond emotion recognition. Any system that models, infers, or acts upon subjective human states — recommender systems shaping mood, therapeutic bots interpreting distress, companion agents managing attachment — faces the same question: who holds interpretive authority?

This paper is one element of a broader research program — one that examines how affective meaning is constructed, defended, and sometimes fractured, across computational, clinical, and narrative domains.

I believe the answer must be structural, not aspirational. Sovereignty must be embedded in objective functions, enforced at runtime, and measured over time. The person is the final arbiter of their inner life — not because self-reports are infallible, but because the alternative is a world in which machines quietly become the judges of what we feel.

Even the most perceptive AI should be counsel, never judge.



