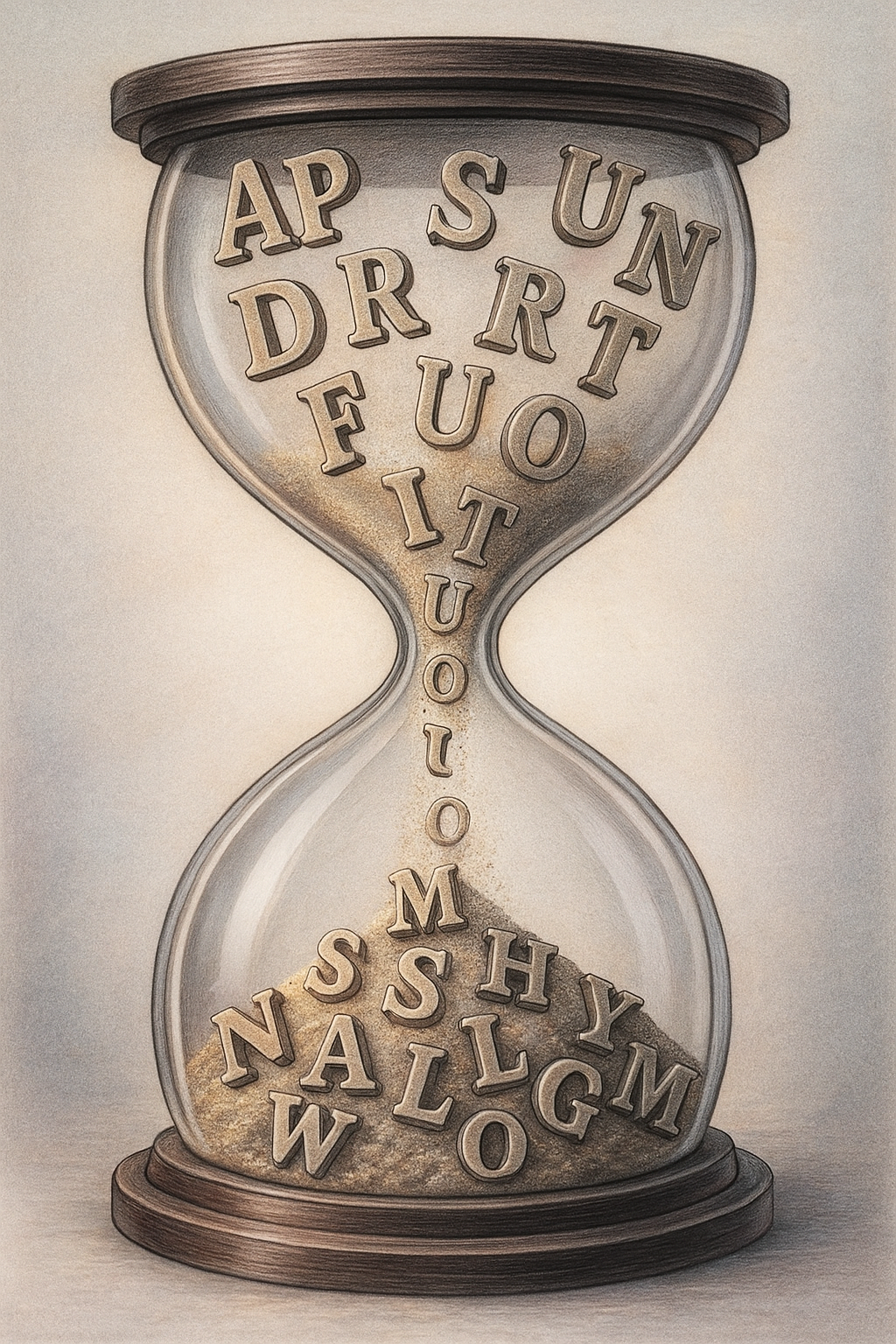

As words come of age, as words come undone

Published in Social Sciences, Electrical & Electronic Engineering, and Neuroscience

The linguistic boundary between healthy and pathological aging

The boundary between normal aging and early Alzheimer’s is rarely clear to the untrained eye. Experts rely on long cognitive batteries, expensive brain scans, and invasive biofluid markers. Yet there’s another, simpler lens on the aging brain, one we use all day, often without thinking: the way we speak. In my recent Review, I mapped how speech and language change in healthy aging and in Alzheimer’s dementia, aiming to address a simple question: what’s “normal” for an aging brain, and what isn’t?

Aging voices

The linguistic manifestations of healthy aging are typically tracked by comparing older and younger adults. If you record healthy 70- or 80-year-olds while they name pictures, read words, or tell a story, a clear pattern emerges. Their speech organs—tongue, lips, palate, vocal folds—mostly keep doing their job. Voices may get a bit deeper or breathier; sentences may come with slightly more effort; but, for everyday conversation, most older adults remain perfectly intelligible.

The bigger changes show up in how fast and how efficiently they find and use words. Older adults take longer to recognize and retrieve words and have more “tip-of-the-tongue” moments (when meaning is accessible but the corresponding terms are not). Yet, their vocabulary is often richer than that of younger adults. The mental lexicon swells across adulthood, even if it takes us longer to access what we’ve stored.

Grammar is a quiet hero of healthy aging. When you ask seniors to judge whether a sentence is grammatical or to process complex structures, their performance resembles that of younger adults. There may be a small cost in speed or complexity, but their capacity to sequence words and word groups generally holds.

Where things get messier is at the discourse level: keeping up with fast, noisy, real-world conversation. These higher-level skills depend on broader cognitive functions like attention and executive control, which are themselves vulnerable to aging.

Overall, aging brings numerous speech and language changes. Yet these changes are uneven across domains and do not compromise spontaneous communication. In other words, they are not maladaptive, a crucial point for distinguishing the linguistic signatures of aging from those of Alzheimer’s dementia.

When it’s more than aging

To understand the impact of Alzheimer’s on speech and language, the basic strategy is to compare older adults with and without the disease. Patients typically exhibit early and striking deficits in lexico-semantic tasks, which tap word meaning and word choice. In tests that require naming or generating words from a semantic category, people with Alzheimer’s produce fewer words overall, rely more on common, generic terms, and make more semantic errors. Word-finding pauses become longer and more frequent. Stories become less informative and coherent, and more reliant on stock phrases. Even when the grammar is intact, the message starts to fray.

Many elements remain relatively spared in the mild stage (articulation, basic phonology, much of core grammar), so patients may sound fluent on the surface even as their underlying semantic system deteriorates. Of course, as patients progress to the moderate and severe stages, multiple skills collapse further, and deficits become highly noticeable and often irreversible.

This combination (subtle but pervasive changes in word use and discourse, with partial preservation of sound structure and syntax) helps distinguish early Alzheimer’s from healthy aging. Crucially, some of these linguistic differences show up years before a clinical diagnosis, sometimes even before the person or their family notices anything is wrong. If that’s the case, how can such subtle patterns be captured? The answer lies in automated speech and language analysis (ASLA).

Let computers listen

Imagine a brief phone task, like describing a picture or retelling a short story. With a microphone, a bit of code, and the right models, ASLA can extract features such as pause length, speech rate, vocabulary richness, and syntactic complexity. These features can then feed machine learning models that distinguish healthy aging from early Alzheimer’s, estimate how severe the condition is, and flag people at risk before symptoms become obvious.
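To make the feature-extraction step concrete, here is a minimal sketch that assumes a speech recognizer has already produced each word with start and end times in seconds. The `Word` record, the `extract_features` function, and the 0.25-second pause threshold are illustrative assumptions, not part of any specific ASLA toolkit. Syntactic complexity is left out because it would require a parser.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Word:
    """One recognized word with start/end times in seconds (assumed ASR output)."""
    text: str
    start: float
    end: float


def extract_features(words: list[Word], min_pause: float = 0.25) -> dict[str, float]:
    """Compute simple timing and lexical features from a time-aligned transcript.

    min_pause is an assumed threshold (in seconds) above which a silent gap
    between two consecutive words counts as a pause.
    """
    if not words:
        raise ValueError("need at least one word")
    duration = words[-1].end - words[0].start  # speaking time, pauses included
    # Silent gaps between the end of one word and the start of the next
    gaps = [nxt.start - cur.end for cur, nxt in zip(words, words[1:])]
    pauses = [g for g in gaps if g >= min_pause]
    tokens = [w.text.lower() for w in words]
    return {
        # Words per minute over the whole sample
        "speech_rate_wpm": 60.0 * len(words) / duration if duration > 0 else 0.0,
        "pause_count": float(len(pauses)),
        "mean_pause_s": mean(pauses) if pauses else 0.0,
        # Share of the sample spent in pauses
        "pause_time_ratio": sum(pauses) / duration if duration > 0 else 0.0,
        # Distinct words / total words: a crude vocabulary-diversity measure
        "type_token_ratio": len(set(tokens)) / len(tokens),
    }


if __name__ == "__main__":
    # Made-up timings for a short picture description, for illustration only
    sample = [Word("the", 0.0, 0.2), Word("boy", 0.3, 0.6), Word("is", 1.6, 1.7),
              Word("taking", 1.8, 2.2), Word("the", 2.3, 2.4), Word("cookie", 3.4, 3.9)]
    print(extract_features(sample))
```

The type-token ratio is sensitive to sample length, so a length-corrected vocabulary measure would likely be preferable in a real pipeline.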

Results are promising. Combinations of timing and semantic features can approach or even match the classification accuracy of traditional cognitive tests, track disease severity and brain atrophy, and, in some studies, even predict who will eventually develop dementia. This is noteworthy because ASLA is cheap, fast, non-invasive, remotely deployable, and scalable, all essential properties as dementia cases balloon while access to specialists remains uneven.
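One common way to combine several speech features into a single score is logistic regression. The sketch below is a from-scratch, standard-library version written purely for illustration; it is not the method of any particular study. The function names, the learning rate, the epoch count, and the 0/1 labels (for example, 1 = early Alzheimer’s, 0 = healthy aging) are hypothetical choices.

```python
import math


def sigmoid(v: float) -> float:
    """Numerically stable logistic function."""
    if v >= 0:
        return 1.0 / (1.0 + math.exp(-v))
    e = math.exp(v)
    return e / (1.0 + e)


def fit_logistic(X, y, lr=0.1, epochs=2000):
    """Fit a logistic-regression model by batch gradient descent.

    X: list of feature vectors (e.g. [mean_pause_s, type_token_ratio]);
    y: 0/1 labels. Features are z-scored with training-set statistics so
    that timing and lexical features share a common scale.
    """
    n, d = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(d)]
    sds = []
    for j in range(d):
        var = sum((row[j] - means[j]) ** 2 for row in X) / n
        sds.append(math.sqrt(var) or 1.0)  # guard against constant features
    Z = [[(row[j] - means[j]) / sds[j] for j in range(d)] for row in X]
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * d, 0.0
        for z, t in zip(Z, y):
            # Prediction error for this sample drives the gradient
            err = sigmoid(sum(wj * zj for wj, zj in zip(w, z)) + b) - t
            for j in range(d):
                gw[j] += err * z[j]
            gb += err
        w = [wj - lr * g / n for wj, g in zip(w, gw)]
        b -= lr * gb / n
    return {"means": means, "sds": sds, "w": w, "b": b}


def predict_proba(model, row):
    """Probability of the positive class for one feature vector."""
    z = [(x - m) / s for x, m, s in zip(row, model["means"], model["sds"])]
    return sigmoid(sum(wj * zj for wj, zj in zip(model["w"], z)) + model["b"])
```

In practice, one would rely on an established machine learning library and evaluate the model on held-out participants rather than on the data used for training.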

But there are pitfalls, too. Models are often built on small, noisy datasets drawn mostly from English-speaking, high-income samples. Cross-linguistic work is still in its infancy, even though more than 80% of the world’s population does not speak English. Clinical adoption faces key challenges, such as regulatory hurdles, a lack of normative data, and reservations among diagnosticians. Progress on these fronts is being made in high-income countries, but much less so in low- and middle-income countries. If we’re not careful, the very tools designed to democratize brain health could end up widening global inequities.

Where we go next

Speech and language changes are not a cosmetic detail of aging and dementia; they are central to autonomy, relationships, and quality of life. When words slip away, so do stories, jokes, arguments, and declarations of love. Crucially, however, these very changes turn out to be rich, underused biomarkers of brain health. With better methods, larger and more diverse samples, responsible use of AI, and collaboration between clinicians, linguists, data scientists, patients, and caregivers, we can transform a simple, human act, speaking, into a powerful ally for prevention, diagnosis, and care.

If, in the near future, a short speech recording on a smartphone helps a doctor say, “This looks like typical aging” or “Let’s investigate further,” it may buy what matters most in Alzheimer’s: time, clarity, and a little less fear when the right word refuses to come.


Nature Reviews Psychology

An online-only journal publishing authoritative, accessible and topical Review, Perspective and Comment articles across the entire spectrum of psychological science, its applications and its wider societal implications.
