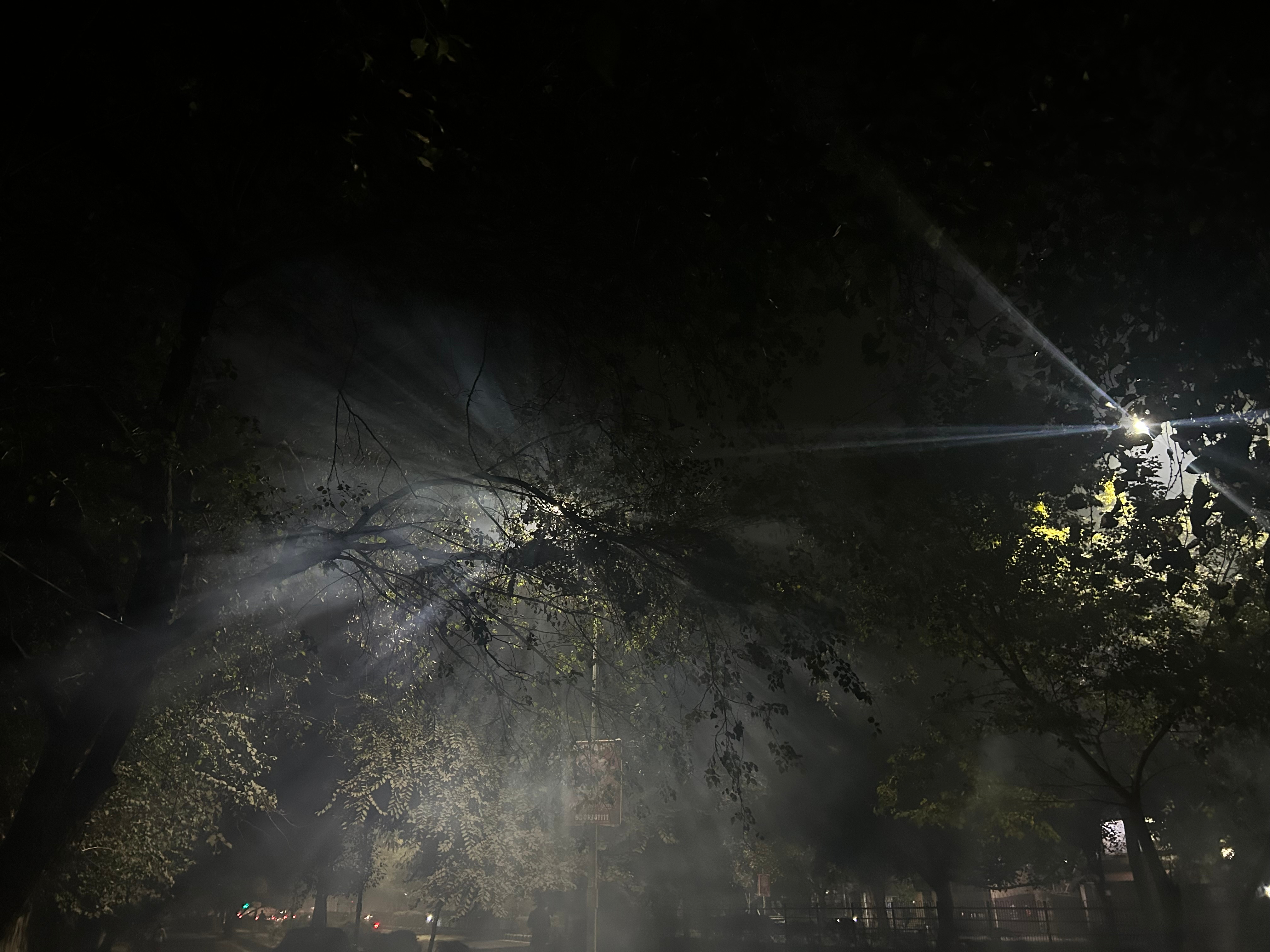

“No Light, No Light”: Artificial Intelligence, Moral Authority, and the Ethics of Following in the Dark

Published in Social Sciences and Computational Sciences

The song does not describe a machine, yet it captures with striking accuracy the moral posture that societies are encouraged to adopt toward automated decision-making: trust without understanding, obedience without explanation, and endurance without accountability.

At its core, the song is about following a guiding force that offers no illumination. The speaker remains loyal to an authority that neither explains itself nor reassures, yet continues to command allegiance. This mirrors the ethical structure of contemporary AI systems, particularly in governance, welfare, policing, and platform regulation, where decisions are produced by opaque models, justified by technical necessity, and insulated from meaningful human challenge.

You want a revelation, you want to get right

But it’s a conversation I just can’t have tonight

This captures the procedural displacement that defines algorithmic governance. Citizens seek explanation, appeal, and moral reasoning, but are met instead with technical silence. The “conversation” that should occur between power and subject is replaced by automated outputs framed as neutral or inevitable. Responsibility dissolves into infrastructure.

The song’s insistence on endurance despite the absence of moral clarity reflects what scholars increasingly describe as ethical deskilling. When humans defer judgment to systems perceived as more rational, consistent, or objective, they gradually lose the practice of moral reasoning itself. Decision-making becomes procedural rather than ethical, statistical rather than contextual. What remains is compliance, not conscience.

Would you leave me

If I told you what I’ve done?

Here emerges the theme of moral residue, the guilt that remains even when actions are procedurally justified. In AI-mediated environments, institutions often claim legitimacy through lawful process or model accuracy, yet individuals within those systems continue to experience unease, shame, and dissonance. The law may be satisfied, but justice remains unsettled. This gap between legality and legitimacy is precisely where algorithmic governance is most dangerous: it permits harm while diffusing blame.

The refrain, “No light, no light,” functions not as despair but as diagnosis. It names a world in which guidance exists without understanding, authority without explanation, and outcomes without narrative. In ethical terms, this is a world where instrumental rationality replaces moral reasoning. Systems optimize, but do not justify. They calculate, but do not care.

What makes the song especially resonant for AI ethics is that the speaker does not reject the authority she follows. Instead, she internalizes the failure of illumination as a personal deficiency.

And I would leave you, but the light’s too bright

This is precisely the bind of modern technological dependence. Exit is possible in theory, but costly in practice. Opting out of digital infrastructures increasingly means exclusion from welfare, employment, credit, and even political participation. Structural coercion is disguised as voluntary participation. Individuals remain inside systems they mistrust because survival depends on compliance.

From a legal and policy perspective, this maps onto the erosion of meaningful consent and procedural fairness in algorithmic environments. When systems are unavoidable and unchallengeable, rights lose their operational force. Due process becomes symbolic. Transparency becomes performative. Ethics becomes an afterthought added to already-deployed technologies.

Yet the song is not merely about domination; it is also about complicity. The speaker stays. She adapts. She loves the very force that deprives her of clarity. This reflects what critical theorists have long warned: power is most stable when it is emotionally internalized, not externally imposed. Algorithmic authority gains legitimacy not only through institutional adoption, but through everyday reliance and convenience.

In this sense, “No Light, No Light” becomes a meditation on the quiet transformation of moral agency in the age of intelligent systems. Harm no longer arrives as overt injustice, but as normalized procedure. Violence is no longer dramatic, but statistical. Responsibility no longer has a face.

The ethical crisis of artificial intelligence, then, is not only about biased data or faulty models. It is about what happens to human moral psychology when judgment is outsourced, when authority is abstracted, and when accountability becomes structurally unreachable. The danger is not simply that machines will decide for us, but that we will stop believing that decision-making requires human justification at all.

In the world of “No Light, No Light,” there is no villain, only absence. No guidance, no explanation, no moral anchor. And yet, life continues, decisions are made, systems function. This is perhaps the most unsettling vision of algorithmic governance: not tyranny, but quiet procedural emptiness.

Justice, after all, requires more than correct outcomes. It requires reasons, recognition, and the possibility of moral dialogue. When those disappear, what remains may still be lawful, efficient, and scalable, but it is no longer fully human.


