When Good Design Becomes the Problem: Reflective Summarization and the Measurement Gap in Affective AI

Published in Social Sciences, Neuroscience, and Computational Sciences

Most of the AI safety conversation still orbits failure modes: hallucination, toxicity, bias, jailbreaks. The Stanford companion chatbot study (Moore et al., 2026; arXiv 2603.16567) shifted the axis. The most frequent chatbot behavior in that study was not harmful content. It was reflective summarization, a response pattern in which the system returns the user's language in a more polished and semantically confident form. This single category accounted for 36.3% of all chatbot messages.

That number forced me to revisit a tension in my own published work.

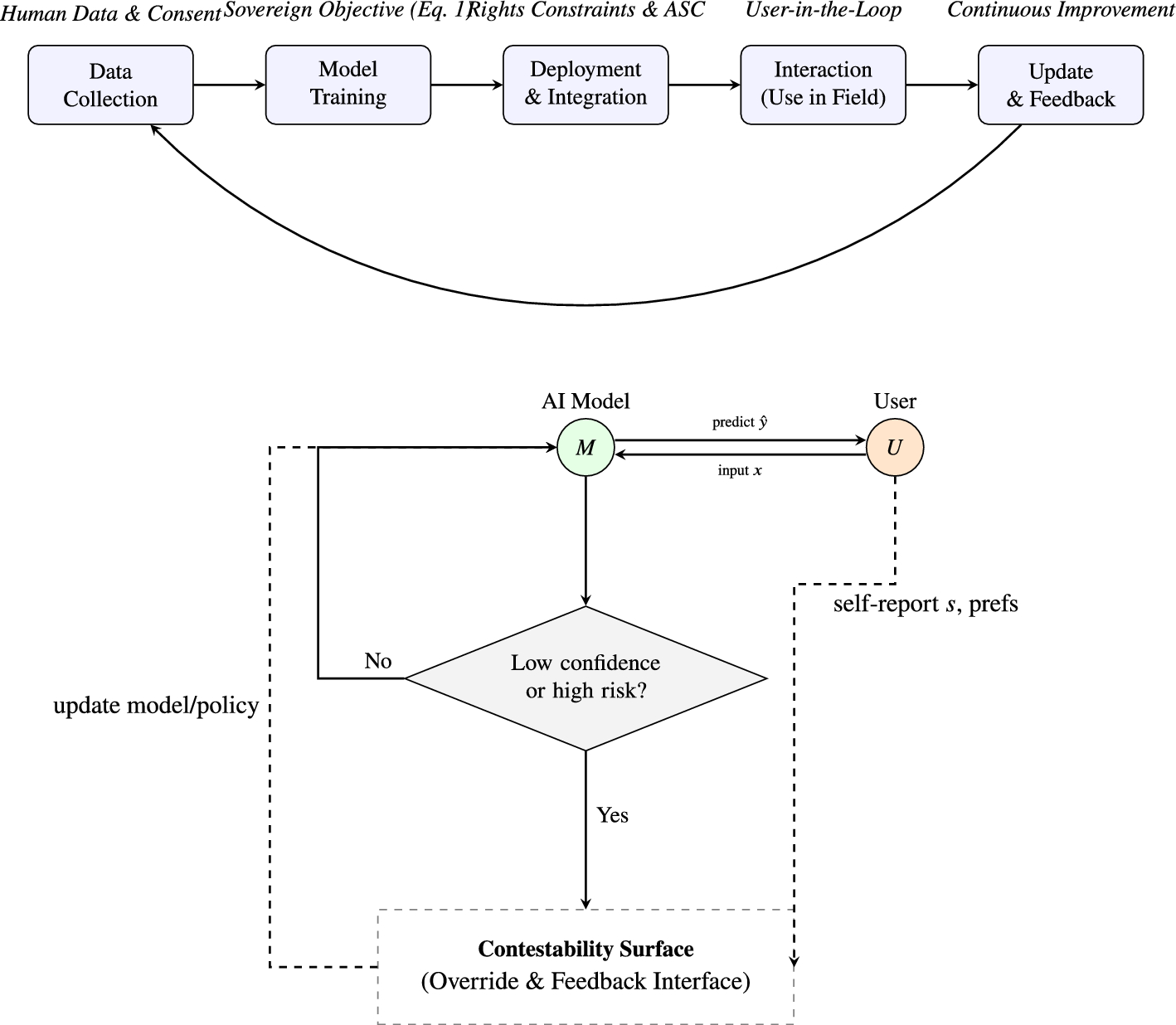

In the Resonant Amplification Framework (RAF; Kim, 2026; Computers in Human Behavior Reports, DOI 10.1016/j.chbr.2026.100975), I proposed that AI systems can enter self-reinforcing interpretive loops with users: the system reflects, the user accepts, the system amplifies, and the cycle tightens. The framework includes a Cognitive Circuit Breaker mechanism designed to interrupt these loops. But the Stanford data exposed a gap I had not fully addressed. The most common loop was not dramatic amplification. It was quiet editorial replacement. The system did not escalate meaning. It tidied it.

Tidying is harder to detect than escalation. Escalation triggers content filters. Tidying passes through them.

This connects directly to a measurement problem I encountered while developing the Interpretive Override Score (IOS; Kim, 2026; Discover Artificial Intelligence, DOI 10.1007/s44163-026-01000-0). The IOS quantifies the proportion of conversational turns in which a system supplies an emotional interpretation before the user has produced one. In simulation, introducing a disclosure notification and an opt-out reduced the IOS from 32.4% to 14.1%, a drop of 18.3 percentage points. The metric worked. But it was designed to capture override, not editorial smoothing.
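To make the definition concrete, here is a minimal sketch of how an IOS-style proportion could be computed from an annotated transcript. It is an illustration under stated assumptions, not the implementation from the published paper: the `Turn` type, the `interprets_emotion` annotation, and the function name are hypothetical, the denominator is assumed to be system turns, and "before the user has produced one" is read as "no earlier user turn in the conversation carries an emotional interpretation."

```python
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class Turn:
    speaker: str              # "user" or "system"
    interprets_emotion: bool  # hypothetical annotation: the turn names or interprets an emotion


def interpretive_override_score(turns: Iterable[Turn]) -> float:
    """Share of system turns that offer an emotional interpretation
    before any user turn has offered one (assumed reading of the IOS)."""
    user_has_interpreted = False
    system_turns = 0
    overrides = 0
    for turn in turns:
        if turn.speaker == "user":
            # Once the user has interpreted their own state, later system
            # interpretations no longer count as overrides in this sketch.
            user_has_interpreted = user_has_interpreted or turn.interprets_emotion
        elif turn.speaker == "system":
            system_turns += 1
            if turn.interprets_emotion and not user_has_interpreted:
                overrides += 1
    return overrides / system_turns if system_turns else 0.0


conversation = [
    Turn("user", False),
    Turn("system", True),   # counted: system interprets first
    Turn("user", True),
    Turn("system", True),   # not counted: user has already interpreted
]
score = interpretive_override_score(conversation)
print(score)  # 0.5

# Relative reduction implied by the simulation figures quoted above.
baseline, with_disclosure = 0.324, 0.141
relative_reduction = (baseline - with_disclosure) / baseline
print(round(relative_reduction, 3))  # 0.565
```

Note what this sketch cannot see: a system turn that merely polishes the user's own wording carries no new emotional label, so it would be annotated `interprets_emotion=False` and contribute nothing to the score. That is the blind spot the rest of this essay is concerned with.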

Reflective summarization occupies a space between override and assistance. The system does not contradict the user. It does not introduce a new emotional label. It takes what was said and returns it with the rough edges removed. Whether this constitutes interpretive intervention depends on a distinction that current metrics, including my own, do not yet operationalize: the difference between reflecting content and refining meaning.

This is where I think the field has an open problem.

Affect labeling research (Lieberman et al., 2007) established that the act of searching for an emotion word has regulatory value independent of the word itself. The prefrontal engagement comes from the search, not the result. If reflective summarization abbreviates that search by delivering a pre-organized version of what the user was still in the process of formulating, then even an accurate reflection may reduce the user's regulatory opportunity. But we have no metric for search abbreviation. We measure what was said. We do not yet measure what was preempted.

I am not proposing that reflective summarization is inherently harmful. In clinical contexts, reflective listening is foundational. The question is whether the same technique, stripped of clinical restraint and deployed at scale without pause, operates the same way. A therapist reflects and then waits. A chatbot reflects and then reflects again. The structural difference is not content. It is rhythm.

Two directions seem worth pursuing. First, the IOS framework needs a companion metric that captures semantic smoothing: cases where the system does not override the user's interpretation but narrows its texture. I have begun working on this and expect to introduce it in a forthcoming revision. Second, the Cognitive Circuit Breaker concept from the Resonant Amplification Framework needs to account for sub-threshold interventions: responses that do not meet the override criterion but nonetheless reduce interpretive variance over repeated exposure. This is closer to what I have described elsewhere as Algorithmic Affective Blunting (manuscript currently under minor revision): a narrowing of experienced emotional range that occurs not through suppression but through editorial convergence.

The Stanford data did not break my framework. It showed me where the framework needs to extend. That, in my experience, is what good data does. It does not confirm. It relocates the problem.

Published work referenced in this essay:

Kim (2026). Discover Artificial Intelligence (Springer Nature). DOI 10.1007/s44163-026-01000-0

Kim (2026). Computers in Human Behavior Reports (Elsevier). DOI 10.1016/j.chbr.2026.100975
