Auditing TikTok’s Algorithm During the Most Consequential Election of Our Lives

Published in Social Sciences, Arts & Humanities, and Law, Politics & International Studies

How it started

Watching this unfold from our lab at NYU Abu Dhabi, we realized something: here was a platform at the very center of American political life, one that was about to mediate a presidential election for tens of millions of young voters, and yet almost no one had systematically tested whether its “For You” feed was treating both sides of the political aisle the same way. The confluence of TikTok’s political salience and the approaching election felt like a research opportunity we couldn’t pass up.

Building on what we'd learned before

This wasn't our first time auditing a platform's political content. In 2023, our team published a study in PNAS Nexus showing that YouTube's political recommendations exhibited a left-leaning skew in the United States. That project taught us the fundamentals of sock-puppet auditing at scale and, just as importantly, how many things can go wrong (although this time around we still discovered new ones!). TikTok, though, was a different beast entirely. Unlike YouTube, where users actively search and subscribe, TikTok's “For You” feed is almost entirely algorithm-driven, which meant user agency was far more constrained and any systematic patterns in the content served to users would be far more visible.

What made both projects work was the mix of people involved. Our team brings together computer scientists, a political scientist, and researchers with backgrounds spanning network systems, AI, and experimental design. In practice, this means a lot of conversations where one person's obvious assumption is another person's revelation. The engineering questions—how do you build a scalable bot infrastructure that TikTok's detection systems won't catch?—constantly bumped up against the conceptual ones: what does “skew” mean in a recommendation system, and how do you distinguish algorithmic amplification from the natural asymmetries in how partisans produce and consume content?

These weren't separate conversations happening in parallel. They shaped each other. Our experimental design, outcome measures, and the claims we could credibly make all emerged from the back-and-forth between people who think about systems and people who think about politics. By the end of the project, our group meetings had a quality we've come to value deeply—everyone was constantly teaching and constantly being taught.

21 phones, 3 states, and a lot of factory resets

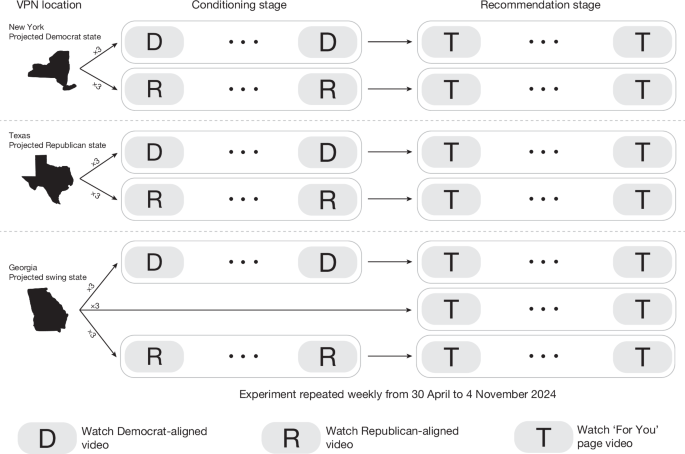

The experiment itself was conceptually simple but logistically intense. We created “sock puppet” accounts—bots that mimic real TikTok users—and seeded them with either Democratic or Republican political content. Then we recorded what TikTok recommended to them.

Every week from late April through early November 2024, we spun up 21 fresh TikTok accounts across three states: New York (a Democratic stronghold), Texas (a Republican stronghold), and Georgia (a swing state). Each phone was GPS-spoofed and routed through custom VPN servers we hosted ourselves—we avoided commercial VPNs because TikTok might recognize their IP addresses. After each weekly experiment, every phone was factory-reset to wipe any trace of the previous account, and the cycle started again.

This sounds straightforward on paper. In practice, it meant maintaining a small phone farm of Samsung Galaxy A34 devices, each needing its GPS mocked, VPN configured, and TikTok freshly installed before every run. If a phone lost internet connectivity or TikTok flagged an account as a bot mid-experiment, that run was lost. Out of 567 experiments we attempted, only 323 met our quality threshold. During one particularly frustrating stretch in late July and August, our Texas bots lost connectivity entirely while the team was unavailable to intervene—weeks of potential data, gone.
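The attrition figures above can be checked with a few lines of arithmetic. This is only a back-of-the-envelope sketch: the 27-week duration is the figure quoted later in this post, and the variable names are ours for illustration.

```python
# Back-of-the-envelope check of the run counts quoted in the post.
WEEKS = 27               # duration of the study, as stated later in the post
ACCOUNTS_PER_WEEK = 21   # fresh accounts created each week
RETAINED = 323           # runs that met the quality threshold

attempted = WEEKS * ACCOUNTS_PER_WEEK
retention_rate = RETAINED / attempted

print(f"attempted runs: {attempted}")
print(f"retained runs:  {RETAINED} ({retention_rate:.1%})")
```

In other words, a little over half of the attempted runs survived the quality filter.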

Teaching bots to be partisan

Each bot went through a “conditioning stage” where it watched up to 400 videos from channels aligned with one political party, teaching TikTok's algorithm what kind of user it was dealing with. Then came the “recommendation stage”: six days of passively scrolling the For You page, watching whatever TikTok served up.

Finding balanced conditioning material was its own challenge. We compiled 54 politically aligned channels, then matched Democratic and Republican channels by follower count and cumulative likes to ensure our bots were being trained on comparably popular content. Two of our Republican-aligned channels disappeared mid-experiment—deleted or made private—forcing us to swap in replacements on the fly.
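To make the matching idea concrete, here is a minimal sketch of one way to pair channels by popularity. It is not the procedure from the paper, which this post does not spell out: the greedy nearest-neighbor strategy, the log-scale distance, the `Channel` class, and the example channels are all assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Channel:
    name: str        # hypothetical channel handle
    followers: int
    likes: int       # cumulative likes


def distance(a: Channel, b: Channel) -> float:
    # Compare on a log scale so a 10k-vs-20k gap counts like a 1M-vs-2M gap.
    return math.hypot(
        math.log10(a.followers) - math.log10(b.followers),
        math.log10(a.likes) - math.log10(b.likes),
    )


def match_channels(dem: list[Channel], rep: list[Channel]) -> list[tuple[str, str]]:
    """Greedy one-to-one matching: repeatedly pair the closest remaining channels."""
    dem_left, rep_left = list(dem), list(rep)
    pairs = []
    while dem_left and rep_left:
        d, r = min(
            ((d, r) for d in dem_left for r in rep_left),
            key=lambda pair: distance(*pair),
        )
        pairs.append((d.name, r.name))
        dem_left.remove(d)
        rep_left.remove(r)
    return pairs


# Made-up channels, purely for illustration.
dem = [Channel("dem_a", 1_000_000, 20_000_000), Channel("dem_b", 50_000, 900_000)]
rep = [Channel("rep_x", 60_000, 1_000_000), Channel("rep_y", 900_000, 25_000_000)]
pairs = match_channels(dem, rep)
print(pairs)
```

A greedy pass like this is easy to reason about but not globally optimal; with a larger channel pool, an optimal assignment method would be a reasonable alternative.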

One subtlety we hadn't fully anticipated: the Republican seed channels skewed toward independent creators, while the Democratic channels were more often the official accounts of officeholders. This is the kind of asymmetry that a purely technical audit might brush past, but because our team works across disciplines, it was flagged immediately as something that could matter for interpretation. We ultimately note it as a limitation: the real-world supply of partisan content is not perfectly symmetric, and our experiment inherited some of that imbalance.

What the platform showed us

Over 27 weeks, our bots collectively watched more than 280,000 recommended videos. After classifying the political content using an ensemble of three large language models—validated against human annotators—the picture that emerged was striking.
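For readers curious how three model outputs might be combined into one label, the sketch below uses a simple majority vote. The post does not say how the ensemble was aggregated, so the voting rule, the `"unclear"` fallback, and the label names are assumptions rather than the paper's method.

```python
from collections import Counter


def majority_label(labels: list[str], fallback: str = "unclear") -> str:
    """Return the label chosen by a strict majority of models, else the fallback."""
    top_label, top_count = Counter(labels).most_common(1)[0]
    if top_count > len(labels) / 2:
        return top_label
    return fallback


# Hypothetical per-video outputs from three classifiers.
agreed = majority_label(["anti_dem", "anti_dem", "pro_rep"])
split = majority_label(["anti_dem", "pro_rep", "non_political"])
print(agreed, split)
```

Videos that land in the fallback bucket are natural candidates for human review, which is one way an ensemble can be checked against annotators.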

Republican-seeded accounts received roughly 11.5% more co-partisan content than Democratic-seeded accounts. Meanwhile, Democratic-seeded accounts were exposed to about 7.5% more cross-partisan material—and that cross-partisan content was overwhelmingly anti-Democratic in nature. This wasn't a case of Democrats simply seeing more Republican cheerleading; they were disproportionately shown content attacking their own side.
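The two exposure measures discussed here can be illustrated with a short sketch. It assumes each recommended video carries one stance label, and that content attacking one party counts as aligned with the other, which is how this post treats anti-Democratic videos. The label names, the `ALIGNMENT` table, and the toy feed are hypothetical.

```python
from collections import Counter

# Which party each stance label favors (assumed label set).
ALIGNMENT = {
    "pro_dem": "dem", "anti_rep": "dem",
    "pro_rep": "rep", "anti_dem": "rep",
}


def exposure_shares(seed_party: str, labels: list[str]) -> tuple[float, float]:
    """Return (co-partisan share, cross-partisan share) of all recommended videos."""
    if not labels:
        raise ValueError("no recommended videos to summarize")
    # Labels missing from ALIGNMENT (e.g. non-political videos) map to None.
    counts = Counter(ALIGNMENT.get(label) for label in labels)
    other_party = "rep" if seed_party == "dem" else "dem"
    return counts[seed_party] / len(labels), counts[other_party] / len(labels)


# Toy feed for a Democratic-seeded account, purely for illustration.
feed = ["pro_dem", "anti_rep", "anti_dem", "non_political", "non_political"]
co, cross = exposure_shares("dem", feed)
print(f"co-partisan: {co:.0%}, cross-partisan: {cross:.0%}")
```

Comparing these shares between Democratic-seeded and Republican-seeded accounts is what reveals an asymmetry; the sketch says nothing about its cause.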

We built 48 counterfactual models to test whether this skew could be explained by differences in engagement metrics—likes, comments, shares, follower counts. Across every model, the observed Republican-leaning skew substantially exceeded what engagement alone would predict.

The negativity problem

One of our most striking findings was about tone. Both sides saw more attack content than supportive content—the platform seemed to surface negativity more readily across the board. But the asymmetry was telling: Republican bots were significantly shielded from anti-Republican content (seeing it only 5.8% of the time), while Democratic bots were flooded with anti-Democratic material (14.2%). Regression analysis confirmed that anti-Democratic videos were the single largest driver of cross-partisan recommendations, even after controlling for engagement and fixed effects.

Nor was this pattern spread evenly across issues. Using an LLM-assisted topic classification framework, we found that the skew concentrated in specific policy domains: immigration, crime, and foreign policy drove cross-partisan exposure for Democrats, while abortion was the standout domain for Republicans.

Do real users notice?

Algorithmic audits can feel abstract, so we wanted to know whether actual TikTok users perceived what our bots detected. We surveyed 1,008 U.S.-based TikTok users and found a clear pattern: Republican respondents were significantly more likely to report seeing co-partisan, positive, and pro-Trump content in their feeds, while Democrats did not report equivalent experiences with their own side's content. This alignment between our experimental findings and users' lived experiences gave us confidence that what we measured in the lab was not just a technical artifact.

What we still don't know

We want to be upfront about what our study cannot tell you. We can document that TikTok's recommendations produce asymmetric partisan exposure, but we cannot definitively say whether this stems from intentional algorithmic design, emergent properties of the recommendation system responding to the broader content ecosystem, or some combination of both. Our bots capture only the earliest stage of a user's life on the platform—how these patterns evolve over months or years of real engagement remains an open question.

We also relied on video transcripts for classification, which means we missed political messaging conveyed through visuals, audio tone, or non-English languages. And after November 11, TikTok ramped up its bot-detection efforts, cutting off our ability to study the post-election period.

Why it matters

TikTok has become a primary news source for young Americans—a demographic that shifted ten percentage points toward Trump between 2020 and 2024. When a platform's recommendation engine systematically shapes what hundreds of millions of users see, even modest asymmetries in content exposure can have outsized consequences for democratic discourse.

Our findings don't tell us that TikTok deliberately put its thumb on the scale. But they do tell us that the scale wasn't balanced—and that platforms wielding this kind of influence over political information deserve far more transparency and scrutiny than they currently receive.

More than anything, this project—like the YouTube study before it—reinforced something we've come to believe deeply: the questions that matter most at the intersection of technology and democracy don't belong to any single discipline. They require people who build systems and people who study politics to sit in the same room, challenge each other's assumptions, and be willing to learn things they didn't know they didn't know. That remains the most rewarding part of this work, and it's what keeps us coming back for more.
