Digital Haptics for Navigating without Vision

Published in Neuroscience and Behavioural Sciences & Psychology

npj Science of Learning - Learning and navigating digitally rendered haptic spatial layouts

Digital tactile maps for the visually impaired

We have all had to move through our home at night with the lights off. Now imagine doing this in a completely unfamiliar space! That is what everyday navigation can feel like for people with partial or complete loss of vision, and it is why effort is being put into developing alternative technological solutions for sightless navigation. Digital tablets can render haptic feedback by using ultrasonic vibrations to modulate the friction felt under the exploring finger. Such tablets are a promising solution for navigation without vision.

First steps

To test the efficacy of this technology and people’s ability to learn from haptic information alone, we began with relatively simple, highly familiar shapes: letters. This was not a trivial task. We had to determine the best way to teach people to explore a screen with their finger without missing important details, within a limited amount of time, and while accounting for technical constraints (e.g. warming of the tablet or desensitization of the skin). We also wanted the task to be accessible to visually impaired individuals, given that we were working towards everyday solutions for this specific part of the population. We worked together with clinicians and occupational therapists, as well as with engineers, to understand how to tame the technology and make it as user-adapted as possible, and we ran focus groups with visually impaired patients to understand their needs and preferences. After finally devising two successful experiments with both sighted and visually impaired individuals, and seeing that people could really picture in their mind’s eye what the textures on the tablet were conveying, we realized that this technology had the potential to emulate the tactile maps that are typically used to learn new places prior to navigation. Moreover, being portable, it could even be combined with other technologies, such as GPS, to become a live digital map service like those found in mobile applications.

The experiment

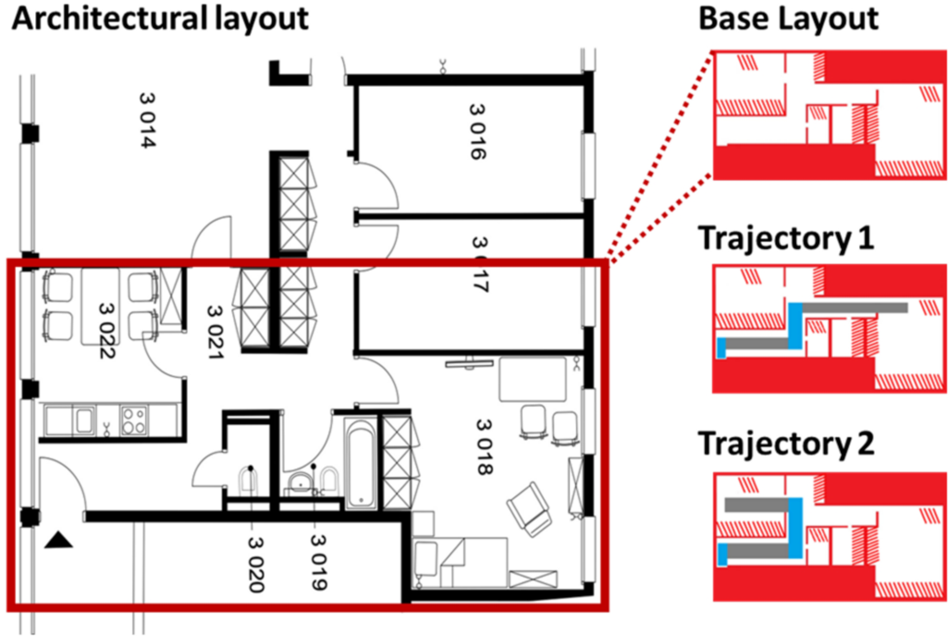

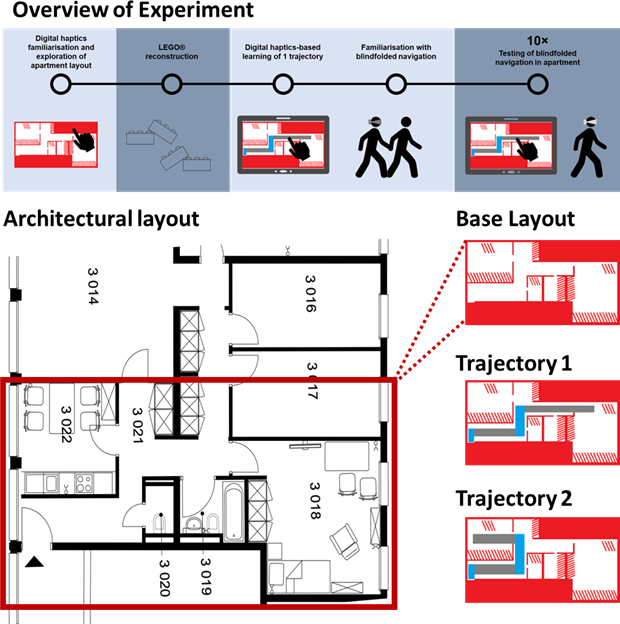

Although the first experiments indicated that people could imagine simple shapes when using the tablet, learning the layout of a space, positioning yourself within that space in your mind, and then moving through it in real life without using your sight is a particularly complex task. How could we test whether people had really learned the maps we presented to them? How could we make sure that participants wouldn’t hurt themselves when exploring without vision? After multiple trials, and after working together with sighted and visually impaired participants as well as with therapists and clinicians, we decided how to explore these issues (see Figure).

First, all participants would wear a blindfold and noise-cancelling headphones for all tasks, to completely isolate the tactile sensation from competing sensory information. Participants would first learn the basic layout of a space that comprised four rooms separated by a corridor. This layout would be rendered in a digital texture that would feel a bit pointy to the finger. The main task would consist of placing the rooms correctly in their minds and understanding the shape of the corridor (see Movie 1). We would then test how well they had understood the space by letting them recreate it using LEGO pieces. After this step, we would add a trajectory within the apartment using a new type of texture, different from the one used for the walls and the obstacles within the apartment, that would feel smoother to the finger (see Movie 2). After training participants on this trajectory, we would ask them to go into the apartment and teach them the rules of the navigation task: what counted as an error or as a deviation from the path, how they had to explore without being able to see and without running into obstacles, and what they had to do when they reached the endpoint of the trajectory.

Following this, the testing phase consisted of presenting participants with two different trajectories, five times each in random order. We filmed participants’ navigation and had these videos rated by two independent raters (see Movie 3).
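To make the structure of the testing phase concrete, here is a minimal sketch of how a block of two trajectories, presented five times each in random order, could be generated. It assumes a plain shuffle of all ten trials; the function name `build_trial_order`, the trajectory labels "A" and "B", and the `seed` parameter are hypothetical and used only for illustration, and the randomization procedure actually used in the study may have differed.

```python
import random


def build_trial_order(trajectories=("A", "B"), repetitions=5, seed=None):
    """Return a shuffled trial list in which each trajectory appears `repetitions` times.

    The labels and the full-shuffle scheme are illustrative assumptions,
    not the procedure reported in the paper.
    """
    rng = random.Random(seed)  # a local generator keeps the order reproducible per seed
    trials = [t for t in trajectories for _ in range(repetitions)]
    rng.shuffle(trials)  # in-place shuffle of the ten trials
    return trials


order = build_trial_order(seed=0)
print(order)
```

Fixing the seed per participant would make each participant’s order reproducible for later analysis, while different seeds give different orders across participants.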

Results

Most importantly, participants were able to successfully learn, recreate, and navigate the layout and the trajectories after only 45 minutes of training on the tablet. We quickly noticed that participants had more trouble learning one of the two trajectories, despite their similar design and length. The analysis showed that participants trained on this harder trajectory had no issues with the easier one, whereas the reverse did not hold for participants trained on the easy trajectory. The only differences between the two groups were that participants trained on the hard trajectories took longer to navigate these than the easier trajectories, and that they spent less time off track on the untrained trajectories than the group trained on the easy trajectory did. For the latter group, there was a moderate negative correlation between performance scores and reconstruction of the mazes, although it was not significant. Collectively, these results indicate that having a clearer mental image of the basic layout of a space might be beneficial when navigating difficult, unfamiliar trajectories, and that taking more time to navigate harder trajectories might improve performance.

Implications

Our results have implications beyond the obvious use of digital haptics to support sightless navigation, which is the next idea that needs to be validated before actual implementation. The finding that individuals can use haptics to create mental images of objects and places, manipulate those images in their minds, and then transpose them to real life can open many new avenues for research, education, leisure, and technological applications. Domains such as virtual reality and gaming, online shopping, museum visits for the visually impaired, and general rehabilitation could all be enhanced by digital haptics, whose digital nature promises greater accessibility and user comfort. In conclusion, digital haptics is a promising field, and with some refinement and continued research it could substantially help our society find solutions to everyday problems, with the aim of becoming more inclusive and reducing discrimination.

