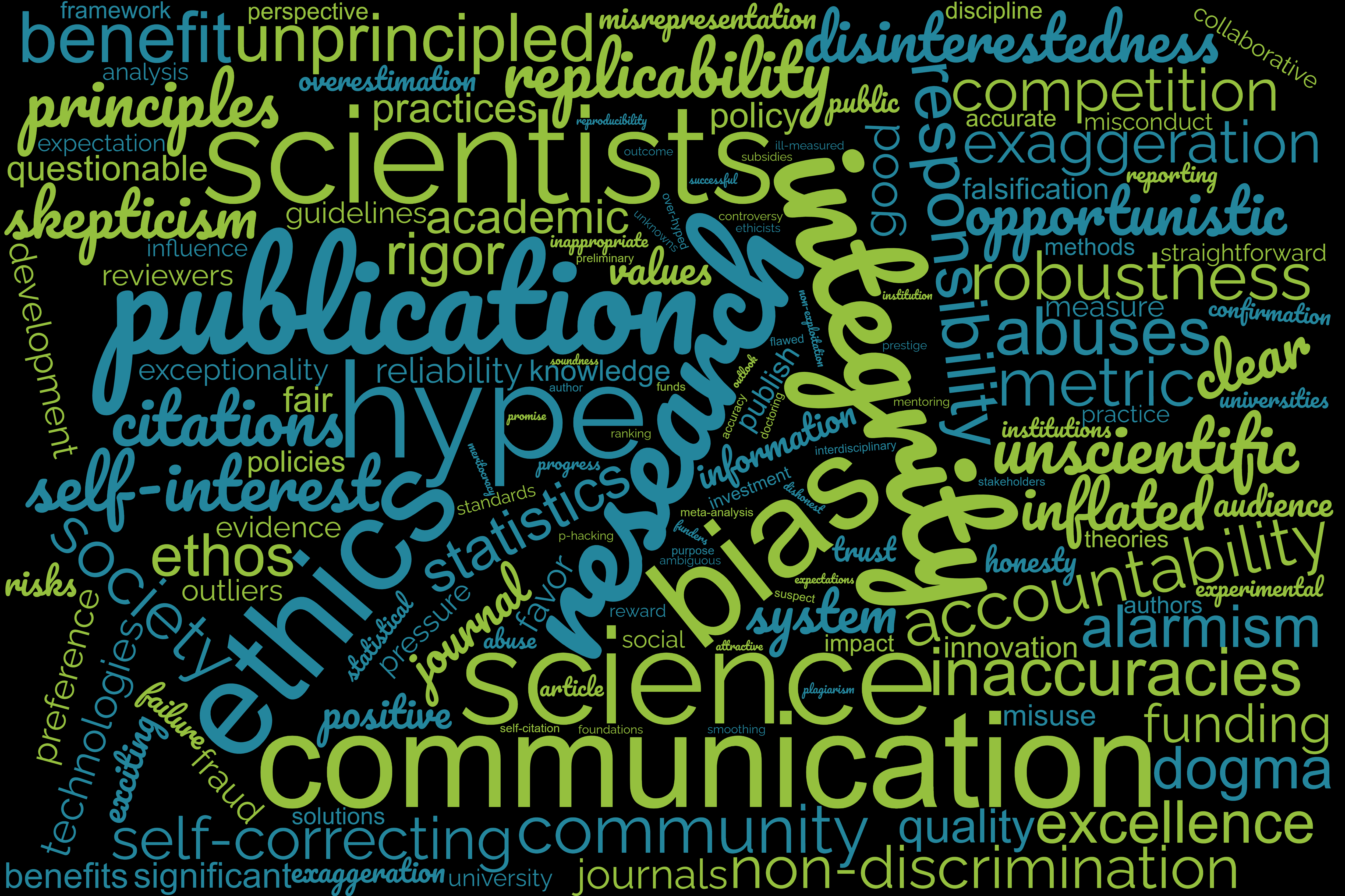

Ethics of Hype and Bias in Science

Published in Bioengineering & Biotechnology

Lack of attention to, and in some cases encouragement of, hype and bias in academic research poses a significant risk to the scientific enterprise. Still, open discussions on the ethical implications of the practice of hype and bias in the context of the values that underpin scientific integrity, particularly in the academic research environment, remain uncommon. Many researchers are unaware of the different dimensions these two practices embody in science, how the current culture of hype and bias can start to be addressed, and to what extent promoting and including discussions about these themes in the broader field of scientific integrity might help curb the development of such phenomena.

Ethics in science and scientific integrity

According to the European Code of Conduct for Research Integrity, the primary goal of science is to increase the understanding of the world and ourselves. Scientific research rests on a premise of freedom to define research questions, to formulate tentative theories on the workings of systems under study and, through appropriate methods, to gather data that can confirm or refute said theories. This code also states that maximizing ‘the quality and robustness of research’ is one of the basic responsibilities of the scientific community. This responsibility requires the formulation of criteria for adequate research behavior, and a response to violations of these norms (Foundation and Academies 2017). One might then wonder whether the scientific community is failing to uphold these guidelines in a climate permeated by a reproducibility crisis, preference for exciting positive results, metric-centered evaluations of researchers and projects, increasing numbers of retracted papers and rampant ‘engineering’ of research outcomes. In fact, the issue of scientific integrity was never more relevant than in today’s scientific world, where the sheer number of publications makes it nearly impossible to keep up and every paper must be critically analyzed because peer review is no longer a safe bet when it comes to judging the scientific accuracy of the reported facts.

Fraud is clearly categorized as scientific misconduct, an act that compromises the integrity of science in itself. However, many scientists work in a manner that, while not qualifying as fraud, does tip the scales worryingly in their favor by making unprincipled use of researchers’ degrees of freedom (RDFs). RDF is a concept that covers the often arbitrary decisions, mostly conceptual and methodological, that can occur throughout the scientific process and can lead to questionable research practices. When do we cross the line between opportunistic use of RDFs and outright fraud? This brings us to the importance of ethos in science.

Today, science is a more collaborative effort than ever before. All existing lines of research are supported and justified by discoveries made prior. In this sense, science is an eternal construction where the upper levels are only as stable as the foundations. With this understanding it is easy to see how the existence of a common framework of conduct for the scientific process is essential to future progress. In a perfect world, when a scientist reads a piece of scientific literature and bases future research on it, they should be able to trust that what has been reported is as close to the truth as the methods used allow. This is only possible if we know that all scientists conduct themselves in the same manner, following the same ethos and standards. Despite the checks and balances in place to ensure the reliability of published findings, much of the process is still heavily dependent on the integrity of the individuals involved, be it researchers themselves, journal editors, reviewers, academic institutions or funding bodies. Moreover, scientists and scientific institutions are afforded very high levels of epistemic trust from society which also features an ethical component (Resnik 2011).

Some of the first established values of good scientific practice were outlined by Robert K. Merton in 1942. Communalism is the idea that scientific discoveries are jointly owned by the scientific community and scientists need to publicly disclose their findings. Universalism addresses the concept of equality in the sense that discoveries should be judged on grounds of arguments, methodology and evidence regardless of the scientist’s personal situation (e.g., race, gender, nationality, or institution). Disinterestedness is based on the principle that scientists should be impartial and work only for the benefit of science. Personal gain should not matter, but rather only the quality of the research findings. And finally, Organized Skepticism embodies the conditional acceptance of scientific findings based on critical scrutiny by the community (Merton 1973). The influence of science in society has grown, changed and evolved over the years; science now strongly influences our daily lives and is therefore subject to economic and political pressures. The COVID-19 pandemic has further highlighted the importance of communicating credible science to the general public. Many scientists, at least in the academic world, would probably claim to support these norms that embody the ideal scientific community. The reality we are faced with, however, falls short. More worryingly, many times we fail to notice or pretend not to notice the areas where researchers fail to embody these values.

The European Code of Conduct for Research Integrity subscribes to the following principles: reliability of the quality of research; honesty by promoting transparent, fair, complete and unbiased research activity; respect for all the people, animals, ecosystems, societies involved in the scientific enterprise and the environment; and lastly accountability in all the steps of the scientific process from hypothesis to publication, as well as in the training and mentoring of trainees (Foundation and Academies 2017). Despite the different wording, the spirit of Merton’s values is also present in these principles. We can consider this background as a viable framework with which to evaluate the phenomena of hype and bias in science.

Additionally, a recent review identified several ethical principles that underlie scientific research ethics common across scientific disciplines including such concepts as benevolence, non-discrimination, non-exploitation, honesty, integrity, duty to society, professional competence and professional discipline (Weinbaum et al. 2019).

Bias

Scientists are only human. As such, they can fall prey to biases in their professional endeavors. Biased scientists are an inevitability; however, the view of science as an objective enterprise assumes that these biases can be overcome by the scrutiny of a peer review process. Biased scientists need not necessarily give rise to biased research. The concept of self-correcting science should ensure this. This notion is associated with the idea of replication, where results that fail to replicate will not be propagated in the scientific literature. Another important step in this process is meta-analysis, in which an assembly of studies examining the same question, with varying degrees of individual error, is statistically analyzed in order to find a ‘true effect’. However, both these processes are dependent on the publication process. For this reason, publication bias is one of the biggest threats to the integrity of scientific knowledge.

Nowadays, the scientific publication process is geared towards positive, clear, exciting results and preferably big effects. Despite the human tendency to think the world can be divided in defined categories (the discontinuous mind), this is typically not how the systems under study work and regrettably the world is not made only of big effects. Observing the news media would lead us to believe that scientists are always successful in their research endeavors. This is however the purpose of the news outlets, to report events of significance. We should then expect the scientific literature to paint a different picture and show us the unbiased record of discovery efforts; but the proportion of positive results currently reported is unrealistically high to fit this expectation.

To fulfill this prospect, it would be necessary that a study is published so long as the scientific community agrees that it is a conceptually and methodologically sound test of the proposed hypothesis, independently of whether the results were positive or negative. Nevertheless, in terms of publication probability, a study reporting consistent and significant results will be preferred to an equally well conducted study that reports the outcome fully (e.g., with outliers). In this sense, what we have is many low quality studies being published because they have great, instead of accurate or reproducible, results.

In many fields, small studies with small effects are often not published (Sterling 2017; Begg and Berlin 1988; Rosenthal 1979). One could argue that because these studies have low statistical power, they are not a good estimate of the effect they are measuring anyway. The problem with this is that small studies reporting big effects are not treated in the same way. This skews the overall effect calculated by meta-analysis towards larger values. This double standard likely stems from confirmation bias, but it can have serious implications, such as inflated views of a drug's benefits when compared with its side effects or the overestimation of the predictive power of a certain biomarker. This undermines the reliability of the evidence, potentially leading to useless medical treatments, false hope for patients and even misleading the development of public health policies and consequently the correct application of taxpayers’ money. It can also affect the way future research funding is distributed, with research areas that show more promising results being favored. With this simple example, we can already see how publication bias interferes with the principles of reliability and honesty by promoting the publication of lower quality research and biasing the perception of the true effects. Additionally, such behavior stops us from investing our money and time in the right avenues of research.
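The skew described above can be illustrated with a small simulation. The following is only a minimal sketch under stated assumptions, not an analysis of any real dataset: the true effect (0.2), the study size (20 participants), the number of studies (500) and the "publication filter" (only positive results with z > 1.96 are published) are all hypothetical choices made for illustration, and the function names are invented for this example.

```python
import random
import statistics


def simulate_studies(true_effect, n_per_study, n_studies, seed=0):
    """Simulate small studies; return a list of (estimated effect, standard error)."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_studies):
        # Each observation is the true effect plus unit-variance noise (assumption).
        sample = [rng.gauss(true_effect, 1.0) for _ in range(n_per_study)]
        mean = statistics.fmean(sample)
        se = statistics.stdev(sample) / n_per_study ** 0.5
        results.append((mean, se))
    return results


def pooled_effect(studies):
    """Inverse-variance weighted (fixed-effect) pooled estimate."""
    weights = [1.0 / se ** 2 for _, se in studies]
    weighted_sum = sum(w * mean for w, (mean, _) in zip(weights, studies))
    return weighted_sum / sum(weights)


studies = simulate_studies(true_effect=0.2, n_per_study=20, n_studies=500)

# Hypothetical publication filter: only "significant" positive results appear.
published = [(mean, se) for mean, se in studies if mean / se > 1.96]

all_pooled = pooled_effect(studies)
published_pooled = pooled_effect(published)

print(f"studies run: {len(studies)}, studies published: {len(published)}")
print(f"pooled effect, all studies:       {all_pooled:.2f}")
print(f"pooled effect, published studies: {published_pooled:.2f}")
```

In this toy setting, pooling every study recovers something close to the true effect, while pooling only the "published" subset gives a clearly larger value, because small studies only pass the filter when chance pushes their estimate upwards.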

Furthermore, publication bias has become a vicious circle where scientists typically don’t even try to publish a negative or small effect finding. This means that, for example, many clinical studies conducted in people or animals never produce a published result. Even though a group of human participants was subjected to an experimental treatment or a group of animals was ultimately euthanized, because the results were not what was expected or the effect was too small, the study does not make it to the scientific knowledge pool. The rationale for conducting animal or human experimentation is usually based on the utilitarian perspective that the wealth of knowledge acquired by conducting such research for the benefit of the common good supersedes the potential harm caused to a smaller number of subjects. Despite the best pre-clinical efforts, and due to the complexity of systems such as the human body, null results or small effects are still fairly common; in this case, publication is the minimum standard for such a rationale, since negative results are still valuable from the perspective of driving future research efforts. By suppressing the publication of findings that are based on the subjects’ sacrifice, we infringe upon the principles of respect towards the subjects involved in the experimental process and of non-exploitation.

Along the same line of thought, academic research is usually supported by governmental funds derived from taxes paid by active members of society on the justification that the knowledge resulting from it will then be fed back to society through the implementation of new knowledge or technologies that benefit the common good and support economic growth and prosperity. This circles back to the ethical principles of duty to society as well as professional discipline.

Besides these direct effects, publication bias also induces a range of other behaviors that heavily influence and discredit the scientific process. As mentioned before, many of the systems under study are imperfect and heterogeneous. In order to make their publications attractive to journals and reviewers, scientists engage in practices that aim to make their data clearer and better looking. Examples of this include p-hacking, which is the process of running statistical analyses in slightly different ways until the result is significant (p < 0.05); choosing hypotheses after the collection of data (HARKing); or deliberate omission or non-reporting of data. These are clear examples of abuse of RDFs. The fact that these practices don’t receive more attention or condemnation is likely tied to the fact that many scientists don’t truly understand statistics or know how to correctly apply them to their data, which implies a lack of professional competence. Practices such as these ultimately stem from the desire to erase results that don’t fully fit with a preconceived theory and would harm the odds of an article being published. However, they can also produce knowledge that is further from the biological truth and lead to false conclusions integrating into the literature.
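To make the cost of p-hacking concrete, here is a minimal simulation sketch. It rests on simplifying assumptions that are not taken from any cited study: there is no real difference between the two groups, the "slightly different" analyses are modeled as five independent outcome measures, the variance is known (so a simple z-test applies), and the group size and number of simulated experiments are arbitrary. The function names are hypothetical.

```python
import random
from statistics import NormalDist, fmean


def z_test_p(a, b):
    """Two-sided p-value for a difference in means, assuming known unit variance."""
    se = (1 / len(a) + 1 / len(b)) ** 0.5
    z = (fmean(a) - fmean(b)) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def false_positive_rate(n_outcomes, n_experiments=2000, n=30, seed=1):
    """Share of null experiments reporting p < 0.05 on at least one outcome."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_experiments):
        p_values = []
        for _ in range(n_outcomes):
            # Both groups come from the same distribution: no true effect exists.
            a = [rng.gauss(0, 1) for _ in range(n)]
            b = [rng.gauss(0, 1) for _ in range(n)]
            p_values.append(z_test_p(a, b))
        # The "p-hacker" reports the experiment as positive if any analysis works.
        if min(p_values) < 0.05:
            hits += 1
    return hits / n_experiments


honest = false_positive_rate(n_outcomes=1)
hacked = false_positive_rate(n_outcomes=5)

print(f"false positive rate, one pre-specified analysis: {honest:.3f}")
print(f"false positive rate, best of five analyses:      {hacked:.3f}")
```

With a single pre-specified analysis the false positive rate stays near the nominal 5%. Picking the best of five independent analyses is expected to raise it to roughly 1 - 0.95^5, or about 23%, even though nothing real is being measured. Real analytic variants are usually correlated, so the inflation in practice will differ from this idealized figure.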

Biases occur in every step of the scientific process. A widely occurring bias in scientific design is linked to the preference for male research subjects in clinical trials or animal studies, with the justification that the fluctuations of female hormones lead to less clear results. This leads to some drugs not working in women or to negative outcomes from alternative drug metabolism. Another example is that, although major depressive disorder and post-traumatic stress disorder are two times more frequent in women, behavioral tests are primarily designed and validated in males (Kokras and Dalla 2014; Zucker and Beery 2010). These are clear cases of discrimination, since this type of research won’t benefit all groups of society equally and in some cases might even produce harm to certain groups.

Another common type of bias resulting from the social dimension of science comes in the form of scientific dogma. An essential part of being a scientist is acknowledging that knowledge is not absolute. Especially today, when methodology and scientific instruments evolve at a rapid pace, improving the resolution of the data obtained, there is always the possibility that some piece of knowledge needs to be reevaluated. In fact, this is one of the bases for self-correcting science. Still, many fields become attached to current theories and new ideas are not given a fair chance to compete, as established scientists and proponents of currently accepted theories influence funding, tenure decisions and pre-publication peer review. In this case, not only are scientists failing to uphold the principles of organized skepticism and disinterestedness, but they also fail to respect their colleagues, many times on the basis of self-interest or for the sake of defeating a rival’s argument.

Every researcher is, like any other human, subject to their biases which distort the truth, but the scientific process should be designed to eliminate these biases, not to exacerbate them.

Hype

Hype is a slightly more ambiguous phenomenon because the concept of hype itself is hard to define. It likely evolved from the need to present future avenues for research and provide relevant prognostic information to guide the scientific enterprise and the application of the resulting knowledge for the benefit of society. Briefly, it has been defined as an inappropriate exaggeration with the potential to be misleading or deceptive and categorized as a failure in the science communication effort. The difficulty in defining and characterizing hype comes from the fact that it depends on a value judgement regarding what constitutes an inappropriate exaggeration; and if inappropriate exaggeration occurs when there is not enough data to support the claim, there is also a value judgement on when the evidence is sufficient and how far removed the claims can be from the evidence before they cross the line between outlook on emerging avenues of research and exaggeration (Intemann 2020).

Hype is usually found in statements in which the primary goal is to create support and enthusiasm about science in general, emerging research areas or technologies. This, of course, is important from the point of view of researchers and institutions because it helps them generate more funding for scientific research. Nowadays, due to limited research subsidies, pressures to commercialize and translate research have increased in order to justify the investment. Therefore, scientists are encouraged to frame their research in terms of how it can benefit society. Scientific excellence is no longer defined based on basic discoveries, but also by measuring their economic power and investment return potential; as such, telling a convincing story and selling it are inextricably linked to articulating a valuable end product.

Worryingly, scientists themselves have become a major source of hype. The use of positive words, such as unprecedented, novel or remarkable, in scientific papers has increased 9-fold in 40 years (Vinkers, Tijdink, and Otte 2015). Scientists make use of this type of language to increase the appeal of their publications to journal editors, reviewers and their peers, and often end up attributing superior significance to the findings than what is supported by the evidence. Discussion and conclusion sections are particularly susceptible to the use of language that changes the impression the results produce in readers (Boutron and Ravaud 2018). By exaggerating the promise of certain technologies, research areas or treatments or the certainty of models or methods, scientists can influence the expectations of the field and hinder real progress as other scientists try to build on top of exaggerated potential. Additionally, funding can get diverted and misused in research fields that don’t justify the time or monetary investment. This ultimately cheapens the importance and rigor of scientific publications. Misguided science can undermine efforts to improve current technologies or develop new avenues of research.

It is important to consider the type of audience and in this regard, scientists are probably better trained to detect and judge the validity of such exaggerations, especially if they subscribe to the principle of skepticism. Yet, we should also remember that science is becoming incredibly collaborative, interdisciplinary and at the same time highly specialized, conferring increasing importance to clarity in communication between scientists. Adding to this the huge volume of publications available and the ever-increasing number of activities scientists are expected to perform, it is hardly reasonable to assume that all scientists can be true scientific experts in all areas of knowledge that pertain to their research.

Moreover, bending the facts in favor of a good story can lead to a slippery slope as more inaccuracies and data-divorced findings and language are propagated. By engaging in and encouraging hype, whether or not the audience believes it, scientists indicate that they are willing to neglect the needs of society and the rigor of the scientific effort, undermining the trust placed in them as they break away from the principles of integrity, honesty, reliability, and benevolence.

Another side to this debate involves the concept of alarmism, which can be seen as pessimistic hyping. In the same way that many exaggerate the benefits or importance of a certain technology, it is also possible to exaggerate future risks or unknowns (Intemann 2020). In fact, ethicists have also been responsible for exaggerating the risks of technologies (Caulfield 2016). One study observed that many articles focus on the use of CRISPR for enhancement of personality and physical traits unrelated to health (Marcon et al. 2019). However, some of these concerns are conjectural and might shift the focus away from more important debates by leading society to believe that the most pressing ethical issue is designer babies, when actually this is fairly far-fetched given the current state of the art and the development of ethical frameworks to prevent misuse of new genetic technology (Committee and Others 2019).

So as to avoid hype and produce useful and enlightening discussions, it will be important to discuss risks and benefits not just in equal measure but in fair proportionality to their probability, imminence and the consequences of making a wrong judgement (Intemann 2020).

Publish or Perish

Why do researchers engage in these questionable practices when they go against so many of the principles associated with research integrity? Do scientists now lack a sense of integrity?

One of the biggest contributors is probably the current reward system, commonly referred to as the “Publish or Perish” policy, where publications and citations are the currency of the academic research world and a scientist is only as good as the number of high impact factor publications under his or her belt.

"I suspect that unconscious or dimly perceived finagling, doctoring, and massaging are rampant, endemic, and unavoidable in a profession that awards status and power for clean and unambiguous discovery." (Gould 1978)

Positive, consistent and exciting results have the highest chance of being published in high impact factor journals and scientific institutions compensate scientists for such publications. In turn, these publications bring in more funding for the universities and increase their ratings and the prestige of their researchers, bringing in even more driven researchers, funding and high impact factor publications.

The current metric-based system might work in a perfect world where journals have a high degree of quality control and scientists possess innate integrity. In the current climate we suffer from the accuracy-speed trade-off as researchers spend less time on each study. An increase in the volume of publications and their varying quality overburdens reviewers, which in turn means that more biased or over-hyped research gets past the filter and those same reviewers have less time to dedicate to their own scientific endeavors.

“When a measure becomes the target, it ceases to be a good measure” (Strathern 1997)

In order to survive in a climate of limited funding opportunities, scientists are systematically encouraged to make concessions that compromise the scientific soundness of their work and to capitalize on the system through bias, hype, peer review fraud and questionable citation practices such as self-citation.

Under the guise of (ill-measured) meritocracy and the fostering of exceptionality, innovation and impact, the current system ends up also rewarding those who engage in dishonest methods to the detriment of those who favor replicability, honesty, rigor and sincere scientific progress. In the end, the latter either can’t compete or will be less competitive for the top positions. Ultimately, this fosters a climate that promotes the selection of bad science and bad scientists. How can we expect scientists to uphold the ethical values of scientific integrity when the system they’re embedded in promotes the exact opposite? We don’t publish negative results or replication studies because they won’t be accepted by good journals. We can’t run big studies with high statistical power because graduate students need to publish papers to graduate and be competitive in the job market. The list of excuses is endless and unfortunately these are actually legitimate concerns for scientists that spend their careers trying to navigate an uncertain employment market due to the severely limited availability of permanent positions in academic research.

The perverse reward system in academic science is a fundamental problem that must be addressed, and in this case the change must come from the top, primarily from funding bodies, scientific journals and universities.

At the same time, we must also work harder at instilling in scientists a concern for these problems. Ethics and scientific integrity must not just be a passing thought in researchers’ minds or a stamp on a certificate. They should become a present daily attitude. Fostering discussions on scientific integrity and ethics in the same way we have weekly department seminars, involving active researchers and other stakeholders in the discussions and making these themes present in scientific talks are concrete actions we can take immediately. Making scientific integrity training mandatory in all levels of the higher education system including senior level positions and discussing how the lack of concern with these issues harms science will very likely help. And finally, making research integrity seminars more than just a quick rundown of who gets to be an author on a paper, why we shouldn’t commit overt scientific fraud and why we must be conscientious when performing animal studies would be welcome. The neglected topics of bias, hype and the abuse of researchers’ degrees of freedom have the potential to do a lot more harm in the long run exactly because no one (especially scientists) is paying attention.

While not classified as scientific misconduct, bias and hype heavily encroach on several principles that underlie scientific integrity and threaten the rigor and reliability of the research effort. In fact, the current reward system has normalized and even covertly encouraged such abuses. Research findings are preliminary and frequently challenged by new data and methodologies and, like the phenomena we are trying to study, often uncertain and contradictory. Smoothing out these details in the pursuit of perfectly clear results and implying there are only large effects, simple explanations, and easy solutions paints a flawed image of scientific research. More importantly, it devalues the hard work of many scientists around the world who face disappointment for months on end only to be reduced to a metric when things luckily go right. It also creates a vicious feedback loop that fosters an expectation of straightforward scientific narratives on the part of funders, scientific journals and society that ultimately leads only to more unscientific, biased research.

These issues are very complex and there are no straightforward explanations or solutions but we need to start addressing them. Society takes science very seriously and we, scientists, must hold ourselves to higher standards.

Acknowledgements

I would like to thank Prof. Michael A. Nash for his help in revising and editing this piece and Prof. Roberto Andorno and Prof. Eva De Clercq for their thought-provoking courses on the ethics of science and bioethics.

References

Begg, Colin B., and Jesse A. Berlin. 1988. “Publication Bias: A Problem in Interpreting Medical Data.” Journal of the Royal Statistical Society. Series A, 151 (3): 419.

Boutron, Isabelle, and Philippe Ravaud. 2018. “Misrepresentation and Distortion of Research in Biomedical Literature.” Proceedings of the National Academy of Sciences. https://doi.org/10.1073/pnas.1710755115.

Caulfield, Timothy. 2016. “Ethics Hype?” Hastings Center Report. https://doi.org/10.1002/hast.612.

Committee, World Health Organization Expert Advisory, and Others. 2019. “Advisory Committee on Developing Global Standards for Governance and Oversight of Human Genome Editing.” Human Genome Editing: World Health Organization.

Foundation, European Science, and All European Academies. 2017. The European Code of Conduct for Research Integrity. European Science Foundation.

Gould, S. J. 1978. “Morton’s Ranking of Races by Cranial Capacity. Unconscious Manipulation of Data May Be a Scientific Norm.” Science 200 (4341): 503–9.

Intemann, Kristen. 2020. “Understanding the Problem of ‘Hype’: Exaggeration, Values, and Trust in Science.” Canadian Journal of Philosophy, 1–16.

Kokras, N., and C. Dalla. 2014. “Sex Differences in Animal Models of Psychiatric Disorders.” British Journal of Pharmacology 171 (20): 4595–4619.

Marcon, Alessandro, Zubin Master, Vardit Ravitsky, and Timothy Caulfield. 2019. “CRISPR in the North American Popular Press.” Genetics in Medicine: Official Journal of the American College of Medical Genetics 21 (10): 2184–89.

Merton, Robert K. 1973. The Sociology of Science: Theoretical and Empirical Investigations. University of Chicago Press.

Resnik, David B. 2011. “Scientific Research and the Public Trust.” Science and Engineering Ethics 17 (3): 399–409.

Rosenthal, Robert. 1979. “The File Drawer Problem and Tolerance for Null Results.” Psychological Bulletin 86 (3): 638–41.

Sterling, Theodore D. 2017. “Publication Decisions and Their Possible Effects on Inferences Drawn from Tests of Significance—or Vice Versa.” In The Significance Test Controversy, 295–300. Routledge.

Strathern, Marilyn. 1997. “‘Improving Ratings’: Audit in the British University System.” European Review. https://doi.org/10.1017/s1062798700002660.

Vinkers, Christiaan H., Joeri K. Tijdink, and Willem M. Otte. 2015. “Use of Positive and Negative Words in Scientific PubMed Abstracts between 1974 and 2014: Retrospective Analysis.” BMJ 351 (December): h6467.

Weinbaum, Cortney, Eric Landree, Marjory Blumenthal, Tepring Piquado, and Carlos Gutierrez. 2019. “Ethics in Scientific Research: An Examination of Ethical Principles and Emerging Topics.” https://doi.org/10.7249/rr2912.

Zucker, Irving, and Annaliese K. Beery. 2010. “Males Still Dominate Animal Studies.” Nature 465 (7299): 690.
