Perceptual (but not acoustic) features predict singing voice preferences

Published in Behavioural Sciences & Psychology and Arts & Humanities

“Beauty is in the eye of the beholder”. This famous saying suggests that our preferences are subjective. When it comes to singing, however, we often feel that our preferences are based on objective criteria. But is that really the case? How much do our preferences depend on attributes of the object being evaluated, and how much do they depend on our own characteristics, such as personal history, expertise, or mood? And do we share the same preferences for some singers over others?

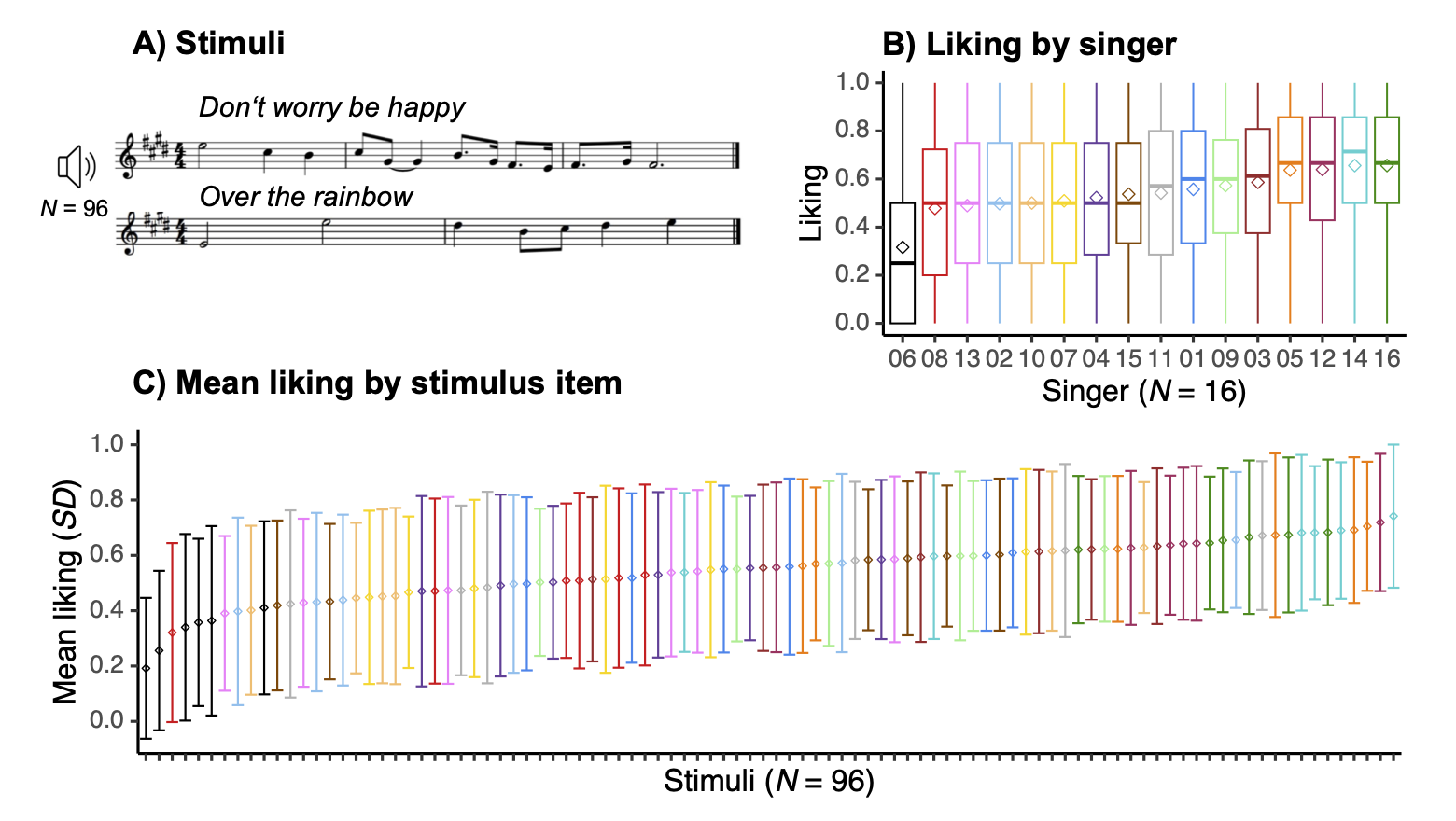

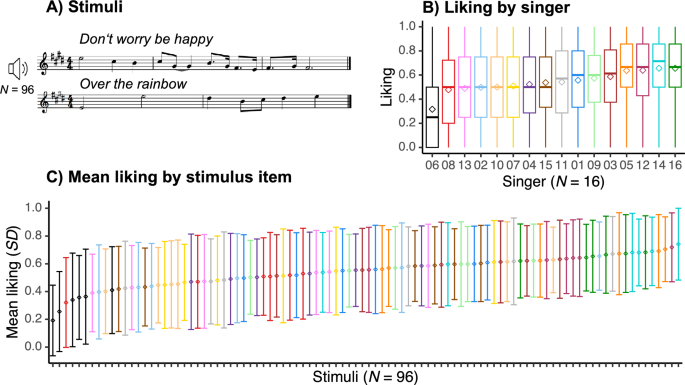

In a recent study, we quantified how much singing voice preferences are explained by attributes of the singing performances. In an online experiment, we asked 326 U.S.-based participants to rate how much they liked 96 a cappella singing performances by 16 highly trained female singers on a scale from 1 (not at all) to 9 (a lot). These performances were recorded at Berklee College of Music, one of the few music institutions with a program specifically for pop performers. As illustrated in the figure, we found a widely spread distribution of liking ratings, covering the full scale, and large individual differences in participants’ preferences. These differences are indicated by the high variability around the mean liking, and confirmed by the very low interrater agreement in liking ratings.
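To make "interrater agreement" concrete, here is a minimal sketch of one simple way to quantify it: the average Pearson correlation across all pairs of raters. This post does not say which agreement statistic the study used, so the measure, the rater ids, and the ratings below are all illustrative assumptions rather than the study's method or data.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length rating lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def mean_pairwise_agreement(ratings):
    """Average Pearson r over all pairs of raters.

    `ratings` maps a (hypothetical) rater id to that rater's liking
    ratings, listed in the same stimulus order for every rater.
    """
    pairs = list(combinations(ratings.values(), 2))
    return sum(pearson(a, b) for a, b in pairs) / len(pairs)


# Made-up ratings on the 1-9 liking scale for four stimuli (not study data).
toy_ratings = {
    "rater_a": [2, 5, 7, 9],
    "rater_b": [3, 5, 6, 8],
    "rater_c": [8, 2, 6, 3],
}
agreement = mean_pairwise_agreement(toy_ratings)
print(f"mean pairwise r = {agreement:.2f}")
```

In this toy example, two raters largely agree while the third prefers different stimuli, which pulls the average agreement down. Many raters with idiosyncratic tastes would have a similar effect on a larger scale.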

Mean preferences for some singers

Despite the large individual differences in participants’ liking ratings, clear average preferences for certain singers emerged. For instance, one can see in the figure that all performances by singer 06 (marked in black) received low average liking ratings, and that all performances by singers 14 and 16 (marked in shades of green) received high average liking ratings. Considering that such average preferences must be based (at least to some extent) on attributes of the voices themselves, we further looked into the acoustic characteristics of the stimuli.

Low prediction based on acoustic features

We investigated how much we could link the preferences described above to acoustic features of the singing voices. Intuitively, one might expect that some acoustic criteria would ground the preferences, akin to how we evaluate the correctness or pitch accuracy of a singing performance, as previously demonstrated by our team (Larrouy-Maestri et al., 2015, 2017). Thus, the next step was to quantify the acoustic characteristics of the rated performances. Our acoustic analysis initially included a core set of features which have been successfully used to describe voices and predict ratings of (speaking) voice attractiveness and perceptual ratings of pitch accuracy of singing performances. When we tried to predict the variance in liking ratings from this core set of acoustic features, we were surprised to find that remarkably little variance could be explained based on these features. To ensure that we were thoroughly describing the acoustic signal, we expanded this core set of features to include nearly 300 combined features (from the Soundgen R package, which provides numerous acoustic features to describe vocalizations, as well as from the Music Information Retrieval toolboxes Essentia and MIRToolbox). But doing so did not meaningfully increase the prediction of liking ratings. This led us to further explore the relationship between liking ratings and stimulus attributes by examining how acoustic characteristics of the stimuli are actually perceived by participants.
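"Variance explained" is commonly reported as the coefficient of determination, R². The study's actual models are not detailed in this post, so the following is only a minimal sketch: a single-predictor least-squares fit on made-up numbers, where `feature` and `liking` are hypothetical stand-ins for one acoustic feature and the mean liking per stimulus.

```python
def r_squared(x, y):
    """R^2 of an ordinary least-squares line y = intercept + slope * x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sum(
        (xi - mx) ** 2 for xi in x
    )
    intercept = my - slope * mx
    # Residual variation left after the fit vs. total variation in y.
    ss_res = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return 1 - ss_res / ss_tot


# Made-up values (not study data): one hypothetical acoustic feature
# and the mean liking rating for each of five stimuli.
feature = [0.1, 0.4, 0.5, 0.8, 0.9]
liking = [5.0, 4.0, 6.5, 4.5, 6.0]
print(f"R^2 = {r_squared(feature, liking):.2f}")
```

An R² close to 0, as in this toy example, means the feature tells us almost nothing about liking. An R² close to 1 would mean liking could be read off almost directly from the feature.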

Examining perception of the singing stimuli

We conducted a second experiment in the more controlled setting of the laboratories of the Max Planck Institute for Empirical Aesthetics. In this experiment, 42 German participants rated all 96 stimuli on 10 different bipolar perceptual scales, in addition to the liking scale. For instance, participants provided perceptual ratings of pitch accuracy (from “out of tune” to “in tune”), articulation (from “staccato” to “legato”), tempo (from “slow” to “fast”), and breathiness (from “not at all” to “a lot”), among others. The results replicated the findings described above: liking ratings were again widely spread, interrater agreement was low, and acoustic features predicted liking ratings poorly. Finally, when we included the perceptual ratings in our modeling approach, we found that perceptual ratings could explain 43 percent of the variance in liking ratings. The features that contributed the most were pitch accuracy (from “out of tune” to “in tune”), resonance (from “thin” to “full”) and attack (from “soft” to “hard”). Importantly, there were large individual differences not only in participants’ liking ratings, but also in their perceptual ratings, as indicated by the remarkably low interrater agreement on all perceptual scales.

Individual differences in singing voice perception

Exploratory analysis of the relationship between acoustic measures (e.g., pitch interval deviation, cepstral peak prominence, vibrato extent) and corresponding perceptual ratings (e.g., perceived pitch accuracy, breathiness, and amount of vibrato, respectively) for each participant revealed correlations ranging from nonexistent to moderate. In some cases, these correlations were higher than the average group correlation, suggesting that some participants were more “objective” or “acoustically sensitive” to certain voice attributes. For ratings of vibrato and breathiness, these “acoustic sensitivities” were positively associated with participants’ general musical sophistication. These results highlight the prominent role of individual differences in voice perception and suggest that these may also play a role in subsequent steps of aesthetic appreciation. Ongoing work aims to generalize our findings to other styles of singing and integrate them into the literature on (speaking) voice attractiveness.
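The per-participant analysis described above can be pictured as computing, for each listener, the correlation between an acoustic measure and that listener's corresponding perceptual ratings. The sketch below is an illustration under stated assumptions, not the study's analysis code: the participant ids, the vibrato values, and the ratings are invented, and Pearson correlation is assumed as the measure of association.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length lists."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(
        sum((a - mx) ** 2 for a in x) * sum((b - my) ** 2 for b in y)
    )


# Made-up acoustic measure per stimulus (e.g., vibrato extent; not study data).
vibrato_extent = [0.2, 0.5, 0.9, 1.4, 1.8]

# Made-up perceived-vibrato ratings from two hypothetical participants.
perceived_vibrato = {
    "p01": [1, 3, 4, 7, 9],  # ratings track the acoustic measure closely
    "p02": [5, 2, 6, 3, 5],  # ratings barely track it
}

# One "acoustic sensitivity" value per participant.
sensitivity = {
    pid: pearson(vibrato_extent, ratings)
    for pid, ratings in perceived_vibrato.items()
}
for pid, r in sensitivity.items():
    print(f"{pid}: r = {r:.2f}")
```

These per-participant values could then be related to a listener-level variable such as musical sophistication, which is the kind of association reported above for vibrato and breathiness.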

References

Larrouy-Maestri, P., Magis, D., Grabenhorst, M., & Morsomme, D. (2015). Layman versus professional musician: Who makes the better judge? PLOS ONE, 10(8), e0135394. https://doi.org/10.1371/journal.pone.0135394

Larrouy-Maestri, P., Morsomme, D., Magis, D., & Poeppel, D. (2017). Lay listeners can evaluate the pitch accuracy of operatic voices. Music Perception, 34(4), 489–495. https://doi.org/10.1525/mp.2017.34.4.489
