The EdTech Evidence Evaluation Routine

Published in Social Sciences

The global push to establish a robust research and development infrastructure within schools aligns with the efforts to scale evidence-based EdTech solutions. These solutions undergo rigorous testing, continuous improvement, and thorough evaluation within real school environments. The goal is to ensure that the EdTech products effectively meet the needs of students and educators alike.

Some countries have been more proactive in pursuing the evidence agenda than others. The US Department of Education, for example, has set clear evidence expectations in the form of the ESSA standards. However, a comprehensive review of evidence standards across educational clearinghouses revealed discrepant criteria for how “evidence” is defined and measured, leading to different conclusions and recommendations. The authors of that review give several examples of educational programs that one clearinghouse deemed evidence-based and highly recommended, while another described them as inadequate.

This is a problem because, with so many products on an open market, standards are needed to separate the wheat from the chaff. An average US district uses 150 unique EdTech tools every month, and the most popular ones are not evidence-based. Why is that?

The long list of EdTech failures has an equally long list of scapegoats. EdTech developers are blamed for churning out products that are not grounded in the principles of developmental science. Teachers are in short supply, and those who teach in public schools lack training in EdTech pedagogy, which undermines their capacity to implement EdTech as intended. Academia-industry partnerships in EdTech are complicated by disagreements over data sharing and by the different incentives of researchers and developers. Furthermore, generating EdTech evidence is hard to sustain as a business model when schools are the paying customer.

So how can we ensure that all EdTech is based on the best available science?

The EdTech industry warns: if we take a punitive approach to evidence, with mandatory certifications and quality assurance standards, we risk undermining not only EdTech innovation but also the goodwill to engage with research. The current “evidence marketplace” already has several evidence frameworks, some better known to teachers, others to parents, but all lacking globally shared EdTech quality benchmarks.

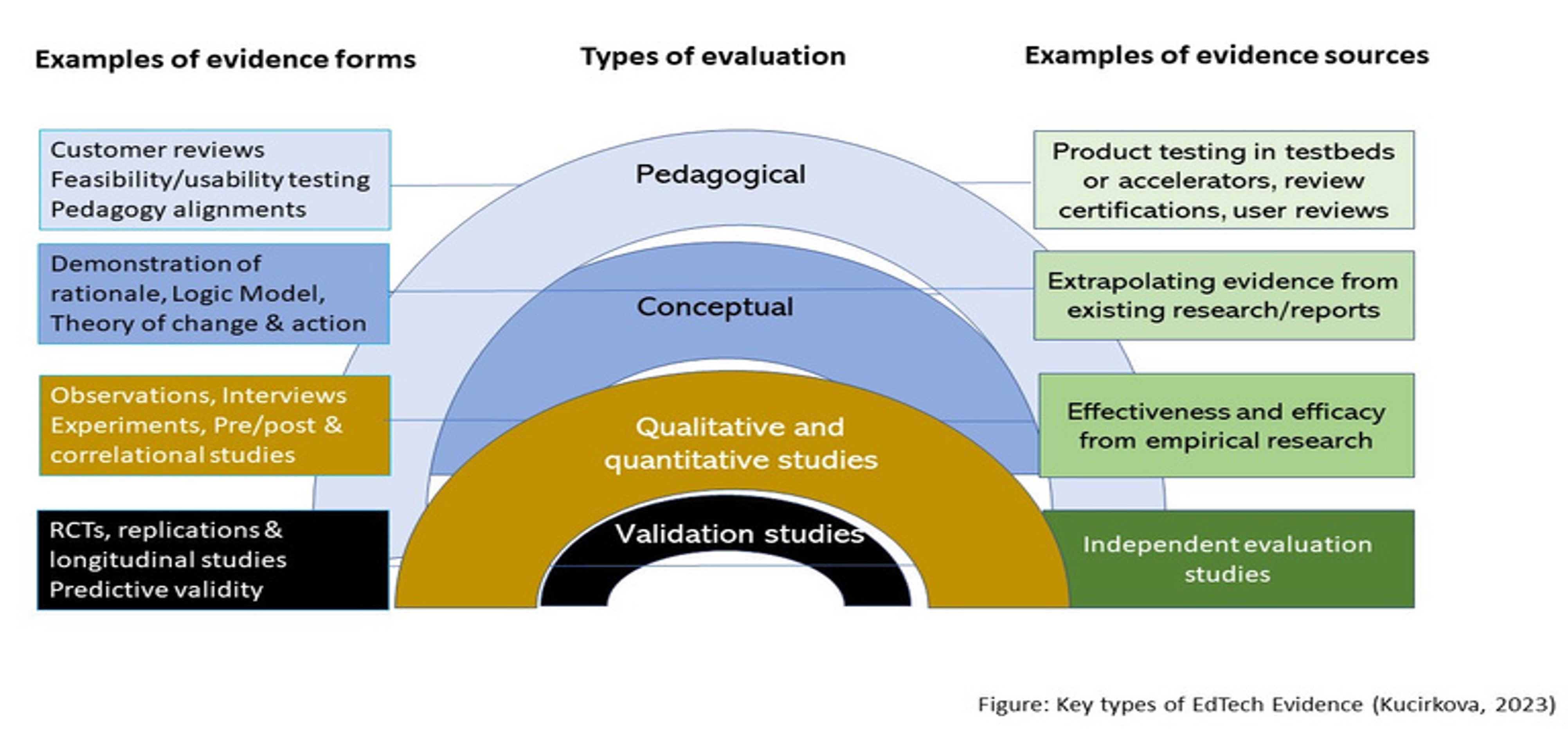

Such benchmarks should be formative and acknowledge that evidence needs are diverse. EdTech products are varied: they include apps, multiplayer learning platforms, screeners and online libraries. Evidence approaches are varied too: they include conceptual, qualitative, quantitative and validation studies. Different types of technology need different types of evidence that they “work”. For example, a randomized controlled trial (RCT) does not make sense for a reading-screener app; a validation study is more appropriate. Diversity in evidence evaluation can accelerate innovation in EdTech, provided it is grounded in scientific principles.

Our paper outlines an EdTech Evidence Evaluation Routine (EVER) based on the science of learning. The science of learning is in its “golden age” and offers some guidance for gauging EdTech evidence of positive learning impact. For example, the Four Pillars specify that EdTech should promote children’s engaged, meaningful learning with social interaction and scaffolded exploration.

EVER underscores the harmony between adaptive learning and randomized experimentation, while advocating for methodological diversity. This approach bridges the gap between the focus on controlled trials and the human-centered approach of EdTech. By blending different research methods with careful evaluation, EVER enables a comprehensive understanding of how EdTech influences learning outcomes.

The implication is that technologies from companies of any kind (start-ups or mature companies) and with any type of evidence (experimental evidence or commissioned reports) can be scientifically verified for their learning impact. An "evidence-ready" EdTech enterprise demonstrates a commitment to ongoing engagement with research and to integrating that research into its processes. This mindset is what should be nurtured in the EdTech ecosystem as we grapple with implementing "evidence" across technologies, schools and countries.
