When evidence refuses to align: making sense of biomedical waste through PNB

Published in Biomedical Research, Mathematical & Computational Engineering Applications, and Mathematics

Biomedical waste management is often approached as a matter of infrastructure, compliance, or training. Yet when one examines the evidence across different healthcare systems, a deeper challenge becomes apparent: the data themselves do not easily align.

Studies report segregation accuracy using different definitions. Some focus on training outcomes, others on occupational risks, and still others on environmental impact. Even within similar domains, thresholds and reporting standards vary widely. As a result, evidence becomes scattered, heterogeneous, and difficult to compare across settings.

This is not simply a technical issue. It is a scientific one.

When evidence cannot be meaningfully compared, patterns remain obscured. Strong systems cannot be consistently distinguished from weaker ones, and recurring gaps appear isolated rather than systemic. In such a context, the challenge is not only to generate data, but to interpret it in a way that allows comparison without oversimplification.

It was within this context that Bhadran’s PNB approach was developed and applied.

Bhadran’s PNB was developed by Renjith Seela Bhadran and presented in his article “Bhadran’s Point of Generation Segregation Theory for Behavioral Precision in Biomedical Waste Management”, published in Scientific Reports (Nature Portfolio). It was used as part of the analytical approach to interpret cross-country biomedical waste evidence characterized by significant variability.

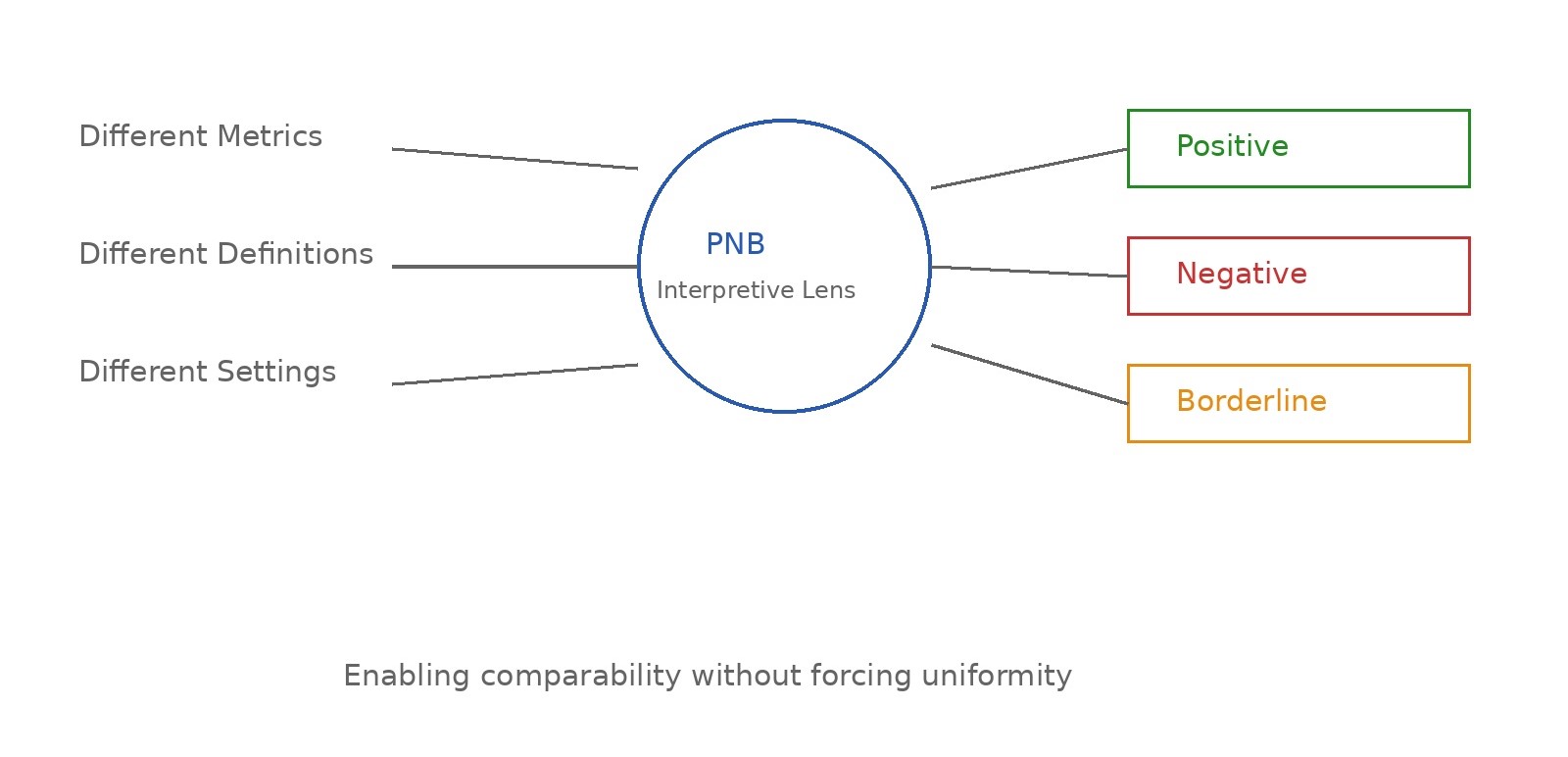

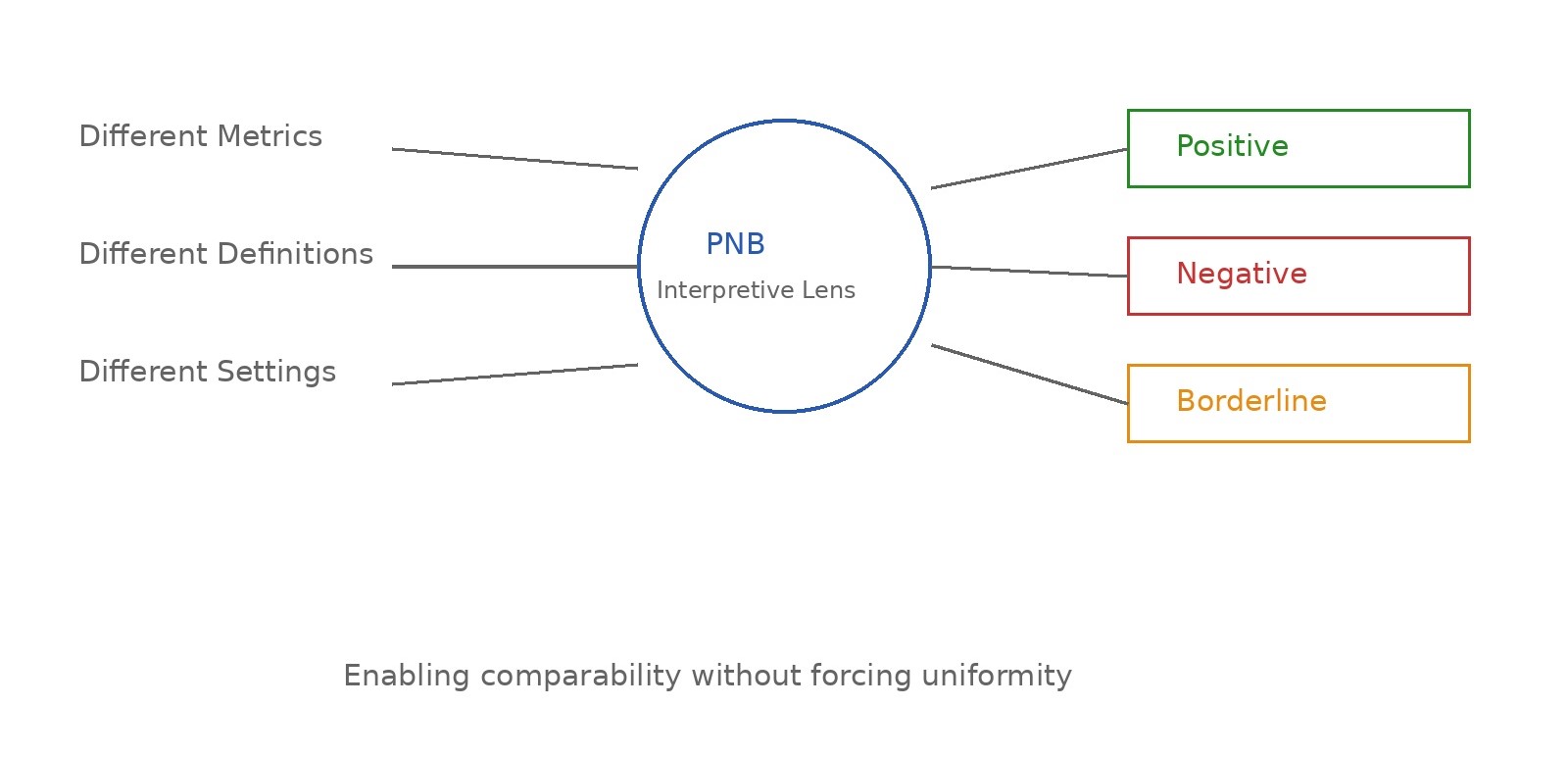

Rather than attempting to standardize inherently different datasets, the PNB approach provides a structured way to interpret them. Evidence that is diverse in form, context, and reporting is translated into three analytically consistent categories: Positive (clear strengths), Negative (evident gaps), and Borderline (areas of uncertainty).

Importantly, this is not a subjective labeling of systems, but a structured interpretive step designed to make heterogeneous evidence analytically comparable.
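As a purely illustrative sketch of what such an interpretive step could look like in code, the Python below labels each finding against the thresholds its own study reports, then tallies the labels by domain. Everything here is an assumption made for illustration: the `Finding` schema, the `adequate_at` and `deficient_at` fields, the comparison rule, and the sample values are hypothetical and are not taken from the published article, which should be consulted for the actual coding procedure.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One reported result, kept in its study's own terms (hypothetical schema)."""
    study: str
    domain: str
    value: float         # reported metric, in the study's own units
    adequate_at: float   # level the study itself treats as adequate (assumed field)
    deficient_at: float  # level the study itself treats as deficient (assumed field)


def pnb_label(finding: Finding) -> str:
    """Assign Positive, Negative, or Borderline using the study's own thresholds."""
    if finding.value >= finding.adequate_at:
        return "Positive"
    if finding.value <= finding.deficient_at:
        return "Negative"
    return "Borderline"


def tally_by_domain(findings):
    """Count labels per domain so differently reported studies can be read together."""
    counts = {}
    for finding in findings:
        counts.setdefault(finding.domain, Counter())[pnb_label(finding)] += 1
    return counts


# Made-up example values on three different scales (percent, proportion, 1-5 score).
findings = [
    Finding("Study A", "segregation", 92.0, 90.0, 70.0),
    Finding("Study B", "segregation", 0.61, 0.80, 0.65),
    Finding("Study C", "training", 3.4, 4.0, 3.0),
]
summary = tally_by_domain(findings)
```

The design choice worth noting is that no value is rescaled: each finding is judged only against the reference levels of its own study, so the original units and context are preserved while the resulting labels become comparable.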

At its core, the logic is simple: when evidence is scattered and difficult to code directly, it can still be meaningfully interpreted through a consistent evaluative lens. By doing so, variability is not removed, but organized.

This allows something that is otherwise difficult in cross-country research: comparison without forced uniformity.

When applied across multiple studies and healthcare settings, the PNB approach enables diverse findings to be read within a common analytical frame. Data originating from different resource settings, study designs, and reporting formats can be interpreted in relation to one another without losing their contextual meaning.

What begins as fragmented evidence gradually becomes structured.

And within this structure, patterns begin to emerge.

Across domains such as training effectiveness, segregation practices, and compliance behavior, a consistent observation can be made. Systems that demonstrate more stable and reliable outcomes tend to perform well at one critical point: the moment waste is generated. Conversely, where inconsistencies or risks are observed, they often trace back to that same point.

This does not arise from isolated findings, but from the convergence of patterns across multiple contexts when interpreted through a common lens.

In this sense, the role of PNB extends beyond simple categorization. It functions as a way of making complex evidence readable—linking scattered observations into a form where underlying consistencies can be identified.

Such an approach becomes particularly relevant in fields like biomedical waste management, where variability is inherent and often unavoidable. Differences in infrastructure, policy enforcement, and training environments mean that evidence will rarely be uniform. Attempting to eliminate this variability may not always be feasible.

However, interpreting it systematically is.

The implications of this are both practical and conceptual. For researchers, it provides a way to engage with heterogeneous data without discarding it. For policymakers, it offers a lens through which performance can be understood even in the absence of standardized metrics. And for healthcare systems, it highlights the importance of focusing not only on outcomes, but on the conditions under which decisions are made.

Perhaps most importantly, it reframes how evidence itself is approached.

Rather than viewing variability as a barrier, it becomes something that can be organized, interpreted, and understood.

When evidence refuses to align, the answer may not lie in forcing it to do so.

It may lie in developing ways to read it more meaningfully.

To read the full article on Bhadran's Point of Generation Segregation Theory (PGST), please visit https://doi.org/10.1038/s41598-025-32195-4
