A data success story: how Springer Nature’s Research Data Support service helped us publish our large data set

Published in Research Data

At Springer Nature, we are currently developing the ways in which we support researchers with open data services and solutions. As a result of this, we are currently not accepting any new submissions to Research Data Support from individual Springer Nature authors. Find out how we can support you with your research data.

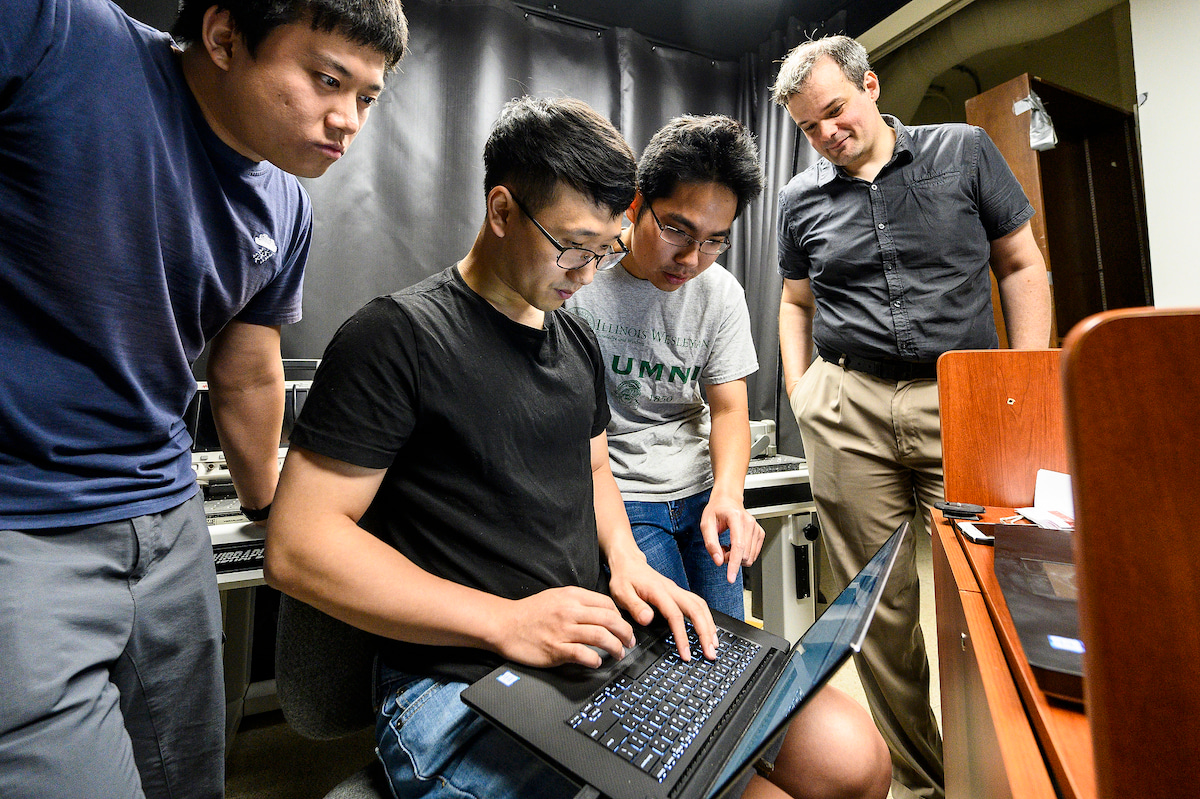

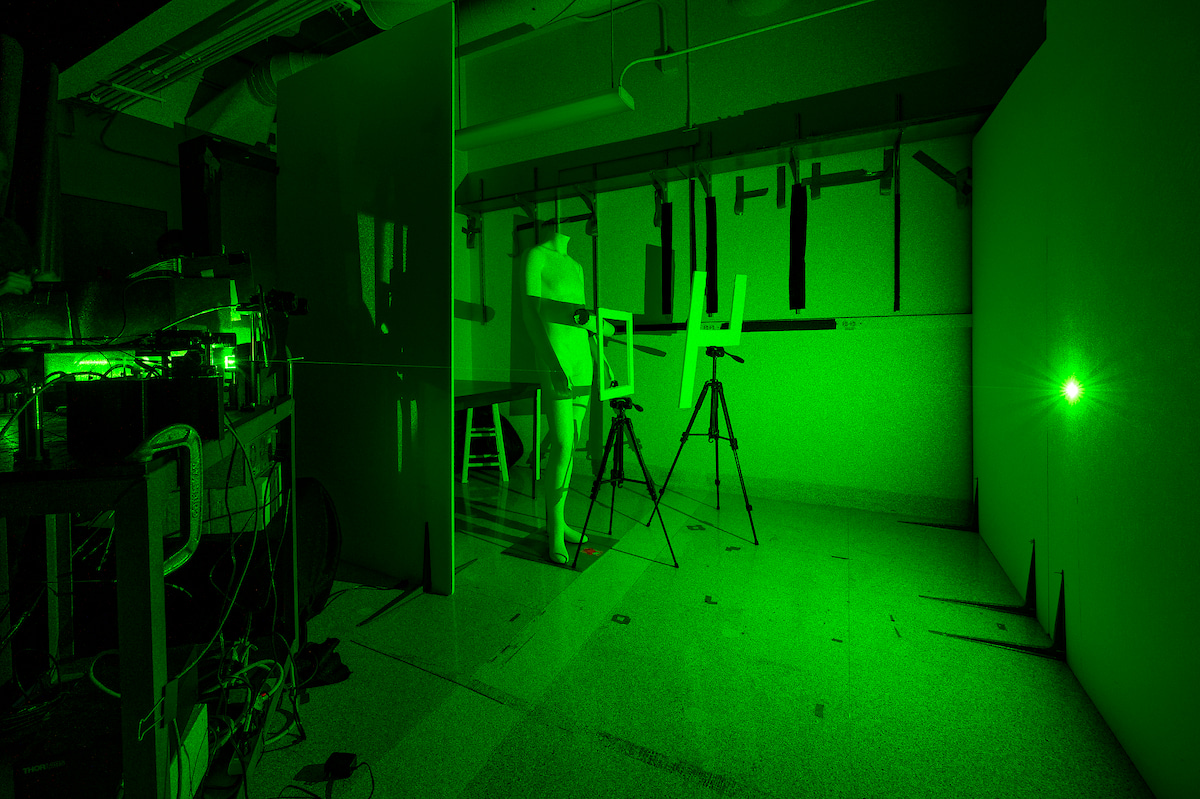

Seeing around corners

Our paper is about a new method for non-line of sight imaging, involving an algorithm. The method allows you to see around corners and reconstruct images of objects, using indirect light that has been reflected off a surface somewhere in the scene. We send short laser pulses to different points on a relay surface in the scene, and record the light from those surfaces. This produces large, five-dimensional data sets that we use to do the reconstructions. Because the data set was very large, we wanted to make sure it was deposited where it would stay available in the form that it was submitted with the paper, and in a verifiable way.

The challenge - new methods, old algorithms

We had been fighting with a phenomenon that when people compare their new methods against old algorithms, they often don't do the best job of representing the old algorithms – it is hard to do if you do not have the code and the data available. Recently, other researchers in our field uploaded and submitted their data and their reconstructions along with their paper, so anyone could make a fair comparison of what the state of the algorithm was at the time when they submitted the paper. This was really helpful to us, so we decided that we would like to do it too. It makes things a lot more reproducible – in order to get definite answers of what actually works and what doesn't, it is much better to have the data and the code available, even though that is not typically done.

Unfortunately our data sets were very large, so we couldn't just submit it as supplementary material with the paper. To help, our Nature editor referred us to Springer Nature’s Research Data Support service.

The solution – getting our data online

Using the Research Data Support service provided a way to put our data and code online, in a way that we can cite, and that other people can compare against. We are hoping that in the future when researchers write follow-up work, they will use our code and data sets in their comparisons. This way, we avoid having a lot of different comparisons and implementations, where it could become very hard to figure out which aspects of the algorithms actually do work, and which ones don't.

Making everything clearer, and supporting others in the field

There have been a lot of positive outcomes from the decision of previous researchers to publish their code and data with their paper. It makes it much easier to do real comparisons on that data. In the past, we had shared all data on request, which did lead to useful comparisons. However, it's better to have an archival published version that people can compare again. Now that we have done this, I'm expecting to see people using this code and data, and use it as the gold standard to compare against. This helps make everything much clearer – there's no question of what algorithm was compared against, and how were these results obtained.

For our paper, I was concerned that if we did not publish the actual algorithm together with the actual data that was used, people might implement their own version of the algorithm based on the description in the paper, and this might lead to different comparisons, making things unclear. At this point in time, the difference between algorithms and their performance is becoming rather subtle, so it's important people actually know what's being compared against in detail.

We hope that making the data freely available in a form that’s easily accessible without going through us directly, will allow others to develop their own algorithms based on this data. That hopefully will also help research along, because that is I think the data we have collected is amongst the largest and highest quality data sets that are out there right now. It would be great if people make use of it to come up with refined algorithms to handle the data.

Final thoughts

The reason we used the Research Data Support service was that rather than just putting it our data on our own web page, having an archival publication with the paper that is hosted independently of what happens at our group provides a baseline for everybody to compare against, and there's no way for anybody to change that. Most importantly, we wanted to have the citable data set with the publication. I was happy with the service – the curating of the data went very well. We would definitely use the Research Data Support service again, when we have a new, large data set that we hope will be very useful for lots of people, and we have claims that are really tied to that.

*****

Do you need help sharing your research data? Using the Research Data Support service is quick and simple. Find out more and get started today.

Please sign in or register for FREE

If you are a registered user on Research Communities by Springer Nature, please sign in