Deep learning based on dynamic behavioural phenotypes to evaluate the visual function of infants

Published in Bioengineering & Biotechnology

The precise coordination of an individual's sensory and behavioural systems is fundamental to survival and evolution. When one perceptual system is altered, the sensory and behavioural systems can together form a substitute modality, a process known as the individual multi-systematic adaptive process. Vision is a major sensory input, through which more than half of external information is received. Previous studies have shown some correspondence between vision and behavioural phenotypes. However, the exact dynamics of human behaviour generated by vision loss remain largely unknown.

Most of our team members are ophthalmologists, which gives us a deeper understanding of vision-related diseases. In our daily work, we meet many patients with varying levels of visual loss. We noticed that some infant patients exhibited unusual yet similar behavioural phenotypes. Because infants are at a very early stage of development, when the behavioural system remains highly sensitive and plastic, comparing behavioural patterns between healthy infants and infants with varying degrees of visual impairment offers an opportunity to examine how the behavioural system adapts in response to vision loss. Moreover, we hypothesized that the vision-behaviour correlation could serve as a reference for evaluating the visual function of infants.

Artificial intelligence (AI) has been recognized as a promising approach for image and video recognition in medicine because it performs well at information extraction. In addition, our team has developed several intelligent diagnosis and prediction systems for ophthalmic diseases. Based on this research experience, we reasoned that the outward features of the behavioural system could also be recognized by AI, and we decided to adopt deep learning for video recognition in our research.

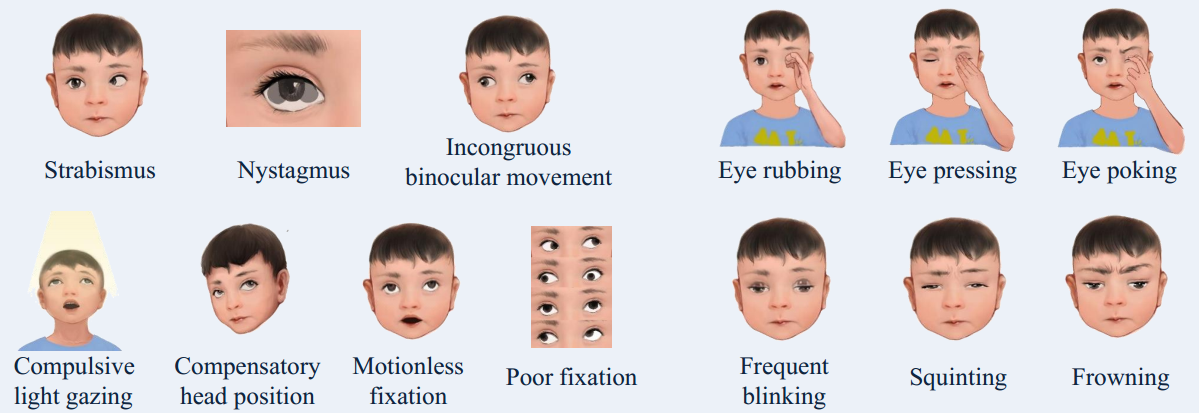

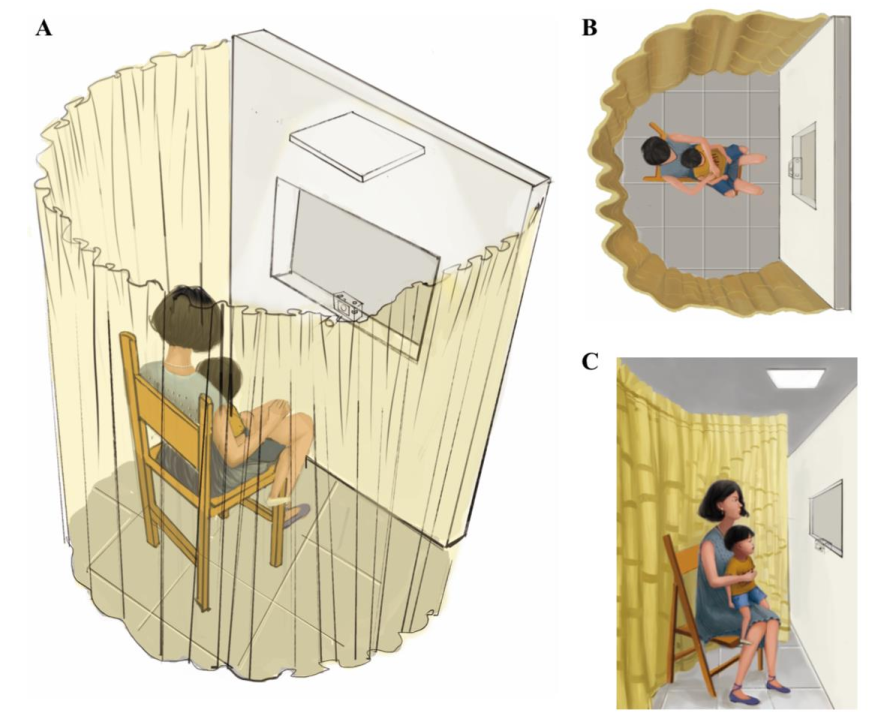

According to our study design, infants under the age of three were recruited. A standardized apparatus, scenario, and procedure were used to record all behavioural phenotypes with minimal background interference and stimulation (Fig. 1). We collected the largest set to date of behaviour videos, from 4,196 infants, together with an ophthalmic dataset of their functional and structural visual examinations, and then analysed the statistical associations between behaviours and visual impairment. We identified a total of 11 behaviours with significantly higher occurrence rates in infants with visual impairment.

Figure 1. Standardized scenario for video recording

A. The standardized apparatus consisted of a recording stage, a curtain, a chair, a light (10 candelas/m²) and a video recorder. Both the stage and the curtain were used to eliminate external interference; B. The video recorder was embedded in the middle of the stage at a height of 1 meter above the ground. The chair was fixed 0.55 meters in front of the stage, facing it, to ensure that all of the infants' actions could be fully recorded; C. For each standardized procedure, the guardian sat in the chair, holding the infant facing the stage. Each infant was given a few minutes to adapt to the new surroundings and to calm down before recording. No hints or stimulation were permitted during the process. The recording lasted for more than 5 minutes to ensure that the behavioural phenotypes could be completely and repeatedly recorded.

We also established a deep-learning algorithm (a temporal segment network) trained on the full-length videos. It could discriminate mild visual impairment from healthy behaviour (AUC, 85.2%), severe visual impairment from mild impairment (AUC, 81.9%), and various ophthalmological conditions from healthy vision (with AUCs ranging from 81.6% to 93.0%).
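For readers unfamiliar with temporal segment networks, the general idea can be sketched as follows: a long video is split into a few equal segments, one short snippet is sampled from each, every snippet is scored by the same model, and the scores are averaged into a video-level prediction. This is a minimal illustration of that general technique, not our actual implementation. The frame count, the number of segments, the averaging consensus, and the `snippet_model` function are all assumptions made for the example.

```python
import math
import random
from typing import Callable, List, Optional, Sequence


def sample_segment_indices(num_frames: int, num_segments: int,
                           rng: Optional[random.Random] = None) -> List[int]:
    """Split the video into equal segments and pick one frame index from each.

    With rng=None the centre frame of each segment is used (deterministic);
    with an rng a random frame inside each segment is drawn.
    """
    if num_segments <= 0 or num_frames < num_segments:
        raise ValueError("need at least one frame per segment")
    seg_len = num_frames / num_segments
    indices = []
    for k in range(num_segments):
        start = int(k * seg_len)
        end = max(start + 1, int((k + 1) * seg_len))  # exclusive upper bound
        if rng is None:
            indices.append((start + end - 1) // 2)
        else:
            indices.append(rng.randrange(start, end))
    return indices


def segmental_consensus(snippet_scores: Sequence[Sequence[float]]) -> List[float]:
    """Average the per-snippet class scores (one common consensus choice)."""
    n = len(snippet_scores)
    return [sum(column) / n for column in zip(*snippet_scores)]


def softmax(scores: Sequence[float]) -> List[float]:
    """Turn class scores into probabilities, shifting by the max for stability."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify_video(num_frames: int, num_segments: int,
                   snippet_model: Callable[[int], Sequence[float]],
                   rng: Optional[random.Random] = None) -> List[float]:
    """Video-level class probabilities from sparsely sampled snippets.

    snippet_model is a hypothetical stand-in for a trained network: it maps a
    frame index to raw class scores, e.g. [healthy, impaired].
    """
    indices = sample_segment_indices(num_frames, num_segments, rng)
    scores = [snippet_model(i) for i in indices]
    return softmax(segmental_consensus(scores))


# Toy stand-in model (assumption): always favours the second class slightly.
def toy_model(frame_index: int) -> List[float]:
    return [0.0, 1.0]


# 9000 frames is roughly 5 minutes at an assumed 30 frames per second.
probabilities = classify_video(9000, 3, toy_model)
```

Because only a handful of snippets is processed per video, this kind of sparse sampling keeps the cost of handling recordings several minutes long manageable while still covering the whole duration.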

Because our study population is representative of Chinese infants seen in hospitals, but not of the overall infant population in any setting, behavioural phenotypes and ophthalmic datasets collected in other countries and under other conditions are needed to extend the applicability of our approach. In future work, we will collect data from infants in more regions and improve the video recording setup. Our team aims to establish universal, intelligent visual assessment software for infants that can be used in non-medical settings to screen for visual impairment.

Written by Yifan Xiang and Haotian Lin

