Memristive neural networks perform better when they work in teams

Published in Electrical & Electronic Engineering

The ever-increasing demands of machine learning applications have been pushing researchers toward new kinds of hardware that better match this paradigm of computing. With Moore's law slowing, conventional hardware cannot keep up with the growing computing requirements of complex machine learning models, while the massive power demands of these models are unsustainable in many real-world applications, such as mobile devices or embedded systems. One of the most promising candidates to tackle these issues is memristor-based electronics: in many machine learning tasks, memristors can enable in-memory computing, resulting in time and energy savings of orders of magnitude. However, the analogue nature that makes these improvements possible also makes these systems less reliable.

So far, attempts to make memristor-based systems more accurate have focused mostly on improving device-level behaviour. It is sensible to think that with better devices, the systems built from them will perform better too. Unfortunately, with the large number of memristor technologies available, optimisation techniques are usually not universally applicable: what works for one technology may not work for another, because of either different materials or different fabrication techniques. Moreover, there are several types of non-idealities with inherent trade-offs between them. Our hope was that there might be approaches that are more technology- and non-ideality-agnostic, improving behaviour at the system level rather than the device level.

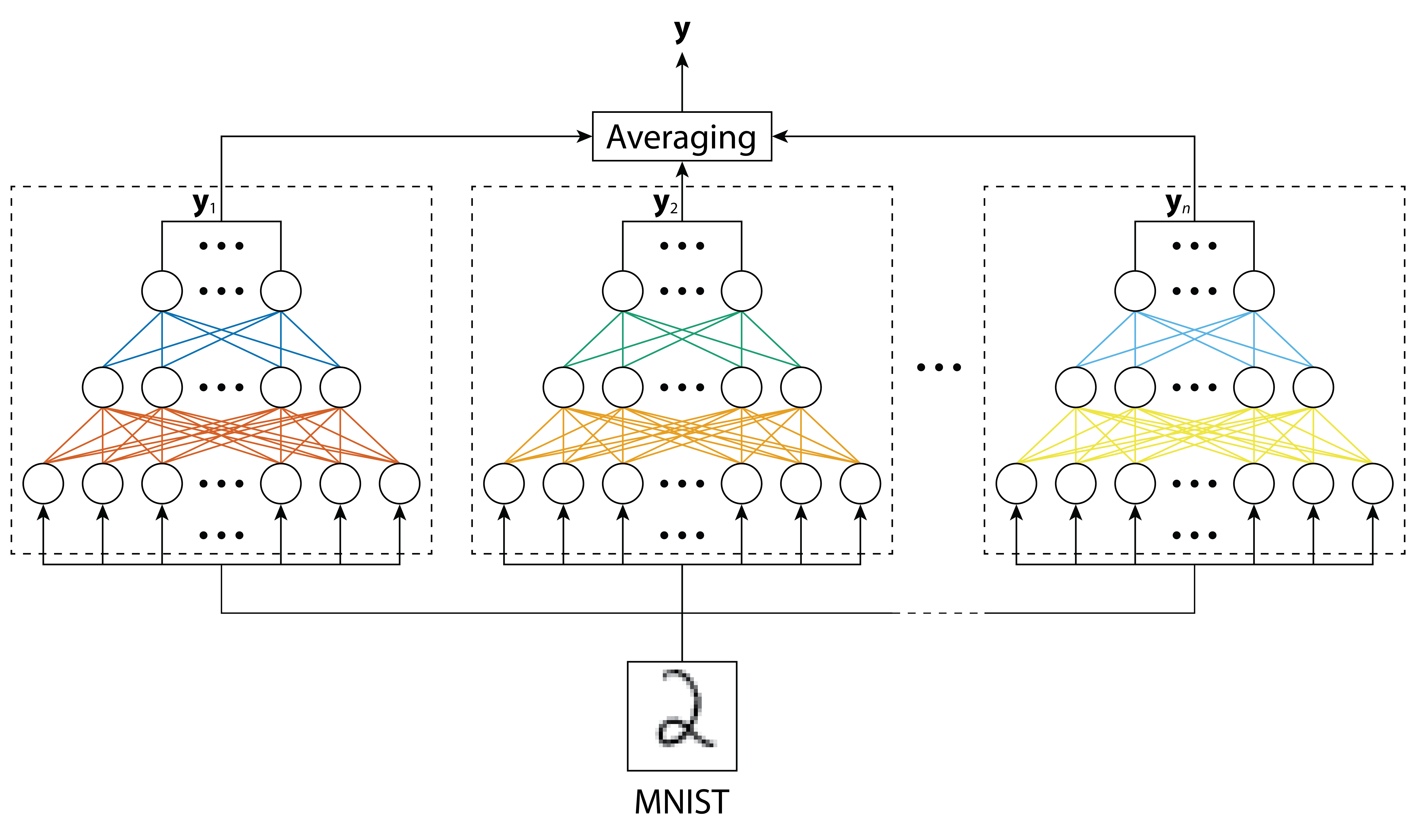

In the case of neural networks, there is one concept that is usually easy to implement in all contexts—committee machines. It is the idea that neural networks can work together by, for example, having their outputs averaged (see Fig. 1). This seemed like a perfect thing to try out with memristive neural networks; after all, their outputs are just electric currents that can be easily combined by adding them together. And it worked! Using preliminary simulations, we found that even simple averaging can significantly increase the accuracy of memristive neural networks. Importantly, when we replaced large memristive networks with committees of smaller networks (keeping the total number of memristors constant), we found that committees usually performed better.
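The averaging step itself can be illustrated with a toy sketch. This is not the simulation from our paper: the single linear layer, the `IDEAL` weights, the multiplicative Gaussian disturbance and all function names are assumptions made purely for illustration, and the sketch does not keep the total number of memristors constant. It only shows that when several independently disturbed copies of a network have their outputs averaged, the deviation from the ideal output tends to shrink.

```python
import random

# Hypothetical ideal weights of a tiny layer: 2 outputs, 3 inputs.
IDEAL = [[0.5, -0.2, 0.1],
         [-0.3, 0.4, 0.2]]


def noisy_copy(weights, rel_noise, rng):
    """Copy the weights, disturbing each one by an assumed
    multiplicative Gaussian error (a stand-in for device non-idealities)."""
    return [[w * (1.0 + rng.gauss(0.0, rel_noise)) for w in row]
            for row in weights]


def forward(weights, x):
    """One linear layer: each output is a weighted sum of the inputs."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def committee_output(members, x):
    """Ensemble averaging: arithmetic mean of the members' output vectors."""
    outputs = [forward(m, x) for m in members]
    return [sum(col) / len(outputs) for col in zip(*outputs)]


def mean_sq_error(n_members, x, rel_noise=0.2, trials=500, seed=0):
    """Average squared deviation of the committee output from the ideal
    output, estimated over many random draws of disturbed members."""
    rng = random.Random(seed)
    target = forward(IDEAL, x)
    total = 0.0
    for _ in range(trials):
        members = [noisy_copy(IDEAL, rel_noise, rng) for _ in range(n_members)]
        out = committee_output(members, x)
        total += sum((o - t) ** 2 for o, t in zip(out, target))
    return total / trials


if __name__ == "__main__":
    x = [1.0, 0.5, -1.0]
    print("single network :", mean_sq_error(1, x))
    print("committee of 5 :", mean_sq_error(5, x))
```

Under these assumptions the disturbances of different members are independent, so they partially cancel in the mean; real devices may show correlated or non-Gaussian errors that this sketch ignores.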

Even though there is elegance in simple ideas, it seemed we could achieve even higher accuracy if we employed something more complex than arithmetic (ensemble) averaging. And we tried out a lot of ideas: from weighted averaging of individual networks or outputs to making individual networks vote. The result was bittersweet: none of these techniques performed better than simple averaging, which was also the easiest one to implement in practice.
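To make the difference between these combination rules concrete, here is a minimal sketch of three of them. The function names, the example outputs and the per-network weights are hypothetical; in particular, how the weights would be chosen is not specified here, and this is not the code used in our study.

```python
from collections import Counter


def argmax(values):
    """Index of the largest entry (lowest index wins ties)."""
    return max(range(len(values)), key=values.__getitem__)


def simple_average(outputs):
    """Arithmetic mean of the members' output vectors."""
    return [sum(col) / len(outputs) for col in zip(*outputs)]


def weighted_average(outputs, weights):
    """Mean of the output vectors with one (assumed) weight per network."""
    total = sum(weights)
    return [sum(w * o for w, o in zip(weights, col)) / total
            for col in zip(*outputs)]


def majority_vote(outputs):
    """Each network votes for its own top class; the class with the
    most votes wins (lowest class index wins ties)."""
    votes = Counter(argmax(o) for o in outputs)
    return max(range(len(outputs[0])), key=lambda c: votes[c])


if __name__ == "__main__":
    # Hypothetical outputs of three networks for one two-class input.
    outputs = [[0.9, 0.1], [0.4, 0.6], [0.45, 0.55]]
    print("averaging picks class", argmax(simple_average(outputs)))
    print("voting picks class   ", majority_vote(outputs))
```

In this example the two rules disagree: averaging lets the one confident network outweigh two marginal ones, whereas voting discards how confident each network is.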

With this realisation, we moved on to a comprehensive analysis of committee machines of memristor-based neural networks that employ ensemble averaging. Thanks to joint efforts from several groups, we managed to investigate these structures using data from three different memristor technologies. This enabled us to test the most common non-idealities of memristor technologies, and to show that the committee machine method is not only technology-agnostic but also (largely) non-ideality-agnostic.

We believe this result will make ensemble averaging of memristive neural networks a very powerful tool for optimising these analogue systems. In addition to improving accuracy, the new method makes this type of analogue hardware more robust: the parallel and modular nature of committee machines makes neural network systems much easier to modify during and after their fabrication. We hope that this new way of optimising memristive neural networks will make it more feasible to employ them in practice and encourage more research into how they can be improved at the system level.

For more information, please see our paper:

Joksas D. et al. Committee machines—a universal method to deal with non-idealities in memristor-based neural networks. Nat. Commun. 11, 4273 (2020).
