Rapid image deconvolution and multiview fusion for optical microscopy

Published in Bioengineering & Biotechnology

Modern microscopy techniques generate massive amounts of data with a click of a button, yet this data deluge does not necessarily accelerate biological insight, in part because of the time-consuming effort involved in data post-processing. For example, deconvolution and multiview fusion, key post-processing steps for improving image contrast and resolution in some microscopes, can take days, in many cases drastically exceeding the time for data acquisition. As our dual-view light-sheet microscope, diSPIM, is used widely in our lab and many others, we felt an increasing need to alleviate the computational bottleneck. In late 2015, Yicong Wu and I began to work on improving the speed of data post-processing, particularly the registration and joint deconvolution of the two image stacks acquired by our diSPIM. Initially, we focused on converting our existing software so that it could run on graphics processing units (GPUs) to parallelize the computation, and this provided a 20- to 30-fold improvement over the previous CPU implementation.

At the same time, Yue Li, a PhD student in Dr. Huafeng Liu’s lab at Zhejiang University, became interested in accelerating the joint deconvolution algorithm itself. Inspired by earlier work reported by Preibisch et al. [1], she derived a variation of Richardson-Lucy (R-L) deconvolution [2]. This variation replaces the back projector b, traditionally the transpose of the PSF (or forward projector f), with an “unmatched” back projector derived from both views’ forward projectors. Yue found that using such an unmatched back projector sped up deconvolution three-fold compared to traditional joint deconvolution.

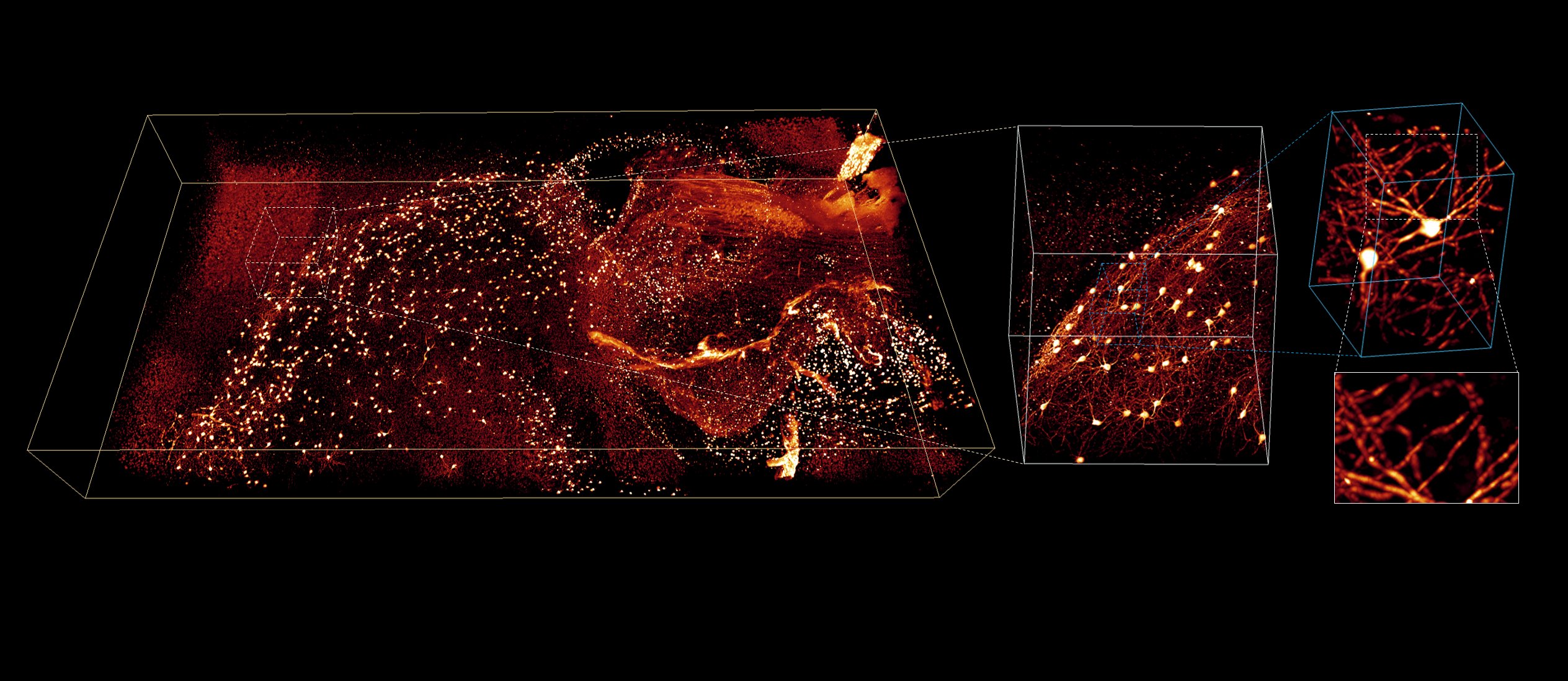
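To make the roles of f and b concrete, here is a minimal single-view, 1D sketch of the R-L update in NumPy. It is an illustration under stated assumptions, not our diSPIM software: the function name `richardson_lucy`, the toy Gaussian PSF and the two-spike test object are all hypothetical, and the demo uses only the traditional back projector (the flipped PSF). An unmatched back projector would simply be passed in as `b`.

```python
import numpy as np

def richardson_lucy(image, f, b=None, n_iter=50, eps=1e-12):
    """Toy 1D Richardson-Lucy deconvolution.

    image : blurred, non-negative measurement
    f     : forward projector (PSF), odd length, sums to 1
    b     : back projector; None selects the traditional choice,
            the transpose of f (the flipped PSF)
    """
    if b is None:
        b = f[::-1]  # transpose of the PSF
    # Start from a flat estimate carrying the mean intensity of the data.
    estimate = np.full(image.shape, image.mean(), dtype=float)
    for _ in range(n_iter):
        blurred = np.convolve(estimate, f, mode="same")   # forward projection
        ratio = image / (blurred + eps)                   # data / prediction
        estimate = estimate * np.convolve(ratio, b, mode="same")  # back projection
    return estimate

# Hypothetical test data: two point sources blurred by a Gaussian PSF.
x = np.arange(-8, 9)
psf = np.exp(-x**2 / (2.0 * 2.0**2))
psf /= psf.sum()

truth = np.zeros(64)
truth[20], truth[28] = 1.0, 0.6
image = np.convolve(truth, psf, mode="same")

estimate = richardson_lucy(image, psf)
print("error of blurred image:", np.linalg.norm(image - truth))
print("error after R-L:       ", np.linalg.norm(estimate - truth))
```

The only place the back projector enters is the final convolution in the loop, which is why swapping b changes the convergence speed without changing the structure of the algorithm.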

With this initial finding, we began to broadly explore “unmatched” back projectors. Working with our collaborator Dr. Patrick La Riviere at the University of Chicago, we realized that the concept of an “unmatched” back projector had been used in a different context in medical image reconstruction [3]. Patrick showed that the number of iterations in deconvolution can be greatly reduced if b is chosen so that |FT(b)|×|FT(f)| approaches a constant, where FT is the Fourier transform. We then derived a limiting case: combining a Wiener filter kernel with a low-pass Butterworth filter (the Wiener-Butterworth or WB filter) allowed deconvolution with only one iteration while producing images almost identical to those obtained with 10 iterations of traditional R-L deconvolution. While our initial motivation focused on processing diSPIM data, we were delighted to see that this method also worked well for many other microscopes, including conventional single-view microscopes, quadruple-view microscopes, and even microscopes with a spatially varying PSF. We later combined these methods with the improved GPU-based registration and extended them to much larger, TB-scale, cleared-tissue volumes, enabling multiview fusions that would not have been practical with our previous software (see example Video 1).
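The criterion on |FT(b)|×|FT(f)| can be checked numerically. The sketch below builds a WB-style back projector in the Fourier domain as a Wiener filter multiplied by a Butterworth low-pass, and compares the product |FT(b)|×|FT(f)| with that of the traditional back projector. Everything specific here is an assumption made for illustration: the 1D Gaussian PSF, the helper names `gaussian_psf` and `wb_back_projector_ft`, and the values of `alpha`, `cutoff` and `order` are not the settings used in our work.

```python
import numpy as np

def gaussian_psf(n=64, sigma=2.0):
    """Hypothetical 1D Gaussian PSF, centred at index 0 with wrap-around."""
    idx = np.arange(n)
    d = np.minimum(idx, n - idx)          # circular distance from index 0
    psf = np.exp(-d**2 / (2.0 * sigma**2))
    return psf / psf.sum()

def wb_back_projector_ft(f, alpha=1e-3, cutoff=0.15, order=10):
    """Fourier transform of a WB-style back projector for the PSF f.

    alpha  : Wiener regularisation constant (illustrative value)
    cutoff : Butterworth cutoff in cycles per sample (illustrative value)
    order  : Butterworth order (illustrative value)
    """
    F = np.fft.fft(f)
    wiener = np.conj(F) / (np.abs(F) ** 2 + alpha)
    freqs = np.abs(np.fft.fftfreq(f.size))
    butterworth = 1.0 / np.sqrt(1.0 + (freqs / cutoff) ** (2 * order))
    return wiener * butterworth

f = gaussian_psf()
F = np.fft.fft(f)
freqs = np.abs(np.fft.fftfreq(f.size))
passband = freqs <= 0.1                   # low frequencies, well below the cutoff

# Traditional back projector: transpose of f, whose FT is conj(F) for real f.
traditional_product = np.abs(np.conj(F)) * np.abs(F)
# WB-style back projector.
wb_product = np.abs(wb_back_projector_ft(f)) * np.abs(F)

print("traditional, min over passband:", traditional_product[passband].min())
print("WB-style,    min over passband:", wb_product[passband].min())
```

With the traditional back projector the product equals |FT(f)|², which falls off quickly with frequency. The Wiener term divides that fall-off out wherever |FT(f)|² is well above `alpha`, so the product stays close to a constant across the passband, and the Butterworth term rolls it off beyond the cutoff.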

Video 1 | 4-color embryonic mouse intestine (2.1 × 2.5 × 1.5 mm³) reconstructed from the cleared-tissue diSPIM data.

In the case of a spatially varying PSF, the WB filter's reduction in the number of iterations was helpful but still did not make deconvolution practical for routine use: each iteration still required hundreds of 3D convolution operations, so the overall post-processing time far exceeded the data acquisition time (hours for deconvolution vs. seconds or minutes for acquisition). To obtain further acceleration, we turned to deep learning. We initially tried training a U-net to perform spatially varying deconvolution but found that it did not work well due to our limited GPU memory. Instead, we explored other network architectures with smaller memory footprints, ultimately creating ‘DenseDeconNet’, based on a densely connected network [4]. Compared to WB deconvolution, ‘DenseDeconNet’ achieves a 50- to 160-fold speed-up (500- to 2400-fold speed-up over traditional deconvolution), showing the potential of deep learning for future research in fast image restoration.

[1] Preibisch, S. et al. Efficient Bayesian-based multiview deconvolution. Nat. Methods 11, 645–648 (2014).

[2] Hudson, H. M. & Larkin, R. S. Accelerated image reconstruction using ordered subsets of projection data. IEEE Trans. Med. Imaging 13, 601–609 (1994).

[3] Zeng, G. L. & Gullberg, G. T. Unmatched projector/backprojector pairs in an iterative reconstruction algorithm. IEEE Trans. Med. Imaging 19, 548–555 (2000).

[4] Huang, G., Liu, Z., van der Maaten, L. & Weinberger, K. Q. Densely Connected Convolutional Networks. In IEEE Conference on Computer Vision and Pattern Recognition 4700–4708 (2017).

