Video Games As Learning Tools—Fact or Fiction?

Published in Neuroscience

Video games don’t have the best reputation. They’re violent. They’re ridiculously addictive. They hog precious time that could be put to better use.

Yet despite these (questionably valid) arguments, there’s been a resurgence of interest in exploring video games as valuable learning tools. The traits that make these digital obsessions alluring, proponents argue, could also be harnessed to nurture young minds.

To be clear, using games as teaching tools is nothing new. The Oregon Trail, released in the 1970s, taught generations of youngsters about the hardships of pioneer life. But these days the focus isn’t necessarily on games designed for knowledge acquisition in the classroom.

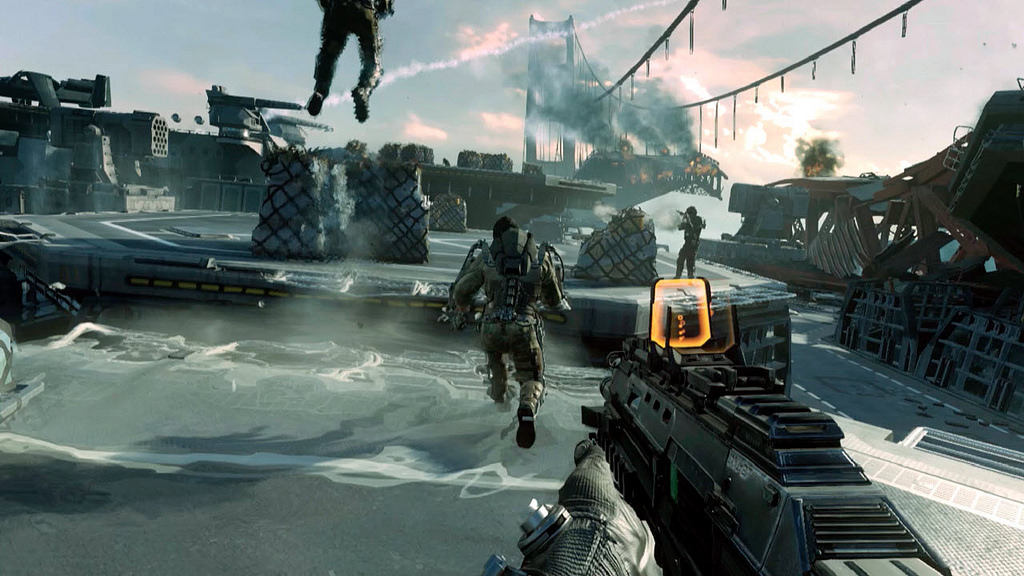

Rather, neuroscientists are casting a broader net but asking a very specific question: can commercial hits such as the physics-based Portal or first-person shooters like Call of Duty promote cognitive traits that transfer to real life?

For example, could playing these off-the-shelf games nurture attention, hone reflexes or even boost memory—general cognitive skills—that the player can go on and use for other things in life?

All About Plasticity

To Dr. Adam Gazzaley, a professor at UC San Francisco who designs games that combat age-related memory decline, gaming has the potential to fundamentally improve how we process information.

The bottom line, says Gazzaley, is to harness the brain’s ability to adapt to stimuli: its plasticity.

Back in 2013, his team demonstrated how a (scientifically) well-designed game, NeuroRacer, could boost older adults’ multitasking skill to that of a twenty-year-old. The training was transferable, in that the participants also experienced improved working memory and attention, with the gains lasting at least half a year after the initial gaming. What’s more, during the game the participants showed increased recruitment of their prefrontal cortex, a brain region involved in higher thinking, suggesting that the game had fundamentally altered information processing.

NeuroRacer was custom designed, rather than off-the-shelf. It was therapeutic, rather than educational. But to Gazzaley, the study provided a proof of concept that games could trigger plasticity widely in the brain, influencing a whole suite of cognitive tools (reasoning, inhibition, working memory) rather than a single domain.

It’s an extraordinarily bold claim. Evidence is building, though it is not quite there yet.

Learning to Learn

According to Dr. Daphne Bavelier, a cognitive neuroscientist at the University of Geneva who studies the effect of fast-paced action games on brain plasticity, the field is gaining steam, and for good reason.

In a chance discovery, Bavelier noticed that one of her undergraduate students was extraordinarily good at the computerized attention tasks used in their studies. As it turns out, he regularly played first- and third-person shooter games, such as Call of Duty and Medal of Honor.

Could playing such “mind-numbing” games actually have a profound impact on attention control—the ability to focus?

In a series of studies, Bavelier and others found that the benefits associated with action games ranged all the way from low-level perception to higher-level cognitive flexibility. For example, her team found that 5 to 15 hours of gameplay every week promoted better vision—and the ability to pick out detail in a cluttered environment.

Gamers also seem to be better at keeping track of multiple objects in a computerized test: non-gamers can manage three objects, whereas gamers can track twice as many. What’s more, on average they’re better at multitasking in general, increasing speed while maintaining accuracy when faced with multiple tasks. This suggests that the general trope of video games causing attention problems may not hold.

In another study, Bavelier tested a group of participants on their ability to mentally manipulate 3D figures. It’s a difficult task that probes spatial cognition, an essential skill for many math and engineering applications.

The team then asked the participants to play video games for 10 hours, distributed in 40-minute sessions, over a period of two weeks. At the post-test, their performance on the computerized task had improved significantly, and the benefit was still measurable five months later.

What could be driving these positive effects? According to Bavelier, games could be teaching players how to learn. Specifically, gamers don’t necessarily perform better when confronted with a new task, but show a much steeper learning curve compared to non-gamers, at least for certain motor and perceptual skills. In a way, games provide a “template” that players can rely on for grasping similar tasks in the future.

Not Quite Ready

But it’s not all roses in the neuroscience of gaming.

A 2013 randomized controlled study found that improvements in game scores for children with low levels of working memory didn’t extend to other skills, such as math, reading, writing or following instructions in the classroom. Working memory is the ability to keep information in mind to help us problem solve—for example, identifying a pair of rhyming words in short spoken poems. The study concludes that the training only helped performance on similar tasks, likely because of repeated practice.

(No one has yet determined whether using the kid-friendly Angry Birds or Minecraft works better than working memory training games per se...we'll have to wait and see!)

These contrasting results could be due to differences in study design. According to Dr. Martha Farah, a cognitive neuroscientist at the University of Pennsylvania, when testing the benefits of gaming it’s critical to focus on the nature of the game, the targeted user population, and the ability that the game is actively trying to improve. While first-person shooters may not help grade-school children (and may even harm them), they do seem to benefit college-aged young adults.

It’s also unclear whether gaming is the most cost-effective way to improve cognition. After all, if you’re playing a game, you’re not spending that time reading, writing, going for a run or interacting with friends—all of which have a positive effect on the brain.

Then there are the side effects. Gaming can go too far, leading to symptoms similar to addiction. But Bavelier stresses that moderation is key: studies have found that distributed gaming in small doses (30 minutes a day, 4 or 5 days a week for 12 weeks) shows the strongest and longest-lasting effects. There’s also the concern that violent video games may instigate violent behavior in real life, a controversial claim, but not without merit.

But that’s still mostly anecdotes. A smattering of studies has begun to reveal a link between gameplay and better school performance and social skills, but in all, the data is not quite there (yet).

So what’s the verdict? Are video games efficient learning tools?

As Bavelier puts it: we shouldn’t turn our back on the problems but we should leverage the media for what it has to offer—a doorway to relaxation, a medium for fun, and a preparation for future learning.
