Bringing Ancient Art to Life: Animating Shanshui Art with AI and Perlin Noise

Published in Chemistry, Computational Sciences, and Plant Science

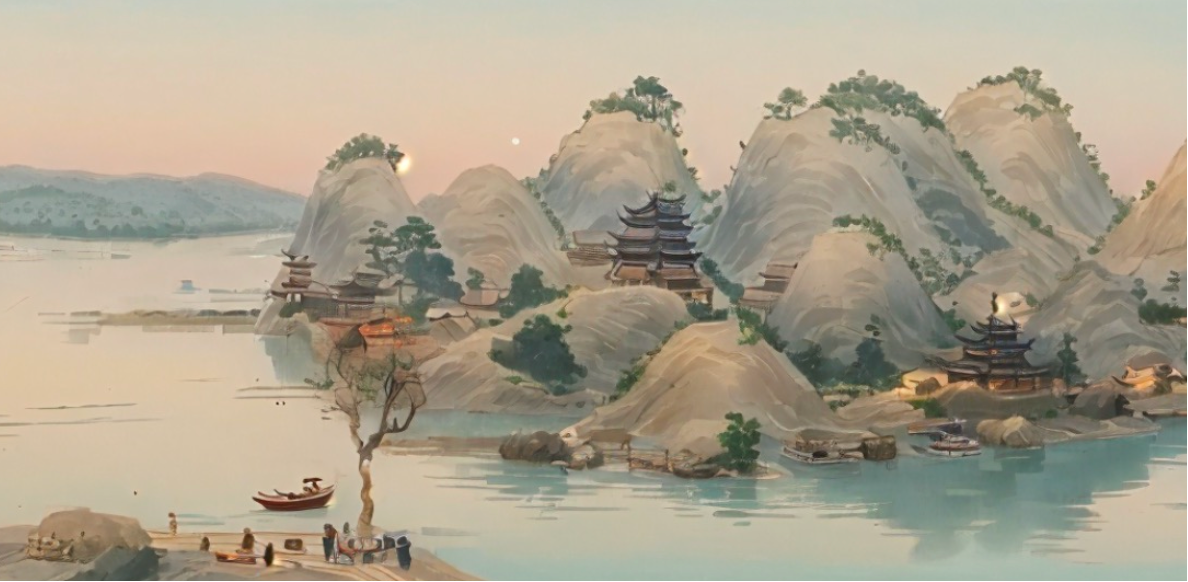

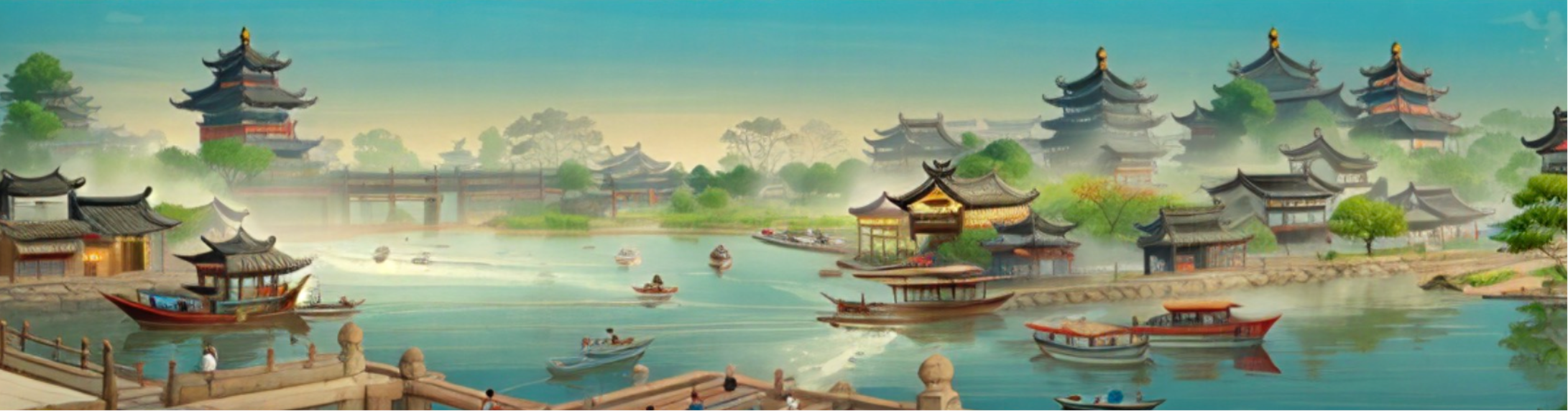

In our recent paper, “Generative AI Shanshui Animation Enhancement using Perlin Noise and Diffusion Models,” we set out to use modern generative AI to animate classical Shanshui (traditional Chinese landscape) painting without losing its soul.

The Challenge: Preserving Art in the Age of AI

Generative AI has made incredible strides in image and video synthesis, but traditional art forms like Shanshui painting remain a tough nut to crack. The main hurdles are:

- Limited training data: There aren’t enough high-quality, digitized Shanshui paintings to train a model from scratch.

- Aesthetic complexity: Shanshui isn’t just about shapes; it’s about composition, brushstroke style, mood, and cultural nuance.

Simply fine-tuning a diffusion model on a few Shanshui images wasn’t enough. We needed a way to guide the AI to understand the structure and spirit of the art, not just mimic it.

Our Approach: A Hybrid Creative Pipeline

We built a modular system that combines several AI techniques into a coherent creative workflow:

1. Generating the Skeleton with Perlin Noise

Instead of starting from pure random noise or random latent vectors, we used Perlin Noise, a classic computer graphics algorithm, to generate the foundational “skeleton” of the landscape. Perlin Noise produces smooth, continuous variations that mimic the organic flow of ink and brushwork, so mountains, ridges, and water paths emerge in a way that already feels artistic rather than algorithmic.
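
The post does not give the authors' noise parameters. The sketch below shows classic 2D gradient (Perlin) noise summed over several octaves (fractal Brownian motion) and mapped to a [0, 1] height map, which is one plausible way to produce such a landscape skeleton. The seed, `scale`, `octaves`, and `persistence` values and all function names are illustrative assumptions, not the paper's settings.

```python
import math
import random


def make_permutation(seed: int) -> list[int]:
    """Shuffle 0..255 with a fixed seed and repeat it so index lookups never overflow."""
    rng = random.Random(seed)
    p = list(range(256))
    rng.shuffle(p)
    return p + p


def fade(t: float) -> float:
    """Perlin's quintic easing curve 6t^5 - 15t^4 + 10t^3 (smooth at lattice edges)."""
    return t * t * t * (t * (t * 6 - 15) + 10)


def lerp(a: float, b: float, t: float) -> float:
    return a + t * (b - a)


def grad(h: int, x: float, y: float) -> float:
    """Dot product of (x, y) with one of four diagonal gradients picked by the hash."""
    u = x if (h & 1) == 0 else -x
    v = y if (h & 2) == 0 else -y
    return u + v


def perlin(x: float, y: float, perm: list[int]) -> float:
    """Classic 2D gradient noise; it is exactly 0 at integer lattice points."""
    xi, yi = math.floor(x) & 255, math.floor(y) & 255
    xf, yf = x - math.floor(x), y - math.floor(y)
    u, v = fade(xf), fade(yf)
    # Hash the four surrounding lattice corners.
    aa = perm[perm[xi] + yi]
    ab = perm[perm[xi] + yi + 1]
    ba = perm[perm[xi + 1] + yi]
    bb = perm[perm[xi + 1] + yi + 1]
    # Blend the corner contributions along x, then along y.
    x1 = lerp(grad(aa, xf, yf), grad(ba, xf - 1, yf), u)
    x2 = lerp(grad(ab, xf, yf - 1), grad(bb, xf - 1, yf - 1), u)
    return lerp(x1, x2, v)


def fractal_noise(x, y, perm, octaves=4, persistence=0.5, lacunarity=2.0):
    """Sum octaves of Perlin noise (fBm), normalised by the total amplitude."""
    total, amplitude, frequency, norm = 0.0, 1.0, 1.0, 0.0
    for _ in range(octaves):
        total += amplitude * perlin(x * frequency, y * frequency, perm)
        norm += amplitude
        amplitude *= persistence  # finer octaves contribute less
        frequency *= lacunarity   # ...but add higher-frequency detail
    return total / norm


def heightmap(width, height, scale=0.05, seed=0, octaves=4):
    """Return a height x width grid of values in [0, 1] (hypothetical skeleton format)."""
    perm = make_permutation(seed)
    grid = []
    for row in range(height):
        line = []
        for col in range(width):
            n = fractal_noise(col * scale, row * scale, perm, octaves)
            line.append(min(1.0, max(0.0, (n + 1.0) / 2.0)))  # map [-1, 1] to [0, 1]
        grid.append(line)
    return grid
```

Lower `scale` values give broader mountain masses, while extra octaves layer finer, irregular ridge detail on top of those broad forms.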

2. Guiding Diffusion with ControlNet and GPT-4

We then used Stable Diffusion paired with ControlNet to “fill in” the skeleton with style, color, and detail. ControlNet ensured the generated structure stayed true to the original sketch, while GPT-4 helped generate rich, descriptive prompts that captured the essence of Shanshui—terms like “misty mountains,” “flowing river,” “distant pine trees,” and “soft ink wash.”

This prompt engineering step was crucial. It allowed us to steer the diffusion model toward artistic authenticity without needing thousands of training examples.
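
The post does not say how the Perlin skeleton is turned into a ControlNet condition or how GPT-4's descriptors are assembled into a prompt. A minimal sketch, assuming the height map is converted into a white-on-black contour image (the kind of line input that edge- or scribble-style ControlNets accept) and that the descriptors are simply comma-joined after a subject phrase. `contour_map`, `build_prompt`, the `levels` parameter, and the default subject phrase are hypothetical.

```python
def contour_map(grid, levels=8):
    """Turn a [0, 1] height map into a 0/255 line image of contour boundaries.

    A pixel becomes a line (255) when its quantised height level differs from
    its right or lower neighbour; everything else stays background (0).
    """
    h, w = len(grid), len(grid[0])
    q = [[min(levels - 1, int(v * levels)) for v in row] for row in grid]
    out = [[0] * w for _ in range(h)]
    for r in range(h):
        for c in range(w):
            right = c + 1 < w and q[r][c] != q[r][c + 1]
            down = r + 1 < h and q[r][c] != q[r + 1][c]
            if right or down:
                out[r][c] = 255
    return out


def build_prompt(descriptors, subject="traditional Chinese Shanshui landscape painting"):
    """Join a subject phrase and descriptor terms (e.g. from GPT-4), dropping duplicates."""
    seen, terms = set(), []
    for term in [subject, *descriptors]:
        key = term.strip().lower()
        if key and key not in seen:
            seen.add(key)
            terms.append(term.strip())
    return ", ".join(terms)


if __name__ == "__main__":
    prompt = build_prompt(["misty mountains", "flowing river", "distant pine trees", "soft ink wash"])
    print(prompt)
```

In Hugging Face diffusers, for instance, the contour image and the prompt would be passed as the `image` and `prompt` arguments of `StableDiffusionControlNetPipeline`; the post does not confirm that this is the authors' tooling.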

3. From Image to Animation with AnimateDiff

Here’s where the magic happens: turning a static painting into a living animation. We developed an Image-to-Video (I2V) Encoder that prepares the generated landscape for AnimateDiff, a diffusion-based video generation model. By introducing controlled noise and temporal dynamics, we created smooth, coherent motion—clouds drifting, water flowing, leaves rustling—all while preserving the painting’s style.
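
The internals of the I2V Encoder are not described here. One common way to start video diffusion from a still image is to encode it to a latent x_0 and forward-diffuse it to an intermediate step, x_t = sqrt(a_t) * x_0 + sqrt(1 - a_t) * eps, where a_t is the cumulative noise-schedule product, using noise that is partly shared across frames so every frame starts close to the painting and to its neighbours. The sketch assumes that scheme; the shared/per-frame mixing ratio and the function name are hypothetical, and the latent is a flat list rather than a real VAE tensor.

```python
import math
import random


def noisy_frame_latents(latent, num_frames, alpha_bar, shared_ratio=0.5, seed=0):
    """Forward-diffuse one image latent into `num_frames` starting latents.

    latent:       flat list of floats (the encoded still image, x_0)
    alpha_bar:    cumulative schedule product at the chosen step, in [0, 1];
                  near 1 keeps more of the painting, near 0 allows more motion
    shared_ratio: rho in [0, 1], the noise correlation between any two frames
    """
    if not 0.0 <= alpha_bar <= 1.0 or not 0.0 <= shared_ratio <= 1.0:
        raise ValueError("alpha_bar and shared_ratio must lie in [0, 1]")
    rng = random.Random(seed)
    shared = [rng.gauss(0.0, 1.0) for _ in latent]  # noise common to all frames
    signal, noise_scale = math.sqrt(alpha_bar), math.sqrt(1.0 - alpha_bar)
    a, b = math.sqrt(shared_ratio), math.sqrt(1.0 - shared_ratio)
    frames = []
    for _ in range(num_frames):
        frame = []
        for x0, s in zip(latent, shared):
            # Unit-variance noise whose correlation across frames equals shared_ratio.
            eps = a * s + b * rng.gauss(0.0, 1.0)
            frame.append(signal * x0 + noise_scale * eps)
        frames.append(frame)
    return frames
```

Lowering `alpha_bar` gives the video model more freedom to move clouds and water at the cost of fidelity to the source painting; raising `shared_ratio` trades motion for temporal stability.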

4. Refining with Textual Inversion and LoRA

To further enhance quality, we used Textual Inversion to teach the model what not to generate (e.g., “blurry,” “oversaturated”), and experimented with LoRA fine-tuning to adapt the model more closely to Shanshui aesthetics. Interestingly, we found that a well-designed Perlin Noise backbone often outperformed LoRA in maintaining structural integrity and stylistic purity.
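
The post does not detail how the negative embeddings are applied or which LoRA rank was used. The sketch shows the two standard mechanisms under those assumptions: classifier-free guidance in which the negative prompt (or a negative Textual Inversion embedding) replaces the unconditional branch, eps_hat = eps_neg + s * (eps_pos - eps_neg), which pushes each denoising step away from the negative concept; and a LoRA update W' = W + (alpha / r) * B * A, in which only the small matrices A and B are trained. Values are plain lists, and the function names are illustrative.

```python
def guided_noise(eps_pos, eps_neg, scale=7.5):
    """Classifier-free guidance with the negative condition as the 'unconditional' branch."""
    return [n + scale * (p - n) for p, n in zip(eps_pos, eps_neg)]


def matmul(X, Y):
    """Plain-list matrix product X @ Y."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_merge(W, A, B, alpha):
    """Return W + (alpha / r) * B @ A, where B is d x r and A is r x k."""
    r = len(A)
    delta = matmul(B, A)
    return [[w + (alpha / r) * d for w, d in zip(wr, dr)] for wr, dr in zip(W, delta)]


def lora_trainable_params(d, k, r):
    """(full fine-tuning parameters, LoRA parameters) for one d x k weight matrix."""
    return d * k, r * (d + k)
```

For a hypothetical 768 × 768 projection with rank r = 4, `lora_trainable_params(768, 768, 4)` gives (589824, 6144): only about 1% of the weights are trained. Because LoRA's B matrix is conventionally initialised to zero, the merged weights start out identical to the base model.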

