FHIBE: A New Standard for Ethical AI Evaluation

Published in Social Sciences, Computational Sciences, and Philosophy & Religion

When my team at Sony AI began working on fairness in computer vision, we quickly realized that the problem wasn’t only in the models — it was in the data used throughout the development process. Despite growing attention to fairness in AI, the datasets used to benchmark model performance have hardly evolved. Many rely on web-scraped images, gathered without consent, diversity, or the necessary annotations to understand how models perform across different populations. AI developers wanting to check their models for bias were thus left in a difficult position: either use problematically sourced public datasets that might expose them to privacy and IP risks or choose not to benchmark their models for bias.

FHIBE (Fair Human-Centric Image Benchmark) was created to offer a new path forward. FHIBE is the world’s first publicly available, consent-driven, globally diverse dataset for evaluating bias across a wide variety of human-centric computer vision tasks.

When we started FHIBE, we assumed that applying ethical best practices that our team and others in the field had set out would not be so difficult, but it turned out that there were many challenges to implementing such best practices. The project took over three years, diverse authors spanning many sociotechnical areas of expertise, and the support of many cross-functional teams, not to mention the partnership of our data vendors, quality assurance specialists, and the many global participants in our dataset.

From the beginning, the FHIBE project centered on human dignity and agency. Consent and compensation for data-rightholders were at the heart of our data collection design. The informed consent process was designed to comply with data protection laws, including the EU’s GDPR, clearly explaining how images and annotations would be used and ensuring that participants could revoke consent at any point. Participants were also fairly compensated at or above local minimum wage, in line with International Labour Organization standards. The images were also manually reviewed to remove identifiable background content, such as license plates or bystanders, ensuring that what remained was consensually collected.

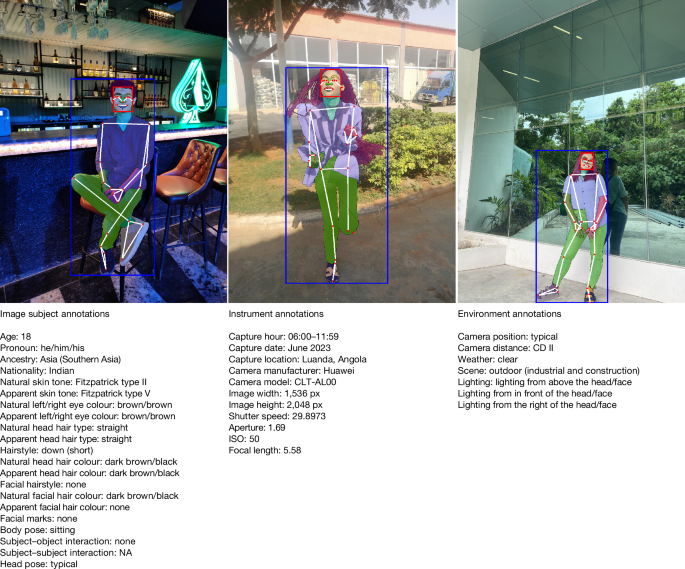

Rather than relying on inferred attributes or third-party labeling, FHIBE uses self-reported demographic data, reducing the risks of individuals being mislabelled. The richness of FHIBE’s annotations on demographics, physical attributes, environment, and instruments further enable AI developers to conduct granular bias diagnoses to pinpoint failure modes of their models. This can empower them to better understand how to improve their model’s performance and build fairer models.

Importantly, FHIBE’s Terms of Use restrict its use for bias evaluation and mitigation, not model training. The demographic information thus can only be used to evaluate bias rather than perpetuate it.

As part of our project, we tested models like RetinaFace and ArcFace using FHIBE to evaluate face detection and verification across varying skin tones, facial structures, and lighting environments. Pose estimation and person detection models were also assessed for accuracy disparities.

Using FHIBE, we found that models tended to perform better for younger individuals and those with lighter skin tones, though results varied by model and task. This variability highlights why intersectional bias testing across combinations of pronoun, age, ancestry, and skin tone is essential. FHIBE’s annotation depth allows for such granular diagnoses, helping researchers identify not just whether disparities exist, but why they emerge.

When applied to foundation models like CLIP and BLIP-2, FHIBE revealed biases that would be difficult to detect using existing benchmarks. CLIP misgendered individuals based on hairstyle and presentation. BLIP-2 disproportionately associated African ancestry participants with rural environments. It also disproportionately gave toxic responses associating those with African ancestry with criminal activity.

By using FHIBE during model validation or deployment testing, teams can better understand how AI systems behave across identities, environments, and lighting conditions—before those systems are released into everyday use.

We hope FHIBE will establish a new standard for responsibly curated data for AI systems by integrating comprehensive, consensually sourced images and annotations. FHIBE is proof that global, consent-driven data collection is possible, even if difficult.

To learn more about FHIBE, watch our short film, “A Fair Reflection,” and to access FHIBE, visit https://fairnessbenchmark.ai.sony/.

Follow the Topic

-

Nature

A weekly international journal publishing the finest peer-reviewed research in all fields of science and technology on the basis of its originality, importance, interdisciplinary interest, timeliness, accessibility, elegance and surprising conclusions.

Related Collections

With Collections, you can get published faster and increase your visibility.

Carbon Dioxide Removal

Publishing Model: Hybrid

Deadline: Jan 16, 2027

Please sign in or register for FREE

If you are a registered user on Research Communities by Springer Nature, please sign in