Will opacities be the next accuracy bottleneck in exoplanet retrieval?

Published in Astronomy

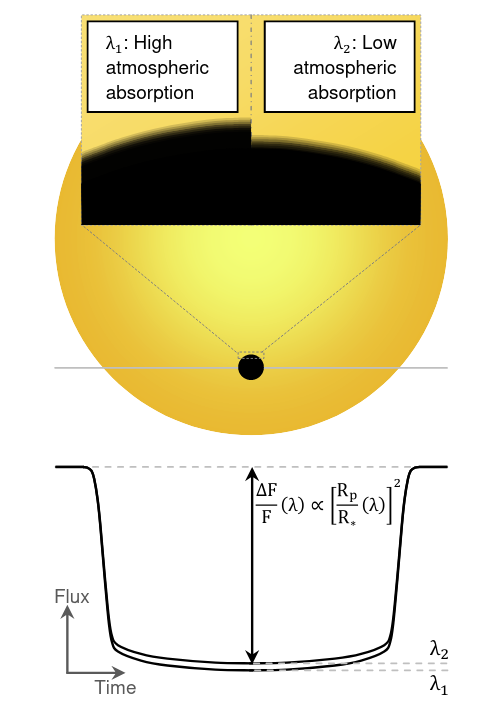

Unlocking the secrets of exoplanetary atmospheres is a feat that now appears within reach after the successful first months of operation of the James Webb Space Telescope (JWST). One of the prime techniques in this endeavor is transmission spectroscopy, which detects the molecular fingerprints imprinted on starlight as it passes through the atmosphere of the planet. Because we cannot spatially resolve exoplanets, these fingerprints are inferred from how the transit depth varies with wavelength: at wavelengths where the atmosphere absorbs strongly, the planet and its atmosphere together block more of the starlight headed our way, producing a larger transit depth (see Figure 1). Once a transmission spectrum is obtained, opacity models are used to decrypt the code and reveal which molecules are present in the observed atmosphere and in what amounts.
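As a rough illustration of how opacity maps onto transit depth, the sketch below assumes an isothermal atmosphere in which the apparent planetary radius grows by one scale height H = k_B T/(μ m_H g) for every e-fold increase in the absorption cross-section, a standard simplification for transmission spectra. All planet and star parameters, the reference cross-section, and the Gaussian absorption band are hypothetical placeholders chosen for illustration, not values from the study.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant [J/K]
M_H = 1.6735575e-27  # mass of a hydrogen atom [kg]

def scale_height(T, mu, g):
    """Isothermal scale height H = k_B T / (mu m_H g), in metres."""
    return K_B * T / (mu * M_H * g)

def transit_depth(sigma, sigma_ref, R_ref, H, R_star):
    """Transit depth (R_p / R_star)^2 for an isothermal atmosphere.

    The apparent radius shifts by H * ln(sigma / sigma_ref): where the
    cross-section is larger, light is absorbed higher up and the planet
    looks bigger.
    """
    R_p = R_ref + H * math.log(sigma / sigma_ref)
    return (R_p / R_star) ** 2

# Hypothetical hot-Jupiter-like setup (illustrative values only).
R_JUP, R_SUN = 7.1492e7, 6.957e8  # metres
H = scale_height(T=1200.0, mu=2.3, g=10.0)

# Hypothetical absorption band: Gaussian bump in cross-section near 1.4 micron.
SIGMA_REF = 1e-26  # continuum cross-section [cm^2]
wavelengths = [1.0 + 0.05 * i for i in range(17)]  # 1.0 to 1.8 micron

for wl in wavelengths:
    sigma = SIGMA_REF * (1.0 + 99.0 * math.exp(-((wl - 1.4) / 0.05) ** 2))
    depth = transit_depth(sigma, SIGMA_REF, R_JUP, H, R_SUN)
    print(f"{wl:.2f} micron: depth = {depth * 1e6:8.1f} ppm")
```

Wavelengths near the hypothetical band centre probe higher altitudes and therefore give larger transit depths; this wavelength-dependent excess is the fingerprint that a retrieval, armed with an opacity model, then tries to decode.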

Figure 1: Schematic representation of the basics of transmission spectroscopy. The flux drop during a transit depends on the observed wavelength, as the planetary atmosphere attenuates the light from the background star in a wavelength-dependent way. Figure from de Wit & Seager 2013.

One of the constraints of studying extrasolar worlds is that we will not be able to visit them anytime soon. So, whether we like it or not, all our eggs lie in one basket: remote sensing. To move the field forward confidently, we need to understand how good the models used to analyze and interpret the data are, especially now that, thanks to JWST, the data at hand have suddenly become orders of magnitude more precise and richer in information.

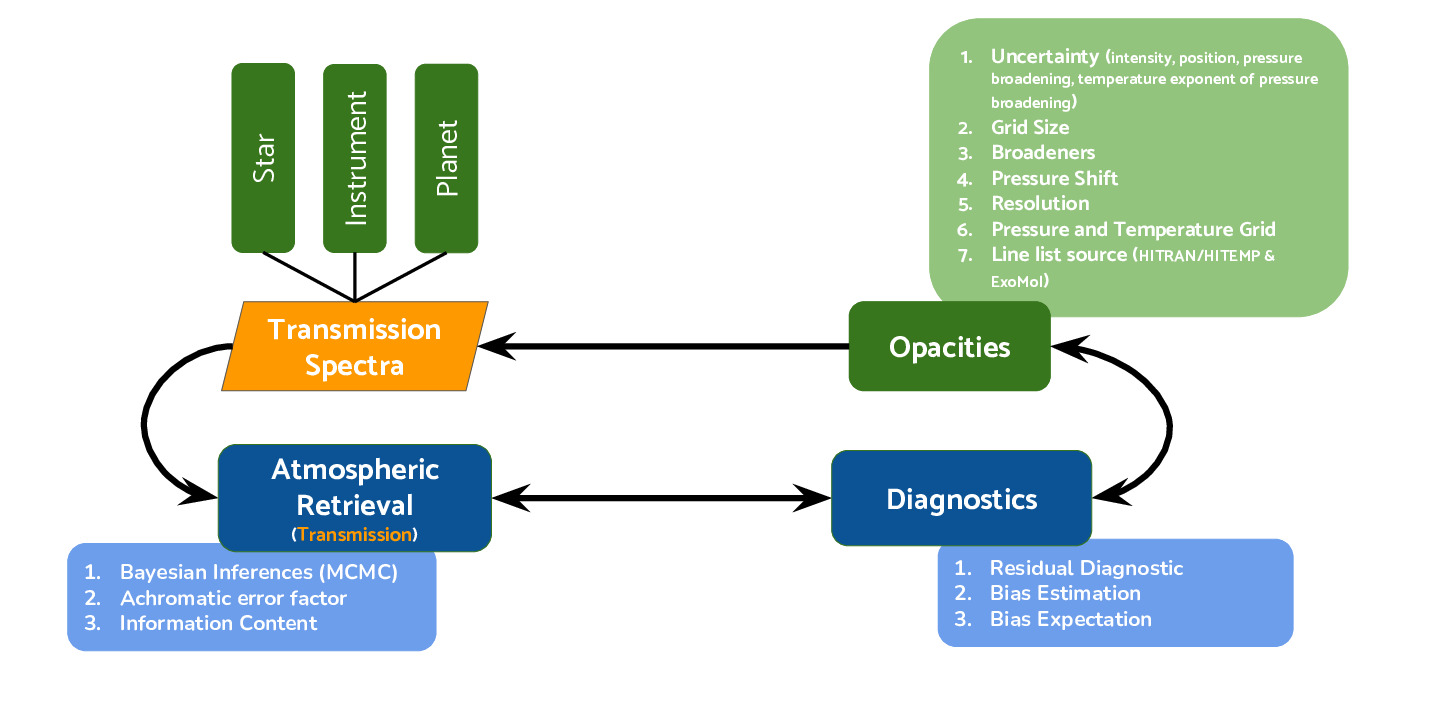

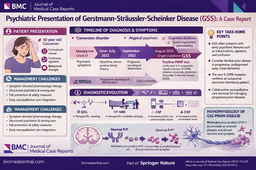

Figure 2: Framework for the sensitivity analysis of retrieved atmospheric properties to the opacity model. The four building blocks of the transmission spectra are shown in green, the analysis techniques in blue, and the remote sensing technique at the center of this perturbation/sensitivity analysis is highlighted in orange. Arrows indicate the direction of the information flow. Figure from Niraula, de Wit, et al. 2022.

Indeed, all models are approximations of the truth. In the famous words of George E. P. Box, “All models are wrong, but some are useful.” Understanding the limitations and representativeness of our models is therefore pivotal. A transmission model has four major components (see Figure 2): (1) the host star emitting the background spectrum, (2) the planetary atmosphere (and its properties) that interacts with the stellar light, (3) the processes describing how light and matter interact within the planetary atmosphere (the opacity model), and (4) the instrument that collects the light and transforms it into the data we work with. Our study focuses on component (3), whose contribution to the noise budget of future exoplanet retrievals had until now been unexplored.

Through a sensitivity/perturbation analysis, we explore the extent to which the constraints we derive on the temperature, pressure, and composition profiles of exoplanet atmospheres are affected by the limitations of opacity models when these limitations are fully propagated through retrieval techniques. To this end, we bridged the gap between astronomers and spectroscopists to move beyond treating opacity models as black boxes. We first listed the various assumptions and sources of uncertainty behind standard opacity models and databases (including the HITRAN/HITEMP and ExoMol databases). We then generated a set of perturbed opacity models to track how each of these assumptions and uncertainties affects the model itself and, most importantly, the inferences drawn when decoding an exoplanet transmission spectrum with it.
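To make the idea of a "perturbed opacity model" concrete, here is a toy sketch that builds a cross-section from a tiny, made-up line list using Lorentzian line profiles and then applies three perturbations of the kinds discussed here: a change in line strengths, a change in broadening width, and a truncation of the line wings. The line positions, strengths, width, continuum floor, and cutoff are all hypothetical; real opacity codes use Voigt profiles, temperature- and pressure-dependent parameters, and line lists that are many orders of magnitude larger.

```python
import math

def lorentz(nu, nu0, gamma):
    """Area-normalised Lorentzian profile with half-width gamma [per cm^-1]."""
    return (gamma / math.pi) / ((nu - nu0) ** 2 + gamma ** 2)

def cross_section(nu_grid, lines, gamma, strength_scale=1.0,
                  wing_cutoff=None, continuum=1e-26):
    """Toy cross-section [cm^2/molecule] on a wavenumber grid [cm^-1].

    lines: (nu0 [cm^-1], S [cm/molecule]) pairs.
    wing_cutoff: if given, each line is ignored beyond |nu - nu0| > wing_cutoff.
    continuum: hypothetical constant floor so the cross-section never hits zero.
    """
    sigma = []
    for nu in nu_grid:
        total = continuum
        for nu0, S in lines:
            if wing_cutoff is not None and abs(nu - nu0) > wing_cutoff:
                continue
            total += strength_scale * S * lorentz(nu, nu0, gamma)
        sigma.append(total)
    return sigma

# Hypothetical toy line list, grid, and width (not from HITRAN/HITEMP or ExoMol).
LINES = [(4000.0, 1e-20), (4010.0, 5e-21), (4035.0, 2e-21)]
GRID = [3950.0 + 0.5 * i for i in range(241)]  # 3950 to 4070 cm^-1
BASE_GAMMA = 0.1  # cm^-1

baseline = cross_section(GRID, LINES, BASE_GAMMA)
perturbations = {
    "line strengths +10%": {"gamma": BASE_GAMMA, "strength_scale": 1.1},
    "broadening x1.5": {"gamma": 1.5 * BASE_GAMMA},
    "wings cut at 25 cm^-1": {"gamma": BASE_GAMMA, "wing_cutoff": 25.0},
}

for name, kwargs in perturbations.items():
    perturbed = cross_section(GRID, LINES, **kwargs)
    # Largest change in ln(sigma) anywhere on the grid.
    max_dln = max(abs(math.log(p / b)) for p, b in zip(perturbed, baseline))
    print(f"{name:24s} max |d ln sigma| = {max_dln:.3f}")
```

Each perturbation is summarized by its largest change in ln σ. In an isothermal picture, where the apparent radius shifts by one scale height per e-fold in opacity, this number is the largest apparent-radius shift the perturbation can cause, in units of scale heights.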

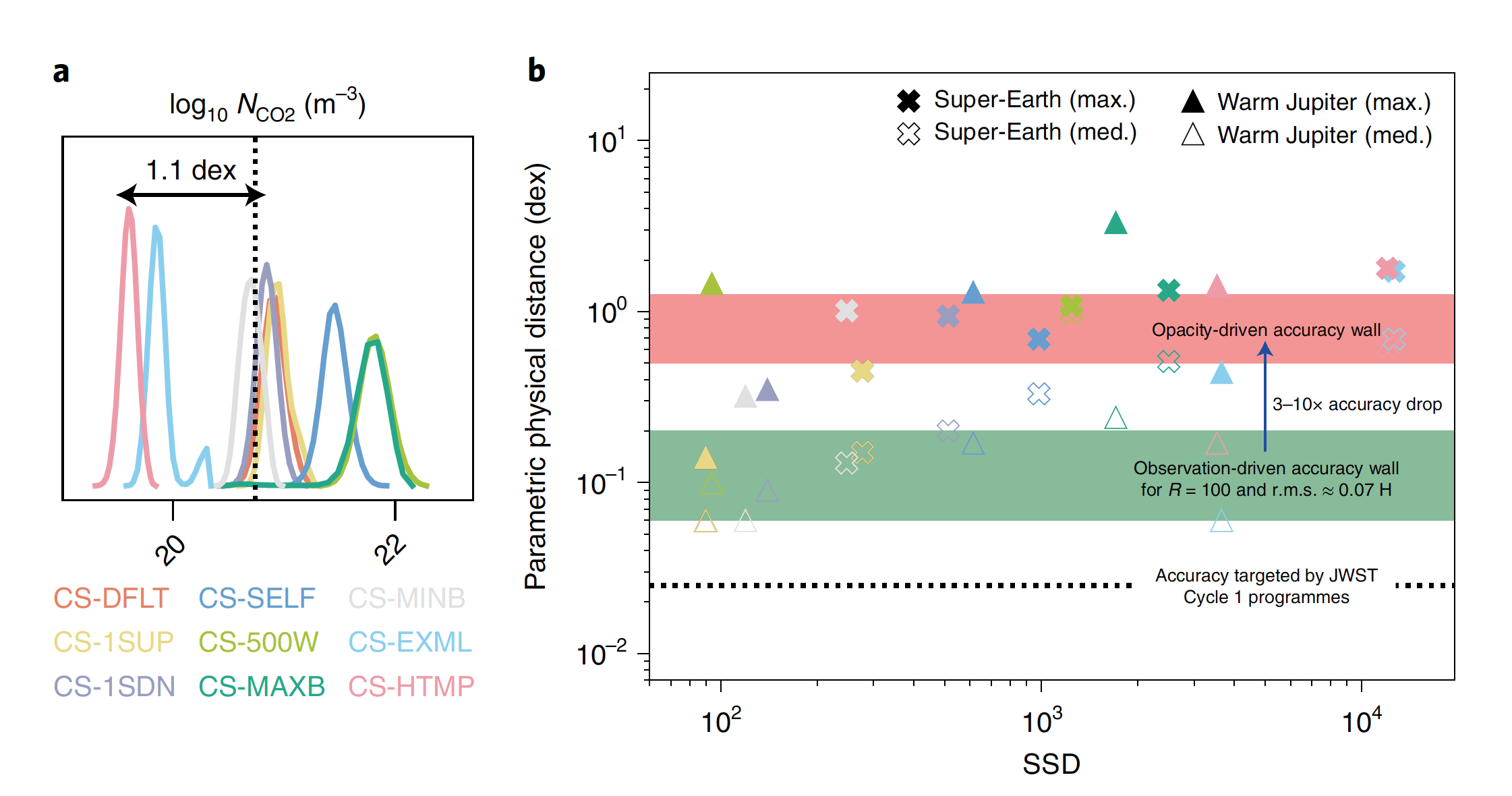

We found that, despite notable perturbations, all the opacity models provided a good fit to the exoplanet spectra. This result implies that the perturbations to the opacity models are effectively compensated by biases in the retrieved atmospheric properties, leaving us ‘blind’ to the imperfections of our models. Specifically, we found that atmospheric properties such as molecular abundances can be biased by more than 20 standard deviations and 0.5–1.0 dex (that is, a factor of roughly 3–10) away from the truth (Figure 3). These biases stem primarily from limited knowledge of line-broadening and far-wing effects, as well as from differences between line lists and their underlying data sources.
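The "good fit, biased answer" behaviour can be reproduced in a deliberately minimal toy retrieval. The sketch below assumes the isothermal scaling R_p = R_ref + H ln(Xσ/σ_ref), generates noiseless synthetic transit depths with a "true" cross-section, and then retrieves the absorber abundance X by grid search using an opacity model whose strengths are off by a hypothetical factor of 2. In this toy, an overall opacity scaling is exactly degenerate with abundance, so the fit stays essentially perfect while log10 X shifts by log10 2 ≈ 0.3 dex. All numbers, including the per-point uncertainty used to express the bias in standard deviations via a linearised (Fisher) estimate, are hypothetical, and this is not the retrieval framework used in the study.

```python
import math

# Hypothetical isothermal setup (illustrative values only).
H = 4.3e5          # scale height [m]
R_REF = 7.1492e7   # radius where X * sigma equals SIGMA_REF [m]; fixed in the fit
R_STAR = 6.957e8   # stellar radius [m]
SIGMA_REF = 1e-26  # reference opacity [cm^2]
NOISE = 30e-6      # hypothetical per-point transit-depth uncertainty
WAVELENGTHS = [1.0 + 0.05 * i for i in range(17)]  # micron

def true_sigma(wl):
    """Hypothetical 'true' cross-section: Gaussian band plus weak continuum [cm^2]."""
    return 1e-22 * math.exp(-((wl - 1.4) / 0.1) ** 2) + 1e-24

def perturbed_sigma(wl):
    """Opacity model used in the retrieval: same shape, strengths off by a factor 2."""
    return 2.0 * true_sigma(wl)

def spectrum(log10_x, sigma_fn):
    """Transit depths (R_p/R_star)^2 with R_p = R_REF + H ln(X sigma / SIGMA_REF)."""
    x = 10.0 ** log10_x
    return [((R_REF + H * math.log(x * sigma_fn(wl) / SIGMA_REF)) / R_STAR) ** 2
            for wl in WAVELENGTHS]

def retrieve(data, sigma_fn):
    """Least-squares grid search over log10 X in [-8, 0]; returns (best, sum of sq. residuals)."""
    best = (None, float("inf"))
    for i in range(8001):
        trial = -8.0 + 0.001 * i
        ssr = sum((d - m) ** 2 for d, m in zip(data, spectrum(trial, sigma_fn)))
        if ssr < best[1]:
            best = (trial, ssr)
    return best

def log10_x_uncertainty(log10_x, sigma_fn):
    """Linearised (Fisher) 1-sigma width on log10 X for white noise of size NOISE."""
    x = 10.0 ** log10_x
    derivs = []
    for wl in WAVELENGTHS:
        r_p = R_REF + H * math.log(x * sigma_fn(wl) / SIGMA_REF)
        derivs.append(2.0 * r_p * H * math.log(10.0) / R_STAR ** 2)  # d(depth)/d(log10 X)
    return NOISE / math.sqrt(sum(d * d for d in derivs))

TRUE_LOG10_X = -4.0
data = spectrum(TRUE_LOG10_X, true_sigma)  # noiseless synthetic observation

best, ssr = retrieve(data, perturbed_sigma)
bias_dex = best - TRUE_LOG10_X
n_sigma = abs(bias_dex) / log10_x_uncertainty(best, perturbed_sigma)
print(f"retrieved log10 X = {best:.3f}, bias = {bias_dex:+.3f} dex "
      f"(factor {10 ** abs(bias_dex):.2f}), about {n_sigma:.1f} sigma")
# Near-zero residuals: the wrong opacity is hidden by the biased abundance.
print(f"residual RMS = {math.sqrt(ssr / len(data)) * 1e6:.4f} ppm")
```

A bias of Δ dex corresponds to a multiplicative factor of 10^Δ, which is why 0.5–1.0 dex translates to factors of about 3.2 to 10. Realistic perturbations, such as changes in line shape, are not pure scalings, so in a full retrieval they may be absorbed by several parameters at once (temperature, pressure, and abundances) rather than by a single abundance.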

So where do we go from here? Now that the major limitations of opacity models have been identified, we can move forward and support a “data-driven”, targeted improvement of these models. Doing so will require significant investment in laboratory measurements and high-performance computing to extend the parameter space within which we can precisely constrain how possible atmospheric constituents interact with light under exotic (i.e., non-Earth-like) temperatures, pressures, and background compositions. How light is absorbed by different mixtures of gases can be determined both in the laboratory and with theoretical tools based on quantum mechanics. Naturally, each approach comes with its own caveats, which makes the two complementary. Experiments are constrained by the substantial resources needed to investigate the wide range of conditions potentially relevant to exoplanetary science, while theoretical calculations grow steeply in complexity with the number of atoms involved, especially where inter-molecular collisions must be accounted for and billions of electronic transitions are possible.
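To illustrate why broadening parameters measured or computed for exotic conditions matter, the sketch below evaluates the pressure-broadened (Lorentzian) half-width using the HITRAN-style temperature scaling γ(T) = γ_ref (T_ref/T)^n with T_ref = 296 K, summed over background gases weighted by their partial pressures, and compares it with the thermal Doppler width through the Olivero & Longbothum (1977) empirical Voigt-width approximation. The per-broadener γ_ref and n values, the line position, the absorber mass, and the temperature are hypothetical placeholders, not entries from HITRAN/HITEMP or ExoMol, and the partial-pressure-weighted sum is a common simplification rather than a description of any particular code.

```python
import math

K_B = 1.380649e-23       # Boltzmann constant [J/K]
AMU = 1.66053906660e-27  # atomic mass unit [kg]
C = 2.99792458e8         # speed of light [m/s]
T_REF = 296.0            # HITRAN reference temperature [K]

def lorentz_hwhm(T, p_atm, broadeners):
    """Pressure-broadened half-width [cm^-1] at temperature T [K], pressure p_atm [atm].

    broadeners maps a gas name to (volume mixing ratio, gamma_ref [cm^-1/atm], n);
    each gas contributes gamma_ref * (T_REF / T) ** n * (its partial pressure).
    """
    return sum(gamma_ref * (T_REF / T) ** n * vmr * p_atm
               for vmr, gamma_ref, n in broadeners.values())

def doppler_hwhm(nu0, T, mass_amu):
    """Thermal (Doppler) half-width [cm^-1] of a line at wavenumber nu0 [cm^-1]."""
    return nu0 / C * math.sqrt(2.0 * math.log(2.0) * K_B * T / (mass_amu * AMU))

def voigt_fwhm(fwhm_l, fwhm_g):
    """Olivero & Longbothum (1977) empirical approximation to the Voigt FWHM."""
    return 0.5346 * fwhm_l + math.sqrt(0.2166 * fwhm_l ** 2 + fwhm_g ** 2)

# Hypothetical broadening parameters for two background atmospheres.
EARTH_LIKE = {"air": (1.0, 0.07, 0.75)}
H2_HE = {"H2": (0.85, 0.06, 0.5), "He": (0.15, 0.04, 0.4)}

NU0, MASS, T = 4000.0, 18.0, 1000.0  # hypothetical line, absorber mass, temperature
g_hw = doppler_hwhm(NU0, T, MASS)
for p in [1e-4, 1e-2, 1.0, 10.0]:
    for label, mix in [("air", EARTH_LIKE), ("H2/He", H2_HE)]:
        l_hw = lorentz_hwhm(T, p, mix)
        v_fw = voigt_fwhm(2 * l_hw, 2 * g_hw)  # the approximation takes FWHMs
        print(f"p={p:8.4f} atm {label:6s} Lorentz HWHM={l_hw:.2e}  "
              f"Doppler HWHM={g_hw:.2e}  Voigt FWHM={v_fw:.2e} cm^-1")
```

Comparing the two hypothetical backgrounds shows how the assumed broadener composition alone changes the line width, and therefore the far-wing opacity, at pressures where pressure broadening dominates over Doppler broadening.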

Although there may be a long road ahead to ensure the reliable decoding of the atmospheric spectra of worlds unlike Earth, the effort will be well worth the reward: confidently unlocking the mysteries of planet formation and evolution, and confidently assessing the (in)habitability of other worlds. So let’s double down on the cross-disciplinary work needed to tackle this challenge and ensure we make the very best of this groundbreaking observatory, JWST.

Nature Astronomy
