Mastering Complexity: AI Surrogates for High-Dimensional System Modeling in Science and Engineering

Published in Computational Sciences and Mathematics

Imagine predicting the behavior of complex physical systems in real time, something traditional methods have rarely managed because of their heavy computational demands. Neural operators make this possible by building emulators for systems described by partial differential equations (PDEs). They approximate intricate relationships in high-dimensional spaces, which makes real-time prediction practical. The first neural operator was the Deep Operator Network (DeepONet), introduced by Lu et al. in a 2019 preprint and published in 2021 (Lu et al., 2021). Inspired by the universal approximation theorem for operators, it enabled fast inference and high generalization accuracy. Standard neural networks are functions that map data to data, whereas operators are higher-level abstractions that map functions to functions. Because neural operators are trained offline, their inference time is negligible, so they can serve as surrogate models for real-time forecasting in applications such as autonomy, design, control, and optimization.
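To make the "functions to functions" idea concrete, here is a minimal sketch of a DeepONet-style forward pass in NumPy. It is an illustration under stated assumptions, not the implementation used in the paper: the weights are random and untrained, and the layer widths, the number of sensors `m`, and the number of basis terms `p` are hypothetical choices. A branch network encodes the input function sampled at fixed sensor locations, a trunk network encodes the query coordinate, and the prediction is their inner product.

```python
import numpy as np

rng = np.random.default_rng(0)

def mlp_params(sizes, rng):
    """Random (untrained) weights for a small fully connected network."""
    return [(rng.normal(0, 1 / np.sqrt(n_in), (n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def mlp(params, x):
    """Apply the network: tanh on hidden layers, linear output layer."""
    for W, b in params[:-1]:
        x = np.tanh(x @ W + b)
    W, b = params[-1]
    return x @ W + b

m, p = 50, 16                          # hypothetical: sensor count, basis terms
branch = mlp_params([m, 32, p], rng)   # encodes the sampled input function u
trunk = mlp_params([1, 32, p], rng)    # encodes the query coordinate y

def deeponet(u_sensors, y):
    """G(u)(y) is approximated by sum_k branch_k(u) * trunk_k(y)."""
    b = mlp(branch, u_sensors)         # shape (n_funcs, p)
    t = mlp(trunk, y)                  # shape (n_points, p)
    return b @ t.T                     # shape (n_funcs, n_points)

x = np.linspace(0, 1, m)
u = np.stack([np.sin(2 * np.pi * x), np.cos(2 * np.pi * x)])  # two input functions
y = np.linspace(0, 1, 100)[:, None]                            # 100 query points
out = deeponet(u, y)
print(out.shape)  # (2, 100)
```

Once such a network has been trained, evaluating it at new query points costs only a few matrix products, which is why inference is fast.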

Overcoming the Challenge of High Dimensionality

Even though neural operators excel at learning surrogates for PDEs, they still face notable challenges. These models are primarily data-driven, so a large and representative labeled dataset must be acquired beforehand. Complex physical systems often require high-fidelity simulations on fine spatial and temporal grids, resulting in extremely high-dimensional datasets. Traditional numerical simulators, such as those based on the finite element method (FEM), are costly and typically yield only a few hundred observations, if not fewer. The result is a sparse dataset that may not adequately represent the input/output distribution space. In addition, high-dimensional physics-based data often contains redundant features, which slows down and complicates network optimization.

Innovating with Latent Space Neural Operators

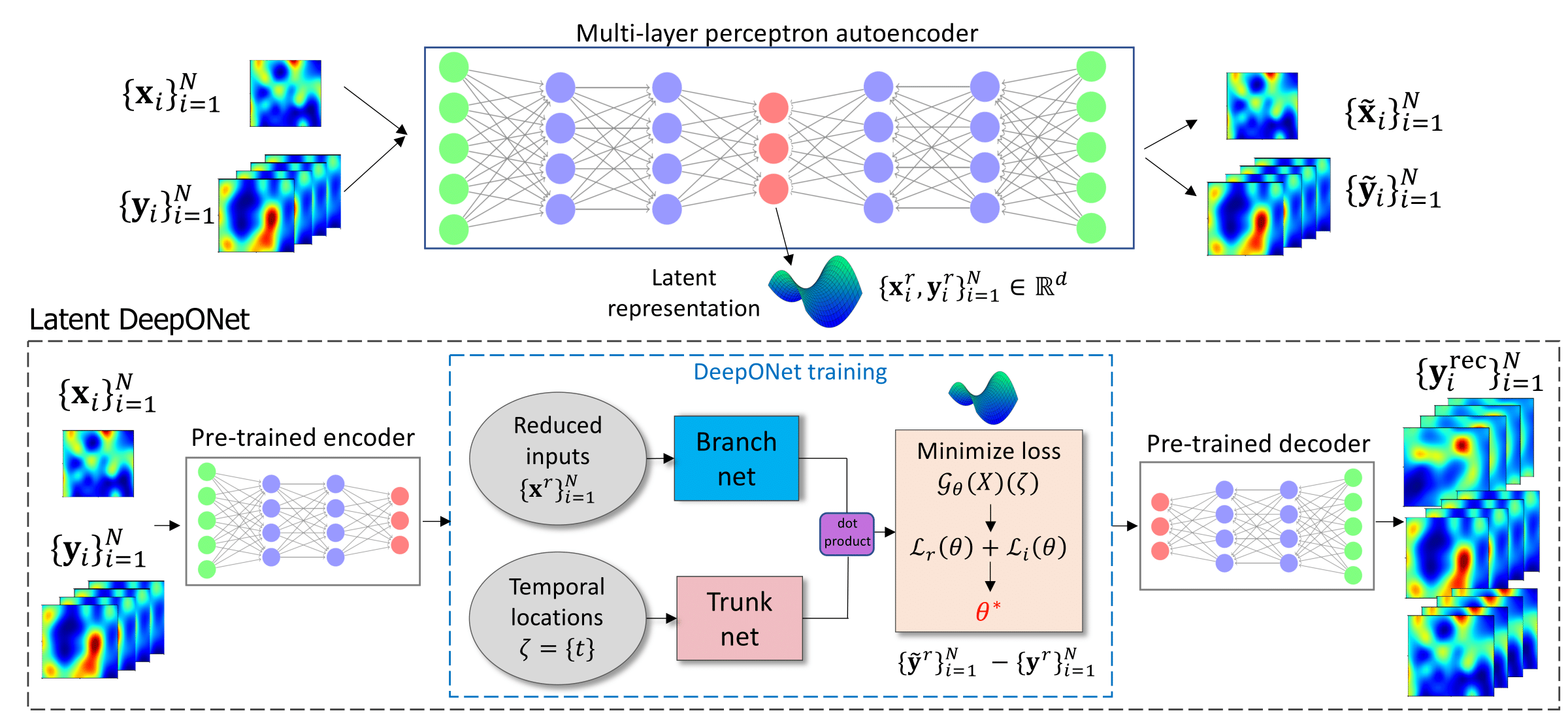

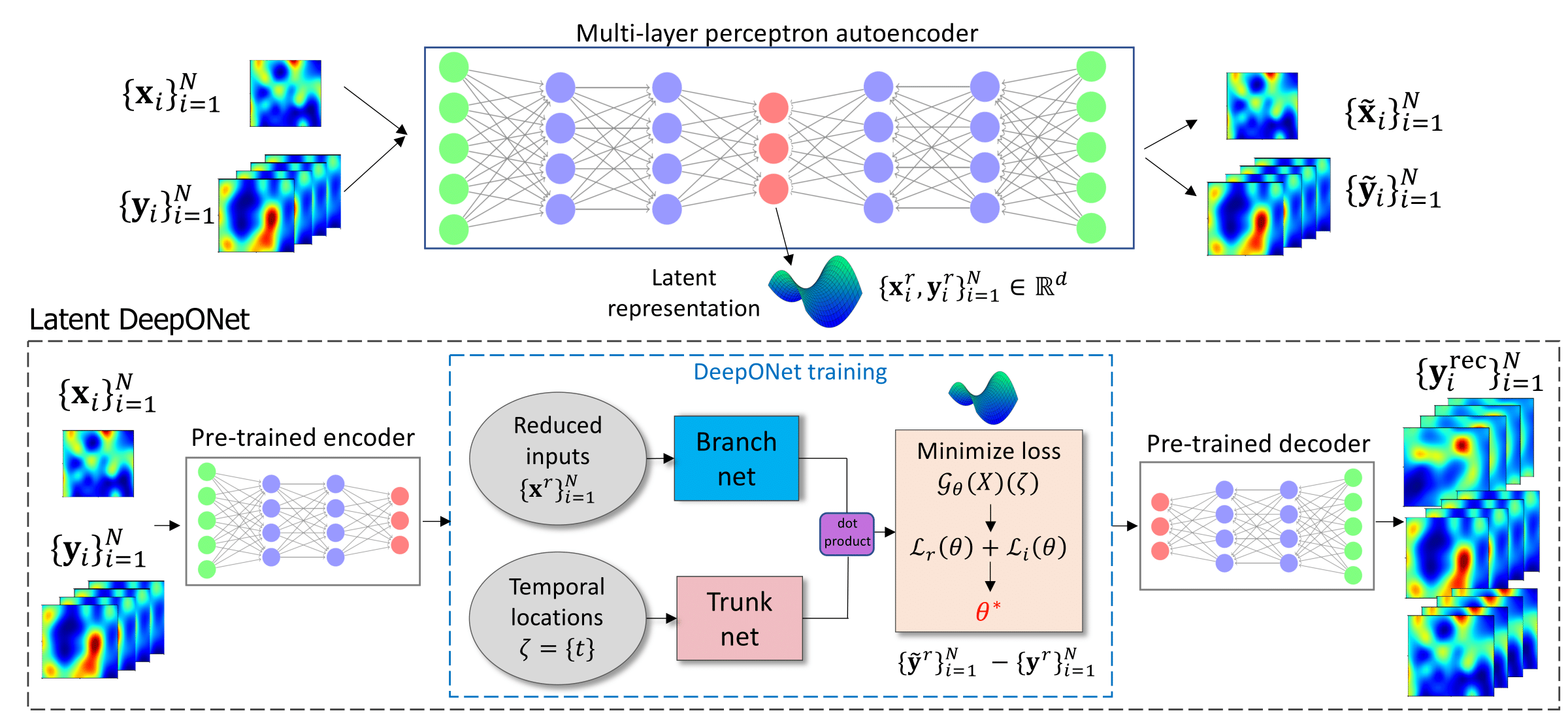

Physical constraints cause the data to exist in lower-dimensional latent spaces (manifolds), which can be identified using suitable linear or nonlinear dimension reduction techniques. This insight inspired our work on developing neural operators within latent spaces, leveraging the system's reduced complexity to enhance the efficiency and accuracy of our neural operators. Our technique utilizes the DeepONet architecture, which is applied to a low-dimensional latent space that effectively captures the essential dynamics (see Fig. 1). This approach enables us to develop efficient and accurate approximators of the underlying operators, significantly improving the practicality and speed of predictions for complex systems.
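As a small illustration of how a low-dimensional latent space can be identified, the sketch below applies PCA (a linear dimension reduction technique, computed via the SVD) to synthetic data. Everything in it is assumed for the example: the data are not from the paper, the three sine modes stand in for the hidden structure that physical constraints impose, and the 99.9% variance threshold is an arbitrary choice.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-in for simulation data: 200 snapshots on a 1000-point grid,
# generated from only 3 underlying modes plus small noise.
n_samples, n_grid, true_dim = 200, 1000, 3
x = np.linspace(0, 1, n_grid)
modes = np.stack([np.sin((k + 1) * np.pi * x) for k in range(true_dim)])
coeffs = rng.normal(size=(n_samples, true_dim))
data = coeffs @ modes + 1e-3 * rng.normal(size=(n_samples, n_grid))

# PCA via SVD of the centered data.
mean = data.mean(axis=0)
_, s, Vt = np.linalg.svd(data - mean, full_matrices=False)
energy = np.cumsum(s**2) / np.sum(s**2)

# Smallest latent dimension that captures 99.9% of the variance.
d = int(np.searchsorted(energy, 0.999) + 1)

latent = (data - mean) @ Vt[:d].T   # encode: (n_samples, d)
recon = latent @ Vt[:d] + mean      # decode: (n_samples, n_grid)
rel_err = np.linalg.norm(recon - data) / np.linalg.norm(data)
print(d, rel_err)
```

Here a 1000-dimensional snapshot is compressed to a handful of latent coordinates with little reconstruction error. Data from strongly nonlinear systems may call for a nonlinear reduction such as an autoencoder instead.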

Latent DeepONet (L-DeepONet) modeling is a two-step approach. First, an encoder-decoder model is trained to identify a latent representation for the high-dimensional PDE inputs and outputs. Second, a DeepONet is trained on that latent representation, and the pre-trained decoder projects its predictions back to the physically interpretable space. One of the main strengths of this approach is its modular design, with separate encoder and decoder components. This design makes it straightforward to construct the neural operator and allows any suitable dimension reduction model to be used.
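The two-step structure can be sketched on a toy problem as follows. This is a simplified stand-in and not the method from the paper: PCA plays the role of the encoder-decoder, a least-squares affine map replaces the DeepONet in latent space, and the operator being learned (a running integral of the input function) is a hypothetical example chosen because it is easy to check. Fitting separate reductions for inputs and outputs is also an assumption made here for clarity.

```python
import numpy as np

rng = np.random.default_rng(0)
n_grid, n_train, n_test, d = 500, 150, 50, 4
x = np.linspace(0, 1, n_grid)

def sample_pairs(n):
    """Toy data: u is a random mix of 4 sine modes, s is its running integral."""
    c = rng.normal(size=(n, 4))
    u = c @ np.stack([np.sin((k + 1) * np.pi * x) for k in range(4)])
    s = np.cumsum(u, axis=1) * (x[1] - x[0])
    return u, s

def fit_pca(data, d):
    """Linear encoder/decoder pair built from the top-d principal directions."""
    mean = data.mean(axis=0)
    _, _, Vt = np.linalg.svd(data - mean, full_matrices=False)
    basis = Vt[:d]

    def encode(z):
        return (z - mean) @ basis.T

    def decode(r):
        return r @ basis + mean

    return encode, decode

u_train, s_train = sample_pairs(n_train)
u_test, s_test = sample_pairs(n_test)

# Step 1: learn latent representations of the inputs and outputs.
enc_u, _ = fit_pca(u_train, d)
enc_s, dec_s = fit_pca(s_train, d)

# Step 2: learn the latent-to-latent map.
# Least squares with a bias column is used here in place of a DeepONet.
A = np.hstack([enc_u(u_train), np.ones((n_train, 1))])
W, *_ = np.linalg.lstsq(A, enc_s(s_train), rcond=None)

def predict(u):
    """Encode the input, map it in latent space, decode to the full grid."""
    r = np.hstack([enc_u(u), np.ones((len(u), 1))]) @ W
    return dec_s(r)

rel_err = np.linalg.norm(predict(u_test) - s_test) / np.linalg.norm(s_test)
print(rel_err)
```

Because the encoder, the latent model, and the decoder are separate pieces, any of them can be swapped out, for example PCA for an autoencoder, without changing the rest of the pipeline.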

Real-World Applications and Achievements

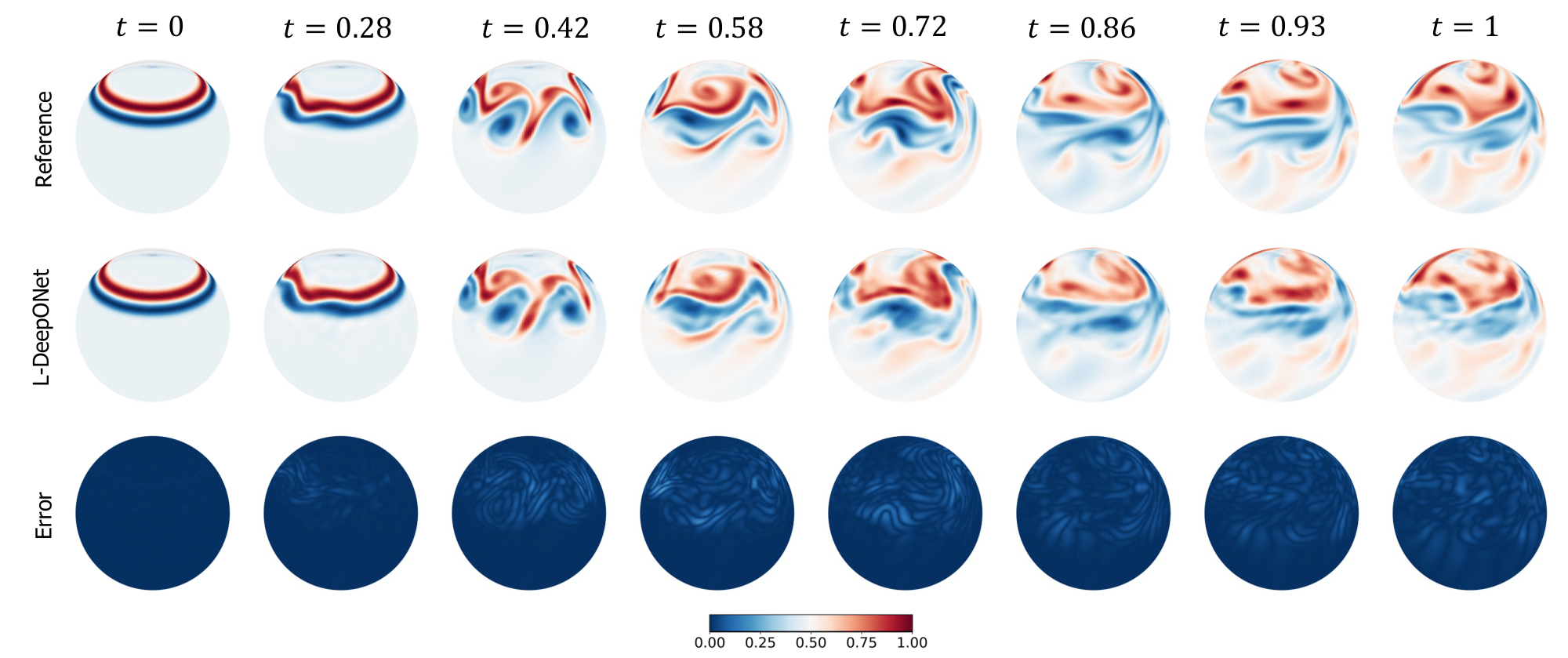

Our method proves its effectiveness across a variety of real-world scenarios, such as predicting material fractures, convective fluid flows, and global climate patterns. These applications reveal that our approach not only surpasses current state-of-the-art methods in prediction accuracy but also drastically enhances computational efficiency, making it possible to learn operators at previously unmanageable scales. A significant highlight is our ability to predict large-scale atmospheric flows with millions of degrees of freedom (see Fig. 2), thereby improving weather and climate forecasting. Attempting to learn operators for such complex problems in their original high-dimensional space has been computationally prohibitive.

Wrapping Up: Insights and Future Horizons

As demonstrated, L-DeepONet serves as a robust tool in scientific machine learning (SciML) and uncertainty quantification (UQ), enhancing the accuracy and generalizability of neural operators in applications that require high-fidelity simulations with complex dynamical characteristics, such as climate models. A comparison study with alternative neural operators showed that L-DeepONet delivers better results and more accurately captures the evolution of time-dependent PDE systems, especially as dimensionality and nonlinearity increase. L-DeepONet also requires fewer computational resources and less memory for training than the standard DeepONet and the Fourier neural operator (FNO), both of which train on full-dimensional data. The quality and accuracy of L-DeepONet could be improved further by adopting the two-step training strategy proposed by Lee and Shin (2023), by training L-DeepONet in a physics-informed manner that embeds the physics directly into the model (Wang et al., 2021), or by incorporating an attention mechanism into the architecture. With these advancements, L-DeepONet stands poised to revolutionize real-time predictions in science and engineering, paving the way for even more complex and efficient applications in the future.

References

Lu, L., Jin, P., Pang, G., Zhang, Z., and Karniadakis, G. E. Learning nonlinear operators via DeepONet based on the universal approximation theorem of operators. Nature Machine Intelligence, 3(3):218–229, 2021.

Lee, S. and Shin, Y. On the training and generalization of deep operator networks. arXiv preprint arXiv:2309.01020, 2023.

Wang, S., Wang, H., and Perdikaris, P. Learning the solution operator of parametric partial differential equations with physics-informed DeepONets. Science Advances, 7(40):eabi8605, 2021.
